Clear Sky Science

Stochastic Poisson-embedded privacy framework for federated learning with secure homomorphic encryption in medical AI

Keeping Medical Secrets Safe While Teaching Machines

Hospitals are collecting huge numbers of X‑ray images that could help doctors spot diseases like COVID‑19 earlier and more accurately. But those images are also deeply personal, and strict privacy rules make it hard to pool data in one place to train powerful artificial intelligence (AI) tools. This study shows a way to let hospitals cooperate on a shared X‑ray diagnosis system without ever handing their raw images to anyone else, aiming to keep patient data locked down while still getting top‑tier accuracy.

Why Sharing Medical Data Is So Hard

Modern AI thrives on large, varied datasets, yet hospitals typically store images locally and are reluctant—or legally unable—to send them to a central server. Traditional approaches that copy all data into one big database risk leaks and cyberattacks, undermining public trust and violating regulations. Even newer methods, where hospitals train a shared model together in a setup called “federated learning,” are not fully safe: clever attackers can sometimes work backward from model updates to guess what patient images looked like. At the same time, medical data are often uneven and messy, with some hospitals having many more cases of a particular disease than others, which can destabilize training and reduce reliability.

A Cooperative Network That Never Shares Raw X‑Rays

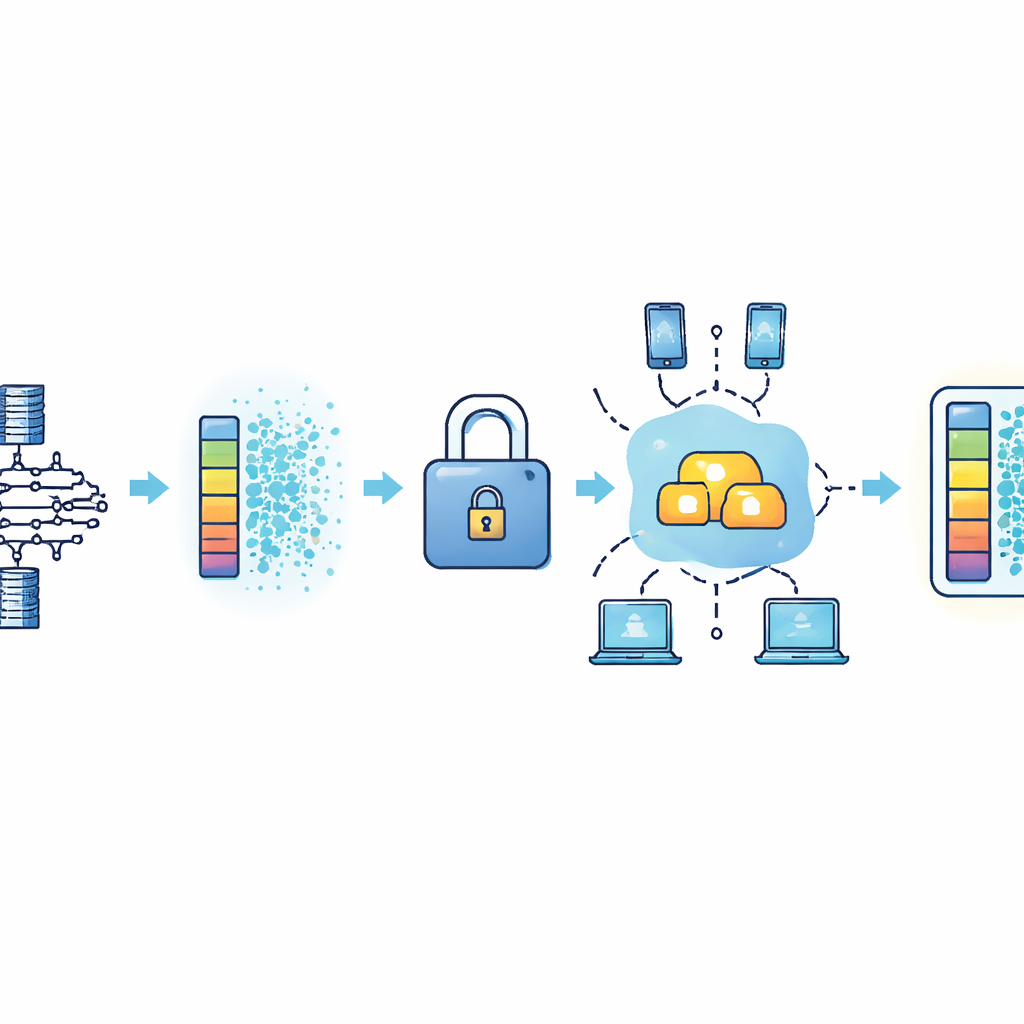

The authors design a federated learning framework centered on a strong image‑recognition model known as ResNet‑50 to distinguish COVID‑19 from normal chest X‑rays. Each hospital trains its own copy of this model on its local images, keeping all X‑rays on site. Instead of shipping pictures, hospitals only send numerical updates describing how their local model should change. A central server averages these updates to form an improved global model and then sends the refined model back to every hospital. Repeating this cycle lets the shared model benefit from the combined experience of all participants without exposing individual scans.
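The averaging cycle described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the authors' code: the function names `federated_average` and `run_round` are hypothetical, each model is reduced to a short list of numbers, and every hospital is given equal weight (a common variant weights each site by how many images it holds).

```python
from typing import List


def federated_average(site_updates: List[List[float]]) -> List[float]:
    """Average the updates from all sites, one parameter at a time.

    Assumption: every site counts equally. The summary does not say
    whether the study weights sites by dataset size.
    """
    num_sites = len(site_updates)
    return [sum(column) / num_sites for column in zip(*site_updates)]


def run_round(global_weights: List[float],
              site_updates: List[List[float]]) -> List[float]:
    """Apply the averaged update to the global model for one round."""
    averaged = federated_average(site_updates)
    return [w + d for w, d in zip(global_weights, averaged)]


if __name__ == "__main__":
    # Two hypothetical hospitals, each proposing a change to a
    # two-parameter model. Only these numbers leave the site,
    # never the X-ray images themselves.
    updates = [[1.0, 2.0], [3.0, 4.0]]
    print(run_round([0.0, 0.0], updates))  # prints [2.0, 3.0]
```

In a real deployment the lists would hold the millions of parameters of a ResNet‑50 model, and the cycle would repeat over many communication rounds.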

Adding Digital “Static” and Lockboxes for Extra Privacy

To stop attackers from reconstructing patient images from model updates, the framework layers two privacy techniques on top of federated learning. First, each hospital adds carefully calibrated random noise to its model updates, a bit like adding static to a radio signal so individual voices are harder to pick out while the overall message still comes through. Second, before updates travel over the network, they are encrypted using a method that allows the server to add them together while they stay locked—similar to summing values inside sealed envelopes. Only a trusted key holder can unlock the combined result, and the central server never sees any one hospital’s update in plain form. Together, these steps are designed to frustrate attempts to reverse‑engineer patient data while preserving the usefulness of the shared model.
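A toy version of the "static plus sealed envelopes" idea is sketched below. Several choices here are assumptions made only for illustration: the summary does not state the noise distribution, so Gaussian noise stands in for it; it does not name the encryption scheme, so a textbook Paillier scheme (which supports adding encrypted values) is used; and the key is built from two small, publicly known primes, which would be far too weak for real use. All function names are hypothetical.

```python
import math
import random
import secrets
from typing import List

# Toy key material: two Mersenne primes. NOT secure, illustration only.
P, Q = 2**31 - 1, 2**61 - 1
N = P * Q
N_SQ = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # private key part
MU = pow(LAM, -1, N)  # valid because the generator is g = N + 1
SCALE = 10**6         # fixed-point precision for encoding floats


def encode(x: float) -> int:
    """Map a float to an integer modulo N (negatives wrap around)."""
    return round(x * SCALE) % N


def decode(m: int) -> float:
    """Invert encode(), treating the upper half of the range as negative."""
    if m > N // 2:
        m -= N
    return m / SCALE


def encrypt(m: int) -> int:
    """Paillier encryption with g = N + 1, so g**m = 1 + m*N (mod N^2)."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return ((1 + m * N) % N_SQ) * pow(r, N, N_SQ) % N_SQ


def decrypt(c: int) -> int:
    """Only the key holder (who knows LAM and MU) can run this."""
    return ((pow(c, LAM, N_SQ) - 1) // N) * MU % N


def protect_update(update: List[float], sigma: float) -> List[int]:
    """Hospital side: add noise (assumed Gaussian), then encrypt."""
    noisy = [u + random.gauss(0.0, sigma) for u in update]
    return [encrypt(encode(v)) for v in noisy]


def aggregate(encrypted_updates: List[List[int]]) -> List[int]:
    """Server side: multiplying ciphertexts adds the hidden values."""
    total = encrypted_updates[0]
    for enc in encrypted_updates[1:]:
        total = [a * b % N_SQ for a, b in zip(total, enc)]
    return total


def unlock_average(total: List[int], num_sites: int) -> List[float]:
    """Key-holder side: decrypt the sum and divide by the number of sites."""
    return [decode(decrypt(c)) / num_sites for c in total]
```

In this sketch the server only ever handles ciphertexts. With `sigma` set to a small positive value, the decrypted average differs slightly from the true average, which is the privacy-versus-accuracy trade-off the noise level controls.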

Putting the System to the Test

The team evaluates their framework on a balanced set of COVID‑19 and normal chest X‑ray images, simulating several hospitals as separate training sites. They compare three setups: classic centralized training with all data pooled, standard federated learning without extra protections, and their privacy‑enhanced approach. Despite the added noise and encryption, the protected system reaches strikingly high scores—about 99.6% accuracy, with similarly strong precision, recall, and F1 values—matching or beating both the pooled and unprotected federated versions. Measurements of communication rounds, training loss, and computation time show that accuracy improves steadily as sites collaborate, while the extra time cost from encryption remains modest. Ablation experiments, where parts of the system are turned on and off, confirm that the chosen noise levels and encryption‑plus‑compression strategy offer strong privacy with only minor performance trade‑offs.
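For readers unfamiliar with the four scores mentioned above, the sketch below shows how they are typically computed for a two-class problem such as COVID‑19 versus normal. The function name `binary_metrics` and the example labels are hypothetical and are not data from the study.

```python
from typing import Dict, List


def binary_metrics(y_true: List[int], y_pred: List[int]) -> Dict[str, float]:
    """Compute accuracy, precision, recall and F1 (1 = COVID-19, 0 = normal)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # correctly flagged
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # correctly cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed cases

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


if __name__ == "__main__":
    # Four made-up X-rays: two COVID-19, two normal; one case is missed.
    print(binary_metrics([1, 1, 0, 0], [1, 0, 0, 0]))
```

Precision asks how many flagged scans were truly positive, recall asks how many true positives were caught, and F1 balances the two.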

What This Means for Future Care

For non‑experts, the key message is that this work demonstrates a practical recipe for teaching AI from many hospitals’ X‑rays without ever exposing raw images or running afoul of privacy rules. By combining a high‑performing image model with digital “static” and encrypted aggregation, the framework shows that hospitals can jointly build accurate diagnostic tools while keeping patient records on site and out of reach of prying eyes. Although tested on a relatively small dataset and focused on COVID‑19 X‑rays, the same ideas could extend to other diseases, imaging types, and even other sensitive fields like finance. In short, the study points toward a future where powerful AI and strong medical privacy can reinforce, rather than oppose, each other.

Citation: Gomathi, R., Saranya, K., Mahaboob John, Y.M. et al. Stochastic Poisson-embedded privacy framework for federated learning with secure homomorphic encryption in medical AI. Sci Rep 16, 10931 (2026). https://doi.org/10.1038/s41598-026-41469-4

Keywords: federated learning, medical imaging, data privacy, homomorphic encryption, X-ray diagnosis