Clear Sky Science

Explainable and secure federated learning for privacy-enhancing skin cancer classification using a lightweight multi-scale CNN

Why Smarter Skin Cancer Checks Matter

Skin cancer is the most common cancer worldwide, and catching it early can save lives. Yet accurate diagnosis still depends heavily on specialists carefully inspecting images of moles and spots on the skin. Many clinics lack such expertise, and sharing large collections of patient images to train better computer tools raises serious privacy concerns. This study introduces a new way to let hospitals work together to train a powerful skin cancer detection system without ever sharing raw patient images, while also giving doctors clear visual explanations of what the system sees.

Working Together Without Sharing Secrets

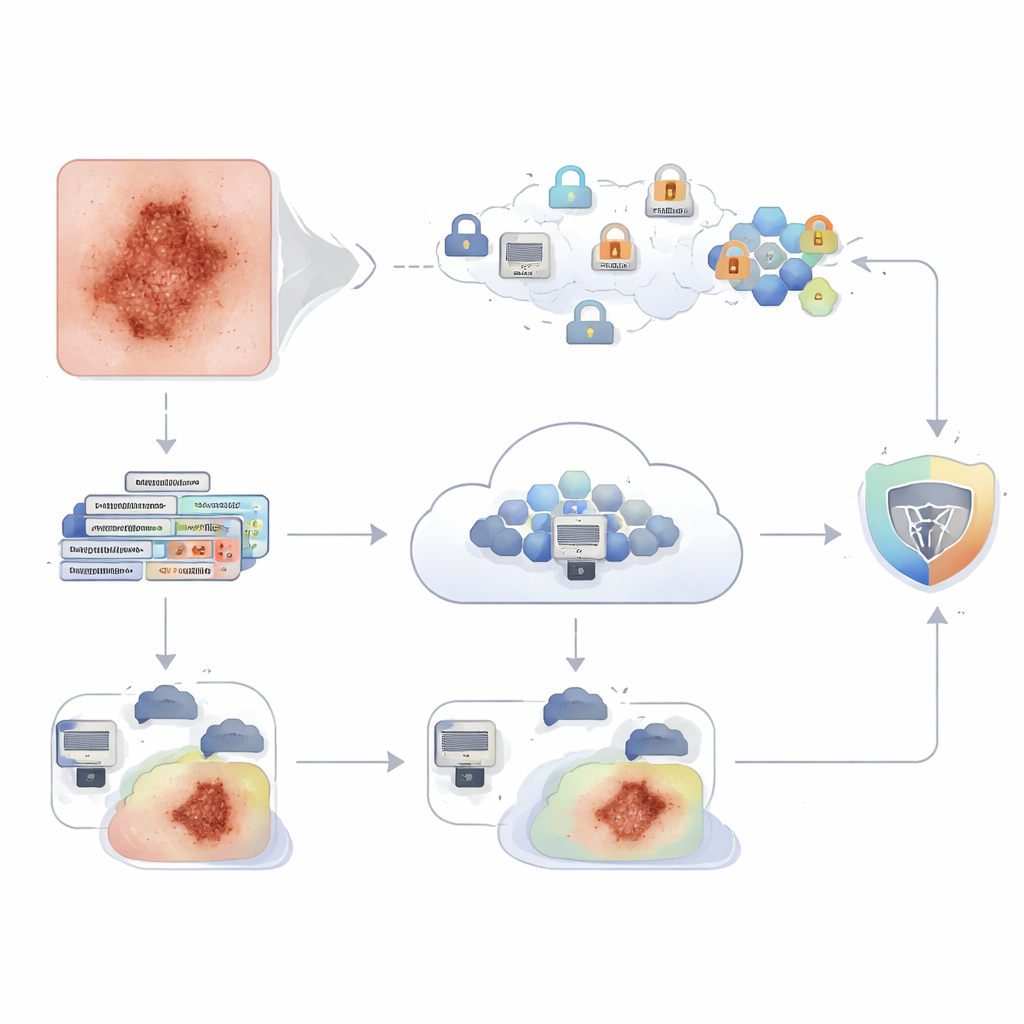

The core idea is a training method called federated learning. Instead of sending skin images to a central server, each hospital keeps its pictures on site and trains a local copy of the same computer model. Only the learned “know‑how” (model updates) is sent to a central server, where it is combined into a better global model and then sent back to all hospitals. In this work, the authors simulate several hospitals cooperating in this way on a large public dataset of skin lesions, so the model benefits from diverse cases while patients’ images never leave their home institution.
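The combining step on the server can be illustrated with a minimal sketch of federated averaging, in which each hospital's update is weighted by how many images it trained on. The function name `fed_avg`, the flat-list representation of model weights, and the example numbers are illustrative assumptions, not details taken from the study.

```python
def fed_avg(client_weights, client_sizes):
    """Weighted average of client model weights (federated averaging).

    client_weights: one flat list of floats per client (hypothetical layout).
    client_sizes:   number of local training images per client.
    """
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Two simulated hospitals: the second holds three times as many images,
# so its weights count three times as much in the global model.
global_weights = fed_avg([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_weights)
```

In a real deployment the lists would be the tensors of a neural network, and the averaged result would be sent back to every hospital to start the next training round.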

A Lean But Sharp-Eyed Image Reader

To make this collaboration practical, the team designed a new lightweight multi‑scale convolutional neural network (LWMS‑CNN). Many popular image models are huge and slow to transmit over hospital networks; by contrast, this model uses under one million trainable parameters, a fraction of what well‑known architectures require. Its structure processes each skin image at several detail levels in parallel, from fine edges and textures to broader patterns, then fuses these clues. This compact design proved both accurate and efficient, beating or matching heavier models like ResNet and DenseNet on standard measures such as accuracy, precision, and F1‑score, while being much smaller and faster—important for use on modest hospital servers or even edge devices.
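To give a feel for how a multi-scale design can stay under one million parameters, the sketch below counts the trainable parameters of a few parallel-branch convolution blocks. The kernel sizes (1, 3, 5), the channel widths, the 1×1 fusion layer, and the three-block stack are hypothetical choices made for illustration; they are not the published LWMS-CNN configuration.

```python
def conv_params(in_ch, out_ch, k):
    """Parameters of one k x k convolution: weights plus one bias per filter."""
    return in_ch * out_ch * k * k + out_ch


def multi_scale_block_params(in_ch, branch_ch, kernels=(1, 3, 5)):
    """Parallel branches at several kernel sizes, fused by a 1x1 convolution.

    Assumed layout: each branch maps in_ch -> branch_ch, the branch outputs
    are concatenated, and a 1x1 convolution reduces them back to branch_ch.
    """
    branches = sum(conv_params(in_ch, branch_ch, k) for k in kernels)
    fuse = conv_params(branch_ch * len(kernels), branch_ch, 1)
    return branches + fuse


# Hypothetical three-block stack on RGB input: 3 -> 32 -> 64 -> 128 channels.
widths = [3, 32, 64, 128]
total = sum(multi_scale_block_params(a, b) for a, b in zip(widths, widths[1:]))
print(f"total trainable parameters: {total:,}")
print(f"approx. size at 4 bytes per weight: {total * 4 / 1e6:.1f} MB")
```

Because federated learning sends the whole set of weights every round, the parameter count translates directly into network traffic, which is why a compact model matters here.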

Locking Down Privacy With Encryption

Although federated learning avoids sending raw images, the shared model updates can still leak information under sophisticated attacks. To close this gap, the authors wrap the whole exchange in homomorphic encryption, a cryptographic technique that lets the server add and average model updates while they remain encrypted. Hospitals encrypt their model changes before sending them; the server only ever sees scrambled numbers, yet can still compute the combined update. Only a trusted party can decrypt the aggregated result. Tests showed that adding this protection barely affected performance: accuracy dropped by only about 0.3 percentage points (from 98.62% to 98.34%), a small price for greatly strengthened privacy and compliance with strict medical data regulations.
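The idea of adding numbers while they stay encrypted can be shown with a toy version of the Paillier cryptosystem, a well-known additively homomorphic scheme. This summary does not say which scheme the authors used, so Paillier is an assumption here, as are the function names, the fixed-point scale, and the key sizes. The primes below are far too small for real security and serve only as a demonstration.

```python
import math
import secrets

SCALE = 10**6  # fixed-point scale for encoding floats as integers (assumed)


def keygen(p=2**31 - 1, q=2**61 - 1):
    """Toy key pair from two small known primes. NOT secure; demo only."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # modular inverse (Python 3.8+)
    return n, (lam, mu)


def encrypt(n, value):
    """Encrypt a float with generator g = n + 1, so g^m = 1 + m*n (mod n^2)."""
    m = round(value * SCALE) % n  # negative values wrap around modulo n
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (1 + m * n) % n2 * pow(r, n, n2) % n2


def add_encrypted(n, c1, c2):
    """Multiplying ciphertexts adds the hidden plaintexts."""
    return c1 * c2 % (n * n)


def decrypt(n, priv, c):
    lam, mu = priv
    m = (pow(c, lam, n * n) - 1) // n * mu % n
    if m > n // 2:  # map the upper half of the range back to negatives
        m -= n
    return m / SCALE


# Three simulated hospitals each encrypt one model-update value.
n, priv = keygen()
updates = [0.12, -0.05, 0.30]
ciphertexts = [encrypt(n, u) for u in updates]

# The server combines ciphertexts without seeing any individual update.
aggregate = ciphertexts[0]
for c in ciphertexts[1:]:
    aggregate = add_encrypted(n, aggregate, c)

# Only the key holder can decrypt the sum and divide to get the average.
average = decrypt(n, priv, aggregate) / len(updates)
print(average)
```

A real system would encrypt every weight of the model this way (or use a scheme that packs many values per ciphertext) and would rely on a vetted cryptographic library with much larger keys.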

Opening the Black Box for Clinicians

High accuracy alone is not enough in medicine; clinicians must understand why an algorithm made a particular call. The study therefore adds explainable‑AI tools on top of the trained model. One tool, SHAP, highlights which parts of an image most influenced a decision by treating each pixel patch as a “player” in a cooperative game and measuring how much that patch contributes to the prediction. Another, Grad‑CAM, overlays a heatmap onto the lesion, showing where the network focused its attention when, for example, calling a spot malignant or benign. Together, these views let dermatologists check that the model is looking at meaningful structures, such as irregular borders or color variations, rather than at hairs, lighting artifacts, or background skin, and scrutinize uncertain or incorrect cases.
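The heatmap step of Grad-CAM can be sketched in a few lines: each feature-map channel is weighted by the spatial average of its gradient with respect to the class score, the weighted channels are summed, and negative values are clipped to zero. The tiny 2×2 arrays below are made-up inputs for illustration; in practice the feature maps and gradients would come from the last convolutional layer of the trained network through a deep-learning framework.

```python
def grad_cam(feature_maps, gradients):
    """Grad-CAM heatmap from K channels of H x W feature maps and gradients.

    Both arguments are lists of K nested lists (H rows of W floats).
    Returns an H x W heatmap scaled to [0, 1].
    """
    h, w = len(feature_maps[0]), len(feature_maps[0][0])

    # Channel importance: global average of each channel's gradient.
    alphas = [sum(sum(row) for row in g) / (h * w) for g in gradients]

    # Weighted sum over channels, then ReLU to keep only positive evidence.
    heat = [
        [
            max(0.0, sum(a * fm[i][j] for a, fm in zip(alphas, feature_maps)))
            for j in range(w)
        ]
        for i in range(h)
    ]

    # Normalize so the strongest location equals 1 (skip if all zero).
    peak = max(max(row) for row in heat)
    if peak > 0:
        heat = [[v / peak for v in row] for row in heat]
    return heat


# Toy example: channel 0 supports the class, channel 1 argues against it.
maps = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 1.0]]]
grads = [[[2.0, 2.0], [2.0, 2.0]], [[-1.0, -1.0], [-1.0, -1.0]]]
print(grad_cam(maps, grads))
```

The resulting grid is then upsampled to the image size and drawn over the lesion, so the top-left cell in this toy case would appear as the hot spot.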

From Lab Tests to Real-World Clinics

The encrypted LWMS‑CNN federated system was trained and evaluated on the HAM10000 skin lesion dataset and then tested on two additional collections, ISIC 2019 and PAD‑UFES‑20, that differ in cameras, lesion types, and patient populations. It achieved high accuracies across all three, suggesting that the approach generalizes well beyond a single source of data. The authors also explored tougher, more realistic settings in which different “hospitals” see different mixes of cases, and compared several ways of combining model updates; the standard FedAvg method worked best. While the experiments ran in a simulated multi‑client setup rather than on physically separate hospitals, the results show that a compact model, privacy‑preserving training, and clear visual explanations can be combined into a single framework. For patients, this points toward future skin cancer checks that are more accurate, more widely available, and more respectful of privacy, while still keeping doctors firmly in the loop.
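One common way to simulate hospitals that see different mixes of cases is to split each lesion class among the clients in uneven, randomly drawn proportions. The sketch below does this with Dirichlet-distributed shares; the summary does not state which partitioning scheme the authors used, so the Dirichlet approach, the function name `dirichlet_partition`, and the parameter `alpha` are assumptions for illustration.

```python
import random
from collections import defaultdict


def dirichlet_partition(labels, num_clients, alpha, seed=0):
    """Split sample indices among clients with class-skewed proportions.

    Smaller alpha gives more uneven class mixes per client.
    Returns a list of index lists, one per client.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)

    clients = [[] for _ in range(num_clients)]
    for idxs in by_class.values():
        rng.shuffle(idxs)
        # A Dirichlet sample is a set of Gamma draws divided by their sum.
        draws = [rng.gammavariate(alpha, 1.0) for _ in range(num_clients)]
        total = sum(draws)
        start, acc = 0, 0.0
        for c, d in enumerate(draws):
            acc += d
            # The last client takes whatever remains, so nothing is dropped.
            if c == num_clients - 1:
                end = len(idxs)
            else:
                end = round(acc / total * len(idxs))
            clients[c].extend(idxs[start:end])
            start = end
    return clients


# 700 synthetic labels over 7 classes (HAM10000 also has 7 lesion classes).
labels = [i % 7 for i in range(700)]
parts = dirichlet_partition(labels, num_clients=4, alpha=0.5, seed=1)
for c, idxs in enumerate(parts):
    counts = [sum(1 for i in idxs if labels[i] == k) for k in range(7)]
    print(f"client {c}: {len(idxs)} images, per-class counts {counts}")
```

Each simulated hospital then trains on its own skewed subset, which makes it possible to compare aggregation methods under conditions closer to real clinical data.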

Citation: Sayeed, A.S.M., Birahim, S.A., Ullah, M.S. et al. Explainable and secure federated learning for privacy-enhancing skin cancer classification using a lightweight multi-scale CNN. Sci Rep 16, 11414 (2026). https://doi.org/10.1038/s41598-026-41360-2

Keywords: skin cancer detection, federated learning, medical data privacy, explainable AI, homomorphic encryption