Clear Sky Science

A novel lightweight hybrid CNN–ViT for maize leaf disease classification

Helping Farmers Spot Sick Corn Plants Sooner

Maize, or corn, feeds people and livestock and is even turned into fuel for cars. But hidden infections in its leaves can quietly cut yields and livelihoods. This study introduces a smart, lightweight computer-vision system that spots disease in corn plants automatically, even in messy real-world field images. By combining two different types of image-recognition network and tailoring them for low-cost devices, the researchers show how farmers could one day use phones, drones, or simple cameras to monitor crop health quickly and accurately.

Why Corn Diseases Are Hard to Catch

In real fields, corn plants rarely pose neatly for the camera. Leaves overlap, lighting changes, and soil or pots clutter the background. Human experts walking through fields can miss subtle early symptoms, and their time is limited. Many existing image-based tools are trained on idealized photos showing a single leaf against a plain backdrop—quite unlike the tangle of leaves a drone or fixed camera actually sees. That mismatch means today’s algorithms often struggle once they leave the laboratory, especially when they also need to run on modest hardware such as mobile phones or small edge devices.

Two Ways Machines “See” and Why They Need Each Other

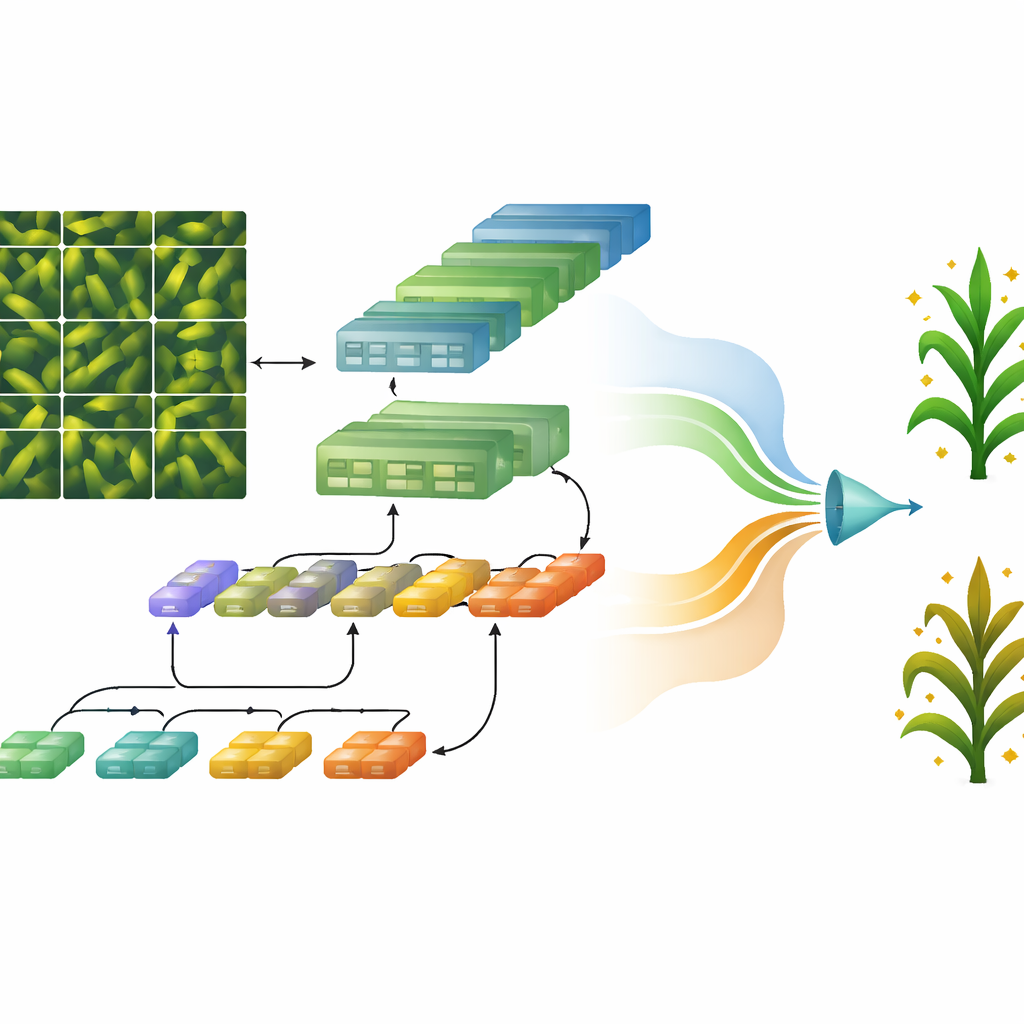

Modern image-recognition systems tend to rely on either convolutional neural networks or a newer family called vision transformers. Convolutional networks excel at picking up fine details such as edges and spots in small neighborhoods of an image, making them good at finding local disease clues. Transformers, on the other hand, are better at understanding the bigger picture—how patterns relate across widely separated parts of an image—but they typically demand huge training sets and powerful computers. Used alone, each approach has drawbacks: convolutions can miss long-range context, while transformers can be too heavy and data-hungry for everyday farm use.
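To make the local-versus-global contrast concrete, here is a toy sketch in Python with NumPy (an illustrative choice, not code from the study). It treats a short row of feature vectors as stand-ins for image patches, then compares a three-tap convolution with a bare, unparameterized self-attention step by changing only the last position and checking whether the output at the first position notices.

```python
import numpy as np

rng = np.random.default_rng(1)
x = rng.normal(size=(10, 4))  # 10 positions (e.g. a row of patches), 4 features each

def conv1d(x, k):
    # 3-tap convolution with zero padding: output i depends only on positions i-1, i, i+1
    p = np.pad(x, ((1, 1), (0, 0)))
    return k[0] * p[:-2] + k[1] * p[1:-1] + k[2] * p[2:]

def self_attention(x):
    # unparameterized self-attention: output i is a weighted mix of ALL positions
    s = x @ x.T / np.sqrt(x.shape[1])
    w = np.exp(s - s.max(axis=1, keepdims=True))  # numerically stable softmax
    w /= w.sum(axis=1, keepdims=True)
    return w @ x

k = np.array([0.25, 0.5, 0.25])
x_far = x.copy()
x_far[9] += 5.0  # change only the last position

print(np.allclose(conv1d(x, k)[0], conv1d(x_far, k)[0]))            # True: position 0 cannot see position 9
print(np.allclose(self_attention(x)[0], self_attention(x_far)[0]))  # False: attention reaches across
```

A real convolutional network widens its view by stacking many such layers, which is one reason convolutions can miss long-range context unless the network is deep.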

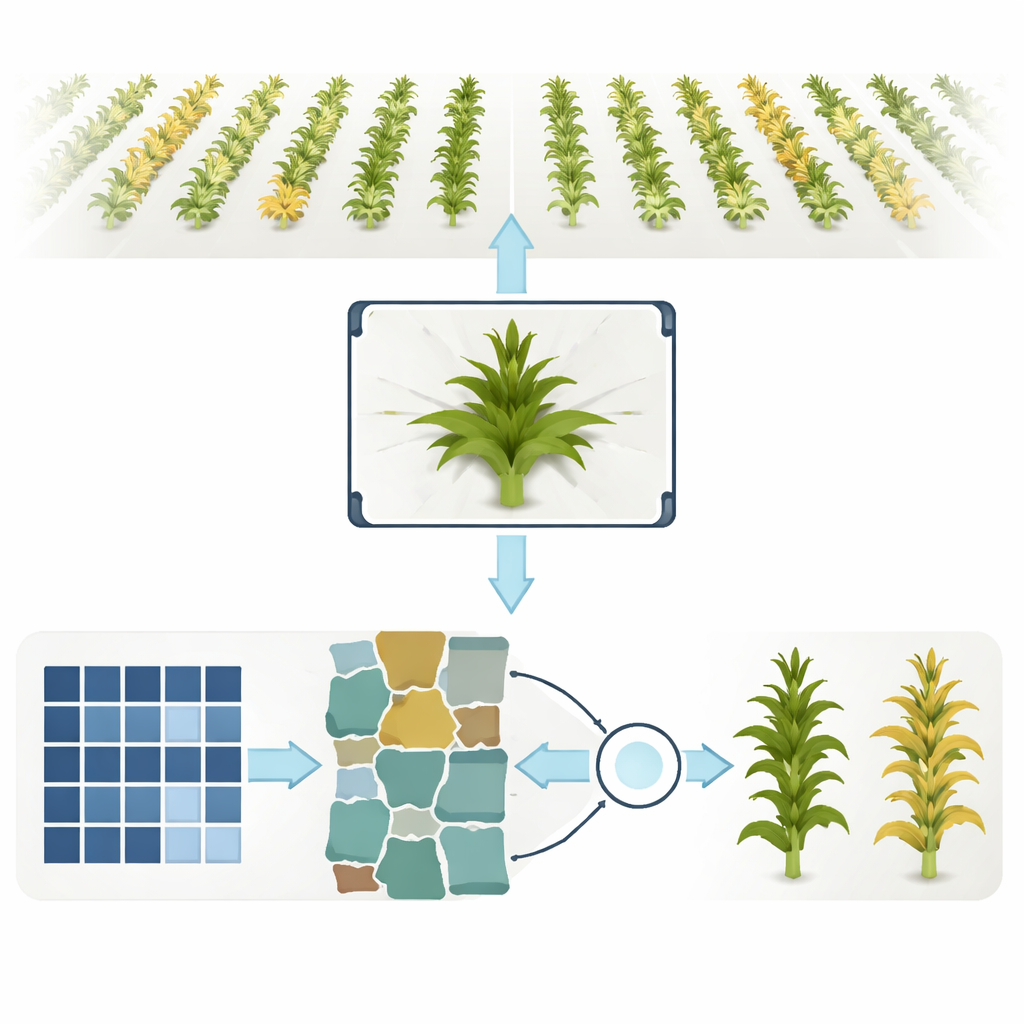

A Lightweight “Team of Experts” Model

The researchers designed a hybrid model, called MXiT, that deliberately combines these two ways of seeing. Incoming plant images are first broken into overlapping patches so that small textures are preserved. One path through the network uses convolutional layers to focus on local textures and leaf details; another path uses a streamlined attention mechanism inspired by transformers to capture global structure across the whole plant canopy. A simple gating unit then decides, for each image, how much to trust the "local-detail expert" versus the "global-context expert," blending their outputs into a single prediction of the plant's health status, such as healthy or diseased. Crucially, the attention component is pared down and optimized so that the overall system uses few parameters and relatively little computation, making it suitable for portable devices.
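The flow just described (overlapping patches, a local branch, a global branch, and a per-image gate) can be sketched in a few dozen lines. This is a hypothetical NumPy illustration, not the authors' MXiT code: the patch size and stride, the depthwise 3x3 convolution standing in for the local branch, the single-head attention standing in for the streamlined global branch, the one-scalar-per-image sigmoid gate, and every name below are assumptions chosen for clarity, and the weights are random rather than trained.

```python
import numpy as np

def overlapping_patches(img, size=8, stride=4):
    # stride < size, so neighbouring patches share pixels and a small lesion
    # on a patch border is not cut in half
    h, w, _ = img.shape
    rows = range(0, h - size + 1, stride)
    cols = range(0, w - size + 1, stride)
    return np.array([[img[i:i + size, j:j + size].ravel() for j in cols] for i in rows])

def local_branch(tokens, kernel):
    # depthwise 3x3 convolution over the patch grid: each output token sees
    # only its 3x3 neighbourhood (the hypothetical "local-detail expert")
    gh, gw, _ = tokens.shape
    padded = np.pad(tokens, ((1, 1), (1, 1), (0, 0)))
    out = np.zeros_like(tokens)
    for di in range(3):
        for dj in range(3):
            out += kernel[di, dj] * padded[di:di + gh, dj:dj + gw]
    return out

def global_branch(tokens, wq, wk, wv):
    # single-head self-attention: every token attends to every other token
    # (the hypothetical "global-context expert")
    gh, gw, d = tokens.shape
    x = tokens.reshape(-1, d)
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(q.shape[1])
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))
    weights /= weights.sum(axis=1, keepdims=True)
    return (weights @ v).reshape(gh, gw, -1)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def classify(img, p):
    tokens = overlapping_patches(img) @ p["embed"]  # (grid_h, grid_w, d)
    local = local_branch(tokens, p["kernel"])
    glob = global_branch(tokens, p["wq"], p["wk"], p["wv"])
    # gating unit: one scalar in (0, 1) per image, computed from the average token
    gate = sigmoid(tokens.mean(axis=(0, 1)) @ p["w_gate"] + p["b_gate"])
    fused = gate * local + (1.0 - gate) * glob
    # 2 outputs here (e.g. healthy vs infected); multi-class data would need more
    logits = fused.mean(axis=(0, 1)) @ p["head"]
    probs = np.exp(logits - logits.max())
    return probs / probs.sum(), gate

d = 16
rng = np.random.default_rng(0)
params = {
    "embed": rng.normal(0, 0.05, (8 * 8 * 3, d)),
    "kernel": rng.normal(0, 0.1, (3, 3, d)),
    "wq": rng.normal(0, 0.1, (d, 8)),
    "wk": rng.normal(0, 0.1, (d, 8)),
    "wv": rng.normal(0, 0.1, (d, d)),
    "w_gate": rng.normal(0, 0.1, d),
    "b_gate": 0.0,
    "head": rng.normal(0, 0.1, (d, 2)),
}
img = rng.random((32, 32, 3))  # random stand-in for a small RGB plant image
probs, gate = classify(img, params)
print(probs, gate)
```

Because the gate is computed from the image itself, a trained version of this design could lean on one branch for some images and the other branch for others; with the random weights above, the gate value carries no meaning and only shows where the blending happens.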

Testing on Realistic and Benchmark Datasets

To see how well the model works outside of ideal conditions, the team relied on a challenging dataset of top-down maize images known as PlantScanner. Each frame shows an entire plant from above, with multiple overlapping leaves and natural variation in shape. A plant is labeled "infected" if any leaf shows symptoms of a fungus called Ustilago maydis. The same model was also evaluated on a well-known benchmark collection of maize leaf photos called PlantVillage, which includes several distinct disease types as well as healthy leaves. On both datasets, MXiT was trained from scratch and compared against established lightweight and transformer-based models such as MobileViT, PiT, EdgeNeXt, and DeiT.

Near-Perfect Accuracy with Less Computing Power

On the demanding PlantScanner dataset, MXiT reached about 99.9% accuracy while using fewer model parameters and lower computational cost than its competitors. It converged quickly during training and behaved stably, unlike some alternatives whose accuracy fluctuated or lagged despite their larger size. On the PlantVillage benchmark, the hybrid model again achieved top-tier accuracy with the smallest footprint among the best-performing systems. Visualizations of where different models "look" in the images revealed that MXiT consistently focused on biologically meaningful regions, such as stressed leaf tissue and plant centers, while other models often wasted attention on soil or background. This hints that the hybrid design is not only accurate but also more interpretable.

What This Means for the Future of Crop Care

For a non-specialist, the core message is simple: by letting two complementary vision systems work together and share the load efficiently, MXiT can spot corn leaf disease in realistic field-style images with almost perfect reliability, without needing a supercomputer. This kind of compact, accurate model could power practical tools that run on drones, tractors, or smartphones, giving farmers early warnings before problems spread. While the current work focuses on whether a plant is healthy or sick, the same approach could be extended to estimate how severe an infection is, paving the way for smarter, more precise, and less chemical-intensive crop management in the years ahead.

Citation: Mehdipour, S., Mirroshandel, S.A. & Tabatabaei, S.A. A novel lightweight hybrid CNN–ViT for maize leaf disease classification. Sci Rep 16, 10468 (2026). https://doi.org/10.1038/s41598-026-41190-2

Keywords: maize leaf disease detection, hybrid CNN transformer, plant phenotyping, precision agriculture, lightweight deep learning