Clear Sky Science

Staining normalization in histopathology: Method benchmarking using multicenter dataset

Sharper tissue images for doctors and computers

When pathologists look at tissue samples under the microscope, they rely on subtle shades of pink and purple to decide if cells are healthy or cancerous. Today those colors can vary a lot from one hospital lab to another, which not only complicates human diagnosis but also trips up artificial intelligence tools trained on these images. This study set out to measure just how big that color problem is and to test which computer techniques work best to make slide images look more alike without losing important detail.

Why color varies from lab to lab

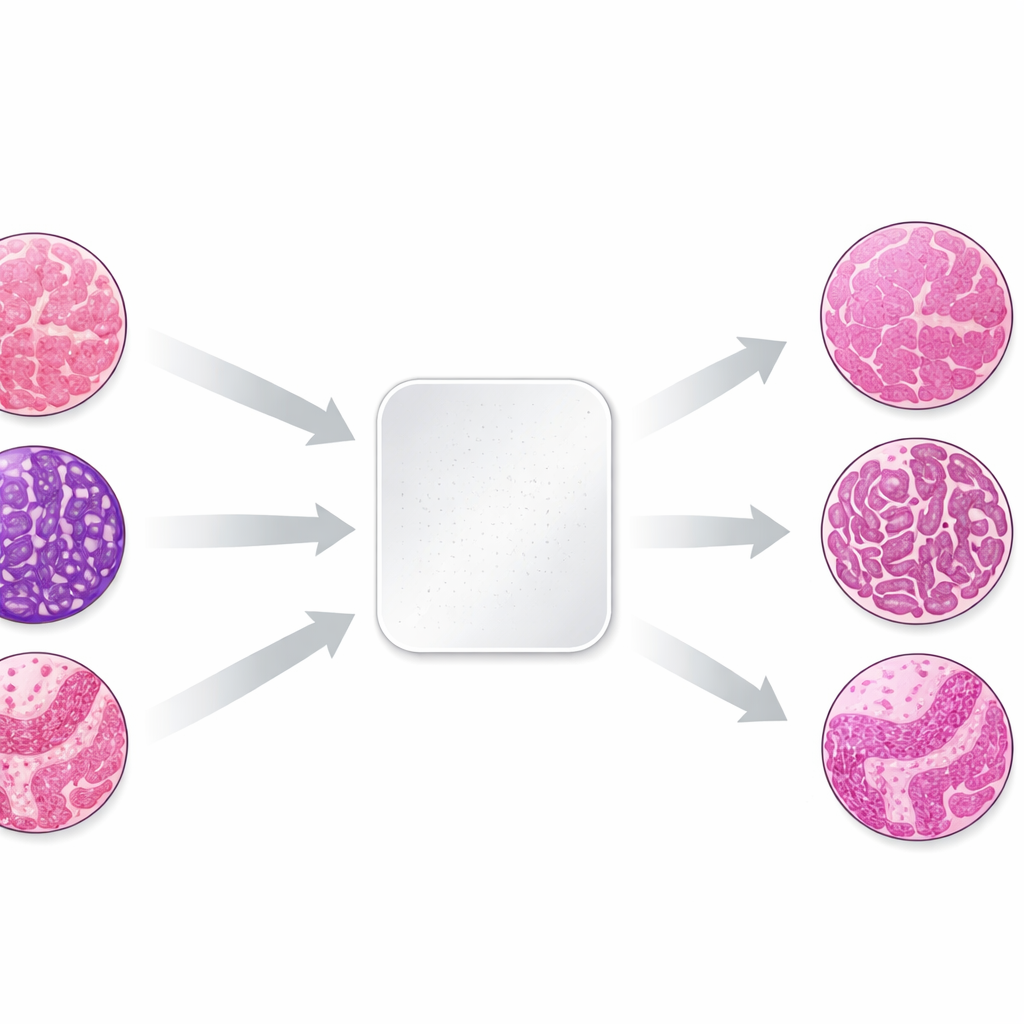

The work focuses on the most common dye pair in pathology, hematoxylin and eosin: hematoxylin stains cell nuclei bluish-purple, and eosin stains the surrounding tissue pink. Small differences in how labs fix, process, and stain tissue, and in how scanners capture images, can shift these colors dramatically. To study this effect in a controlled way, the authors took three tiny tissue samples (skin, kidney, and colon) from the same donor blocks and sent near-identical unstained sections to 66 laboratories in 11 countries. Each lab used its routine staining procedure, and the finished slides were then digitized. Because the biological material was nearly identical, any differences in appearance mainly reflected how each lab stained and imaged the tissue.

Building a unique testbed for color correction

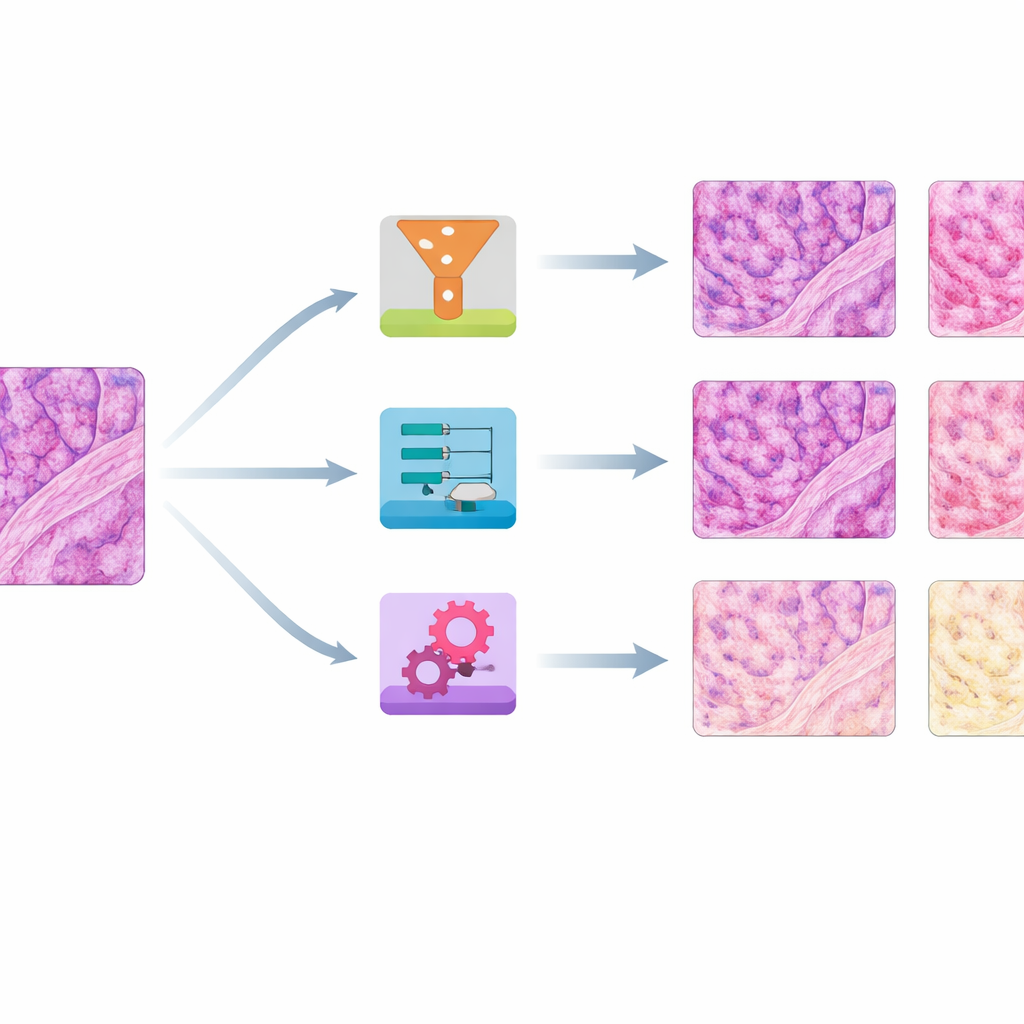

The resulting image collection showed striking variation: slides from the same tissue block could range from pale to almost black, or drift from cool to very warm hues. The team first quantified these differences by measuring average red and blue color levels in each slide. They then chose a single well-balanced slide per tissue type as a reference and applied eight different stain normalization methods to all the others. Four methods were older, math-based approaches that adjust global color statistics or separate and rescale stain components. Four were based on modern "generative" AI, which learns how to transform images from one color style to another using neural networks.
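As a rough illustration of the first step, measuring average red and blue levels per slide, here is a minimal Python sketch. It is not the authors' code. The function name `mean_red_blue`, the `background_threshold` value, and the idea of skipping near-white background pixels are all assumptions made for this example; the study may have computed its averages differently.

```python
import numpy as np

def mean_red_blue(slide_rgb, background_threshold=220):
    """Return (mean_red, mean_blue) for an RGB uint8 image of shape (H, W, 3).

    Assumption for this sketch: pixels whose average brightness is at or
    above `background_threshold` are treated as empty glass and ignored.
    """
    pixels = slide_rgb.reshape(-1, 3).astype(np.float64)
    tissue = pixels[pixels.mean(axis=1) < background_threshold]
    if tissue.size == 0:
        # No pixel passed the filter, so fall back to the whole image.
        tissue = pixels
    return float(tissue[:, 0].mean()), float(tissue[:, 2].mean())

# Tiny synthetic "slide": two tissue-colored pixels and two near-white ones.
demo = np.array(
    [[[200, 100, 150], [100, 50, 50]],
     [[255, 255, 255], [250, 250, 250]]],
    dtype=np.uint8,
)
red, blue = mean_red_blue(demo)
```

Comparing these two numbers across slides from different labs gives a simple picture of how far each lab drifts toward warmer (more red) or cooler (more blue) tones.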

Which methods worked best on color and structure

To judge performance, the authors asked two main questions: How closely did the corrected images match the reference colors, and how well did they preserve fine tissue structure? They used several numerical scores that compare color distributions, a high-level image similarity measure borrowed from computer vision, and a structural index that is sensitive to blurring or distortions. Across skin, kidney, and colon, a simple method called histogram matching—essentially reshaping each slide’s color distribution to mimic the reference—consistently produced the closest color match while keeping structures mostly intact. Another traditional approach, Reinhard normalization, often performed nearly as well. A third, Vahadane, excelled at preserving structure but tended to push everything toward a pink tone and to suppress the blue nuclear stain.
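To make the idea of histogram matching concrete, the sketch below reshapes each color channel of a source image so that its cumulative distribution follows that of a reference image. This is one common textbook formulation written with NumPy, not the implementation benchmarked in the study. The function names `match_channel` and `histogram_match` are chosen for this example, and the choice to match the red, green, and blue channels independently is an assumption.

```python
import numpy as np

def match_channel(source, reference):
    """Map the values of one channel so its distribution follows the reference."""
    src_values, src_idx, src_counts = np.unique(
        source.ravel(), return_inverse=True, return_counts=True
    )
    ref_values, ref_counts = np.unique(reference.ravel(), return_counts=True)

    # Cumulative distribution functions of both channels, scaled to [0, 1].
    src_cdf = np.cumsum(src_counts) / source.size
    ref_cdf = np.cumsum(ref_counts) / reference.size

    # For each source intensity, pick the reference intensity that sits at
    # the same cumulative position.
    mapped = np.interp(src_cdf, ref_cdf, ref_values)
    return mapped[src_idx.ravel()].reshape(source.shape)

def histogram_match(source_rgb, reference_rgb):
    """Match each RGB channel of `source_rgb` to `reference_rgb` (uint8 images)."""
    channels = [
        match_channel(source_rgb[..., c], reference_rgb[..., c]) for c in range(3)
    ]
    out = np.stack(channels, axis=-1)
    return np.clip(np.rint(out), 0, 255).astype(np.uint8)

# Tiny example: a dark source image takes on the brighter reference values.
src = np.stack([np.arange(4, dtype=np.uint8).reshape(2, 2)] * 3, axis=-1)
ref = np.stack([np.array([[40, 10], [30, 20]], dtype=np.uint8)] * 3, axis=-1)
out = histogram_match(src, ref)
```

Because the mapping depends only on how often each intensity occurs and not on where pixels sit in the image, it changes colors without moving or blurring any structures, which fits the article's observation that structure stayed mostly intact.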

How the images looked to human experts and AI tools

Experienced pathologists reviewed normalized colon slides to see how the methods affected real-world interpretability. They checked whether important layers and cell types remained easy to distinguish, whether over- or under-stained originals were improved, and whether any odd digital artifacts appeared. No single method fixed every problem, but histogram matching generally yielded even, reference-like colors without obvious artifacts, especially in heavily over-stained samples. Some AI-based methods, particularly certain versions of CycleGAN and Pix2pix, produced realistic-looking results but occasionally introduced subtle fake structures or color glitches in blood cells and background areas. The team also showed that normalization changed how a state-of-the-art cell detection algorithm counted nuclei and how a large "foundation" model represented the slides, underscoring that color correction can strongly influence downstream AI behavior.

What this means for future digital diagnosis

Overall, the study reveals that color differences between laboratories are large enough to matter for both human readers and automated systems, and that making images more uniform is an important step toward reliable, shareable digital pathology. Surprisingly, in this carefully controlled dataset with very similar tissue content, straightforward global methods like histogram matching often outperformed more complex deep-learning techniques, which need far more training data than a single slide per lab. The authors release their 66-center dataset openly so others can benchmark new methods and better design training data that reflects real-world variation. For patients, progress in this area could translate into AI systems that travel well from one hospital to another, offering more consistent diagnoses no matter where a biopsy is processed.

Citation: Khan, U., Härkönen, J., Friman, M. et al. Staining normalization in histopathology: Method benchmarking using multicenter dataset. Sci Rep 16, 11097 (2026). https://doi.org/10.1038/s41598-026-40943-3

Keywords: digital pathology, stain normalization, histology imaging, medical AI, color variation