Clear Sky Science

Pan-cancer frozen section classification using a soft mixture of experts vision transformer under weak supervision

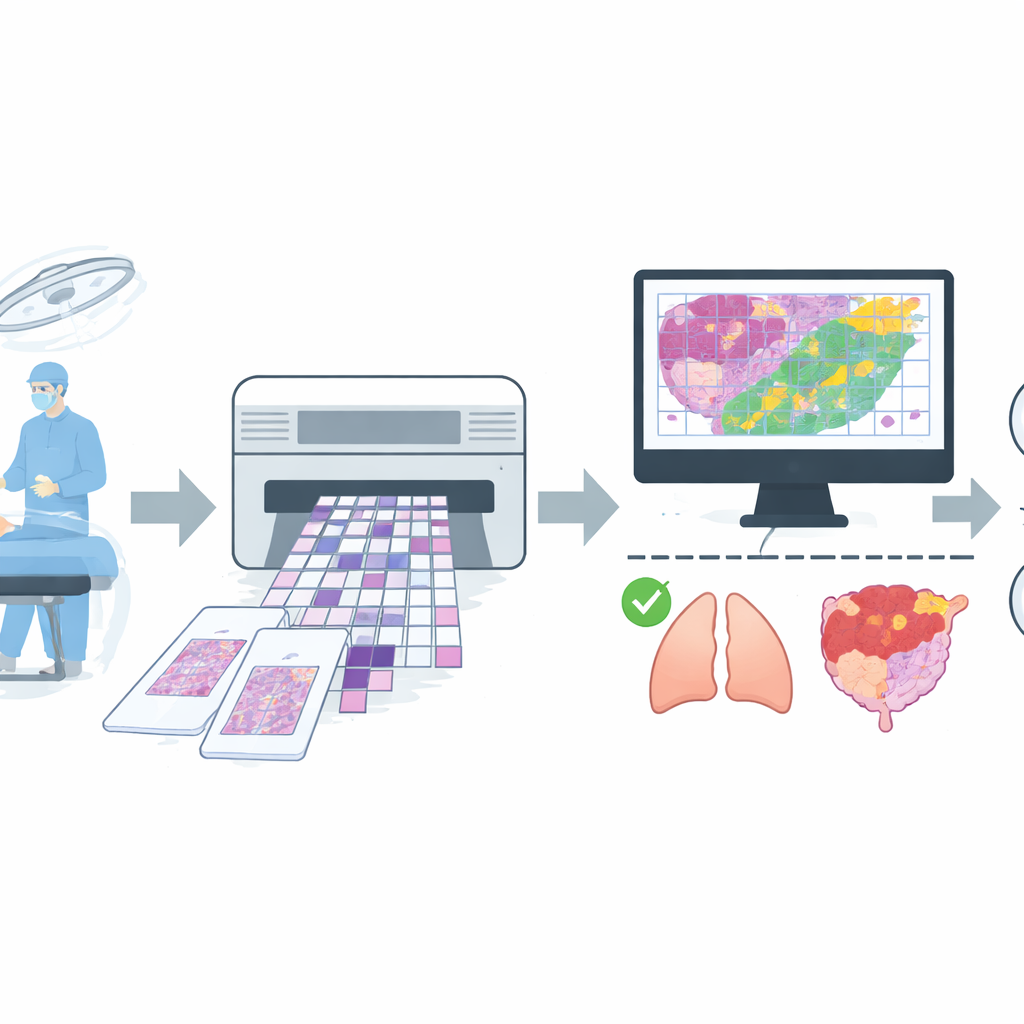

Why this matters in the operating room

When surgeons remove a suspected tumor, they often have only minutes to find out whether all the cancer has been taken out. Pathologists rush to examine a rapidly frozen slice of tissue and give an answer while the patient is still on the table. This high‑pressure process can be hampered by blurry slides, subtle tumors, and simple time limits. The study described here explores how an artificial intelligence (AI) system can help pathologists quickly and reliably distinguish harmless from dangerous tissue across many different organs, using equipment that is realistic for everyday hospitals.

A fast test with built‑in challenges

Frozen section analysis is a staple of modern surgery: a thin piece of tissue is frozen, sliced, stained, and viewed under the microscope to judge whether it is benign or malignant and whether the surgical margin is clear. Unlike permanent laboratory slides, frozen sections often suffer from cracks, folds, and uneven staining. Different pathologists may disagree on borderline cases, and the clock is always ticking. These issues are especially serious in smaller or busy hospitals, where only a few specialists must cover many types of cancer. The authors argue that a robust computer‑based assistant could make frozen section decisions more consistent, faster, and more widely available.

Building a wide‑ranging real‑world picture set

To train such an assistant, the team assembled a large collection of digital images from routine operations at a major hospital. They gathered 4,754 whole‑slide images of frozen tissue from more than 2,600 patients, then applied strict quality rules to remove slides with severe artifacts or uncertain diagnoses. The final dataset contained 4,667 slides, each labeled simply as benign or malignant based on agreement between the quick frozen reading and the later, more careful permanent section report. The slides covered common sites such as lung, breast, thyroid, lymph nodes, and female reproductive organs, plus a mixed group of less frequent locations like stomach, liver, and skin. The data were split into separate groups for training, fine‑tuning, and final testing, with care taken so that images from the same patient never appeared in more than one group.
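The patient-level separation described above can be illustrated with a minimal sketch. It is not the authors' code: the function name `split_by_patient`, the 70/15/15 proportions, the group names, and the toy slide and patient IDs are all assumptions made for illustration. The point is only that patients, not slides, are shuffled and divided, so every slide from one patient lands in the same group.

```python
import random
from collections import defaultdict


def split_by_patient(slide_to_patient, fractions=(0.7, 0.15, 0.15), seed=0):
    """Split slides into train/tune/test so that no patient spans two groups.

    slide_to_patient: dict mapping slide ID -> patient ID (hypothetical IDs).
    fractions: assumed proportions of PATIENTS for train and tune; test gets the rest.
    """
    # Group slide IDs under the patient they came from.
    by_patient = defaultdict(list)
    for slide_id, patient_id in slide_to_patient.items():
        by_patient[patient_id].append(slide_id)

    # Shuffle patients (not slides) reproducibly.
    patients = sorted(by_patient)
    random.Random(seed).shuffle(patients)

    n_train = int(len(patients) * fractions[0])
    n_tune = int(len(patients) * fractions[1])
    patient_groups = {
        "train": patients[:n_train],
        "tune": patients[n_train:n_train + n_tune],
        "test": patients[n_train + n_tune:],
    }

    # Expand each patient group back into its slides.
    return {
        name: [s for p in group for s in by_patient[p]]
        for name, group in patient_groups.items()
    }


# Toy example: 40 made-up slides from 10 made-up patients.
toy_slides = {f"slide_{i}": f"patient_{i % 10}" for i in range(40)}
toy_split = split_by_patient(toy_slides)
print({name: len(ids) for name, ids in toy_split.items()})
```

Splitting at the slide level instead would risk placing near-identical tissue from one patient in both the training and test groups, which tends to inflate test scores.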

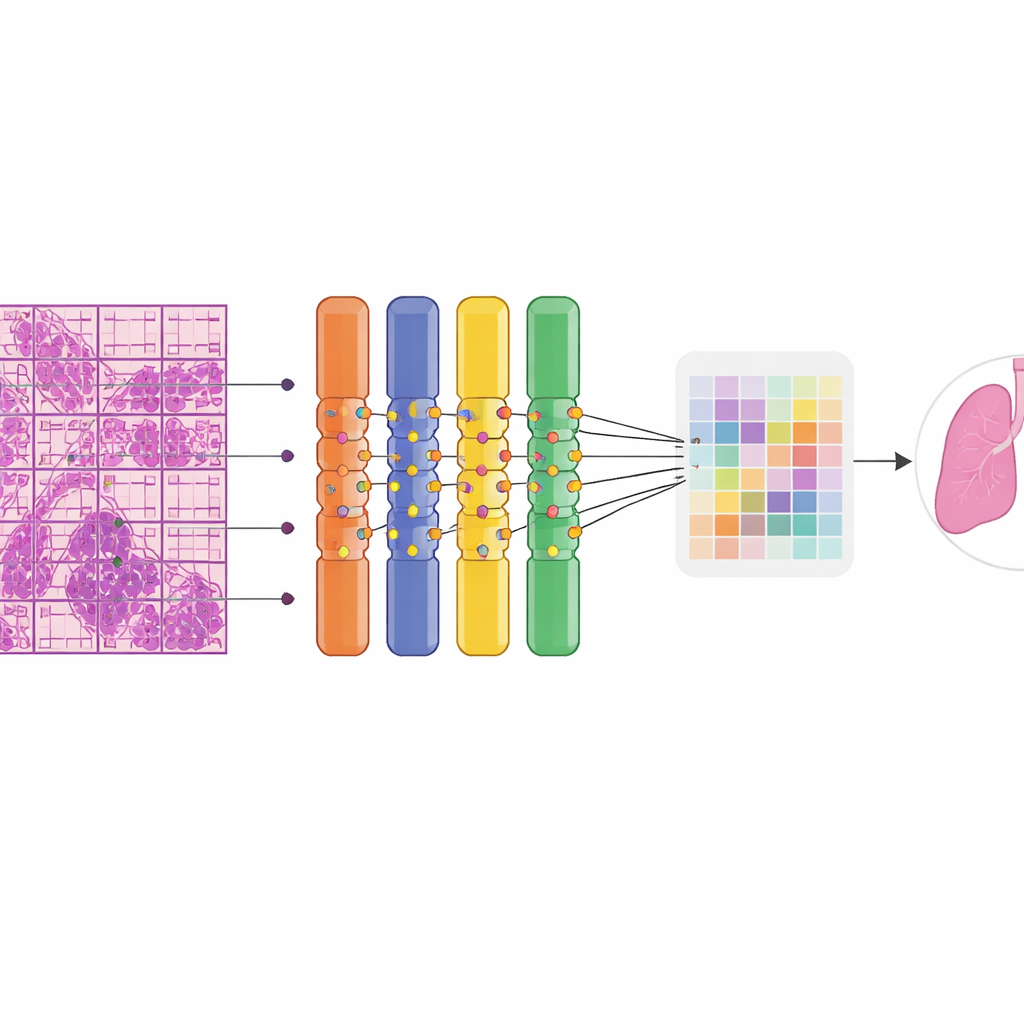

How the AI learns from weak clues

The researchers built their model on a class of neural network called a Vision Transformer, which excels at finding patterns across large images. Each giant tissue image was automatically chopped into many smaller tiles and patches so it could be processed on standard graphics hardware. A key innovation was replacing part of the network with a “soft mixture of experts,” a set of many small specialist branches that each focus on different visual patterns. Rather than switching experts on and off, the system blends their contributions smoothly, which stabilizes training and makes better use of limited data. Because pathologists did not draw outlines around tumors, the model had to learn from weak supervision: it knew only whether an entire slide was benign or malignant. A multiple‑instance learning strategy promoted the most suspicious patches within a malignant slide to serve as positive examples, allowing the network to gradually home in on the most informative regions.
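Two of the ideas in this section, smooth blending of experts and promoting the most suspicious patches, can be sketched in a few lines. This is a deliberately simplified illustration and not the study's architecture: it uses a plain softmax gate over every expert for a single patch, and the names `soft_blend` and `top_k_patches`, the toy experts, and all numbers are assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def soft_blend(token, gate_weights, experts):
    """Blend the outputs of ALL experts for one patch embedding.

    token: list of floats standing in for a patch embedding.
    gate_weights: one weight vector per expert; its dot product with the
        token gives that expert's gate logit.
    experts: list of callables, each mapping a token to an output vector.
    """
    logits = [sum(w * x for w, x in zip(weights, token)) for weights in gate_weights]
    mix = softmax(logits)  # every expert gets a non-zero share
    outputs = [expert(token) for expert in experts]
    dim = len(outputs[0])
    return [sum(m * out[d] for m, out in zip(mix, outputs)) for d in range(dim)]


def top_k_patches(patch_scores, k):
    """Return the indices of the k patches with the highest malignancy scores."""
    ranked = sorted(range(len(patch_scores)), key=lambda i: patch_scores[i], reverse=True)
    return ranked[:k]


# Toy experts: one doubles the input, the other negates it.
toy_experts = [lambda t: [2 * x for x in t], lambda t: [-x for x in t]]
# Zero gate weights give equal logits, so each expert contributes one half.
blended = soft_blend([1.0, 2.0], [[0.0, 0.0], [0.0, 0.0]], toy_experts)
print(blended)  # [0.5, 1.0]

# In a slide labeled malignant, the top-scoring patches act as positive examples.
print(top_k_patches([0.1, 0.9, 0.4, 0.7], k=2))  # [1, 3]
```

Because the softmax never assigns an expert a weight of exactly zero, every expert receives a learning signal from every patch, which is one intuition for why soft blending can be more stable than hard on/off routing.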

How well the system works in practice

When tested on 669 unseen slides, the AI distinguished benign from malignant tissue with high accuracy. It correctly classified about 90% of cases overall and showed an excellent ability to separate the two groups across probability thresholds. Sensitivity, the chance of flagging a truly malignant slide, was around four in five, while specificity, the chance of correctly calling a benign slide benign, was even higher. Importantly, performance remained strong across organs: it perfectly detected all malignant lung and breast cases in the test set and performed well on rarer groups such as female adnexal tumors and mixed “other” organs. Color heatmaps overlaying the slides revealed that the model’s attention concentrated on regions that expert pathologists recognized as tumor, including metastatic foci in lymph nodes, while largely ignoring normal structures. The system ran efficiently, needing less than 5 GB of memory, making it suitable for use on commonly available graphics cards rather than expensive clusters.
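For readers unfamiliar with these measures, the sketch below shows how accuracy, sensitivity, and specificity are computed from slide-level predictions. The labels are made-up toy data, not the study's results, and the function name `binary_metrics` is an assumption.

```python
def binary_metrics(y_true, y_pred):
    """Compute accuracy, sensitivity, and specificity for 0/1 labels.

    1 = malignant, 0 = benign. Returns None for a rate whose denominator is zero.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # malignant, flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # malignant, missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # benign, cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # benign, flagged

    def rate(numerator, denominator):
        return numerator / denominator if denominator else None

    return {
        "accuracy": rate(tp + tn, len(pairs)),
        "sensitivity": rate(tp, tp + fn),
        "specificity": rate(tn, tn + fp),
    }


# Toy labels only: five malignant and five benign slides, one malignant slide missed.
toy_truth = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
toy_calls = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
print(binary_metrics(toy_truth, toy_calls))
# {'accuracy': 0.9, 'sensitivity': 0.8, 'specificity': 1.0}
```

In a frozen section setting, a missed malignancy (a false negative) and a false alarm (a false positive) carry different surgical consequences, which is why the two rates are reported separately rather than folded into accuracy alone.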

Limits, missteps, and room to improve

The authors also examined where the AI stumbled. False‑negative results often involved extremely sparse cancer cells, blurred scanning areas, or heavy inflammation that disguised malignant nests. False positives tended to arise in benign conditions that mimic cancer under the microscope, such as reactive overgrowths or distorted frozen tissue. Because routine surgical workflows do not include detailed outlines of tumor regions, the team could not quantify exactly how well the heatmaps matched expert markings, relying instead on qualitative review. Some organ types, like tongue or certain soft tissue tumors, remained underrepresented, hinting that larger, multi‑center collections will be needed to extend the system’s reach.

What this could mean for patients and hospitals

Overall, the study demonstrates that a carefully designed AI system can accurately and interpretably assist with a core task of surgical pathology: deciding, in real time, whether tissue is benign or malignant across many organ types. By operating with only slide‑level labels, running on widely available hardware, and highlighting suspicious areas for human review, the model offers a practical path toward more consistent frozen section decisions. For patients, this could translate into better‑informed surgery in a single operation; for hospitals, especially those with limited expert staff, it points toward a future where advanced digital tools help deliver high‑quality cancer care more equitably.

Citation: Wu, J., Yang, M., Li, J. et al. Pan-cancer frozen section classification using a soft mixture of experts vision transformer under weak supervision. Sci Rep 16, 10297 (2026). https://doi.org/10.1038/s41598-026-40924-6

Keywords: frozen section, digital pathology, cancer diagnosis, vision transformer, weakly supervised learning