Clear Sky Science

Explainable AI for gastrointestinal lesion surveillance and precision targeted drug delivery

Smarter Scans, Safer Treatments

Many people dread the idea of cancer drugs because of their harsh side effects. This research explores a future in which tiny swallowable cameras, smart algorithms, and microscopic drug carriers work together so that powerful medicines are delivered only where they are truly needed. By closing the loop between seeing a problem in the gut and treating it on the spot, the authors aim to make gastrointestinal care more accurate, less invasive, and much safer.

Tiny Camera on a Journey

At the heart of the system is a wireless ingestible imaging device, a vitamin‑sized capsule that travels naturally through the digestive tract while taking tens of thousands of pictures. Instead of relying solely on a doctor to review this flood of images, the capsule sends them to a wearable unit outside the body. There, a compact computer uses advanced pattern‑recognition software to sort normal tissue from suspicious lesions that could be cancerous or severely inflamed. This setup mirrors the capsule endoscopy already used in hospitals but is upgraded to work in real time and to connect directly with treatment tools.

Artificial Intelligence as the Decision Maker

The wearable unit runs a carefully trained image‑analysis model based on modern computer vision techniques. It learned to recognize 25 different gastrointestinal conditions, from polyps and ulcers to severe inflammation, using a large public collection of endoscopy and tissue images. Because some diseases are much rarer than others, the authors trained the system in two stages: first to learn general visual fingerprints of each condition, and then to fine‑tune it so that dangerous but uncommon findings are not ignored. In tests, this approach correctly classified images more than nine times out of ten and did especially well on cancer‑related categories.
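
One common way to keep rare findings from being ignored during a fine‑tuning stage is to weight each class inversely to how often it appears. The summary does not say which rebalancing method the authors used, so the sketch below is only an assumption: it applies the widely used "balanced" heuristic (total samples divided by the number of classes times the class count), and the class names and counts are made up for illustration.

```python
from typing import Dict


def class_weights(counts: Dict[str, int]) -> Dict[str, float]:
    """Inverse-frequency weights: rare classes get proportionally larger weights."""
    total = sum(counts.values())
    n_classes = len(counts)
    return {name: total / (n_classes * count) for name, count in counts.items()}


# Hypothetical image counts per condition (not taken from the study).
counts = {"normal": 9000, "polyp": 900, "cancer": 100}
weights = class_weights(counts)

for name in counts:
    print(f"{name}: {counts[name]} images, weight {weights[name]:.2f}")
```

With these weights, every class contributes the same total amount to a weighted training loss, so a handful of cancer images counts as much as thousands of normal ones.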

Seeing Inside the "Black Box"

Because medical staff must trust any automated diagnosis that might influence a drug dose, the authors used explainable AI techniques to show which parts of each image drive the model’s decision. Heat‑map style overlays highlight the exact regions the system considered important. These explanation maps were not just inspected by eye; they were scored with quantitative tests that measured how much the model’s confidence changed when highlighted regions were removed or added, how stable the explanations were across repeated training runs, and how well they overlapped with expert‑drawn lesion outlines. Among several methods tested, one called LayerCAM produced the most faithful and consistent explanations, helping doctors verify that the system is “looking” in the right place.
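
Two of the checks described above can be illustrated with a toy example: how well a heat map overlaps an expert outline, and how quickly the model's confidence falls when the highlighted pixels are blanked out. This is a minimal sketch, not the authors' evaluation code. The 3×3 image, the 0.5 threshold, and `toy_model` (a stand‑in that simply averages the top‑left patch) are all assumptions chosen to keep the example self‑contained.

```python
from typing import Callable, List

Grid = List[List[float]]


def iou_with_mask(heatmap: Grid, mask: List[List[int]], threshold: float = 0.5) -> float:
    """Intersection-over-union between the thresholded heat map and an expert mask."""
    inter = union = 0
    for h_row, m_row in zip(heatmap, mask):
        for h, m in zip(h_row, m_row):
            hot = h >= threshold
            inter += int(hot and m == 1)
            union += int(hot or m == 1)
    return inter / union if union else 0.0


def deletion_curve(image: Grid, heatmap: Grid,
                   model: Callable[[Grid], float], baseline: float = 0.0) -> List[float]:
    """Blank pixels from most to least important; record confidence after each step."""
    coords = sorted(
        ((r, c) for r in range(len(image)) for c in range(len(image[0]))),
        key=lambda rc: heatmap[rc[0]][rc[1]],
        reverse=True,
    )
    work = [row[:] for row in image]  # copy so the input image is left untouched
    scores = [model(work)]
    for r, c in coords:
        work[r][c] = baseline
        scores.append(model(work))
    return scores


def toy_model(img: Grid) -> float:
    """Hypothetical classifier: confidence is the mean of the top-left 2x2 patch."""
    return (img[0][0] + img[0][1] + img[1][0] + img[1][1]) / 4.0


image = [[1.0, 1.0, 1.0], [1.0, 1.0, 1.0], [1.0, 1.0, 1.0]]
heatmap = [[0.9, 0.9, 0.1], [0.9, 0.9, 0.1], [0.1, 0.1, 0.1]]
expert_mask = [[1, 1, 0], [1, 1, 0], [0, 0, 0]]

overlap = iou_with_mask(heatmap, expert_mask)
scores = deletion_curve(image, heatmap, toy_model)
print("overlap with expert outline:", overlap)
print("confidence while deleting pixels:", scores)
```

A faithful explanation makes the confidence drop quickly, because the first pixels removed are the ones the model actually relies on. Here the heat map matches the patch the toy model reads, so confidence reaches zero after only four of the nine pixels are blanked.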

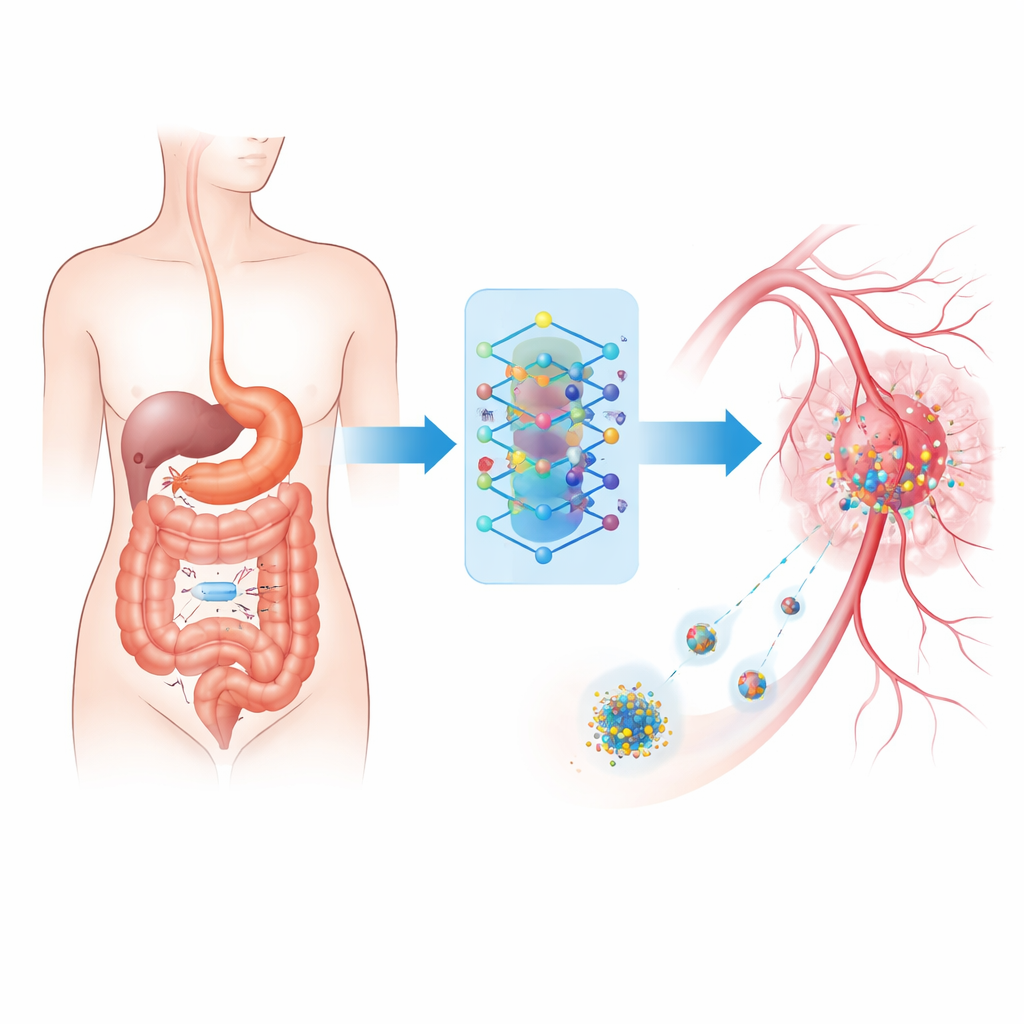

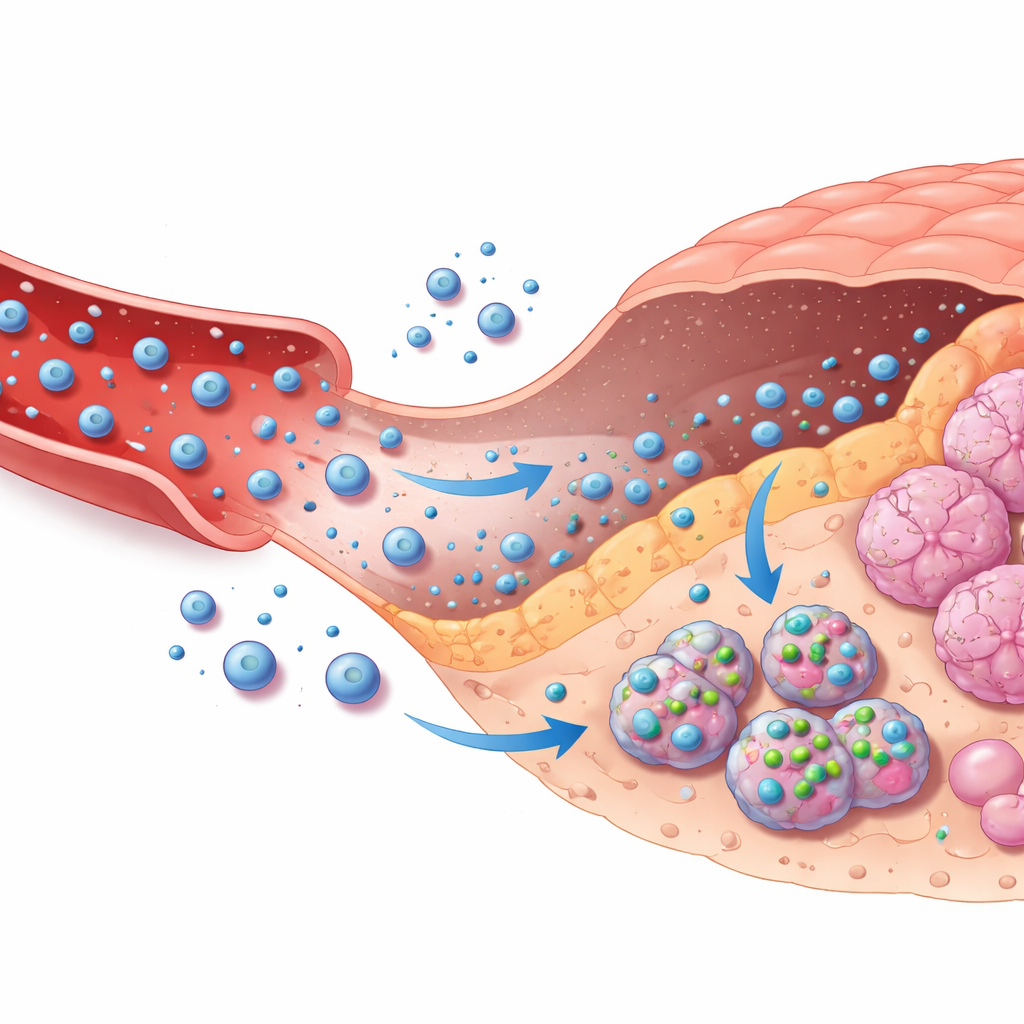

Guiding Drug Carriers Through the Body

The second half of the framework connects these image‑based decisions to targeted chemotherapy delivery. The authors model how a common cancer drug, doxorubicin, travels from an external pump through the bloodstream, leaks into tumor tissue, enters tumor cells, and is eventually cleared. This is captured in a multi‑compartment mathematical model that tracks drug levels in blood, surrounding tissue, and inside cells. Based on the AI’s confidence that a lesion is malignant and how severe it appears, a simple rule system chooses among no treatment, moderate treatment, or intensive treatment, adjusting how quickly drug‑loaded nanoparticles release their cargo and how long infusion lasts. A safety layer constantly checks predicted drug levels inside cells and automatically dials down dosing if a safe ceiling is approached, even if the AI is overly confident.
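
The chain of rule‑based dosing, compartment model, and safety layer can be sketched in a few lines. Everything numeric here is an assumption made for illustration: the confidence cut‑offs, the infusion rates and durations, the rate constants, and the throttle that starts at 50% of the ceiling and stops infusion at 90%. The summary gives none of these values, and the model below is a generic three‑compartment system stepped forward with simple Euler integration rather than the authors' equations.

```python
from typing import Dict, Tuple

# Hypothetical regimens: (infusion rate, infusion duration in hours), arbitrary units.
REGIMENS: Dict[str, Tuple[float, float]] = {
    "none": (0.0, 0.0),
    "moderate": (1.0, 2.0),
    "intensive": (2.0, 4.0),
}


def choose_regimen(p_malignant: float, severity: float) -> str:
    """Illustrative rule table; the 0.5 and 0.8 cut-offs are assumptions."""
    if p_malignant < 0.5:
        return "none"
    if p_malignant >= 0.8 and severity >= 0.5:
        return "intensive"
    return "moderate"


def peak_cell_level(regimen: str, ceiling: float = 1.0, safety: bool = True,
                    dt: float = 0.01, t_end: float = 24.0) -> float:
    """Euler-integrate blood -> tissue -> cell kinetics; return the peak cell level."""
    rate, duration = REGIMENS[regimen]
    # Assumed rate constants (per hour): blood->tissue, tissue->blood,
    # tissue->cell, cell->tissue, and clearance from blood.
    k_bt, k_tb, k_tc, k_ct, k_clear = 0.5, 0.2, 0.4, 0.1, 0.3
    blood = tissue = cell = peak = 0.0
    for step in range(int(t_end / dt)):
        infusion = rate if step * dt < duration else 0.0
        if safety:
            # Throttle linearly once the cell level passes 50% of the ceiling,
            # reaching zero infusion at 90% of the ceiling.
            headroom = (0.9 * ceiling - cell) / (0.4 * ceiling)
            infusion *= min(1.0, max(0.0, headroom))
        d_blood = infusion - (k_bt + k_clear) * blood + k_tb * tissue
        d_tissue = k_bt * blood - (k_tb + k_tc) * tissue + k_ct * cell
        d_cell = k_tc * tissue - k_ct * cell
        blood += dt * d_blood
        tissue += dt * d_tissue
        cell += dt * d_cell
        peak = max(peak, cell)
    return peak


regimen = choose_regimen(p_malignant=0.92, severity=0.7)
print("regimen:", regimen)
print("peak cell level with safety layer:", peak_cell_level(regimen, safety=True))
print("peak cell level without it:", peak_cell_level(regimen, safety=False))
```

The throttle acts below the ceiling rather than at it because drug already in blood and tissue keeps entering cells after the pump slows. A simple margin like this one reduces the peak but does not by itself guarantee the ceiling is never crossed, which is one reason a safety layer would check predicted levels, as the summary describes, rather than only current ones.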

Protecting Privacy and Preventing Misuse

Because the same link that carries images can also carry treatment commands, security is critical. The authors introduce a lightweight privacy scheme that scrambles biomedical signals using a chaotic mathematical map before they travel through the body’s nano‑network, making intercepted data very hard to interpret. On top of this, the wearable gateway authenticates devices and verifies that control signals match expected physical patterns, helping to block fake commands. Simulations show how different privacy settings trade a small loss in detection accuracy for stronger protection, and identify operating points that keep clinical performance high while sharply limiting data leakage. Together with strict dose limits, emergency shut‑off rules, and safety logs, these measures aim to make the system resilient to both accidents and attacks.
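
To give a feel for what scrambling with a chaotic map can look like, the sketch below uses the logistic map, a textbook chaotic system, to generate a byte stream that is XOR‑ed with the signal. The summary does not name the map or the mixing step the authors chose, so both are assumptions here, as are the parameter `r = 3.99`, the burn‑in length, and the example payload. It illustrates the idea only and is not a vetted cipher.

```python
def logistic_keystream(x0: float, r: float, n: int, burn_in: int = 100) -> bytes:
    """Iterate x -> r*x*(1-x) and quantise each state to one byte."""
    x = x0
    for _ in range(burn_in):  # discard early iterates so output depends strongly on x0
        x = r * x * (1.0 - x)
    out = bytearray()
    for _ in range(n):
        x = r * x * (1.0 - x)
        out.append(int(x * 256) % 256)
    return bytes(out)


def scramble(data: bytes, x0: float, r: float = 3.99) -> bytes:
    """XOR the data with the chaotic keystream; applying it twice restores the data."""
    keystream = logistic_keystream(x0, r, len(data))
    return bytes(d ^ k for d, k in zip(data, keystream))


# Hypothetical payload and shared secret (the starting point x0).
signal = b"lesion=1;conf=0.93"
secret_x0 = 0.3141592653

sent = scramble(signal, secret_x0)
received = scramble(sent, secret_x0)
print("sent:", sent.hex())
print("recovered:", received)
```

Because XOR is its own inverse, a receiver that knows the same starting value regenerates the same byte stream and recovers the signal, while the map's sensitivity to its starting value is what makes intercepted data hard to interpret without it.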

What This Could Mean for Patients

Put simply, this study sketches how a “see‑and‑treat” loop could operate inside the body: a swallowable camera finds suspicious spots, an intelligent assistant interprets what it sees with transparent reasoning, and a controlled drug delivery system responds with carefully bounded doses focused on diseased tissue. The study is still theoretical and based on simulations, but it shows that such a closed‑loop design can both hit therapeutic targets and respect strict safety limits, even when the AI makes mistakes or when conditions vary from person to person. If realized in practice, this kind of system could help turn blunt chemotherapy into a far more precise and personalized tool for gastrointestinal disease.

Citation: Kamal, I.R., El-Zoghdy, S.F. & Soliman, R.F. Explainable AI for gastrointestinal lesion surveillance and precision targeted drug delivery. Sci Rep 16, 9807 (2026). https://doi.org/10.1038/s41598-026-40882-z

Keywords: gastrointestinal imaging, explainable AI, targeted drug delivery, nanomedicine, capsule endoscopy