Clear Sky Science

Human versus artificial intelligence in oral pathology diagnosis: a comparative study of ChatGPT, Grok, and MANUS

Why this matters for your next dental visit

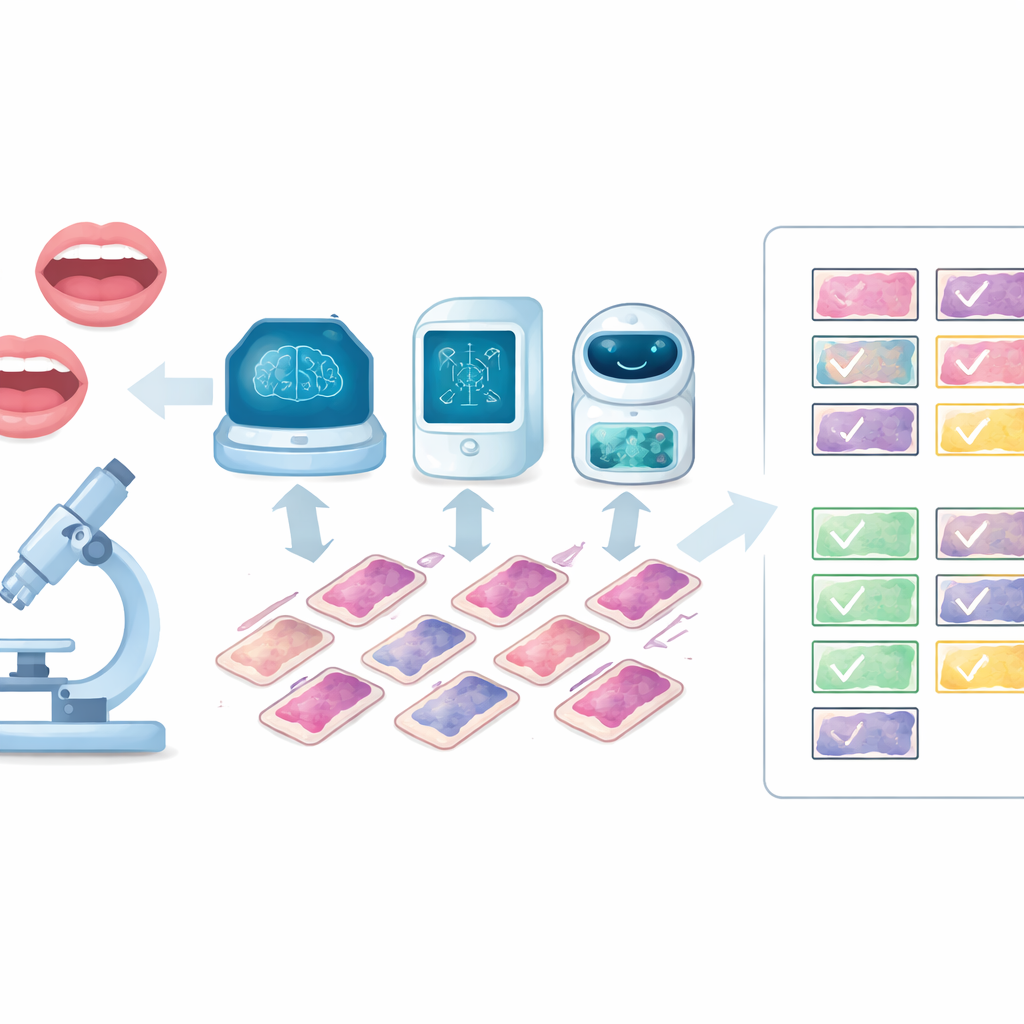

When a dentist finds a suspicious spot in your mouth, the final word on whether it is harmless or dangerous usually comes from a specialist who studies tissue under the microscope. This work is careful and time‑consuming, and in many parts of the world there are not enough experts to do it. This study asks a timely question: can modern artificial intelligence systems help read these microscopic images of mouth tissues with accuracy close to that of human specialists, making diagnosis faster, more consistent, and more widely available?

What the researchers set out to test

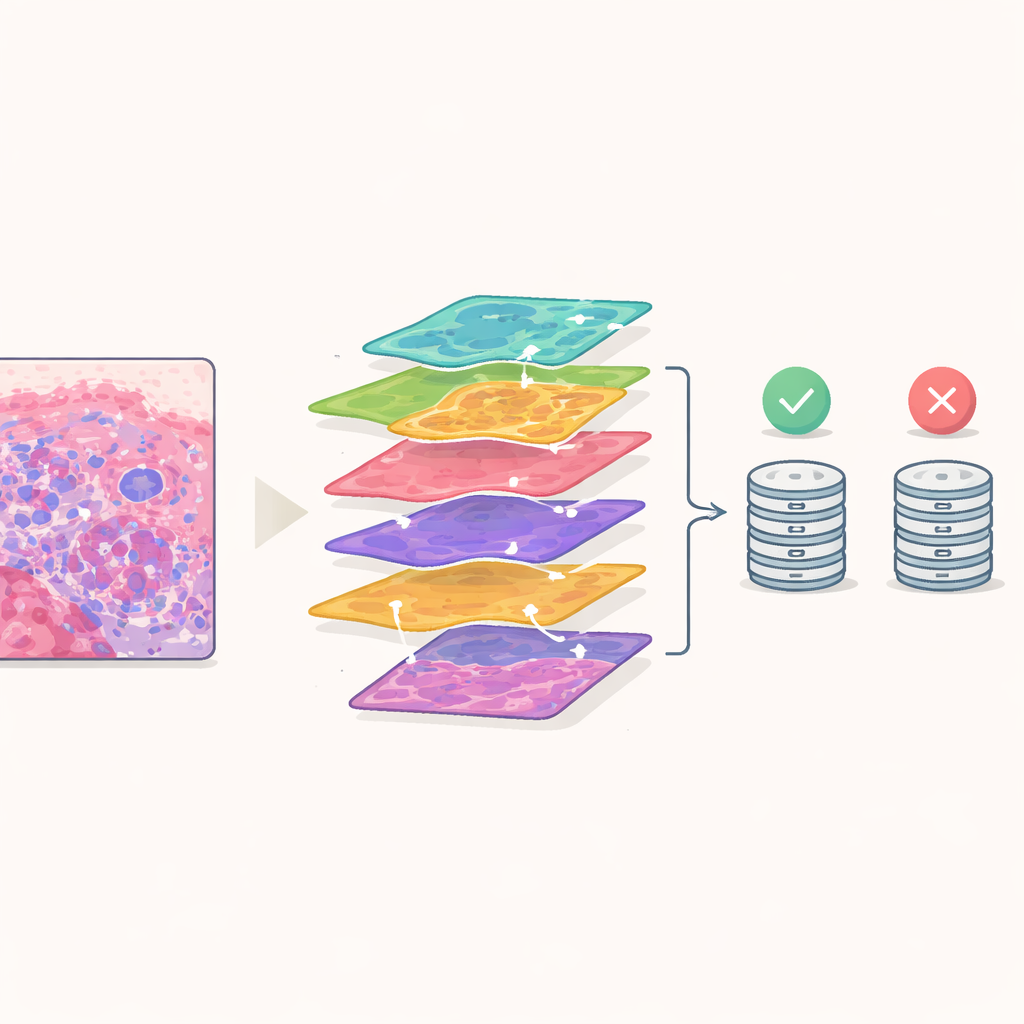

The team focused on three advanced computer programs known for understanding both pictures and language: ChatGPT, Grok, and a medical system called MANUS. Instead of using real patient data, they drew on 100 clear, high‑quality microscope images from a standard textbook of oral diseases. Each image showed a different kind of problem, ranging from early pre‑cancerous changes to tumors, cysts, and reactive growths. Two experienced oral pathologists first agreed on the correct diagnosis for every slide, creating a strong human standard against which to compare the machines.

How the head‑to‑head comparison worked

Each of the 100 slides was shown to all three AI systems using the same short message describing the case and the same digital picture. The models were asked to name the single most likely diagnosis, just as a specialist would when issuing a report. To see whether the systems gave steady answers over time, the researchers repeated the entire process two weeks later with the same slides and instructions. Meanwhile, the two human pathologists independently read the slides without seeing the AI outputs, then discussed any differences until they agreed on a final decision. These expert decisions were treated as the best available answer.

How well the machines and humans performed

All three AI tools performed well, each naming the correct diagnosis in at least 94 of the 100 cases. In the second round of testing, Grok correctly identified 97 out of 100 cases, MANUS 96, and ChatGPT 94. The pair of human specialists scored slightly higher, correctly classifying 98 slides. ChatGPT stood out for giving almost exactly the same answers in both rounds, showing very strong internal consistency, while MANUS and Grok also showed solid and stable performance. When the systems were compared with one another, they agreed on the vast majority of cases, suggesting that different AI designs can still arrive at very similar judgments when given the same high‑quality images.
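To put these scores in perspective, the short sketch below turns the reported second-round counts into accuracy percentages and adds a rough 95% uncertainty range for each. The counts come from the text above; the Wilson score interval is an illustrative choice made here, not a method the study is stated to have used, and it treats each reader's 100 slides as an independent sample, which is a simplification.

```python
import math

# Second-round correct counts out of 100 slides, as reported in the text.
N_SLIDES = 100
correct = {"Pathologists": 98, "Grok": 97, "MANUS": 96, "ChatGPT": 94}


def wilson_interval(k, n, z=1.96):
    """Wilson score interval for k successes in n trials (z=1.96 for ~95%).

    Assumption: this interval is used here purely for illustration.
    """
    p = k / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


for name, k in correct.items():
    low, high = wilson_interval(k, N_SLIDES)
    print(f"{name:12s} accuracy {k / N_SLIDES:.0%}, "
          f"rough 95% range {low:.1%} to {high:.1%}")
```

With only 100 slides, the ranges for all four readers overlap, which fits the summary's point that the differences between the AI tools and the specialists are small.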

How closely AI matched expert thinking

Matching the correct answer is only part of the story; it also matters whether the computers tend to agree with human reasoning patterns. Here, MANUS showed the closest alignment with the pathologists’ decisions, even though it did not outperform Grok on raw accuracy. Grok, although slightly more accurate overall, sometimes arrived at different choices from the experts in the few difficult cases. Most errors for all three systems arose in slides that were visually confusing even to trained eyes, where tissue changes overlapped or looked borderline between two conditions. Still, there were no major performance gaps between the models, and all three showed levels of agreement with humans that the authors describe as moderate to substantial.
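Labels such as "moderate" and "substantial" are commonly attached to Cohen's kappa, a statistic that measures how often two readers agree beyond what chance alone would produce. This summary does not say which agreement statistic the authors used, so the sketch below is only an assumption about how such a figure could be computed. The diagnosis lists are hypothetical toy data, not results from the study.

```python
from collections import Counter


def cohen_kappa(reader_a, reader_b):
    """Cohen's kappa for two equal-length lists of categorical labels."""
    if len(reader_a) != len(reader_b) or not reader_a:
        raise ValueError("need two non-empty lists of equal length")
    n = len(reader_a)
    # Observed agreement: share of cases where both readers gave the same label.
    observed = sum(a == b for a, b in zip(reader_a, reader_b)) / n
    # Chance agreement: expected overlap given how often each reader uses each label.
    freq_a, freq_b = Counter(reader_a), Counter(reader_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / n**2
    if expected == 1.0:
        return 1.0  # both readers used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical toy data: four slides, two possible diagnoses.
pathologists = ["cyst", "cyst", "tumor", "tumor"]
ai_model = ["cyst", "tumor", "tumor", "tumor"]

print(f"kappa = {cohen_kappa(pathologists, ai_model):.2f}")
```

Because kappa discounts agreement that could happen by chance, a model can score well on raw accuracy and still show lower agreement with the experts than another model, which is the kind of pattern described above for Grok and MANUS.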

What this could mean for future care

The study suggests that today’s multimodal AI systems are already capable of serving as reliable helpers in the microscopic diagnosis of oral diseases. They are not a replacement for pathologists, who still had the best overall accuracy and provide essential clinical judgment, but they could act as rapid second readers, support training of new specialists, or offer expert‑level assistance in regions with limited access to dental pathology services. Because the research used carefully chosen textbook images rather than messy real‑world samples, the authors stress that more testing is needed on larger, more varied clinical collections and with added patient information. If these further checks confirm the early promise, AI could make mouth‑disease diagnosis more accurate, consistent, and accessible for patients everywhere.

Citation: Alshammari, A.F., Madfa, A.A. & Anazi, B.A. Human versus artificial intelligence in oral pathology diagnosis: a comparative study of ChatGPT, Grok, and MANUS. Sci Rep 16, 11057 (2026). https://doi.org/10.1038/s41598-026-40792-0

Keywords: oral pathology, digital pathology, artificial intelligence, large language models, histopathology diagnosis