Clear Sky Science

A lightweight convolutional neural network for real-time monitoring of smart mango orchard systems

Smarter Mango Farms for Everyday Life

For people who enjoy mangoes at the table, it can be easy to forget how fragile these fruits are on the tree. Farmers often lose large portions of their harvest to diseases that first appear as tiny spots on leaves. There are far too many leaves, and the spots are often too subtle, for anyone to monitor constantly by eye. This paper presents a new way to help: a compact artificial intelligence (AI) system, called mangoNet, that can watch over orchards in real time using simple cameras and phones, warning farmers about leaf diseases before they spread and ruin the crop.

Why Sick Leaves Threaten a National Treasure

Mangoes are a major source of income in regions like Bangladesh, one of the world’s leading producers. Yet the trees are vulnerable to a range of leaf diseases caused by fungi, bacteria, and insects. These problems usually begin as small, irregular patches on leaves and slowly spread through the tree and then the orchard, reducing both yield and fruit quality. Traditionally, farmers or experts must walk the fields and inspect leaves by eye—a slow, error-prone process that is becoming even harder as climate change and shifting weather patterns make outbreaks more frequent and severe. Detecting these diseases early, before they become visible to non-experts, is critical to protecting livelihoods and food supplies.

Bringing Orchard Eyes into the Digital Age

In recent years, deep-learning tools called convolutional neural networks have transformed how computers recognize patterns in pictures, including plant diseases. However, the most powerful versions of these models are very large and demand strong processors, power-hungry graphics chips, and steady internet access. That makes them difficult to run on inexpensive farm devices such as small cameras and smartphones. The authors of this study set out to design a leaner model that could still be highly accurate but light enough to run directly on “edge” devices in the field, without relying on cloud servers. Their vision is a “smart mango orchard” where low-cost cameras send leaf images to a local AI model that quickly decides whether a leaf is healthy or diseased and sends results to a farmer’s phone.
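To give a rough sense of why a leaner network is easier to run on a phone, the sketch below counts the trainable parameters in a small stack of convolution layers and in a wider stack of the same depth. The channel widths and the 3x3 kernel size are hypothetical values chosen for illustration; they are not taken from the study and do not describe mangoNet itself.

```python
def conv_params(in_ch, out_ch, kernel=3):
    """Weights plus biases of one 2D convolution layer."""
    return kernel * kernel * in_ch * out_ch + out_ch


def stack_params(channels, kernel=3):
    """Total parameters for consecutive conv layers with these channel widths."""
    return sum(conv_params(a, b, kernel) for a, b in zip(channels, channels[1:]))


# Hypothetical channel widths (assumption, not from the paper).
narrow = [3, 16, 32, 64, 128, 256]    # a slim stack of five conv layers
wide = [3, 64, 128, 256, 512, 1024]   # same depth, four times wider

narrow_total = stack_params(narrow)
wide_total = stack_params(wide)
print(narrow_total, wide_total, round(wide_total / narrow_total, 1))
```

Because the parameter count of each layer grows with the product of its input and output channels, making every layer four times wider costs roughly sixteen times as many parameters, which is one reason narrow designs suit low-power devices.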

A Tiny Model That Punches Above Its Weight

The team built mangoNet as a streamlined image-recognition engine. Instead of an intricate maze of layers, it uses a carefully arranged sequence of five main processing stages that first pick up simple shapes like leaf edges and veins and then move on to more complex patterns such as disease spots. The model was trained on two eight-class image collections: a custom dataset of mango leaves gathered from orchards in Bangladesh and a public dataset from another Bangladeshi orchard. Each image went through a preparation pipeline that improved contrast, reduced noise, and augmented the data by rotating and flipping the leaves, so that the model would cope better with real-world variations in lighting, angle, and background. Despite having far fewer adjustable parameters than popular large models, mangoNet achieved an overall accuracy of about 99.6% in cross-validation and around 99% on new, unseen test images, outperforming the six state-of-the-art models it was compared against.
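The preparation steps described above (contrast improvement, noise reduction, and rotation and flip augmentation) can be sketched in a few lines of NumPy. This is a minimal illustration on a single-channel image, not the authors' pipeline: the specific choices here (linear contrast stretching, a 3x3 mean filter, 90-degree rotations) and all function names are assumptions, since this summary does not say which methods the study used.

```python
import numpy as np


def stretch_contrast(img):
    """Linearly rescale pixel values to the full [0, 1] range."""
    img = img.astype(np.float64)
    lo, hi = img.min(), img.max()
    if hi == lo:  # flat image: nothing to stretch
        return np.zeros_like(img)
    return (img - lo) / (hi - lo)


def box_blur(img):
    """Reduce noise with a 3x3 mean filter (edges padded by replication)."""
    padded = np.pad(img, 1, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w), dtype=np.float64)
    for dy in range(3):
        for dx in range(3):
            out += padded[dy:dy + h, dx:dx + w]
    return out / 9.0


def augment(img):
    """Return the image rotated by 0/90/180/270 degrees, plus two flips."""
    rotations = [np.rot90(img, k) for k in range(4)]
    return rotations + [np.fliplr(img), np.flipud(img)]


def prepare(img):
    """Contrast stretch, then denoise, then augment (order is an assumption)."""
    return augment(box_blur(stretch_contrast(img)))


# Tiny synthetic grayscale "leaf" used only to exercise the functions.
leaf = np.arange(16, dtype=np.uint8).reshape(4, 4)
variants = prepare(leaf)
print(len(variants), variants[0].shape)
```

Each input image yields six variants here, so a model trained on them sees the same leaf under several orientations.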

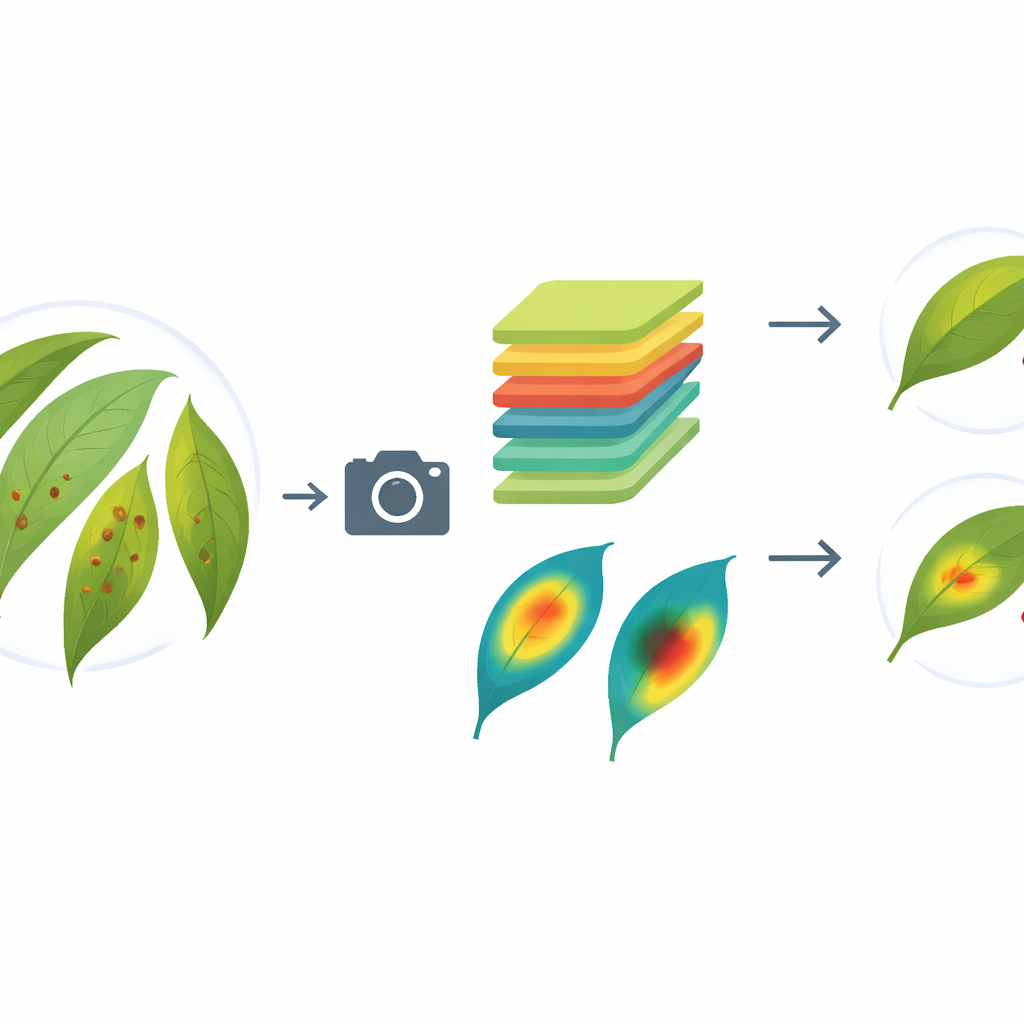

Seeing What the Machine Sees

High accuracy alone is not enough for farmers and agronomists who need to trust why a digital system makes a particular call. To open the “black box,” the researchers used explainable AI methods that highlight which parts of each leaf image drive the model’s decisions. One technique produces colored overlays that show which pixels push the model toward or away from a disease diagnosis; another generates heatmaps that glow over the regions the model considers important. These visual explanations revealed that mangoNet focuses on meaningful features such as lesion color and texture instead of irrelevant areas. The authors also analyzed the brightness patterns in correctly and incorrectly classified leaves, showing that images with clearer, more distinct intensity patterns are easier for the model to classify reliably.
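One simple way to build intuition for such heatmaps is occlusion sensitivity: cover one patch of the image at a time and record how much the model's score drops. This is a stand-in technique chosen for its simplicity, not necessarily either of the methods used in the study, and `toy_disease_score` is a hypothetical placeholder for a trained classifier.

```python
import numpy as np


def toy_disease_score(img):
    """Hypothetical stand-in for a model: darker images score as more diseased."""
    return float(1.0 - img.mean())


def occlusion_heatmap(img, score_fn, patch=2, fill=1.0):
    """Mask each patch in turn and record the resulting drop in score."""
    base = score_fn(img)
    h, w = img.shape
    heat = np.zeros((h, w))
    for y in range(0, h, patch):
        for x in range(0, w, patch):
            masked = img.copy()
            masked[y:y + patch, x:x + patch] = fill  # hide this patch
            heat[y:y + patch, x:x + patch] = base - score_fn(masked)
    return heat


# Synthetic 6x6 "leaf": bright healthy tissue with one dark 2x2 lesion.
leaf = np.ones((6, 6))
leaf[2:4, 2:4] = 0.0

heat = occlusion_heatmap(leaf, toy_disease_score)
print(np.argwhere(heat > 0))
```

In this toy case only the lesion pixels receive a positive value, which mirrors the article's point that a trustworthy model should respond to the lesion and not to irrelevant areas.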

From Lab Prototype to Orchard Helper

To show that their approach can work outside the lab, the authors embedded mangoNet into a simple web interface and an Android mobile app. In their proposed setup, cameras installed in the orchard or used by hand capture leaf images and send them to a small local server or directly to a phone, where mangoNet makes its prediction in a fraction of a second. In tests on an affordable smartphone, the system ran continuously while consuming modest battery power and without overheating the device. Combined with wireless networking, this design could let farmers walk through the orchard, snap photos of suspicious leaves, and receive immediate guidance.

What This Means for Farmers and Consumers

In plain terms, this study shows that it is possible to shrink powerful image-based AI down to a size and speed that fits into everyday farm tools without losing accuracy. For farmers, mangoNet could mean earlier warnings, fewer chemical sprays, and more stable harvests. For consumers and communities, it promises more reliable supplies of high-quality mangoes and a step toward smarter, more sustainable agriculture. While the current system focuses on mango leaves in Bangladesh, the same principles could be adapted to other crops and regions, turning ordinary phones and cameras into accessible disease sentinels for farms around the world.

Citation: Ahad, M.T., Chowdhury, N.H., Ahmed, A. et al. A lightweight convolutional neural network for real-time monitoring of smart mango orchard systems. Sci Rep 16, 11281 (2026). https://doi.org/10.1038/s41598-026-40758-2

Keywords: mango leaf disease, precision agriculture, smart orchard, lightweight deep learning, IoT farming