Clear Sky Science

Opening the black box: explainable AI for automated bioturbation analysis in cores and outcrops

Seeing Hidden Clues in Ancient Mud

When animals burrow through soft seafloor mud, they leave behind a maze of tunnels that can be preserved for millions of years. These subtle patterns, called bioturbation, help geologists read past environments and even find oil and gas reservoirs. But spotting and grading these traces by eye is slow, subjective work. This study shows how a new generation of "explainable" artificial intelligence can not only automate that task, but also reveal which parts of an image drive the computer's decision, turning a black box into a glass one.

Why Burrows in Rock Matter

Many geological decisions still start with simple looking: at cliffs, drill cores, and thin slices of rock. The way layers are arranged, how clean or disturbed they appear, and where burrows cut through them all hint at water depth, energy, oxygen levels, and the creatures that once lived there. Geologists often summarize this disturbance as bioturbation intensity, ranging from untouched layers to completely churned sediment. Those grades are vital for reconstructing ancient shorelines and for judging how easily fluids can move through buried sandstones that may serve as reservoirs. Yet even experts can disagree, especially on borderline cases where bioturbation is moderate rather than clearly weak or strong.

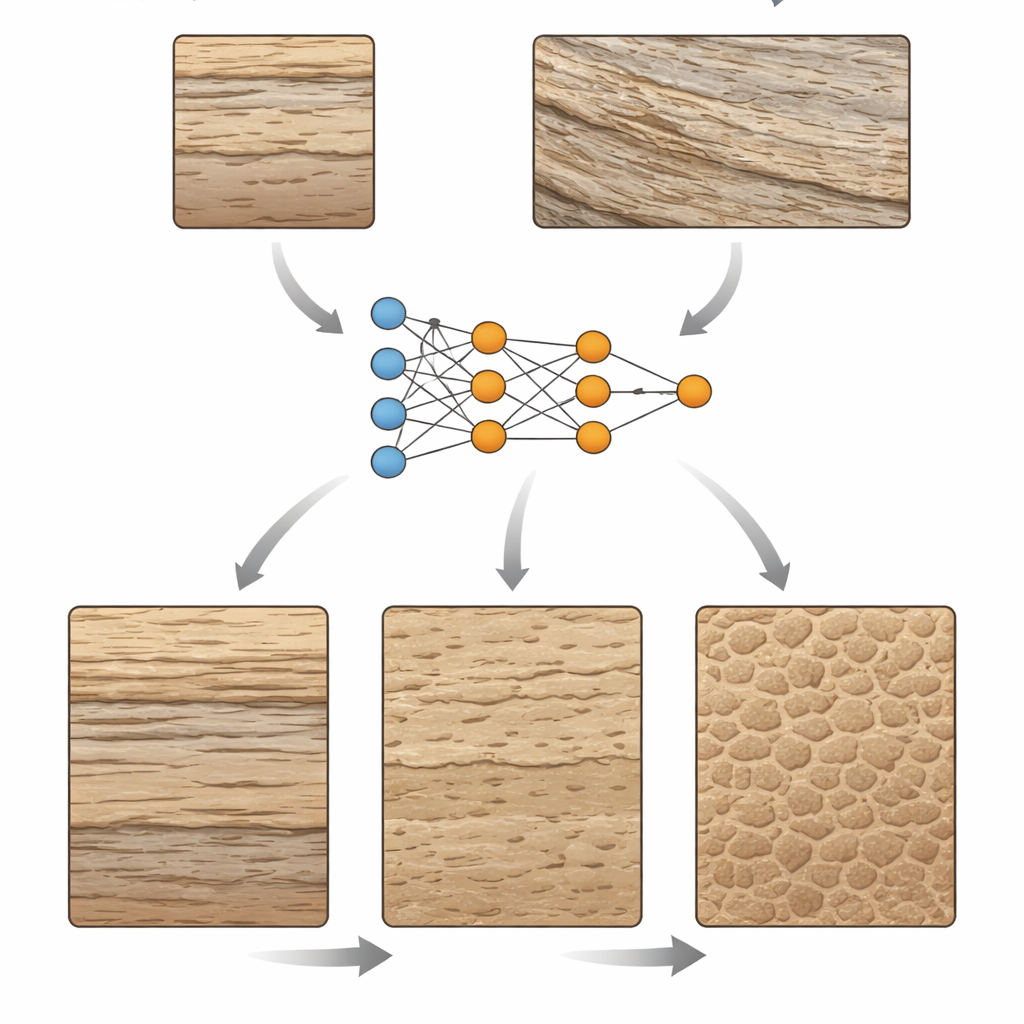

Teaching a Computer to Read Rock Photos

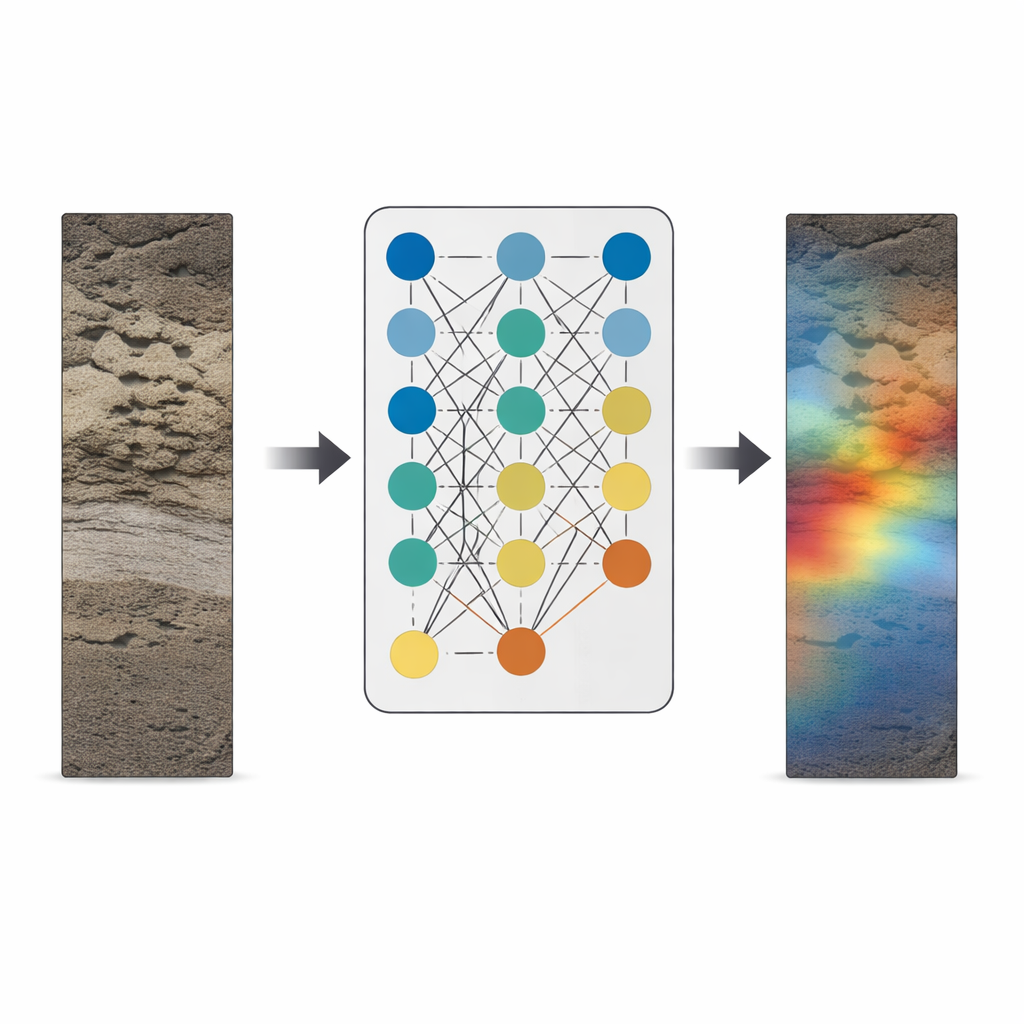

The authors build on an earlier deep-learning model trained to sort photos of sandstone cores and outcrops into three broad levels of bioturbation: unworked, moderately worked, and intensely worked. The model had already shown high accuracy, correctly classifying most of 262 test images. In this study, the focus shifts from "How well does it do?" to "What is it actually seeing?" To answer that, the team uses explainable AI tools that produce heat maps over each image, highlighting the regions that most strongly influenced the model’s choice. Redder areas matter more to the decision; cooler tones matter less. This approach lets geologists compare the machine’s visual attention with that of an experienced ichnologist, an expert in trace fossils.

How the Black Box Lights Up

The method, known as Grad-CAM, taps into the final layers of the neural network, where the image has been distilled into coarse patches of features. When the model decides on a class, Grad-CAM measures how sensitive that decision is to each patch, then projects the result back onto the original photo as a colored overlay. For unbioturbated rocks, the heat maps tend to light up patches of well-preserved layering or massive, undisturbed units, sometimes also highlighting natural fractures or scattered pebbles that stand out against a uniform background. In moderately bioturbated images, the maps usually zero in on individual burrows or zones where layering is partially disrupted, closely matching what human experts would circle on a page. In intensely worked samples, where nearly all original structure is erased, the maps show a speckled pattern spread across the image, mirroring the pervasive churning of the ancient seafloor.
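For readers who want to see the arithmetic behind those overlays, the core Grad-CAM steps can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function names `grad_cam` and `upsample_nearest`, the toy numbers, and the use of plain Python lists are all assumptions made here so the example runs without any dependencies. In practice, the feature maps and gradients would come from the last convolutional layer of a trained network, and the coarse map would usually be smoothed (for example by bilinear interpolation) rather than stretched patch by patch.

```python
def grad_cam(activations, gradients):
    """Return a coarse heat map (H x W, values in [0, 1]) for one class.

    activations[k][i][j]: value of feature map k at patch (i, j).
    gradients[k][i][j]:   sensitivity of the class score to that value.
    Both inputs are hypothetical stand-ins for tensors taken from a network.
    """
    height, width = len(activations[0]), len(activations[0][0])

    # One weight per feature map: the gradient averaged over all patches.
    weights = [
        sum(sum(row) for row in grad_map) / (height * width)
        for grad_map in gradients
    ]

    # Weighted sum of feature maps, keeping only positive evidence (ReLU).
    cam = [
        [
            max(0.0, sum(w * fmap[i][j] for w, fmap in zip(weights, activations)))
            for j in range(width)
        ]
        for i in range(height)
    ]

    # Scale to [0, 1] so the map can be drawn as a colour overlay.
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[value / peak for value in row] for row in cam]
    return cam


def upsample_nearest(cam, factor):
    """Stretch the coarse map toward image size by repeating each patch."""
    return [
        [value for value in row for _ in range(factor)]
        for row in cam
        for _ in range(factor)
    ]


# Toy input: two 2x2 feature maps. The first supports the class (positive
# gradients), the second argues against it (negative gradients).
activations = [
    [[1.0, 0.0], [0.0, 0.0]],
    [[0.0, 0.0], [0.0, 2.0]],
]
gradients = [
    [[1.0, 1.0], [1.0, 1.0]],
    [[-1.0, -1.0], [-1.0, -1.0]],
]

heat = upsample_nearest(grad_cam(activations, gradients), 2)
for row in heat:
    print(row)
```

In this toy case only the top-left patch ends up "hot": the bottom-right patch is strongly activated, but by a feature that lowers the class score, so the ReLU step zeroes it out. That is the sense in which the overlay shows the regions that pushed the model toward its chosen class, rather than every region that contains some detectable feature.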

What the Model Gets Wrong, and Why

Because the explanations are visual, the researchers can probe the model’s mistakes instead of just logging them as errors. Some unworked images were misread as bioturbated when certain clasts or textures happened to resemble burrows. In other cases, tiny or very faint trace fossils were overlooked, especially when they occupied only a small corner of the photo. Very large structures also posed problems: if a single broad burrow filled most of the frame and its internal details were subdued, the model treated it as a featureless mass rather than a trace. Importantly, the heat maps show that the system generally ignores non-geological clutter such as pen marks, saw cuts, and shadows, demonstrating that it has learned to focus on rock fabrics rather than photographic noise. The authors suggest that more varied, higher-quality training images and better coverage of borderline intensity levels would further improve performance.

From Expert Tool to Teaching Aid

By opening the model’s inner workings to inspection, explainable AI helps close the trust gap between geoscientists and algorithms. The study shows that the network’s attention usually aligns with expert judgment, homing in on the same burrows and disturbed zones that a trained ichnologist would emphasize. This transparency makes it easier to adopt automated bioturbation analysis in both research and industry, where consistent, rapid screening of large image libraries can save time and reduce human bias. At the same time, the colorful heat maps double as a teaching tool, guiding students’ eyes toward the subtle textural cues that separate unworked, moderately worked, and thoroughly churned rock. In turning invisible model decisions into visible patterns, the work points toward a future where AI does not replace geological intuition, but sharpens and scales it.

Citation: Ayranci, K., Yildirim, I.E., Yildirim, E.U. et al. Opening the black box: explainable AI for automated bioturbation analysis in cores and outcrops. Sci Rep 16, 9725 (2026). https://doi.org/10.1038/s41598-026-40747-5

Keywords: explainable AI, bioturbation, geological image analysis, deep learning, sedimentary cores