Clear Sky Science

Some new quantitative randomized response models using optional and partial scrambling for sensitive data

Why asking hard questions is so tricky

Many of the most important social questions—about drug use, hidden income, tax evasion, or illegal behavior—are exactly the ones people least want to answer honestly. If they fear judgment or punishment, they may lie or refuse to respond, and that makes survey results misleading. This article presents new ways to design surveys so that people can safely hide their personal answers while still allowing researchers to measure, with high accuracy, how common these sensitive behaviors really are in the population.

How chance can protect your privacy

Since the 1960s, statisticians have used a clever trick known as randomized response. Instead of answering a sensitive question directly, a person uses a random device (such as a coin flip or a spinner) to decide whether to tell the truth or give a disguised answer. Because only the respondent sees the outcome of the random device, no outsider can know whether any particular answer is genuine. Yet because researchers know the probabilities built into the device, they can subtract the expected effect of the disguised answers and still reconstruct accurate averages for the whole group. Later work extended this idea from yes–no questions to numerical ones, such as how many times someone broke the law or how much undeclared income they have.
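To make the idea concrete, here is a minimal simulation sketch of one simple yes/no design. It is not taken from the study: the specific rule (answer truthfully with probability `p_truth`, otherwise report a random "yes" with probability `p_forced_yes`), the function names, and all numbers are illustrative assumptions.

```python
import random

def simulate_responses(true_answers, p_truth, p_forced_yes, rng):
    """Each respondent privately draws from the random device (assumed design)."""
    reported = []
    for truth in true_answers:
        if rng.random() < p_truth:
            reported.append(truth)                        # genuine answer
        else:
            reported.append(rng.random() < p_forced_yes)  # disguised answer
    return reported

def estimate_prevalence(reported, p_truth, p_forced_yes):
    """Invert P(yes) = p_truth * prevalence + (1 - p_truth) * p_forced_yes."""
    yes_rate = sum(reported) / len(reported)
    return (yes_rate - (1 - p_truth) * p_forced_yes) / p_truth

rng = random.Random(0)            # fixed seed so the sketch is repeatable
n = 100_000                       # hypothetical sample size
true_prevalence = 0.2             # hypothetical share with the sensitive trait
true_answers = [rng.random() < true_prevalence for _ in range(n)]

reported = simulate_responses(true_answers, 0.7, 0.5, rng)
estimate = estimate_prevalence(reported, 0.7, 0.5)
print(f"estimated prevalence: {estimate:.3f}")
```

No single reported "yes" reveals the respondent's true status, but the group-level estimate should land close to the simulated prevalence because the device's probabilities are known.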

Letting people choose how much to hide

Traditional privacy methods treat everyone the same: every respondent has their answer scrambled in the same way, even if some people are not especially worried about the question. That “one size fits all” approach can waste information and still fail to make cautious people feel safe. To fix this, researchers developed optional models. In these, each person can either report their true number or send a scrambled version, depending on their comfort level. The new study builds on this idea for numerical data by creating four models that mix direct answers with different types of scrambling—sometimes adding random noise, sometimes multiplying by a random factor, and sometimes using several stages of randomization.
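The optional idea can be sketched in a few lines of simulation. This is an illustration under stated assumptions, not the authors' models M1 to M4: the noise distributions, the 40% share of privacy-seeking respondents, and the names `report` and `wants_privacy` are all hypothetical. The additive noise is chosen with mean zero and the multiplicative factor with mean one, so a plain average of the reports stays unbiased without knowing who scrambled.

```python
import random
import statistics

NOISE_SD = 5.0  # assumed spread of the additive noise (mean zero)

def report(true_value, wants_privacy, mode, rng):
    """Return the true value, or a scrambled one if the respondent opts in."""
    if not wants_privacy:
        return true_value
    if mode == "additive":
        return true_value + rng.gauss(0.0, NOISE_SD)   # add mean-zero noise
    return true_value * rng.uniform(0.5, 1.5)          # multiply by a mean-one factor

rng = random.Random(1)
n = 50_000                                             # hypothetical sample size
true_values = [rng.gammavariate(2.0, 5.0) for _ in range(n)]  # simulated sensitive amounts
wants_privacy = [rng.random() < 0.4 for _ in range(n)]        # assumed 40% choose to scramble

true_mean = statistics.fmean(true_values)
additive_mean = statistics.fmean(
    [report(y, w, "additive", rng) for y, w in zip(true_values, wants_privacy)]
)
multiplicative_mean = statistics.fmean(
    [report(y, w, "multiplicative", rng) for y, w in zip(true_values, wants_privacy)]
)
print(f"true {true_mean:.2f}  additive {additive_mean:.2f}  "
      f"multiplicative {multiplicative_mean:.2f}")
```

In this sketch the researcher never sees `wants_privacy`; only the reported values are averaged.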

Four new ways to balance safety and accuracy

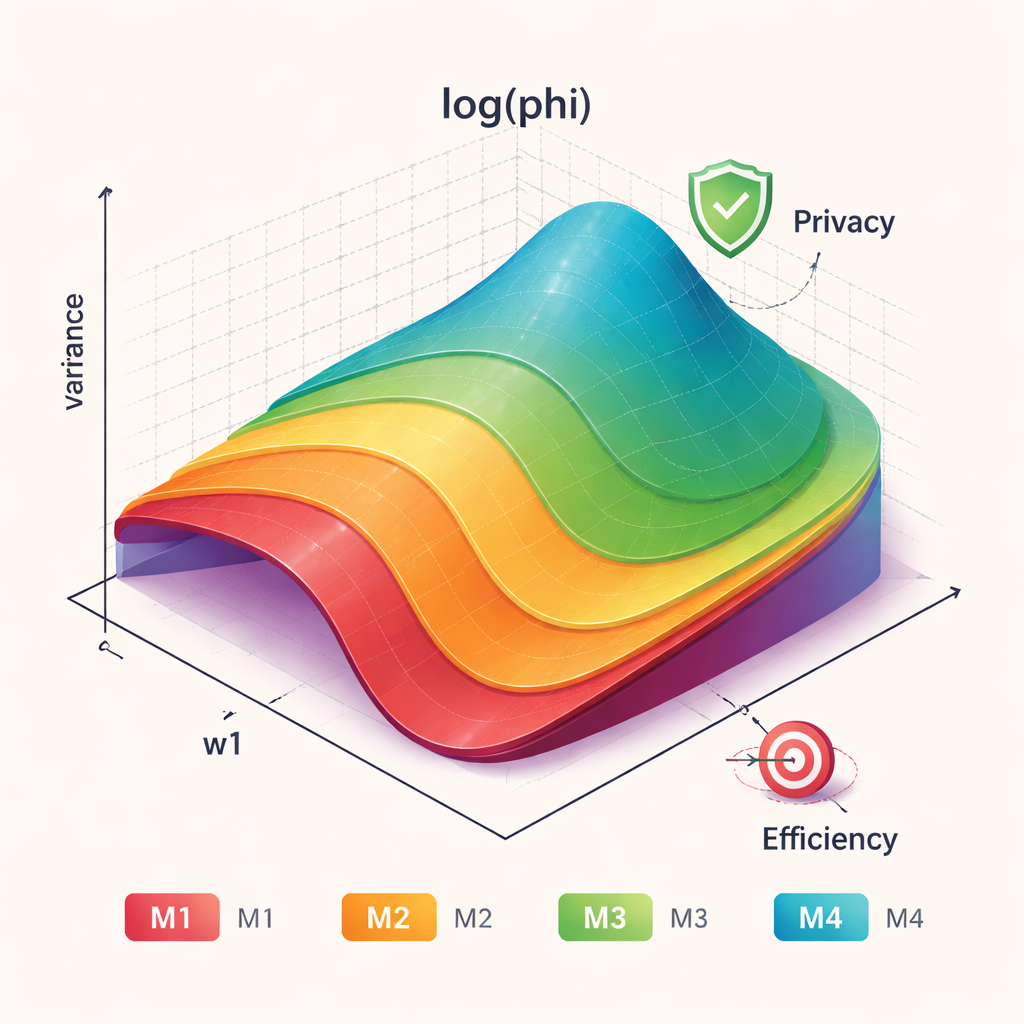

The authors introduce four related models, labeled M1 through M4. All aim to estimate the average level of a sensitive number in the population without bias, meaning that the estimate equals the true value on average over repeated surveys. M1 extends an existing method by adding a second stage of randomization, which increases the uncertainty about any one person’s answer while keeping the overall calculation simple. M2 combines a first step where some people answer directly with a second step that scrambles answers either by multiplication or by adding random noise. M3 and M4 further generalize earlier multi-option designs, giving respondents several possible scrambled forms of their true value. These extra layers of choice and randomness create more “cover” for individuals while still allowing statisticians to untangle the overall pattern.

Measuring both privacy and precision

Because more scrambling can protect people but also blur the data, the crucial question is how to judge the trade-off between privacy and precision. The authors compare their four models to seven well-known earlier methods using several yardsticks. They look at statistical efficiency, which reflects how variable the final estimate is, and at measures of privacy, which capture how far reported values tend to be from a person’s true number. They also use a combined score—called the phi measure—that lets the analyst choose how much weight to give privacy versus efficiency. Across a wide range of settings, the new models, especially M1 and M4, show consistently better combined scores than the older methods.
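The trade-off itself can be illustrated with a small simulation. The sketch below is an assumption-laden example, not the study's comparison: it uses plain additive noise, measures privacy as the average squared gap between reported and true values, and measures precision as the estimated variance of the sample mean. It does not attempt to reproduce the phi measure, whose formula is not given here.

```python
import random
import statistics

def tradeoff(noise_sd, true_values, rng):
    """Return (privacy, estimator variance) for additive mean-zero noise."""
    reported = [y + rng.gauss(0.0, noise_sd) for y in true_values]
    # Privacy yardstick (assumed): mean squared gap between report and truth.
    privacy = statistics.fmean([(z - y) ** 2 for z, y in zip(reported, true_values)])
    # Precision yardstick: estimated variance of the sample mean of the reports.
    estimator_variance = statistics.variance(reported) / len(reported)
    return privacy, estimator_variance

rng = random.Random(2)
true_values = [rng.gammavariate(2.0, 5.0) for _ in range(20_000)]  # simulated data

low_privacy, low_variance = tradeoff(1.0, true_values, rng)     # light scrambling
high_privacy, high_variance = tradeoff(10.0, true_values, rng)  # heavy scrambling
print(f"light: privacy {low_privacy:.1f}, variance {low_variance:.5f}")
print(f"heavy: privacy {high_privacy:.1f}, variance {high_variance:.5f}")
```

Heavier scrambling raises both numbers: individuals are better hidden, but the population estimate becomes more variable.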

Choosing the right tool for a sensitive topic

The study does not claim that one single model is best for all situations. Instead, it offers guidance on when to use each approach. When protecting individual privacy is the top priority, and researchers are willing to accept a bit more statistical noise, models M1 to M3 are recommended because they make it harder to guess any single person’s true answer from the reported value. When survey organizers care more about squeezing as much accuracy as possible from limited data (for example, in small or expensive studies), model M4 tends to perform best. Overall, the message for non-specialists is reassuring: by carefully designing the random rules behind a survey, it is possible to ask very sensitive numerical questions in a way that is both ethically safer for participants and scientifically more reliable.

Citation: Iqbal, S., Hussain, Z. & Omer, T. Some new quantitative randomized response models using optional and partial scrambling for sensitive data. Sci Rep 16, 7734 (2026). https://doi.org/10.1038/s41598-026-40714-0

Keywords: privacy-preserving surveys, randomized response, sensitive data, survey methodology, statistical confidentiality