Clear Sky Science

A thermal resistance prediction model for heterogeneous integrated chips incorporating an AI-based BP neural network

Why cooler chips matter

Our phones, laptops, and data centers keep getting more powerful by squeezing many different kinds of tiny chips into a single package. This "heterogeneous" stacking boosts speed and capability, but it also traps heat in tight spaces. If engineers cannot predict and manage this heat quickly and accurately, devices can slow down, fail early, or waste energy. This paper presents a new way to forecast how well such complex chips shed heat, using an artificial intelligence model that is guided by the basic laws of physics rather than ignoring them.

The heat problem inside modern chips

As chip makers stack multiple processing units, memory, and other components into thick three-dimensional structures, heat can no longer escape easily. Hotspots form where power is dense or materials conduct heat poorly, and tiny interfaces between layers become bottlenecks. Traditional computer simulations based on physics can predict temperatures in great detail, but they are slow—often taking tens of minutes or hours for a single design. Simple formulas are much faster, yet miss the fine structural details that now dominate heat flow. Engineers are stuck between accuracy and speed just when they need to explore thousands of design options.

Blending physical insight with neural networks

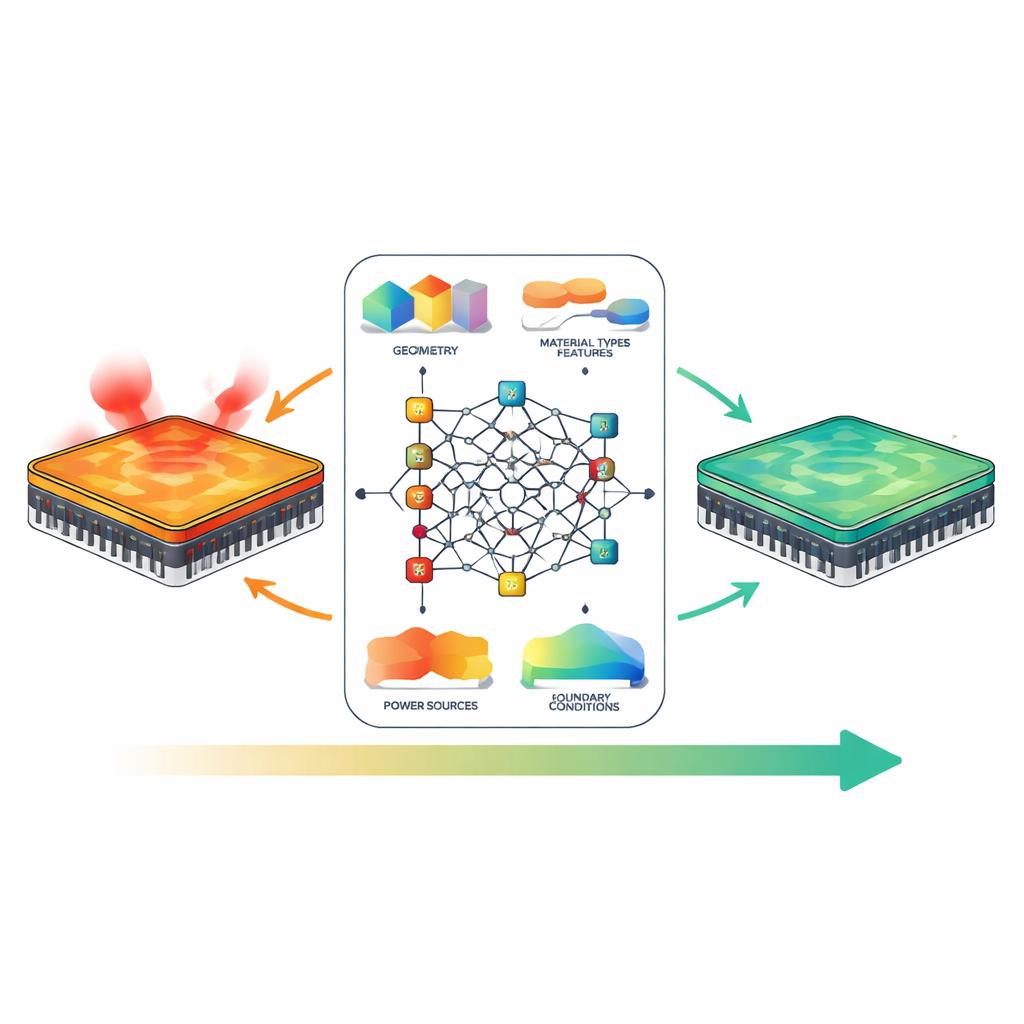

Instead of treating the chip as a mysterious black box, the authors teach a backpropagation (BP) neural network about what really controls heat: geometry, materials, power, and cooling conditions. They build a feature system that describes how many layers the chip has, their thicknesses, how dense the tiny vertical connections are, how well each material conducts heat, how power is spread across the surface, and how strongly the top and bottom are cooled. Some features are direct measurements; others combine basic heat-transfer formulas into meaningful indicators, such as how close an interface is to ideal thermal contact. This physics-guided description feeds the network with information that engineers themselves use when reasoning about heat.
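To make the idea of physics-guided features concrete, here is a minimal sketch in Python. It is not the authors' feature system: the `Layer` class, the feature names, the copper conductivity value, and the simple parallel mixing rule for layers with vertical connections are all assumptions chosen for illustration. It combines raw measurements (layer count, thickness) with indicators derived from basic heat-transfer formulas (conduction resistance t / (k·A), convection resistance 1 / (h·A)).

```python
from dataclasses import dataclass
from typing import Dict, List

COPPER_K = 400.0  # W/(m*K); approximate value for copper vias (assumption)


@dataclass
class Layer:
    """Hypothetical description of one layer in the chip stack."""
    thickness_m: float          # layer thickness in metres
    conductivity_w_mk: float    # bulk thermal conductivity in W/(m*K)
    via_fraction: float = 0.0   # area fraction filled by vertical connections (0..1)


def effective_conductivity(layer: Layer) -> float:
    """Parallel (rule-of-mixtures) estimate for vertical heat flow.

    Assumes the vias and the bulk material conduct side by side.
    """
    f = layer.via_fraction
    return f * COPPER_K + (1.0 - f) * layer.conductivity_w_mk


def layer_resistance(layer: Layer, area_m2: float) -> float:
    """One-dimensional conduction resistance t / (k * A), in K/W."""
    return layer.thickness_m / (effective_conductivity(layer) * area_m2)


def build_features(layers: List[Layer], area_m2: float, power_w: float,
                   h_top: float, h_bottom: float) -> Dict[str, float]:
    """Mix direct measurements with formula-based indicators.

    h_top and h_bottom are convection coefficients in W/(m^2*K).
    """
    return {
        # direct measurements
        "n_layers": float(len(layers)),
        "total_thickness_m": sum(l.thickness_m for l in layers),
        "mean_via_fraction": sum(l.via_fraction for l in layers) / len(layers),
        "power_density_w_m2": power_w / area_m2,
        # indicators derived from basic heat-transfer formulas
        "r_stack_k_per_w": sum(layer_resistance(l, area_m2) for l in layers),
        "r_conv_top_k_per_w": 1.0 / (h_top * area_m2),
        "r_conv_bottom_k_per_w": 1.0 / (h_bottom * area_m2),
    }
```

In this sketch, adding vertical connections to a poorly conducting layer lowers its conduction resistance, which is the kind of trend an engineer would expect a feature to expose before any learning takes place.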

Teaching AI to respect the laws of nature

The neural network architecture is customized so that its behavior stays in line with physical intuition. Inputs are grouped into channels—geometry, materials, power, and boundaries—so that related quantities interact first before mixing. In a key internal layer, the connections are forced to have signs that match known cause-and-effect: increasing thermal conductivity must always decrease predicted resistance, while thickening a poor conductor or raising power must always increase it. This is enforced mathematically so that no amount of data can push the model into violating these trends. Another layer uses an attention mechanism: it automatically learns which combinations of features matter most in each situation, for example when dense vertical connections become crucial for cooling hotspots deep inside the stack.
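One common way to guarantee such sign constraints, sketched below, is to store unconstrained trainable numbers and pass them through a positive function before attaching a fixed sign. This is an illustration of the general technique, not the paper's actual layer: the three feature names, the `SIGNS` table, and the use of softplus are assumptions, and a single "thickness" input stands in for the thickness of a poorly conducting layer.

```python
import math
from typing import Dict


def softplus(x: float) -> float:
    """log(1 + exp(x)), computed in a numerically stable way.

    The result is positive for any moderate input and increases with x.
    """
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


# Required direction of influence on predicted thermal resistance.
# Feature names are hypothetical and chosen for illustration.
SIGNS = {"conductivity": -1.0, "thickness": +1.0, "power": +1.0}


def constrained_weights(raw: Dict[str, float]) -> Dict[str, float]:
    """Map unconstrained parameters to weights whose signs cannot flip.

    Whatever values training assigns to `raw`, softplus keeps the
    magnitude positive and SIGNS fixes the direction.
    """
    return {name: SIGNS[name] * softplus(raw[name]) for name in SIGNS}


def predict(raw: Dict[str, float], features: Dict[str, float],
            bias: float = 0.0) -> float:
    """Single sign-constrained unit followed by an increasing activation.

    Because softplus is increasing, the output inherits the monotonic
    trends encoded in the weights.
    """
    w = constrained_weights(raw)
    z = bias + sum(w[name] * features[name] for name in SIGNS)
    return softplus(z)
```

For example, with `raw = {"conductivity": -3.0, "thickness": 2.0, "power": 0.5}`, raising the conductivity feature lowers the output of `predict`, and this holds for any other choice of `raw` as well, since the constraint is built into the parameterization rather than learned from data.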

Learning several heat signals at once

Rather than predicting only one number, the model learns three related outcomes at the same time: the overall thermal resistance from chip to environment, the single hottest temperature on the chip, and how uneven the temperature field is. Sharing information across these tasks acts as a kind of training discipline, nudging the network toward representations that make sense for all three. To keep it honest, the loss function also includes terms that encourage monotonic behavior and approximate energy conservation, so that the predicted heat leaving the chip matches the heat generated. Trained on 1,500 high-fidelity simulation cases, the physics-informed model outperforms standard neural networks, random forests, and other common methods. It reaches a coefficient of determination (R², where 1 would be a perfect fit) of 0.982 for total thermal resistance and 0.969 for maximum temperature, while cutting mean-squared error nearly in half compared with a conventional neural net.
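A combined loss of this kind might look like the sketch below. The exact terms and weights used in the paper are not given here, so everything in this example is an assumption: the dictionary keys, the default weights, the way monotonicity is checked (by comparing each design with a more conductive copy of itself), and especially the energy term, which assumes total resistance is defined as peak temperature rise divided by input power.

```python
from typing import Dict, Sequence


def mse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean-squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def monotonicity_penalty(r_base: Sequence[float],
                         r_more_conductive: Sequence[float]) -> float:
    """Average squared violation of the conductivity trend.

    Nonzero only when the prediction for a more conductive copy of a
    design is higher than the prediction for the original design.
    """
    return sum(max(0.0, hi_k - base) ** 2
               for base, hi_k in zip(r_base, r_more_conductive)) / len(r_base)


def energy_residual(r_total: Sequence[float], t_max: Sequence[float],
                    t_ambient: float, power: Sequence[float]) -> float:
    """Mean squared relative mismatch between implied heat flow and power.

    ASSUMPTION: R_total = (T_max - T_ambient) / P, so the heat implied by
    the predictions is (T_max - T_ambient) / R_total.
    """
    return sum((((t - t_ambient) / r - p) / p) ** 2
               for r, t, p in zip(r_total, t_max, power)) / len(power)


def total_loss(pred: Dict[str, Sequence[float]],
               target: Dict[str, Sequence[float]],
               r_more_conductive: Sequence[float],
               t_ambient: float, power: Sequence[float],
               task_weights=(1.0, 1.0, 1.0),
               lam_mono: float = 0.1, lam_energy: float = 0.1) -> float:
    """Weighted sum of three task errors plus two physics terms."""
    tasks = ("r_total", "t_max", "nonuniformity")
    data = sum(w * mse(pred[k], target[k])
               for w, k in zip(task_weights, tasks))
    mono = monotonicity_penalty(pred["r_total"], r_more_conductive)
    energy = energy_residual(pred["r_total"], pred["t_max"], t_ambient, power)
    return data + lam_mono * mono + lam_energy * energy
```

The physics terms vanish when predictions follow the expected trends and balance the input power, so they only steer training when the network drifts away from physically sensible behavior.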

From days of simulation to milliseconds of insight

Once trained, the model delivers predictions in just a few thousandths of a second, compared with about 25 minutes per detailed simulation. This speedup of more than 180,000-fold means chip designers could use it interactively inside design software: adjusting layer thicknesses, materials, or power maps and seeing thermal consequences almost instantly. Tests show that the model stays reliable even for more complex structures with many layers and dense connections, because it has learned not only statistical patterns but also broad physical rules. Although it does not yet produce full 3D temperature maps or handle every exotic cooling scheme, the framework can be extended and combined with other tools to fill those gaps.
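The figures quoted above can be cross-checked with simple arithmetic. The short sketch below takes the 25-minute simulation time and the 180,000-fold speedup from the text; the sweep size of 1,000 designs is a hypothetical number chosen for illustration, and simulations are assumed to run one after another.

```python
SIMULATION_SECONDS = 25 * 60   # about 25 minutes per detailed simulation
SPEEDUP = 180_000              # reported lower bound on the speedup
SECONDS_PER_DAY = 86_400


def surrogate_ms_per_case() -> float:
    """Upper bound on the model's time per prediction, in milliseconds."""
    return SIMULATION_SECONDS / SPEEDUP * 1000.0


def sweep_times(n_designs: int):
    """Return (simulation time in days, model time in seconds) for a sweep.

    Assumes simulations run sequentially on a single machine.
    """
    sim_days = n_designs * SIMULATION_SECONDS / SECONDS_PER_DAY
    model_seconds = n_designs * SIMULATION_SECONDS / SPEEDUP
    return sim_days, model_seconds


if __name__ == "__main__":
    print(f"{surrogate_ms_per_case():.1f} ms per prediction")
    days, seconds = sweep_times(1000)
    print(f"1,000 designs: {days:.1f} days simulated vs {seconds:.1f} s predicted")
```

Under these assumptions a prediction takes at most about 8 milliseconds, and a sweep that would occupy a simulator for more than two weeks finishes in a few seconds.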

What this means for everyday technology

In practical terms, this work offers a fast, trustworthy thermal "co-pilot" for chip designers. By fusing physics with machine learning, it avoids the worst pitfalls of black-box AI—nonsense predictions that break basic laws—while still gaining enormous speed over brute-force simulations. As companies push toward ever more compact and powerful chips for consumer devices, data centers, and advanced sensors, such physics-informed models could help keep future electronics cooler, more reliable, and more energy-efficient, ultimately benefiting anyone who depends on digital technology.

Citation: Li, Y., Xu, S. & Guo, L. A thermal resistance prediction model for heterogeneous integrated chips incorporating an AI-based BP neural network. Sci Rep 16, 9781 (2026). https://doi.org/10.1038/s41598-026-40640-1

Keywords: chip thermal management, heterogeneous integration, physics-informed AI, neural network modeling, electronic cooling