Clear Sky Science · en

Evaluation of AI language models in answering pregnancy-related questions assessed by obstetrics specialists

Why this matters for expecting parents

Pregnancy is a time filled with questions, and many people now turn to online tools and chatbots for quick answers. This study asked a simple but important question: when it comes to common concerns in pregnancy, how good are today’s popular artificial intelligence (AI) chatbots at giving clear, accurate, and reassuring information that doctors would trust?

Comparing three digital “answer engines”

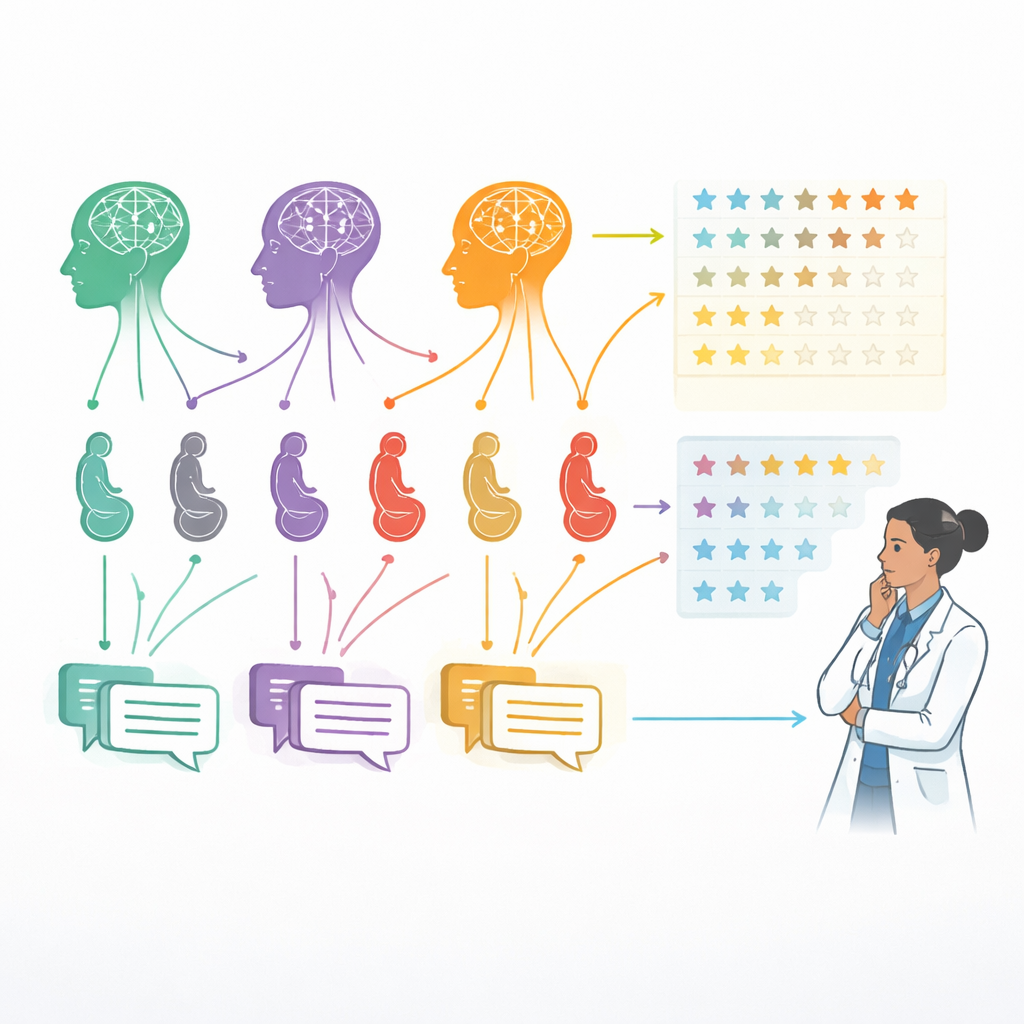

Researchers in Turkey set out to compare three well-known AI language models: an earlier version of ChatGPT (3.5), a newer version (4.0), and Google’s Gemini. They focused on ten everyday questions that pregnant people frequently ask, such as which foods to avoid, whether exercise and sex are safe, what early bleeding might mean, how to interpret fetal movements, and which warning signs need urgent care. Each question was entered into all three systems with the same simple instructions, and the settings were adjusted to reduce randomness so that the answers would be consistent rather than chatty or creative.

Each model produced one answer per question, in Turkish, with no follow-up prompts or editing. The responses were then stripped of any clues that might reveal which system wrote them and shuffled into random order. This way, the reviewers, all specialists in obstetrics and gynecology, judged only what was on the page rather than which system they believed had written it.

How doctors judged the answers

Seventy-five obstetrics specialists, ranging from early-career doctors to very experienced clinicians, scored all 30 anonymized answers (ten questions, each answered by three models). For each response, they used a five-point scale to rate four qualities: accuracy (does it match current medical knowledge and guidelines?), reliability (is the message internally consistent and free from unsafe advice?), patient-friendliness (is the tone suitable and reassuring for non-experts?), and comprehensibility (is the language clear, well-structured, and easy to follow?). In total, the experts provided 9,000 individual ratings (75 specialists × 30 answers × 4 qualities), a large dataset that allowed the researchers to detect meaningful differences among the three AI tools.

The team then used statistical methods designed for rating scales to compare the models. They also checked how consistently different doctors rated the same answers and explored whether more experienced clinicians scored the answers differently from their less experienced colleagues. The goal was not to build a working chatbot but to take a careful snapshot of how these systems behave under controlled conditions when answering realistic pregnancy questions.

Which chatbot did best?

Across the board, the newer ChatGPT-4.0 came out on top. Doctors rated its answers as the most accurate and the most patient-friendly, and it also scored highest on reliability. Gemini generally landed in the middle: its replies were often clear and easy to read, and on comprehensibility it was similar to ChatGPT-4.0, but it tended to be somewhat less detailed and precise. ChatGPT-3.5, the older model, consistently received the lowest scores, often giving shorter or less complete explanations. Interestingly, on basic clarity and structure the three models scored much closer together than on the other measures, suggesting that making text readable may be easier than ensuring that every medical detail is correct and well-balanced.

The doctors’ ratings were highly consistent with one another, indicating that the results were not driven by a few outlier opinions. More experienced clinicians tended to give slightly higher reliability scores overall, but their ratings of how patient-friendly and easy to understand the answers were differed little from those of their less experienced colleagues.

What this means for real-world use

For a layperson, the takeaway is that modern AI tools—especially ChatGPT-4.0—can already provide pregnancy information that many obstetric specialists view as reasonably accurate, safe, and easy to read. That said, the study also underscores an important boundary: even the best-performing system is not a doctor. The researchers did not compare the chatbot answers to official guideline “gold standards,” nor did they test how patients actually interpret or act on the advice. Because the work was done entirely in Turkish, performance in other languages and cultures may differ.

In plain terms, these AI chatbots can be helpful companions for learning about pregnancy, especially when a clinic visit is far away or time with a provider is short. They may support, but should not replace, conversations with healthcare professionals. The authors stress that expert oversight remains essential to catch errors, avoid false reassurance, and make sure that nuanced or high-risk situations get the personal, in‑person care they require.

Citation: Keyif, B., Yurtçu, E., Başbuğ, A. et al. Evaluation of AI language models in answering pregnancy-related questions assessed by obstetrics specialists. Sci Rep 16, 9322 (2026). https://doi.org/10.1038/s41598-026-40609-0

Keywords: pregnancy education, AI chatbots, online health advice, obstetrics, patient information quality