Clear Sky Science

Privacy-preserving federated learning with light-weight attention improved CNNs for automated leukemia detection across distributed medical imaging

Why sharing knowledge without sharing secrets matters

Modern medicine increasingly relies on computers to read medical images, from X-rays to microscope slides. But teaching these systems usually means collecting sensitive patient data in one place, which raises serious privacy concerns. This study shows a way for hospitals to jointly build a system for detecting leukemia from blood images without ever sharing raw patient data, combining privacy protection with diagnostic accuracy close to that of conventional centralized training.

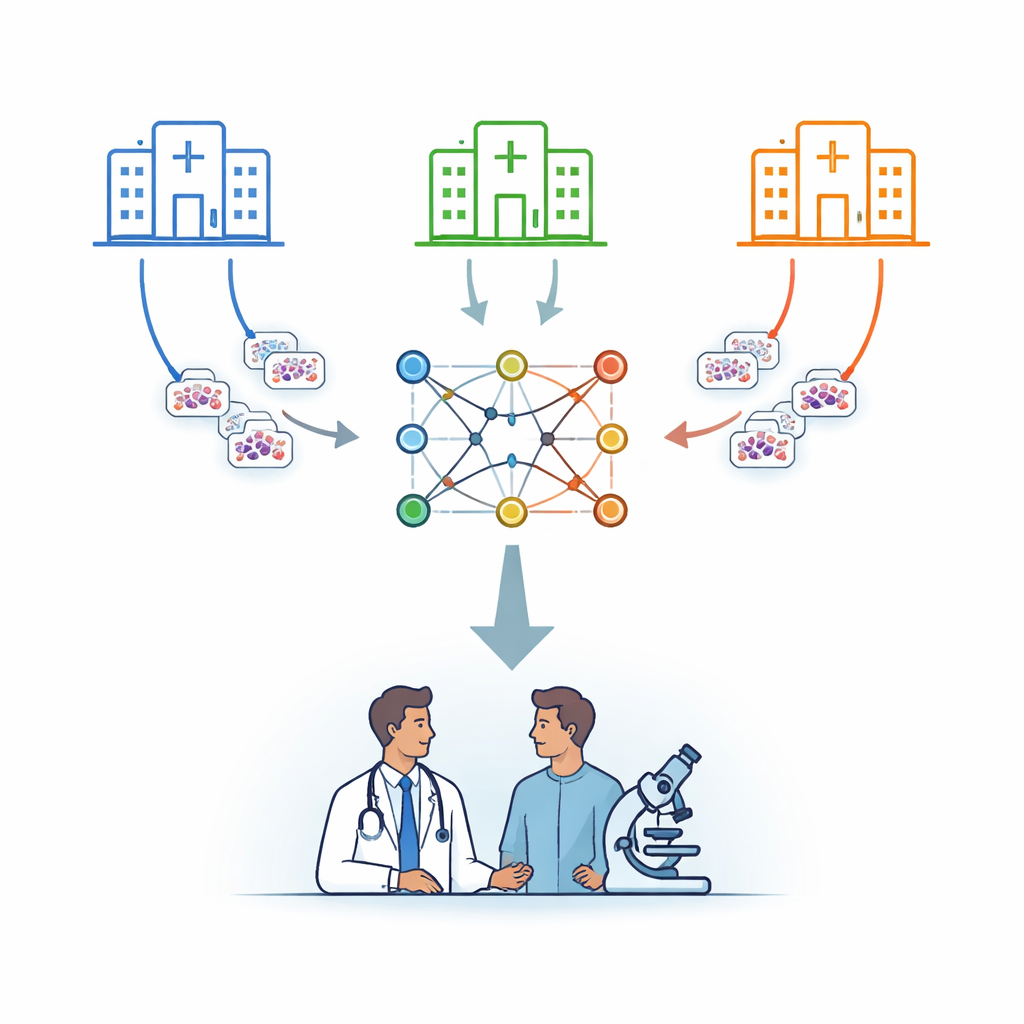

Many hospitals, one shared brain

The researchers focus on leukemia, a cancer of the blood that is diagnosed in part by examining cells under a microscope. Instead of sending patient images to a central server, they use a strategy called federated learning. In this setup, several hospitals each keep their images on site and train a copy of the same computer model locally. Periodically, only the model’s learned parameters are sent to a secure central server, which averages them and sends an improved combined model back. In this way, knowledge is pooled while the underlying images never leave their home institution.
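The averaging step described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes each hospital's model is a dictionary of flat parameter lists and that the server weights each hospital by its number of training images. The names `federated_average`, `hospital_updates`, and `hospital_sizes` are hypothetical.

```python
from typing import Dict, List

Weights = Dict[str, List[float]]  # parameter name -> flat list of values


def federated_average(client_weights: List[Weights], client_sizes: List[int]) -> Weights:
    """Average client parameters, weighting each client by its sample count."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    averaged: Weights = {}
    for name in client_weights[0]:
        length = len(client_weights[0][name])
        averaged[name] = [
            sum(w[name][i] * n for w, n in zip(client_weights, client_sizes)) / total
            for i in range(length)
        ]
    return averaged


# Three hypothetical hospitals, each holding a tiny two-parameter "model".
hospital_updates = [
    {"layer.weight": [1.0, 2.0]},
    {"layer.weight": [3.0, 4.0]},
    {"layer.weight": [5.0, 6.0]},
]
hospital_sizes = [100, 100, 200]  # assumed number of local images per site

global_model = federated_average(hospital_updates, hospital_sizes)
print(global_model)
```

Only the parameter lists travel to the server in this sketch; the images that produced them stay with each hospital. In a real system the combined model would then be sent back to every site for the next round of local training.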

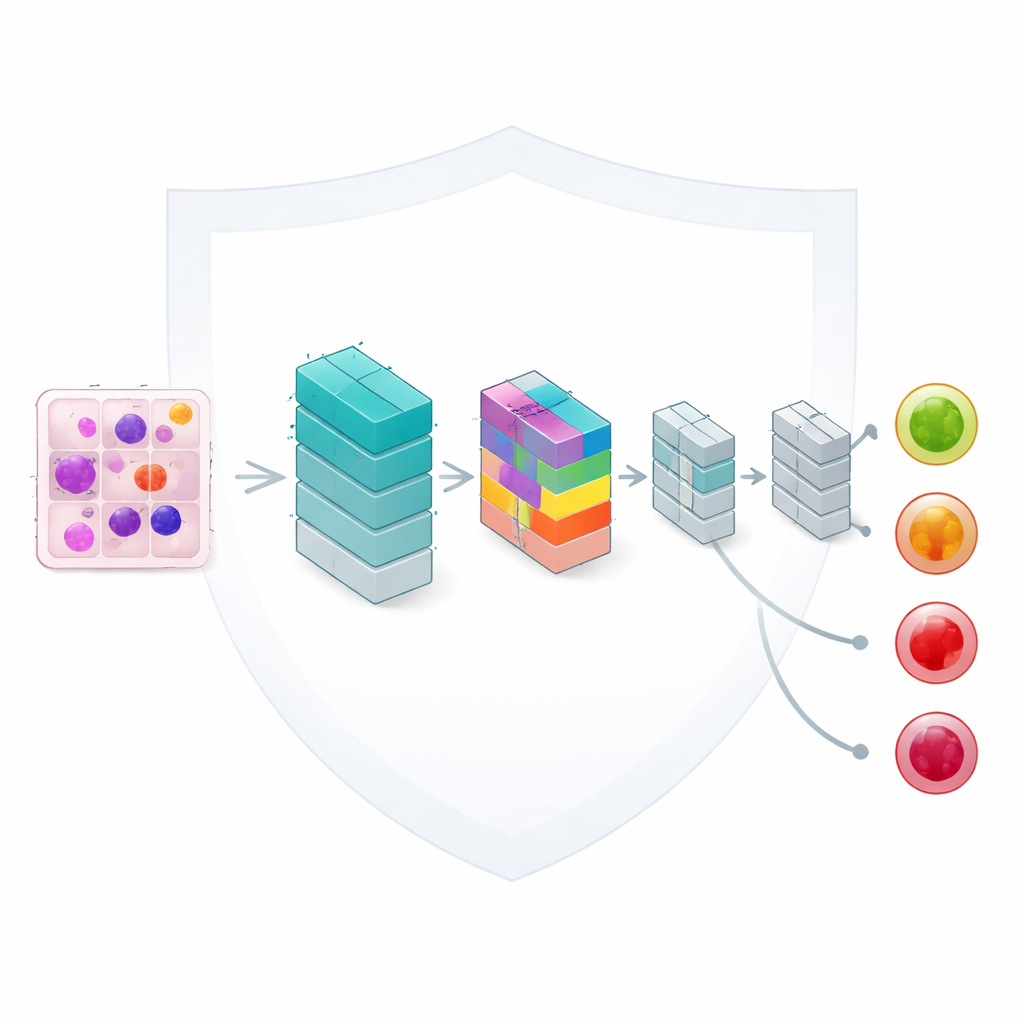

Teaching a small network to pay close attention

At the heart of the framework is a lightweight image-analysis model based on convolutional neural networks, a standard tool for reading pictures. The authors enhance it with a compact “attention” mechanism that helps the network focus on the most informative parts of each blood cell, such as the shape of the nucleus and the texture of the surrounding material. Although the model has only about 33,000 adjustable settings (its parameters), a fraction of the size of many modern networks, it can still distinguish four clinically important categories: benign cells, early changes, pre-leukemic states, and fully developed pro-leukemic cells. Careful design keeps computation fast enough for realistic use in routine laboratories.
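To give a feel for how a compact attention mechanism can work, here is a rough sketch of one common design, channel attention in the squeeze-and-excitation style. The summary does not say which attention variant the authors use, so this choice, along with the function names and the toy weights, is an assumption made purely for illustration.

```python
import math
from typing import List, Tuple

FeatureMap = List[List[List[float]]]  # indexed as [channel][row][column]


def dense(x: List[float], weights: List[List[float]], bias: List[float]) -> List[float]:
    """Fully connected layer: one output per row of `weights`."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def channel_attention(
    fmap: FeatureMap,
    w1: List[List[float]], b1: List[float],
    w2: List[List[float]], b2: List[float],
) -> Tuple[FeatureMap, List[float]]:
    """Rescale each channel by a learned gate between 0 and 1."""
    # Squeeze: summarise every channel by its average activation.
    squeezed = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]
    # Excite: a small bottleneck (ReLU), then one sigmoid gate per channel.
    hidden = [max(0.0, h) for h in dense(squeezed, w1, b1)]
    gates = [1.0 / (1.0 + math.exp(-g)) for g in dense(hidden, w2, b2)]
    # Scale: multiply every value in a channel by that channel's gate.
    scaled = [[[v * g for v in row] for row in ch] for ch, g in zip(fmap, gates)]
    return scaled, gates


def attention_param_count(channels: int, reduction: int) -> int:
    """Weights plus biases of the two dense layers in the block above."""
    hidden = max(channels // reduction, 1)
    return channels * hidden + hidden + hidden * channels + channels


# Toy input: two 2x2 channels and hand-picked (not learned) weights.
fmap = [
    [[1.0, 1.0], [1.0, 1.0]],
    [[3.0, 3.0], [3.0, 3.0]],
]
w1, b1 = [[1.0, 1.0]], [0.0]
w2, b2 = [[1.0], [-1.0]], [0.0, 0.0]
scaled, gates = channel_attention(fmap, w1, b1, w2, b2)
print(gates, attention_param_count(32, 8))
```

With these toy weights the first channel is kept almost intact and the second is almost suppressed. The helper `attention_param_count` shows why such a block stays small: for 32 channels and a reduction factor of 8 it adds only a few hundred parameters.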

Fair learning from uneven and scattered data

In real health systems, hospitals do not see the same mix of patients. One center might see mostly early-stage disease, another more advanced cases. The team deliberately mirrors this real-world imbalance by splitting a dataset of 3,256 blood smear images across multiple simulated hospitals with differing proportions of each leukemia stage. They then analyze how this uneven distribution affects learning, using statistical measures to quantify how different each hospital’s data is and how similar their final accuracies are. A weighted averaging scheme ensures that sites with more data have a proportionate influence while still keeping performance differences between sites very small.
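The sketch below shows one way an uneven split could be simulated and measured. The class shares in `site_mix` and the toy label list are invented for illustration and are not the study's actual partition. The summary also does not name the statistical measures the authors used, so Jensen-Shannon divergence is an assumed stand-in.

```python
import math
import random
from collections import Counter
from typing import Dict, List, Sequence

CLASSES = ["benign", "early", "pre", "pro"]


def split_by_proportions(
    labels: Sequence[str], site_mix: Dict[str, List[float]], seed: int = 0
) -> List[List[int]]:
    """Assign each sample to a site; site_mix[c] gives class c's share per site."""
    rng = random.Random(seed)
    n_sites = len(next(iter(site_mix.values())))
    sites: List[List[int]] = [[] for _ in range(n_sites)]
    for idx, label in enumerate(labels):
        site = rng.choices(range(n_sites), weights=site_mix[label])[0]
        sites[site].append(idx)
    return sites


def label_distribution(labels: Sequence[str], indices: Sequence[int]) -> List[float]:
    """Fraction of each class among the given sample indices."""
    counts = Counter(labels[i] for i in indices)
    total = max(len(indices), 1)
    return [counts[c] / total for c in CLASSES]


def js_divergence(p: List[float], q: List[float]) -> float:
    """Jensen-Shannon divergence in bits: 0 means identical, 1 means disjoint."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x: List[float], y: List[float]) -> float:
        return sum(a * math.log2(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


# Toy data: 400 labels per class (hypothetical, not the real dataset).
toy_labels = [c for c in CLASSES for _ in range(400)]
site_mix = {
    "benign": [0.7, 0.2, 0.1],
    "early": [0.2, 0.6, 0.2],
    "pre": [0.1, 0.2, 0.7],
    "pro": [0.3, 0.3, 0.4],
}
sites = split_by_proportions(toy_labels, site_mix, seed=0)
overall = label_distribution(toy_labels, range(len(toy_labels)))
skew = [js_divergence(label_distribution(toy_labels, s), overall) for s in sites]
print([len(s) for s in sites], skew)
```

Each entry of `skew` says how far one simulated hospital's class mix sits from the pooled mix. Larger values mean a more lopsided site, which is the kind of imbalance federated training has to cope with.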

Accuracy that rivals centralized training

Despite keeping data fragmented and unevenly distributed, the shared model learns to classify leukemia stages with impressive skill. With three simulated hospitals, the global model reaches about 95.7% accuracy on held-out test images; with five hospitals and more training rounds, accuracy rises to roughly 96.6%. Malignant categories, those that represent pre-leukemic and more advanced disease, are recognized especially well, with near-perfect scores in some cases. The more challenging benign category, which is underrepresented, performs slightly worse, highlighting the need for better balance or targeted techniques for rare but important classes. Still, the federated system comes close to the accuracy achieved when all data are centralized, while retaining the privacy benefits of local storage.

Making the machine’s reasoning visible and trustworthy

To build trust with clinicians, the authors go beyond raw accuracy and examine how the model makes its decisions. They generate visual overlays that highlight which parts of each cell image most influenced the outcome. These maps reveal that the model concentrates on medically meaningful features, such as abnormal nuclear shapes in more dangerous stages of leukemia, and shows more diffuse patterns for benign cells. The team also studies how confident the model is in its predictions and finds that correct answers tend to have high confidence, especially for malignant stages, suggesting a good match between the system’s certainty and its reliability.
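The link between confidence and correctness can be checked with simple bookkeeping. The sketch below compares average confidence on correct and incorrect predictions and computes an expected calibration error. This metric is an assumed example, since the summary does not state which calibration measure the authors used, and the probabilities are made up.

```python
from typing import List, Sequence, Tuple


def predictions_with_confidence(probabilities: Sequence[Sequence[float]]) -> List[Tuple[int, float]]:
    """Return (predicted class index, probability of that class) per sample."""
    out = []
    for probs in probabilities:
        pred = max(range(len(probs)), key=lambda k: probs[k])
        out.append((pred, probs[pred]))
    return out


def mean_confidence(probabilities, true_labels, want_correct: bool) -> float:
    """Average confidence over the correct (or incorrect) predictions."""
    picked = [
        conf
        for (pred, conf), truth in zip(predictions_with_confidence(probabilities), true_labels)
        if (pred == truth) == want_correct
    ]
    return sum(picked) / len(picked) if picked else float("nan")


def expected_calibration_error(probabilities, true_labels, n_bins: int = 5) -> float:
    """Weighted average gap between confidence and accuracy across bins."""
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for (pred, conf), truth in zip(predictions_with_confidence(probabilities), true_labels):
        b = min(int(conf * n_bins), n_bins - 1)
        bins[b].append((conf, pred == truth))
    n = len(true_labels)
    ece = 0.0
    for items in bins:
        if items:
            avg_conf = sum(c for c, _ in items) / len(items)
            accuracy = sum(1 for _, ok in items if ok) / len(items)
            ece += len(items) / n * abs(avg_conf - accuracy)
    return ece


# Made-up softmax outputs for four images over four classes.
probs = [
    [0.90, 0.05, 0.03, 0.02],
    [0.10, 0.80, 0.05, 0.05],
    [0.40, 0.30, 0.20, 0.10],
    [0.02, 0.03, 0.05, 0.90],
]
truth = [0, 1, 1, 3]
print(mean_confidence(probs, truth, True), mean_confidence(probs, truth, False))
print(expected_calibration_error(probs, truth))
```

In this toy example the three correct predictions carry high confidence and the single mistake carries low confidence, which is the pattern the authors report for their model. A lower calibration error means the stated confidence tracks the actual hit rate more closely.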

What this means for future cancer diagnosis

For non-specialists, the key message is that it is now possible for hospitals to collaborate on smarter cancer diagnostics without handing over their patients’ images. This work demonstrates that a compact, carefully designed model trained through federated learning can approach the accuracy of traditional pooled-data methods while respecting privacy rules and practical limits on computing power and network traffic. With further work to better handle underrepresented cell types and reduce communication costs, similar privacy-preserving systems could be extended to other cancers and imaging tests, helping clinicians worldwide benefit from shared experience without exposing individual patients.

Citation: Awan, M.Z., Khan, N.A., Strakos, P. et al. Privacy-preserving federated learning with light-weight attention improved CNNs for automated leukemia detection across distributed medical imaging. Sci Rep 16, 9768 (2026). https://doi.org/10.1038/s41598-026-40581-9

Keywords: federated learning, leukemia imaging, medical AI privacy, attention-based CNN, digital pathology