Clear Sky Science

Explainable AI in education: integrating educational domain knowledge into the deep learning model for improved student performance prediction

Why Smarter Predictions About Students Matter

Schools are increasingly turning to artificial intelligence to spot which students might struggle and who may need extra support. But when these systems behave like sealed black boxes, they can highlight odd patterns—such as saying a teenager’s love life matters more than their study time—leaving teachers and parents unsure whether to trust the results. This paper shows how to build a student performance prediction system that not only makes better forecasts of math grades, but also "reasons" in ways that fit what decades of education research already tell us.

From Raw Data to Risk Alerts

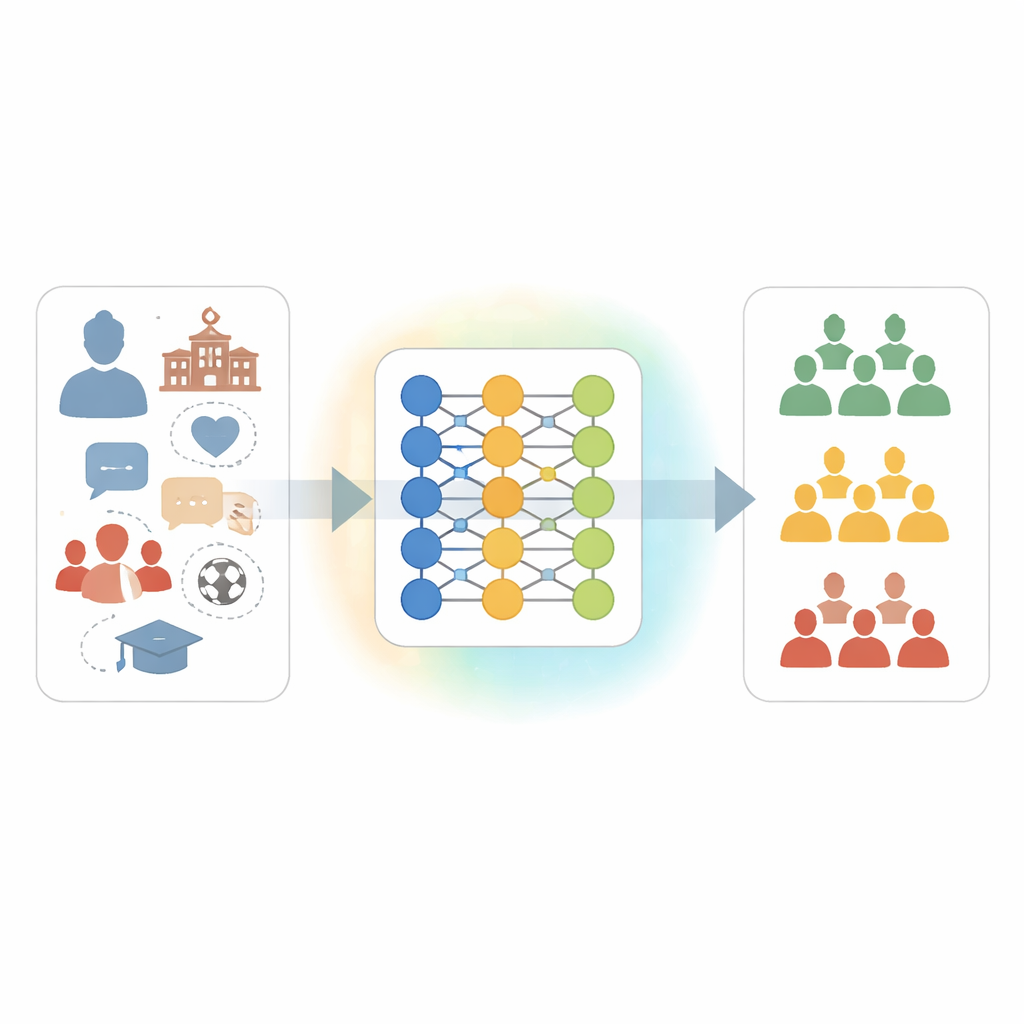

The researchers worked with a well-known public dataset of 395 Portuguese high school students, each described by 30 pieces of information. These ranged from basic demographics (age, sex, family size) to school-related details (study time, absences, extra classes) and aspects of social life and well-being (family relationships, free time, going out with friends). The goal was to predict each student’s final math grade and then group them into three practical categories: likely to fail, on track, or performing excellently. A deep learning model called an artificial neural network (ANN) was trained to pick up subtle patterns across all these factors.
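The grouping step can be sketched in a few lines of Python. This is an illustration only: the 0 to 20 grade scale, the cut-offs `pass_mark=10` and `excellent_mark=16`, and the function name `grade_band` are assumptions, since the summary above does not say where the authors drew the lines between the three categories.

```python
def grade_band(final_grade, pass_mark=10, excellent_mark=16):
    """Map a final grade to one of three bands.

    Assumes grades run from 0 to 20; both thresholds are hypothetical
    defaults, not values taken from the study.
    """
    if not 0 <= final_grade <= 20:
        raise ValueError("final_grade must be between 0 and 20")
    if final_grade < pass_mark:
        return "fail"
    if final_grade < excellent_mark:
        return "on_track"
    return "excellent"


# Example: band a handful of made-up final grades.
sample_grades = [4, 10, 13, 16, 19]
sample_bands = [grade_band(g) for g in sample_grades]
print(sample_bands)
```

Keeping the thresholds as parameters makes it easy to test how sensitive the three-way split is to where the boundaries are placed.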

When the Black Box Gets It Wrong

Although the original ANN reached respectable accuracy, closer inspection revealed something troubling. Using an explanation technique known as SHAP (SHapley Additive exPlanations), which scores how much each input feature pushes a prediction up or down, the authors examined which features the model relied on most. Some of its strongest signals clashed with well-established educational findings. For example, the school a student attended, their romantic status, and how often they went out appeared unusually influential, while research-backed factors such as parents’ education, the mother’s job, early nursery attendance, family size, and weekly study time were given surprisingly little weight. These mismatches suggested that the ANN was latching onto quirks of this particular dataset rather than relationships that educators consider meaningful or fair.
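To give a feel for what a SHAP-style explanation computes, the sketch below calculates exact Shapley values by brute force for a tiny three-feature model. It is not the SHAP library and not the authors' network: `toy_model`, its coefficients, and the `student` and `baseline` values are all invented for illustration, and full enumeration like this is only practical for a handful of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley attribution of predict(x) - predict(baseline) per feature."""
    n = len(x)
    values = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                # Coalition features take the student's value; the rest stay at baseline.
                without_i = [x[j] if j in coalition else baseline[j] for j in range(n)]
                with_i = list(without_i)
                with_i[i] = x[i]
                values[i] += weight * (predict(with_i) - predict(without_i))
    return values


def toy_model(features):
    # Hypothetical linear grade model, used only to make the example checkable.
    study_time, absences, going_out = features
    return 10.0 + 2.0 * study_time - 0.5 * absences - 1.0 * going_out


student = [3.0, 4.0, 2.0]    # made-up student
baseline = [2.0, 6.0, 3.0]   # made-up "typical" student
attributions = shapley_values(toy_model, student, baseline)
print(attributions)
```

The attributions always sum to the gap between the student's prediction and the baseline prediction, which is what lets analysts rank features by how much they actually drive a forecast.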

Teaching the Network What Educators Already Know

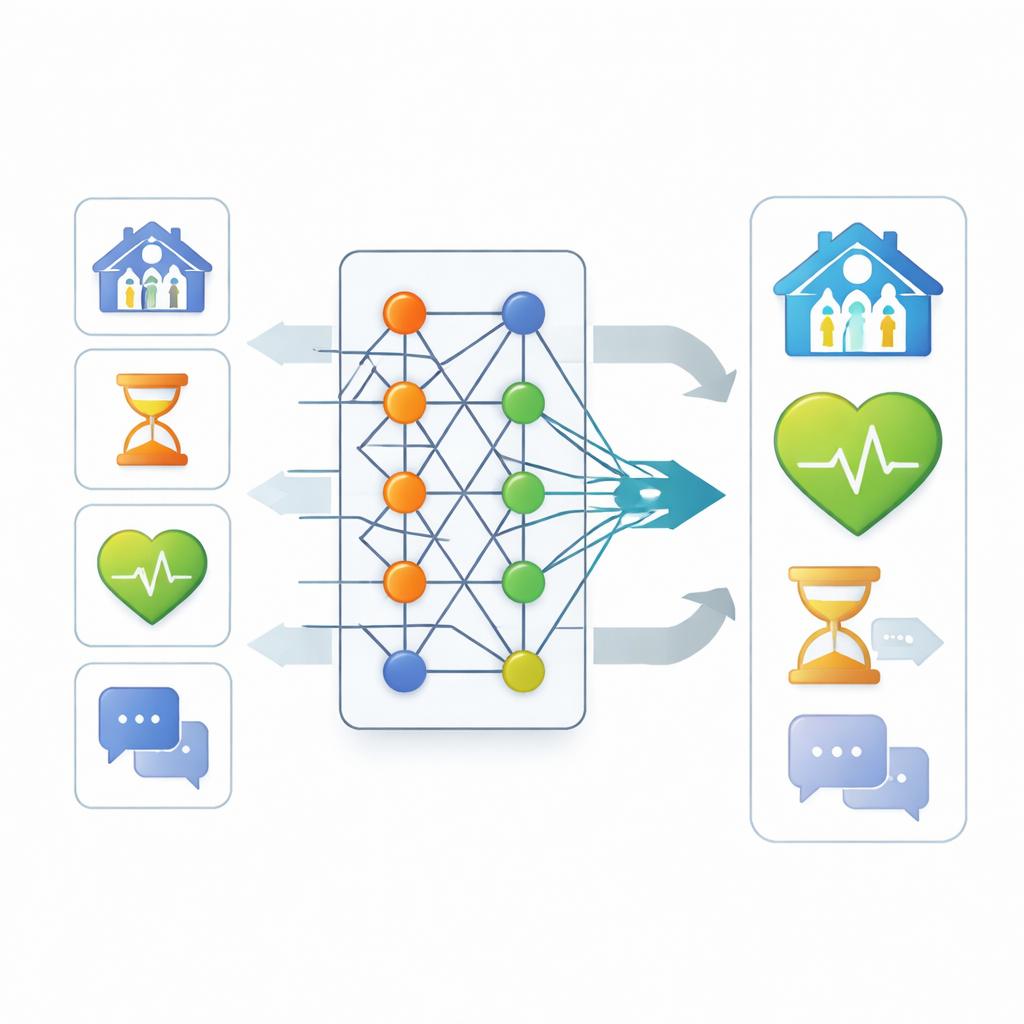

To realign the model with educational insight, the authors proposed a new training strategy called the Students’ Performance Prediction Explanation (SPPE) algorithm. First, they combed through the education literature to sort features into two rough groups: those consistently linked to achievement (like study time, parents’ education, and higher-education aspirations) and those that are weaker or more uncertain predictors (such as romantic status or generic family relationship ratings). During training, SPPE nudges the neural network to increase its reliance on the first group and tone down the second. It does this by monitoring how much each feature contributes to predictions and adding a gentle penalty whenever the network’s learned importance pattern drifts away from this domain knowledge.
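The idea of a penalty that grows when learned importance drifts from domain knowledge can be sketched as follows. This is a minimal illustration under stated assumptions, not the SPPE formula from the paper: the function names, the feature names, the way importance shares are normalized, and the `strength` parameter are all hypothetical.

```python
def knowledge_penalty(importances, strong, weak):
    """Penalty that is 0 only when all importance sits on 'strong' features.

    importances: dict of feature name -> importance score (any sign).
    strong / weak: sets of feature names from the domain-knowledge grouping.
    """
    total = sum(abs(v) for v in importances.values())
    if total == 0:
        return 0.0
    share = {name: abs(v) / total for name, v in importances.items()}
    weak_share = sum(share[name] for name in weak if name in share)
    strong_share = sum(share[name] for name in strong if name in share)
    # Penalize reliance on weak features and lack of reliance on strong ones.
    return weak_share + (1.0 - strong_share)


def combined_loss(prediction_loss, importances, strong, weak, strength=0.1):
    """Prediction loss plus a small, tunable domain-knowledge penalty."""
    return prediction_loss + strength * knowledge_penalty(importances, strong, weak)


# Hypothetical feature grouping and importance patterns.
strong = {"study_time", "parent_education"}
weak = {"romantic_status", "going_out"}
misaligned = {"study_time": 0.1, "parent_education": 0.1,
              "romantic_status": 0.6, "going_out": 0.2}
aligned = {"study_time": 0.5, "parent_education": 0.3,
           "romantic_status": 0.1, "going_out": 0.1}

print(combined_loss(1.0, misaligned, strong, weak))
print(combined_loss(1.0, aligned, strong, weak))
```

With the same prediction loss, the misaligned pattern yields the larger total, so an optimizer minimizing it would be nudged toward the aligned pattern. In a real training loop the importance scores would have to be computed from the network in a differentiable way, which this sketch does not attempt.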

Clearer Explanations and Sharper Predictions

After the SPPE adjustments, the model’s internal reasoning changed in ways that better matched educator expectations. Study time, parents’ background, family size, and early schooling moved up the importance ladder, while school identity, going out, and romantic status became less dominant. Just as important, this realignment did not trade away accuracy—it boosted it. When predicting which of the three grade bands a student would fall into, the improved network correctly classified about two-thirds of students, compared with little more than one-third for the original model. Standard measures of precision, recall, and a blended F1 score all rose substantially, and statistical tests confirmed that the gains were unlikely to be due to chance. The authors also showed that the same SPPE strategy improved several other neural network designs, suggesting the approach is robust rather than a one-off trick.
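For readers unfamiliar with these measures, the sketch below computes accuracy, precision, recall, and F1 for a three-band classification. The labels and predictions are made up, and averaging the per-class scores equally (macro averaging) is an assumption; the summary above does not state which averaging the authors used.

```python
def per_class_scores(y_true, y_pred, label):
    """Precision, recall, and F1 for one class, treating it as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def macro_report(y_true, y_pred, labels):
    """Accuracy plus macro-averaged precision, recall, and F1."""
    scores = [per_class_scores(y_true, y_pred, label) for label in labels]
    n = len(labels)
    accuracy = sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)
    return {
        "accuracy": accuracy,
        "precision": sum(s[0] for s in scores) / n,
        "recall": sum(s[1] for s in scores) / n,
        "f1": sum(s[2] for s in scores) / n,
    }


# Made-up labels for six students.
labels = ["fail", "on_track", "excellent"]
y_true = ["fail", "fail", "on_track", "on_track", "excellent", "excellent"]
y_pred = ["fail", "on_track", "on_track", "on_track", "excellent", "fail"]
report = macro_report(y_true, y_pred, labels)
print(report)
```

Macro averaging gives each grade band equal weight, which matters when one band, such as failing students, is much smaller than the others.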

What This Means for Classrooms and AI

For educators and policymakers, the study offers a way out of the uncomfortable choice between accurate but opaque models and transparent but weak ones. By weaving human expertise into the learning process itself, SPPE produces predictions that are both more reliable and easier to justify: time spent studying and long-term educational ambitions count for more than which school a student happens to attend. While the work focuses on one math dataset from Portugal, the broader message is that explainable, knowledge-guided AI can support better, fairer decisions about student support—provided that local context and expert judgment are built in from the start.

Citation: Qiang, M., Liu, Z. & Zhang, R. Explainable AI in education: integrating educational domain knowledge into the deep learning model for improved student performance prediction. Sci Rep 16, 9515 (2026). https://doi.org/10.1038/s41598-026-40538-y

Keywords: student performance prediction, explainable AI, educational data mining, neural networks in education, domain knowledge integration