Clear Sky Science

Neonatal jaundice detection using a vision transformer-based deep learning model

Why this matters for new parents

Most newborns develop some yellowing of the skin, known as jaundice. Usually it fades on its own, but in some babies high levels of the pigment bilirubin can harm the brain if not caught in time. Today, checking bilirubin often requires a needle stick or an expensive bedside device. This study explores whether an ordinary smartphone, combined with a new kind of artificial intelligence, could offer a low‑cost, non‑invasive way to spot risky jaundice early—especially in hospitals and clinics that lack advanced equipment.

The hidden risk behind a common yellow tint

Jaundice affects well over half of full‑term newborns and even more premature babies. It appears as a yellow color in the skin and the whites of the eyes when bilirubin builds up in the blood. Mild cases are harmless, but severe or missed cases can lead to a form of brain damage called kernicterus, long‑term disability, or even death. Standard care relies on visual inspection followed by blood tests or specialized meters pressed against the skin. These methods work, but they are subjective, invasive, slow, or costly—barriers that are especially serious in crowded or low‑resource nurseries where many babies must be screened quickly.

Turning a phone camera into a health tool

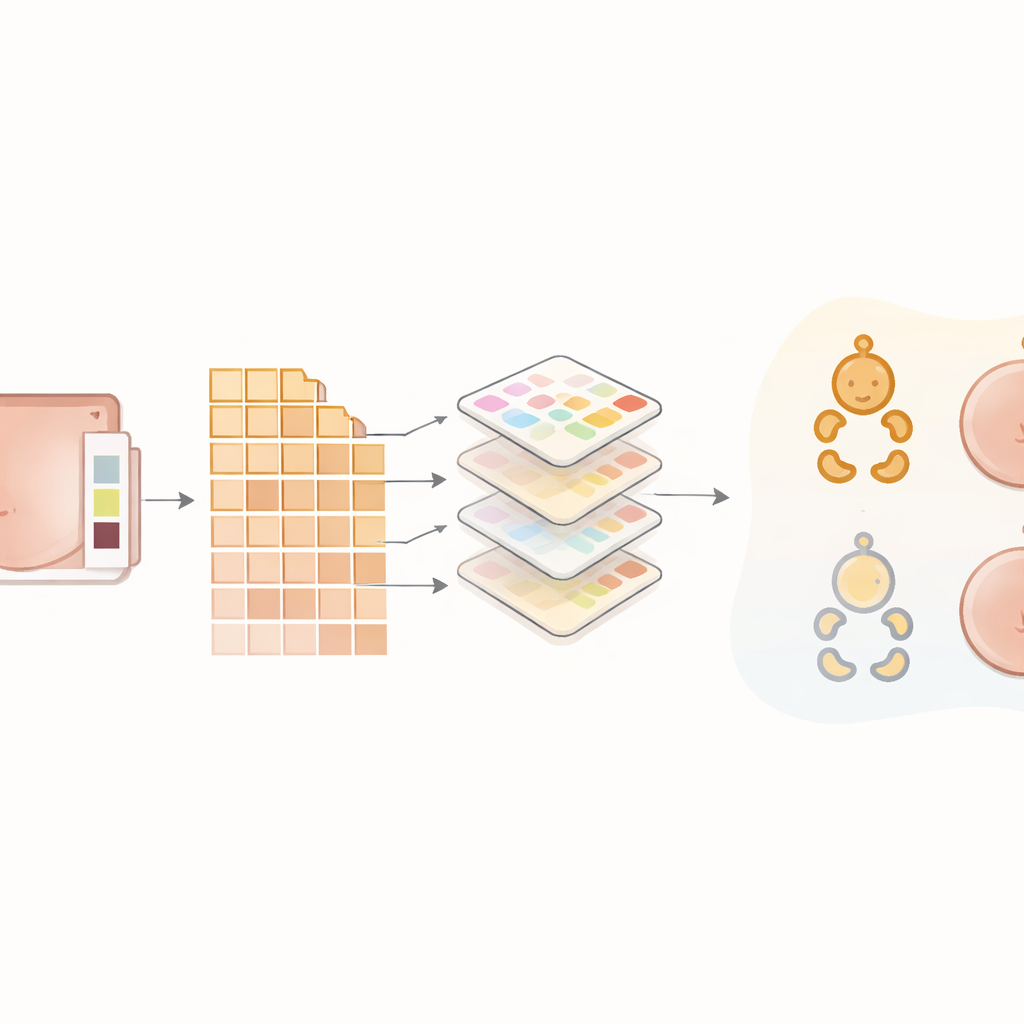

The researchers set out to build a practical screening pipeline using just a smartphone camera and a modern AI model. They enrolled 500 newborns at a children’s hospital in Tehran, Iran, imaging three body regions—the face, abdomen, and inner forearm—with an iPhone mounted on a tripod in a room with tightly controlled lighting. A color card with many colored squares was placed next to the baby’s skin in every photo to standardize color across images. At nearly the same time, each baby had a routine blood test to measure bilirubin; doctors used those values to label each baby as jaundiced or not, creating a trustworthy reference for training and testing the algorithms.

Cleaning and focusing the images

Before any AI model saw the pictures, the team put the images through a careful cleaning process. Low‑quality shots with blur or poor framing were discarded, and the remaining photos were saved in a high‑fidelity format to preserve subtle color differences. Computer routines then adjusted the images using the color card as a reference, boosted local contrast to make small changes in skin tone more visible, and converted colors into forms that help separate skin from background. A semi‑automatic step isolated smooth, evenly lit patches of skin and cropped them into standardized small squares. To teach the models to cope with natural variation, the researchers also created modified versions of some training images (slightly rotated, flipped, or brightened) without altering their medical meaning.
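To make the color-card step concrete, here is a minimal sketch of one simple way a reference patch can be used to correct colors. It is an assumption for illustration, not the authors' actual routine: it scales each color channel so that a neutral gray patch on the card reads as its known value. The function names and all pixel values are hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # (red, green, blue) on a 0-255 scale


def channel_gains(measured_patch: Pixel, reference_patch: Pixel) -> Pixel:
    """Per-channel gains that map the photographed card patch onto its known color."""
    return tuple(ref / max(meas, 1e-6) for meas, ref in zip(measured_patch, reference_patch))


def correct_pixels(pixels: List[Pixel], gains: Pixel) -> List[Pixel]:
    """Apply the gains to every pixel and clip the result to the 0-255 range."""
    return [
        tuple(min(255.0, max(0.0, channel * gain)) for channel, gain in zip(pixel, gains))
        for pixel in pixels
    ]


# Hypothetical example: the gray patch should read (128, 128, 128),
# but under this room's lighting the camera recorded a warmer tone.
measured_gray = (140.0, 128.0, 112.0)
reference_gray = (128.0, 128.0, 128.0)
gains = channel_gains(measured_gray, reference_gray)

skin_patch = [(210.0, 170.0, 120.0)]  # hypothetical skin pixel from the same photo
corrected_patch = correct_pixels(skin_patch, gains)
```

A card with many colored squares, like the one described in the study, allows richer corrections than a single gray patch, but the idea is the same: use colors whose true values are known to undo the effect of lighting and camera settings.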

How the new AI compares with older approaches

The heart of the study is a model called a vision transformer, adapted from tools originally designed to understand complex patterns in images. Unlike traditional convolutional neural networks, which mostly look at small neighborhoods of pixels, the transformer learns to pay attention to both tiny details and broader patterns across the image. The authors trained this model, called T2T‑ViT, to decide whether each skin crop came from a jaundiced or non‑jaundiced baby. They directly compared its performance with three established methods: a popular deep network known as ResNet‑50 and two classic machine‑learning techniques, support vector machines and k‑nearest neighbors, which relied on simple color statistics rather than raw images. On an independent test set, the transformer correctly classified virtually every case, reaching about 99% for accuracy, sensitivity, and specificity. It clearly outperformed the other methods, which misclassified more babies and especially struggled with borderline jaundice.
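The three figures quoted above have standard definitions, which the sketch below illustrates. Accuracy is the share of all babies classified correctly, sensitivity is the share of jaundiced babies the model catches, and specificity is the share of healthy babies it correctly clears. The labels in the example are made up for illustration and are not the study's data.

```python
from typing import Dict, Sequence


def screening_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, sensitivity, and specificity for binary labels (1 = jaundiced, 0 = not)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)  # jaundiced, flagged
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)  # healthy, cleared
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)  # healthy, flagged
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)  # jaundiced, missed
    total = tp + tn + fp + fn
    return {
        "accuracy": (tp + tn) / total if total else float("nan"),
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
    }


# Made-up example: 4 jaundiced and 6 non-jaundiced babies.
true_labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
predicted = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
metrics = screening_metrics(true_labels, predicted)
```

For a screening tool, sensitivity matters most, because a missed case of severe jaundice is far more costly than an unnecessary follow-up blood test.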

Promises and real‑world challenges

These results suggest that, under controlled conditions, a smartphone plus a well‑trained transformer model can match or surpass far more expensive tools for identifying newborns who may need closer monitoring or treatment. The system is lightweight enough to run on consumer‑grade hardware and uses images that any trained nurse or technician could capture, making it attractive for busy clinics or regions with limited resources. Still, the authors stress important caveats: all data came from a single hospital, one phone model, and mostly Iranian infants, and experts manually refined which skin areas to analyze. Real‑world use will require testing across many hospitals, phone types, lighting conditions, and skin tones, as well as automating more of the image‑selection steps.

What this could mean for newborn care

In simple terms, the study shows that a phone camera, guided by an advanced AI that is sensitive to very slight color shifts, can almost always tell which newborns have clinically important jaundice. If future work confirms these findings in more diverse settings, this approach could become a fast, painless “first check” that helps decide which babies need blood tests or treatment and which can safely go home. For families and health workers alike, that could mean fewer needle sticks, lower costs, and, most importantly, earlier protection against a preventable form of brain injury.

Citation: Lotfi, M., Rabiee, M., Nazarpak, M.H. et al. Neonatal jaundice detection using a vision transformer-based deep learning model. Sci Rep 16, 9243 (2026). https://doi.org/10.1038/s41598-026-40515-5

Keywords: neonatal jaundice, smartphone screening, medical imaging AI, vision transformer, newborn health