Clear Sky Science

Object-aware semantic mapping using probability density functions for indoor relocalization and path planning

Why smarter indoor maps matter

As home and service robots move from labs into real apartments, they must do more than avoid walls and furniture. To be truly helpful, a robot should understand that a bed usually means a bedroom, or that a fridge hints at a kitchen. This paper presents a new way for robots to "see" indoor spaces through the objects that define each room, allowing them to figure out where they are and choose paths that better match how people use their homes.

Seeing rooms through their everyday objects

Traditional robot maps focus either on raw geometry or on abstract symbols. Grid maps built from laser scans capture detailed shapes, but become heavy to store and slow to search, and can push robots into stiff, grid-like paths. High-level graphs of rooms and doors are easier to handle, but they throw away the fine detail needed for precise driving. The authors bridge this gap by organizing maps around rooms and the key static objects inside them – beds, sofas, fridges, tables and the like. Each room is outlined on a flat floor plan, and every important object class gets its own layer, so different kinds of furniture never overwrite each other.
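The layered organization described above can be pictured as a small data structure. The following is a minimal sketch, not the authors' implementation: the class name `Room`, its fields, and the grid representation are all hypothetical and chosen only to show how one layer per object class keeps different furniture from overwriting each other.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Room:
    """Hypothetical container: a room outline plus one grid layer per object class."""
    name: str
    outline: List[Tuple[float, float]]  # floor-plan polygon vertices, in metres
    layers: Dict[str, List[List[float]]] = field(default_factory=dict)

    def set_layer(self, object_class: str, grid: List[List[float]]) -> None:
        # Each object class writes only to its own layer.
        self.layers[object_class] = grid

    def value_at(self, object_class: str, row: int, col: int) -> float:
        # Classes that are absent from the room contribute nothing.
        layer = self.layers.get(object_class)
        return 0.0 if layer is None else layer[row][col]


# Two object classes can occupy the same floor cell without conflict.
bedroom = Room("bedroom", outline=[(0, 0), (4, 0), (4, 3), (0, 3)])
bedroom.set_layer("bed", [[0.0, 0.8], [0.1, 0.9]])
bedroom.set_layer("chair", [[0.0, 0.3], [0.0, 0.0]])
```

Querying `bedroom.value_at("bed", 0, 1)` and `bedroom.value_at("chair", 0, 1)` returns each class's own value for the same cell, which is the property the layered design is meant to preserve.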

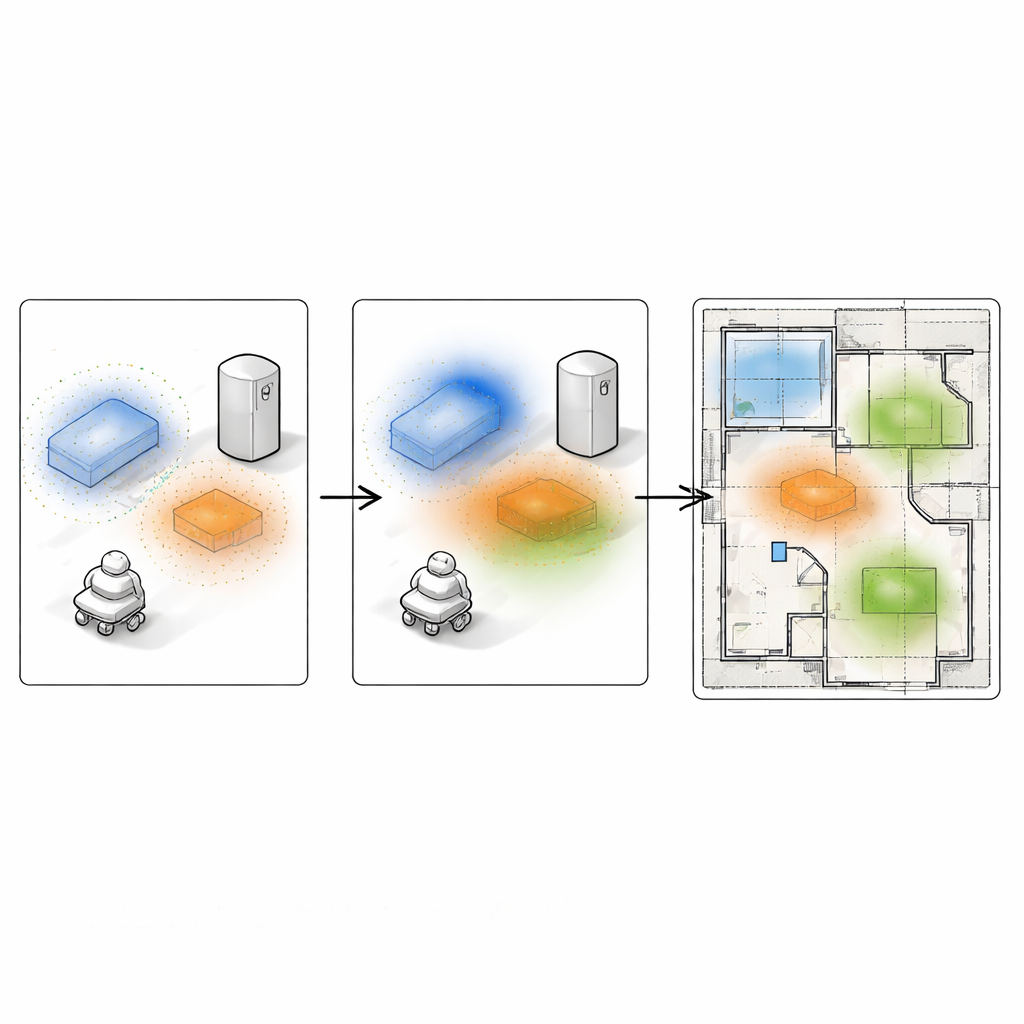

Turning furniture into soft probability clouds

Instead of drawing each object as a hard-edged box, the method converts 3D scans of furniture into smooth “heat maps” on the floor. The robot first reconstructs each room in 3D using an RGB‑D camera and standard tools, then semantically labels points belonging to objects such as walls, beds or chairs. For each object type inside a room, clusters of points are found and projected onto the floor. From these clusters, the system estimates a continuous probability density – a soft blob that is highest where the object most likely is and gently fades outward. Stacking these blobs per object type yields a compact, layered map that keeps both the meaning of objects and their approximate shape, while naturally handling noise and partial views.
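As a rough illustration of the projection and density step, the sketch below drops the height of each labeled 3D point and evaluates a Gaussian kernel density estimate on a floor grid. This is an assumption made for illustration: the summary does not say which density estimator the authors use, the clustering step is skipped, and the function name `floor_density`, the `bandwidth` value, and the sample points are all hypothetical.

```python
import math


def floor_density(points_3d, grid_x, grid_y, bandwidth=0.15):
    """Project (x, y, z) points onto the floor and evaluate an isotropic
    Gaussian kernel density estimate at every (grid_x, grid_y) location."""
    floor_points = [(x, y) for x, y, _z in points_3d]  # discard height
    norm = 1.0 / (len(floor_points) * 2.0 * math.pi * bandwidth ** 2)
    density = []
    for gy in grid_y:
        row = []
        for gx in grid_x:
            total = sum(
                math.exp(-((gx - px) ** 2 + (gy - py) ** 2) / (2.0 * bandwidth ** 2))
                for px, py in floor_points
            )
            row.append(norm * total)
        density.append(row)
    return density


# Hypothetical points labeled "bed", in metres (made-up values).
bed_points = [(1.0, 1.0, 0.5), (1.1, 1.0, 0.4), (0.9, 1.0, 0.6)]
axis = [i * 0.1 for i in range(21)]  # 0.0 m to 2.0 m in 10 cm steps
bed_layer = floor_density(bed_points, axis, axis)
```

The resulting `bed_layer` is highest over the middle of the point cluster and fades smoothly with distance, which is the "soft blob" behavior described above. A larger `bandwidth` gives a wider, flatter blob.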

Letting robots rediscover where they are

One major use of this object-centered map is helping a robot relocalize, that is, work out its pose when it has no idea where it is on the floor plan. This is a common problem when the robot first wakes up or has been moved. The robot takes a fresh look with its depth camera, detects objects in view, and builds its own small set of probability blobs for that partial scene. Then, an evolutionary search algorithm explores many possible robot poses across the building map. For each candidate pose, the local blobs are overlaid onto the global map, and their similarity is measured using a statistical distance. Room boundaries and line-of-sight checks discard impossible poses, such as seeing a fridge through a wall. Over many generations, the population of candidate poses evolves toward the location where the observed objects best match the stored probability fields, yielding a robust estimate of the robot’s position and orientation.
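The search loop can be sketched in a few lines. Everything here is a simplified stand-in rather than the authors' algorithm: each object is reduced to a single Gaussian blob, the fitness is a plain overlap score instead of the statistical distance mentioned above, the feasibility test only checks that the pose lies inside one rectangular room (no line-of-sight check), and the names `GLOBAL_MAP`, `FLOOR`, `SIGMA`, `score`, and `relocalize` are hypothetical.

```python
import math
import random

# Hypothetical global map: one blob centre per object class, in metres.
GLOBAL_MAP = {"bed": (1.0, 4.0), "fridge": (5.0, 1.0), "table": (4.0, 4.0)}
FLOOR = (0.0, 6.0, 0.0, 6.0)  # x_min, x_max, y_min, y_max of a single room
SIGMA = 1.0                   # assumed spread of every blob


def to_map_frame(pose, point):
    """Transform a point from the robot frame into the map frame."""
    x, y, theta = pose
    px, py = point
    return (x + px * math.cos(theta) - py * math.sin(theta),
            y + px * math.sin(theta) + py * math.cos(theta))


def to_robot_frame(pose, point):
    """Inverse of to_map_frame; used here only to simulate observations."""
    x, y, theta = pose
    dx, dy = point[0] - x, point[1] - y
    return (dx * math.cos(theta) + dy * math.sin(theta),
            -dx * math.sin(theta) + dy * math.cos(theta))


def score(pose, observations):
    """Overlap score: higher when observed objects land on same-class blobs."""
    x, y, _theta = pose
    if not (FLOOR[0] <= x <= FLOOR[1] and FLOOR[2] <= y <= FLOOR[3]):
        return 0.0  # stand-in for the room-boundary feasibility check
    total = 0.0
    for label, point in observations:
        mx, my = to_map_frame(pose, point)
        gx, gy = GLOBAL_MAP[label]
        total += math.exp(-((mx - gx) ** 2 + (my - gy) ** 2) / (2.0 * SIGMA ** 2))
    return total


def relocalize(observations, generations=60, pop_size=80, seed=0):
    """Elitist evolutionary search over (x, y, theta) poses."""
    rng = random.Random(seed)
    population = [(rng.uniform(FLOOR[0], FLOOR[1]),
                   rng.uniform(FLOOR[2], FLOOR[3]),
                   rng.uniform(-math.pi, math.pi)) for _ in range(pop_size)]
    history = []  # best score seen at the start of each generation
    for gen in range(generations):
        population.sort(key=lambda p: score(p, observations), reverse=True)
        elite = population[: pop_size // 4]  # survivors are kept unchanged
        history.append(score(elite[0], observations))
        step = 0.5 * (1.0 - gen / generations) + 0.02  # shrinking mutation size
        children = []
        while len(elite) + len(children) < pop_size:
            x, y, theta = rng.choice(elite)
            children.append((x + rng.gauss(0.0, step),
                             y + rng.gauss(0.0, step),
                             theta + rng.gauss(0.0, step)))
        population = elite + children
    best = max(population, key=lambda p: score(p, observations))
    return best, history


# Simulate what the robot would observe from a known pose, then search for it.
true_pose = (2.0, 2.0, 0.5)
observations = [(label, to_robot_frame(true_pose, centre))
                for label, centre in GLOBAL_MAP.items()]
best_pose, history = relocalize(observations)
```

Because the elite is carried over unchanged, the best score never decreases from one generation to the next. How close `best_pose` ends up to `true_pose` depends on the population size, the mutation schedule, and how distinctive the observed objects are; a room with several identical objects would leave the pose ambiguous under this simple score.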

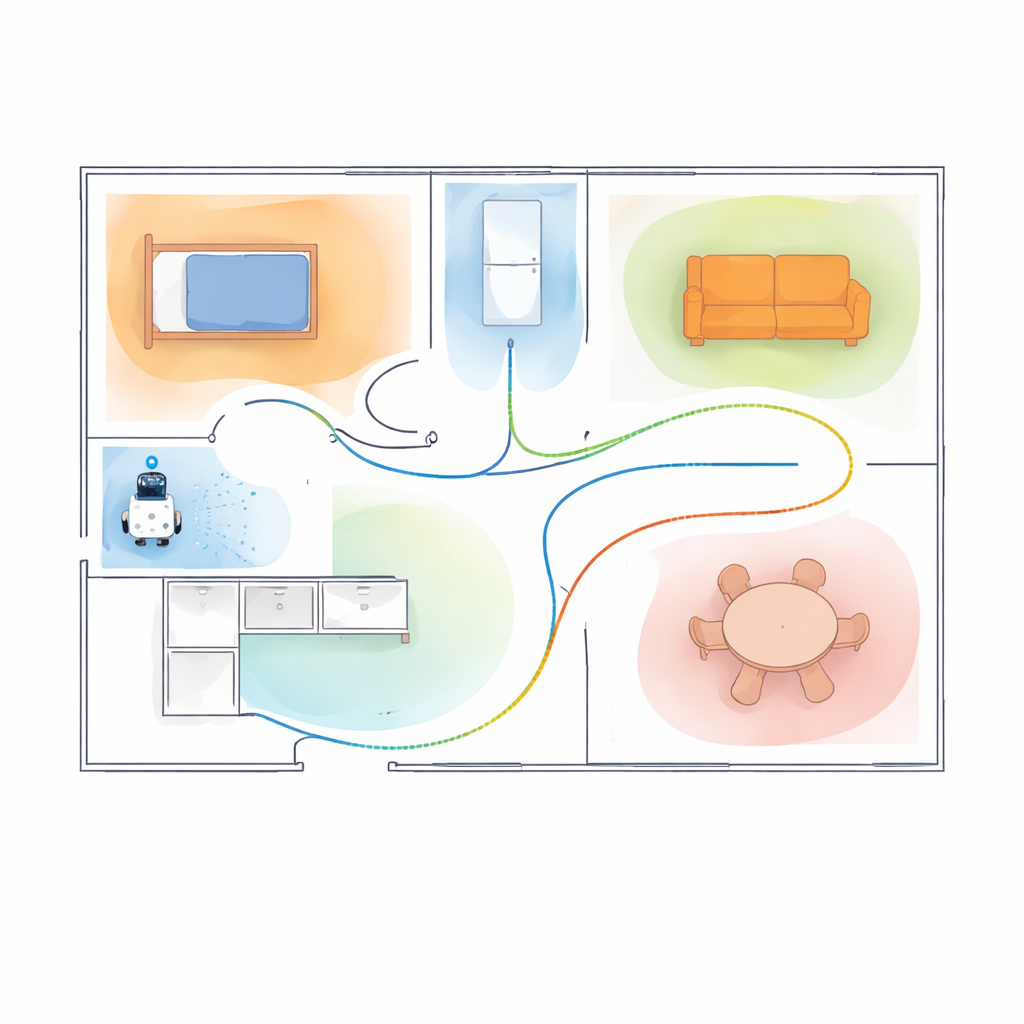

Planning paths that respect how people use space

The same map also guides how the robot moves. Because every object type is represented as a smooth influence field, the robot can be told to favor or avoid certain areas by adjusting numerical weights. Beds may become regions to steer clear of at night, while tables can become attractors when searching for items. These semantic preferences are combined with a standard obstacle map and a safety margin around walls to form a single cost landscape over the floor plan. A classic path planner then finds routes that are not only collision-free but also follow the desired social or task-related biases. Experiments on a realistic dataset and in a real furnished apartment show that these semantically biased paths better follow the intended preferences, sometimes at the expense of small increases in path length, and can be smoother in real homes.
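To make the weighting idea concrete, the sketch below builds a cost grid from weighted object fields and plans over it with Dijkstra's algorithm. The choice of Dijkstra is an assumption, since the summary only says "a classic path planner". The wall safety margin is omitted for brevity, and the function names `semantic_cost` and `plan`, the grid size, and the weight values are hypothetical.

```python
import heapq
import math


def semantic_cost(width, height, fields, weights, obstacles=(), base=1.0):
    """Combine weighted object fields and an obstacle set into one cost grid.

    fields:  {label: (cx, cy, sigma)} Gaussian influence per object class
    weights: {label: w}; w > 0 repels the robot, w < 0 attracts it
    """
    blocked = set(obstacles)
    cost = [[base] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            if (x, y) in blocked:
                cost[y][x] = math.inf
                continue
            for label, (cx, cy, sigma) in fields.items():
                influence = math.exp(-((x - cx) ** 2 + (y - cy) ** 2) / (2.0 * sigma ** 2))
                cost[y][x] += weights.get(label, 0.0) * influence
            cost[y][x] = max(cost[y][x], 0.1)  # Dijkstra needs positive costs
    return cost


def plan(cost, start, goal):
    """Dijkstra on a 4-connected grid; returns the cells from start to goal."""
    height, width = len(cost), len(cost[0])
    dist = {start: 0.0}
    parent = {}
    queue = [(0.0, start)]
    while queue:
        d, cell = heapq.heappop(queue)
        if cell == goal:
            break
        if d > dist[cell]:
            continue  # stale queue entry
        x, y = cell
        for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if 0 <= nx < width and 0 <= ny < height and cost[ny][nx] < math.inf:
                nd = d + cost[ny][nx]
                if nd < dist.get((nx, ny), math.inf):
                    dist[(nx, ny)] = nd
                    parent[(nx, ny)] = cell
                    heapq.heappush(queue, (nd, (nx, ny)))
    if goal not in dist:
        return []
    path = [goal]
    while path[-1] != start:
        path.append(parent[path[-1]])
    return path[::-1]


# A bed sits in the middle of the direct route across an 11 x 7 cell room.
fields = {"bed": (5, 3, 1.5)}
plain = plan(semantic_cost(11, 7, fields, {"bed": 0.0}), (0, 3), (10, 3))
polite = plan(semantic_cost(11, 7, fields, {"bed": 5.0}), (0, 3), (10, 3))
```

With a zero weight the planner takes the straight route through the bed's cell. With a positive weight it detours around the bed and accepts a longer path, which mirrors the trade-off between preference and path length reported above. A negative weight would instead pull routes toward an object, as in the table example.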

What this means for everyday robots

In simple terms, this work teaches robots to think of homes the way people do: as rooms defined by their furniture, not just as empty boxes with walls. By wrapping each key object in a soft probability cloud, a single compact map can support both "Where am I?" and "How should I get there?" without needing separate, task-specific models. Tests show that this approach helps robots localize more reliably in cluttered or look-alike rooms and choose routes that better match human expectations. As these ideas mature, future home robots may navigate more politely and intelligently, moving through our spaces with an awareness that feels far less mechanical.

Citation: Mora, A., Mendez, A., Moreno, L. et al. Object-aware semantic mapping using probability density functions for indoor relocalization and path planning. Sci Rep 16, 9450 (2026). https://doi.org/10.1038/s41598-026-40498-3

Keywords: indoor robot localization, semantic mapping, object-aware navigation, probabilistic robotic maps, path planning