Clear Sky Science

Deep transfer learning based image colorization using VGG19 and CLAHE

Bringing Old Photos Back to Life

Many of us have boxes of black‑and‑white family photos or enjoy classic films and vintage documentaries. Imagining what those scenes looked like in real life—blue skies, green fields, warm skin tones—can make the past feel closer and more real. This paper explores a new computer method that automatically adds realistic color and pleasing contrast to grayscale images, making it easier to restore old pictures, refresh black‑and‑white movies, and even improve medical scans, without needing an expert to paint in every shade by hand.

From Hand‑Tinting to Smart Machines

Colorizing images is harder than it looks because a single shade of gray could correspond to many possible colors: a medium gray might be a red brick, a green leaf, or a blue shirt. Earlier tools leaned heavily on human guidance. Artists could draw quick color “scribbles” on parts of a picture, and software would spread those hints across similar regions. Other systems borrowed colors from a reference photo with similar content. While these methods could be convincing, they broke down when the guidance was sparse, the reference image was not a perfect match, or the scene was complex. As deep learning took off, new programs learned to “guess” colors directly from large collections of example photos, reducing the need for manual work but demanding enormous training time and computing power.
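The ambiguity can be made concrete with a little arithmetic. The sketch below converts three different colors to gray using the widely used Rec. 601 luma weights (0.299, 0.587, 0.114). Both the weights and the three example RGB values are assumptions chosen for illustration, not details taken from the paper.

```python
def to_gray(rgb):
    """Collapse an (R, G, B) triple (0-255) to one gray level using Rec. 601 luma weights."""
    r, g, b = rgb
    return round(0.299 * r + 0.587 * g + 0.114 * b)


# Hypothetical example colors, picked so that they land on the same gray level.
examples = {
    "brick red": (200, 90, 70),
    "leaf green": (70, 160, 50),
    "shirt blue": (80, 125, 205),
}

gray_levels = {name: to_gray(rgb) for name, rgb in examples.items()}
for name, level in gray_levels.items():
    print(f"{name:>10}: gray {level}")
```

All three colors collapse to the same gray level, so a system that looks at that gray value alone cannot tell them apart. It has to lean on surrounding context to choose between them.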

Teaching a Network What the World Looks Like

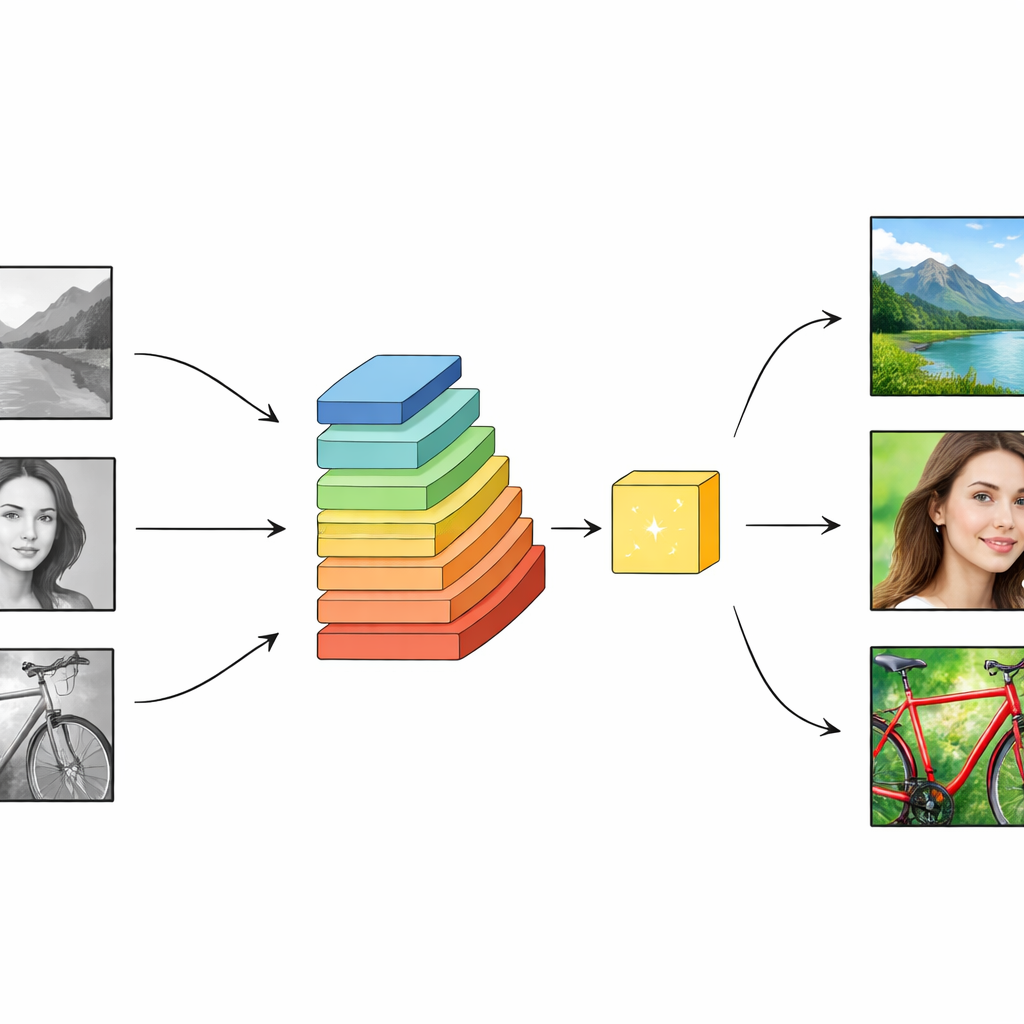

The authors build on this progress with a strategy known as transfer learning. Instead of training a new system from scratch, they reuse a powerful vision network called VGG19 that has already been trained on millions of color images. This network has many layers that gradually move from simple patterns such as edges and textures to whole objects and scenes: faces, trees, buildings, skies. The colorization system feeds a grayscale version of an image into VGG19 and collects features from several layers at once, forming a rich “stack” of information for every pixel. This helps the model understand both fine detail—such as strands of hair or leaf edges—and the broader context, like whether the scene is a beach, a city street, or a forest. With this context, the network is better positioned to choose believable colors, not just mathematically plausible ones.
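The idea of a per-pixel "stack" of features (sometimes called a hypercolumn) can be sketched in a few lines. The example below is a toy illustration only: the three small grids are made-up stand-ins for activations from a fine, a middle, and a coarse layer, each reduced to a single channel, and the helper names `upsample_nearest` and `stack_features` are hypothetical. A real VGG19 layer has many channels, and the paper's exact layer choice and resizing method are not given in this summary.

```python
def upsample_nearest(fmap, out_h, out_w):
    """Resize a 2-D feature map (list of rows) to out_h x out_w by nearest neighbour."""
    in_h, in_w = len(fmap), len(fmap[0])
    return [
        [fmap[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
        for y in range(out_h)
    ]


def stack_features(feature_maps, out_h, out_w):
    """Bring every map to full resolution, then collect one feature vector per pixel."""
    resized = [upsample_nearest(f, out_h, out_w) for f in feature_maps]
    return [
        [[r[y][x] for r in resized] for x in range(out_w)]
        for y in range(out_h)
    ]


# Made-up stand-ins for layer activations at three depths (one channel each).
fine = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]  # 4x4: local detail
middle = [[0.1, 0.2], [0.3, 0.4]]                                        # 2x2: larger patterns
coarse = [[9.0]]                                                         # 1x1: whole-scene context

stack = stack_features([fine, middle, coarse], out_h=4, out_w=4)
print(stack[1][2])  # feature vector for the pixel in row 1, column 2
```

Each pixel ends up with one value from every depth: its own fine-scale response, the response of the larger region it belongs to, and a shared scene-level value. That combination is what lets a color prediction depend on both local texture and overall context.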

Turning Light and Shade into Color and Contrast

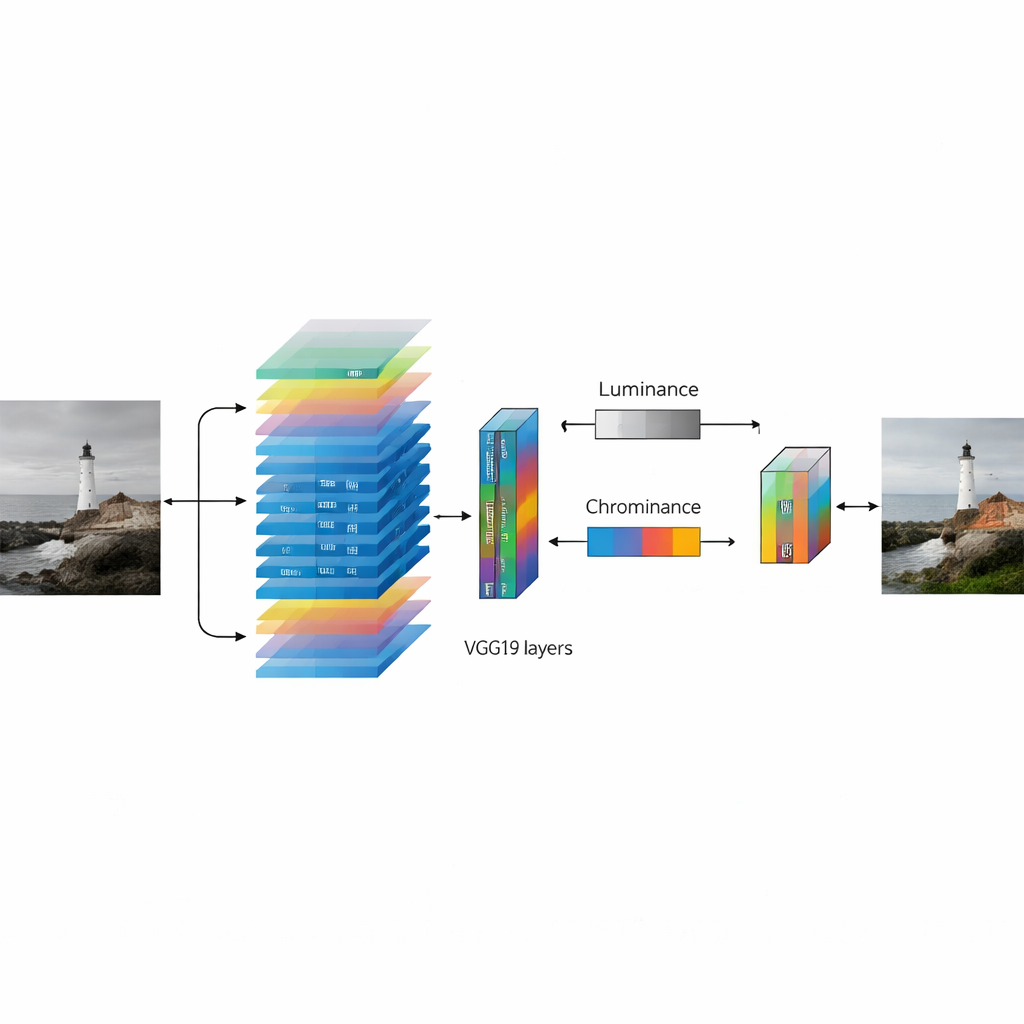

To make color decisions more stable, the method represents images in a color system that separates brightness from color content. The grayscale input serves as the brightness channel, while the network’s task is to predict the two remaining channels, which encode shifts between reds and greens and between blues and yellows. By keeping brightness fixed, the system preserves the original shading and structure of the image. After the network produces its best guess at the missing color information, a final enhancement step is applied. Here the authors use contrast‑limited adaptive histogram equalization (CLAHE), which stretches the range between dark and light values separately within small regions of the image while capping how strongly any one region can be amplified. This makes textures clearer, edges sharper, and colors appear more vibrant, without simply “blowing out” bright regions or losing detail in shadows.
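The enhancement step named in the title is CLAHE, contrast-limited adaptive histogram equalization. The sketch below shows only its core idea on a single tile: build a histogram, cap each bin at a clip limit, spread the excess evenly, and turn the cumulative histogram into a gray-level lookup table. It is a simplified illustration under stated assumptions, not the authors' implementation. Full CLAHE also splits the image into many tiles and interpolates between their lookup tables, which is omitted here, and the function name, tile values, and clip limit are all hypothetical.

```python
def equalization_map(tile, clip_limit=None, levels=256):
    """Build a gray-level lookup table for one tile by histogram equalization.

    If clip_limit is given, each histogram bin is capped at that count and the
    excess is spread evenly over all bins before the mapping is computed.
    """
    hist = [0] * levels
    for value in tile:
        hist[value] += 1

    if clip_limit is not None:
        excess = sum(max(0, count - clip_limit) for count in hist)
        hist = [min(count, clip_limit) + excess / levels for count in hist]

    total = sum(hist)
    mapping, running = [], 0.0
    for count in hist:
        running += count  # cumulative histogram
        mapping.append(round((levels - 1) * running / total))
    return mapping


# Hypothetical low-contrast tile: only two gray levels, 10 apart.
tile = [100] * 50 + [110] * 50

plain = equalization_map(tile)                   # ordinary equalization
limited = equalization_map(tile, clip_limit=10)  # contrast-limited version

plain_spread = plain[110] - plain[100]
limited_spread = limited[110] - limited[100]
print("original spread: 10")
print("plain equalization spread:", plain_spread)
print("clip-limited spread:", limited_spread)
```

On this tile, ordinary equalization pushes the two gray levels very far apart, while the clip-limited version still widens the gap but much more gently. That restraint is what keeps near-uniform regions from being over-amplified.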

Putting the Method to the Test

To see how well their approach works in practice, the researchers trained and evaluated it on several well‑known image collections that include objects, scenes, people, and everyday environments. They compared their results with a variety of rival methods, including systems guided by user hints, generative models that try to invent realistic pictures, and newer transformer‑based models. Using standard measures of image quality, their method consistently produced crisper, more faithful colors and clearer structures, with especially strong performance on a challenging set of scene photographs. Visual comparisons show that their colorized outputs often look closer to the original color photos, with richer yet controlled saturation and better‑balanced contrast. They also highlight where the method struggles: very dark or overly bright images, or scenes with unusual textures and rare colors, can still lead to odd hues or uneven lighting.
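This summary does not say which quality measures were used. As one illustration of what such a measure looks like, the sketch below computes peak signal-to-noise ratio (PSNR), a commonly used score that compares a result with the original pixel by pixel. The choice of PSNR and the pixel values are assumptions made for the example.

```python
import math


def psnr(reference, result, max_value=255):
    """Peak signal-to-noise ratio in decibels between two equal-length pixel sequences."""
    if len(reference) != len(result):
        raise ValueError("both images must have the same number of pixels")
    mse = sum((a - b) ** 2 for a, b in zip(reference, result)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10 * math.log10(max_value ** 2 / mse)


# Made-up pixel values standing in for an original and two colorized results.
original = [52, 120, 200, 33]
close_guess = [50, 122, 198, 35]
poor_guess = [90, 80, 150, 70]

print("close guess:", round(psnr(original, close_guess), 2), "dB")
print("poor guess: ", round(psnr(original, poor_guess), 2), "dB")
```

A higher score means the result is numerically closer to the original. Scores of this kind do not capture everything a viewer notices, which is one reason side-by-side visual comparisons are reported as well.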

What This Means for Everyday Images

In simple terms, this study shows that giving a colorization system a strong prior education about the visual world—and then carefully enhancing the result—can produce images that look more natural to the human eye. By standing on the shoulders of a large, pre‑trained network and adding a smart contrast‑boosting step, the authors deliver a practical tool that can breathe life into historical photographs, enrich black‑and‑white films, and make certain kinds of medical images easier to interpret. While it is not perfect and may stumble on extreme lighting or very unusual scenes, this approach moves automated colorization closer to something that non‑experts can rely on, bringing realistic color within reach for a wide range of everyday uses.

Citation: Ghosh, N., Mandal, G. Deep transfer learning based image colorization using VGG19 and CLAHE. Sci Rep 16, 9528 (2026). https://doi.org/10.1038/s41598-026-40292-1

Keywords: image colorization, deep learning, transfer learning, photo restoration, contrast enhancement