Clear Sky Science · en

Adaptive example selection for prototype based explainable mitosis detection in digital pathology

Why this matters for cancer care

When pathologists look at cancer samples under the microscope, counting how many tumor cells are actively dividing helps decide how aggressive a cancer is and which treatments to choose. Artificial intelligence can now spot these dividing cells quickly in digital slides, but its decisions are often a mystery even to experts. This paper introduces a method called Adaptive Example Selection (AES) that lets an AI system "show its work" by pointing to real past cases that support or contradict each decision, making automated mitosis detection more transparent and clinically trustworthy.

The challenge of spotting dividing cells

Dividing tumor cells, known as mitotic figures, are tiny, rare, and visually diverse. Under the usual pink-and-purple stain, they can look very similar to harmless structures such as dying cells or certain immune cells. Human experts must scan huge digital slides to find them, a process that is slow, tiring, and prone to disagreement. Modern deep learning systems can match or surpass expert performance at this task, but they behave like black boxes: they produce a score for each suspected cell without clearly explaining why. In medicine, where treatment decisions can be life-changing, this lack of clarity is a serious obstacle to using AI in everyday practice.

Building a strong but opaque detector

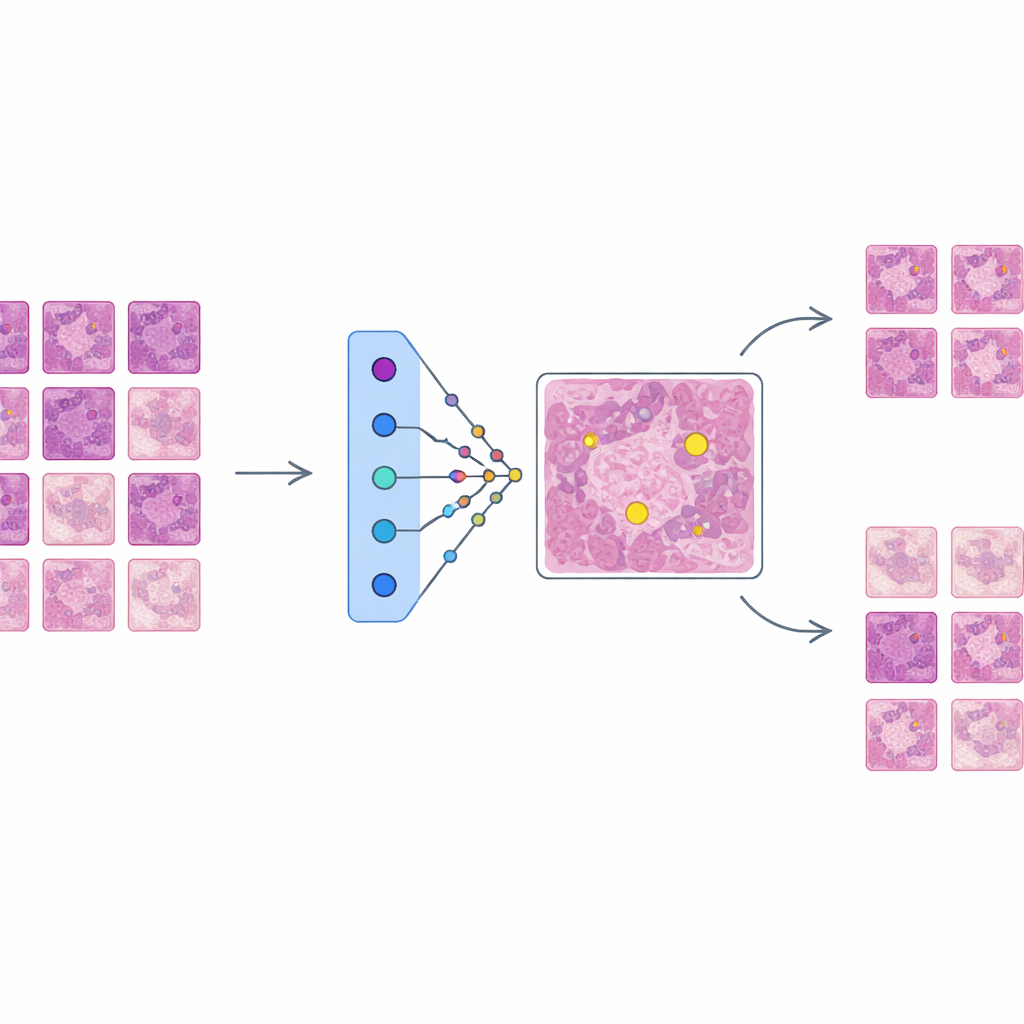

The authors first train a state-of-the-art object detection network, based on the Faster R-CNN architecture, to find mitotic figures in a large, diverse dataset called MIDOG++. These images come from both human and canine tumors, across several cancer types and laboratories, and include more than eleven thousand carefully labeled dividing cells. To preserve fine detail, the slides are cut into small patches and heavily augmented to mimic real-world variations in staining and imaging. The resulting detector performs well across tumor types, with F1-scores up to 0.84. It is accurate but complex, which makes it exactly the kind of system that needs better explanation before clinicians can trust it in practice.
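
For readers unfamiliar with the F1-score, the short sketch below shows how it combines precision (how many flagged cells are truly mitotic) and recall (how many true mitoses are found). The function name and the counts in the usage note are illustrative assumptions, not figures from the paper.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Harmonic mean of precision and recall for a detection task."""
    flagged = true_positives + false_positives      # everything the detector marked
    actual = true_positives + false_negatives       # everything that was really mitotic
    precision = true_positives / flagged if flagged else 0.0
    recall = true_positives / actual if actual else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)
```

As a made-up example, a detector that finds 84 true mitoses, raises 16 false alarms, and misses 16 real mitoses has precision 0.84 and recall 0.84, so `f1_score(84, 16, 16)` is also 0.84.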

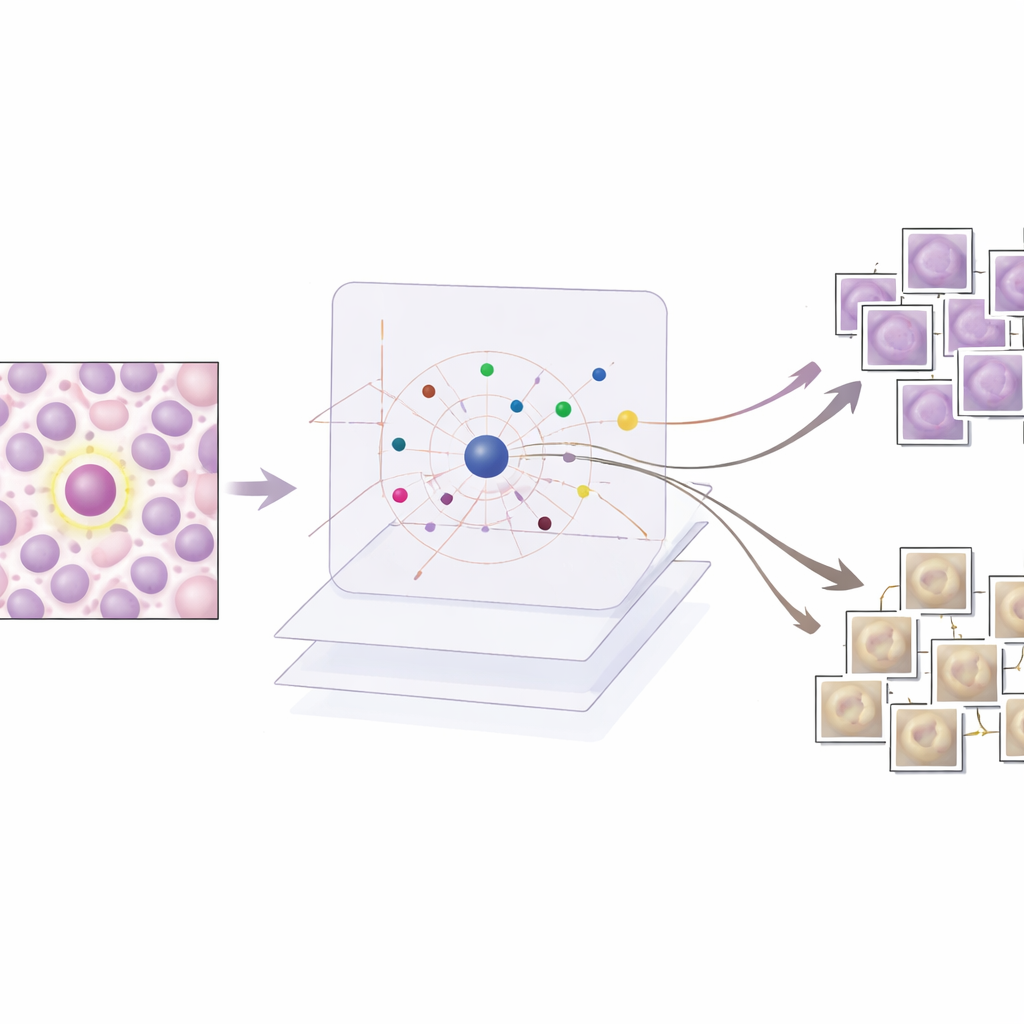

Teaching the AI to explain itself with examples

AES sits on top of this trained detector as an interpretability layer rather than changing how the detector works. For every candidate region the detector marks as possibly mitotic, AES looks into a library of real tissue patches taken from the training data. From this library it selects a small set of "supporting" examples that resemble true mitoses and a set of "contradicting" examples that look more like non-mitotic cells. Behind the scenes, AES treats the detector’s confidence scores as a smooth landscape and uses a mathematical tool called radial basis functions to approximate how that confidence changes near each case. Only prototypes that meaningfully influence the local confidence are kept, so the explanation for a single decision typically involves around ten carefully chosen examples rather than hundreds of barely relevant ones.
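
The idea of approximating confidence with radial basis functions and keeping only influential prototypes can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes each patch is already represented by a feature vector, uses a Gaussian kernel with ridge regularization, centers the confidence at 0.5 so that positive contributions read as "supporting" and negative ones as "contradicting", and uses hypothetical names and parameters (`gamma`, `ridge`, `min_share`) throughout.

```python
import numpy as np

def rbf_kernel(points, centers, gamma):
    """Gaussian RBF similarity between every point and every center."""
    sq_dist = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-gamma * sq_dist)

def fit_rbf_weights(prototypes, confidences, gamma=1.0, ridge=1e-3):
    """Fit one weight per prototype so the RBF sum approximates (confidence - 0.5).

    Centering at 0.5 is an assumption of this sketch: mitotic-looking prototypes
    then tend to get positive weights and non-mitotic ones negative weights.
    """
    kernel = rbf_kernel(prototypes, prototypes, gamma)
    regularized = kernel + ridge * np.eye(len(prototypes))
    return np.linalg.solve(regularized, confidences - 0.5)

def explain(query, prototypes, weights, gamma=1.0, min_share=0.05):
    """Return the approximate confidence and the prototypes that drive it.

    A prototype is kept only if it accounts for at least `min_share` of the
    total absolute contribution at the query point.
    """
    similarity = rbf_kernel(query[None, :], prototypes, gamma)[0]
    contribution = weights * similarity
    total = np.abs(contribution).sum()
    approx_confidence = 0.5 + contribution.sum()
    if total == 0:
        return approx_confidence, [], []
    keep = np.flatnonzero(np.abs(contribution) / total >= min_share)
    keep = keep[np.argsort(-np.abs(contribution[keep]))]  # most influential first
    supporting = [(int(i), float(contribution[i])) for i in keep if contribution[i] > 0]
    contradicting = [(int(i), float(contribution[i])) for i in keep if contribution[i] < 0]
    return approx_confidence, supporting, contradicting
```

In this sketch, calling `explain` on a candidate's feature vector returns two short lists of prototype indices with their signed influence, which could then be mapped back to the stored tissue patches for display. The thresholding step is what keeps the explanation to a handful of examples rather than the whole library.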

What the examples reveal about AI decisions

The researchers evaluate AES both quantitatively and qualitatively. Quantitatively, they show that a compact dictionary of about 190 prototype images can mimic the detector’s confidence scores with very high accuracy, while keeping the number of examples shown per case low enough for a human to review. Qualitatively, they examine three common scenarios. When the detector is clearly correct, AES returns only mitotic prototypes that strongly support the decision, which is reassuring to clinicians. For false alarms, the method surfaces lookalike mitotic examples that show why the detector was misled by similar texture or chromatin patterns, often alongside weaker non-mitotic prototypes that hint at uncertainty. For missed mitoses, AES tends to return mainly non-mitotic prototypes or ambiguous examples, pointing to blind spots in the training data and suggesting where new or better-labeled examples are needed.

From black box to collaborative tool

By grounding each prediction in a handful of real, labeled tissue patches, AES makes a complex AI detector behave more like a human colleague who justifies decisions by recalling past cases. The system not only reports whether a cell is likely dividing but also shows why, and how confident it is, through the mix and influence of supporting and contradicting prototypes. This design allows pathologists to confirm strong predictions quickly, focus attention on borderline or confusing regions, and identify systematic error patterns that can guide further training. Although developed for mitosis detection, the same approach could extend to other tasks in digital pathology, helping move AI from opaque automation toward an interpretable, case-based assistant that clinicians can question, trust, and refine.

Citation: Banik, M., Kreutz-Delgado, K., Mohanty, I. et al. Adaptive example selection for prototype based explainable mitosis detection in digital pathology. Sci Rep 16, 9481 (2026). https://doi.org/10.1038/s41598-026-40283-2

Keywords: explainable AI, digital pathology, mitosis detection, prototype-based models, cancer diagnostics