Clear Sky Science

An integrated framework for proactive deepfake mitigation via attention-driven watermarking and blockchain-based authenticity verification

Why faked videos are everyone’s problem

Videos that look and sound real can now be forged with off‑the‑shelf software, blurring the line between truth and fiction online. These so‑called deepfakes are already being used for scams, harassment, and political tricks. Instead of trying to spot fakes after they spread, this study asks a different question: what if we could quietly protect genuine videos at the moment they are created, so that any later tampering becomes obvious?

From chasing fakes to protecting originals

Most current research tries to catch deepfakes after the fact, training algorithms to spot tiny glitches left behind by generative models. But as those models improve, this cat‑and‑mouse game becomes harder to win. The authors argue for a proactive approach: protect authentic footage as it is recorded, so that viewers and platforms can later verify whether what they see is the untouched original. Their framework combines three layers: a smart video analyzer that decides where protection matters most, an invisible digital mark woven into each frame, and a blockchain record that locks in the identity of the file as a whole.

Teaching the system what really matters in a video

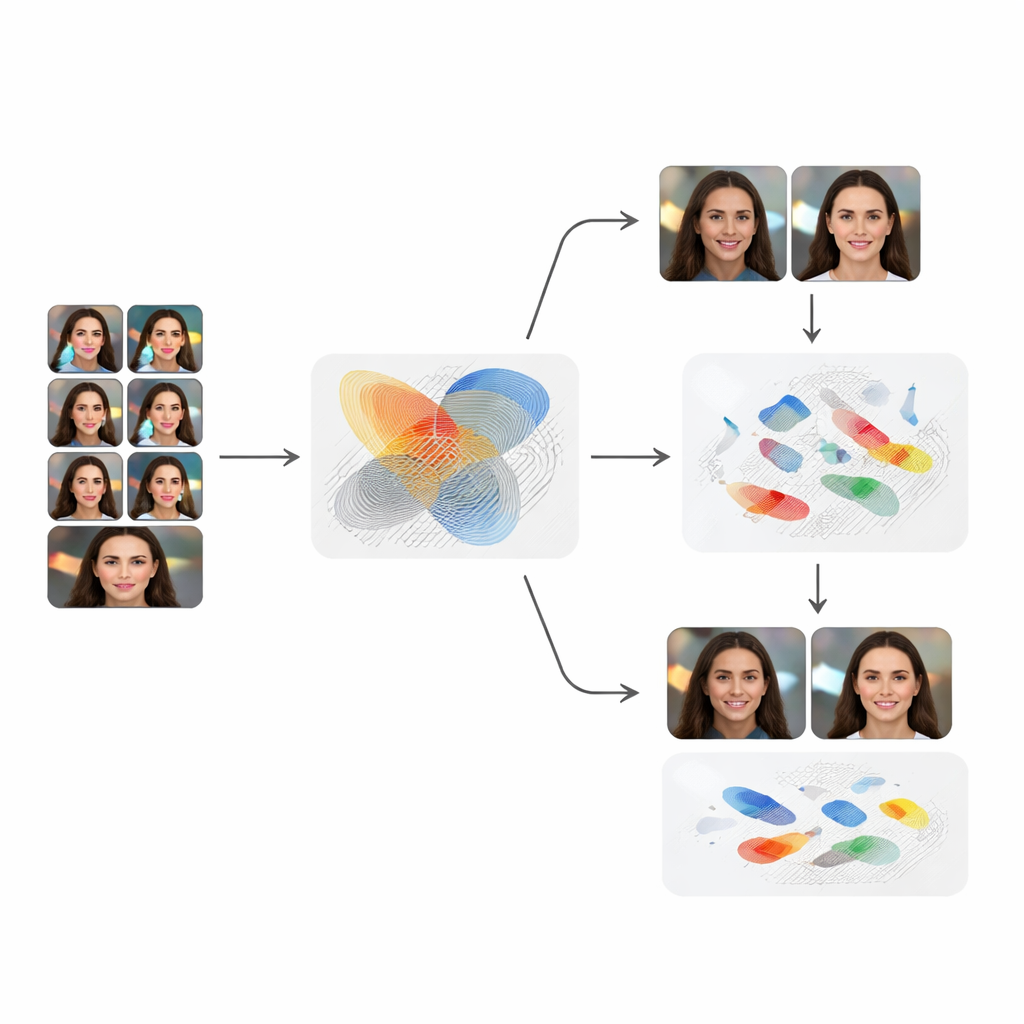

The first layer is an attention model that learns which parts of a video carry the most meaningful motion and detail over time. The team trains a compact network on thousands of everyday clips showing people performing actions. One part of the network examines each frame like a still photograph, while another looks at how things move across 16‑frame snippets. Together, they recognize actions with more than 97% accuracy on a standard action‑recognition benchmark, showing that the system has learned rich patterns of how people and scenes change over time. These patterns are then turned into attention maps that highlight the regions where tampering would most change the story the video tells.
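The learned attention network itself is not reproduced here. As a rough illustration of the underlying idea ("regions that change over time matter most"), the sketch below swaps in a simple hand-crafted proxy: per-pixel motion energy summed over a short snippet and scaled to the range 0 to 1. The function name `motion_attention` and the use of frame differences are assumptions for illustration, not the authors' model.

```python
def motion_attention(frames):
    """Per-pixel attention in [0, 1] from a clip of grayscale frames.

    frames: list of T >= 2 frames, each an H x W list of floats.
    Hypothetical proxy for the learned attention: summed absolute
    frame-to-frame change, divided by its peak value.
    """
    if len(frames) < 2:
        raise ValueError("need at least two frames to measure motion")
    h, w = len(frames[0]), len(frames[0][0])
    energy = [[0.0] * w for _ in range(h)]
    for prev, curr in zip(frames, frames[1:]):
        for i in range(h):
            for j in range(w):
                energy[i][j] += abs(curr[i][j] - prev[i][j])
    peak = max(max(row) for row in energy)
    if peak == 0.0:
        # A completely static clip: no region stands out.
        return [[0.0] * w for _ in range(h)]
    return [[e / peak for e in row] for row in energy]


# A 16-frame, 4 x 4 snippet in which only pixel (1, 2) flickers.
clip = []
for t in range(16):
    frame = [[10.0] * 4 for _ in range(4)]
    frame[1][2] = 10.0 + 5.0 * (t % 2)
    clip.append(frame)
attn = motion_attention(clip)
print(attn[1][2], attn[0][0])  # 1.0 0.0
```

A trained network can also weight static but meaningful detail such as faces, which a pure motion proxy like this one ignores.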

Hiding a secret mark where forgers will do the most damage

Next, an invisible digital mark, or watermark, is embedded in each frame, but not uniformly. A generative network creates a subtle, noise‑like pattern that is blended more strongly into the regions the attention model flags as important, such as faces or moving hands, and more weakly elsewhere to preserve visual quality. Viewers do not notice a difference, and image‑quality scores confirm that the marked frames are almost indistinguishable from the originals. Yet the pattern is strong and complex enough that a companion decoder network can later recover the hidden signature from genuine footage, frame by frame.
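To make "blended more strongly where attention is high" concrete, here is a minimal sketch that swaps the learned generator and decoder for a classic spread‑spectrum scheme: a secret key seeds a ±1 noise pattern, each pixel receives that pattern scaled by `base + gain * attention`, and a blind correlation detector checks for the pattern later. The names `keyed_pattern`, `embed`, `detect` and the values of `base` and `gain` are all hypothetical.

```python
import random


def keyed_pattern(h, w, key):
    """Hypothetical stand-in for the generative network: a keyed +/-1 noise pattern."""
    rng = random.Random(key)
    return [[rng.choice((-1.0, 1.0)) for _ in range(w)] for _ in range(h)]


def embed(frame, attention, pattern, base=0.5, gain=2.0):
    """Add the pattern with per-pixel strength base + gain * attention.

    base and gain are illustrative assumptions; clipping to the 0-255 pixel
    range is omitted to keep the sketch short.
    """
    return [
        [px + (base + gain * a) * p for px, a, p in zip(frow, arow, prow)]
        for frow, arow, prow in zip(frame, attention, pattern)
    ]


def detect(frame, pattern):
    """Blind correlation detector: mean-removed pixels correlated with the pattern."""
    flat = [px for row in frame for px in row]
    signs = [p for row in pattern for p in row]
    mean = sum(flat) / len(flat)
    return sum((x - mean) * p for x, p in zip(flat, signs)) / len(flat)


h = w = 32
frame = [[128.0] * w for _ in range(h)]           # flat grey test frame
attention = [[0.5] * w for _ in range(h)]         # uniform attention -> strength 1.5
pattern = keyed_pattern(h, w, key=42)
marked = embed(frame, attention, pattern)
print(detect(marked, pattern))                    # close to 1.5 (the embedding strength)
print(detect(marked, keyed_pattern(h, w, key=7))) # close to 0 (wrong key)
```

Only someone holding the key can regenerate the pattern, which is what lets the detector tell a protected frame from an unprotected one.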

Putting deepfakes and everyday distortions to the test

To see whether this protection holds up in the real world, the authors run a series of stress tests. They first watermark a diverse set of short stock videos and then feed them into DeepFaceLab, one of the most widely used face‑swapping tools, to create convincing deepfakes. In all 50 manipulated clips, the hidden mark is either destroyed or badly scrambled, and the system correctly flags the video as tampered. The method also stands up well to common processing steps that often occur when clips are shared online, such as heavy recompression, resizing, and blurring, though very strong random noise can eventually overwhelm the hidden signal. Ablation experiments, in which components are removed one at a time, show that both the attention guidance and the use of motion over time are crucial: removing either one makes the protection noticeably weaker.
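One way to picture the "flag as tampered" decision: if the decoder outputs a string of signature bits for each frame, a genuine clip should reproduce almost all of them, while a face swap leaves bits near chance. The sketch below averages per‑frame bit accuracy and compares it with a threshold. The functions, the 32‑bit signature, and the 0.9 threshold are illustrative assumptions, not the paper's decision rule.

```python
import random


def bit_accuracy(expected, recovered):
    """Fraction of signature bits the decoder recovered correctly."""
    if len(expected) != len(recovered):
        raise ValueError("signature lengths differ")
    return sum(e == r for e, r in zip(expected, recovered)) / len(expected)


def verdict(expected, recovered_per_frame, threshold=0.9):
    """Flag a clip as tampered when mean per-frame bit accuracy drops below threshold.

    The 0.9 threshold is an illustrative assumption, not a value from the paper.
    """
    if not recovered_per_frame:
        raise ValueError("no frames to check")
    scores = [bit_accuracy(expected, r) for r in recovered_per_frame]
    mean = sum(scores) / len(scores)
    return ("authentic" if mean >= threshold else "tampered"), mean


signature = [1, 0] * 16  # hypothetical 32-bit signature
# Genuine footage after mild processing: one bit flipped in each of 4 frames.
genuine = [[1 - signature[0]] + signature[1:] for _ in range(4)]
# Face-swapped footage: the decoder returns essentially random bits.
rng = random.Random(0)
swapped = [[rng.randint(0, 1) for _ in signature] for _ in range(4)]
print(verdict(signature, genuine))  # ('authentic', 0.96875)
print(verdict(signature, swapped))  # expected to score near chance (about 0.5)
```

Averaging over frames tolerates a few noisy frames; a stricter design could instead flag the clip if any single frame falls below the threshold.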

Locking in trust with a permanent fingerprint

The final layer goes beyond the content of the frames and secures the video file itself. After watermarking, the complete file is run through a cryptographic hash function, which produces a short digital fingerprint that changes if even a single byte of the file is altered. That fingerprint, along with basic information about the clip, is written to a blockchain ledger, where it cannot be altered without leaving a trace. Later, anyone can upload a copy of the video: the system tries to recover the watermark and also recomputes the fingerprint. If both the hidden mark and the fingerprint match the original records, the video can be treated as authentic with high confidence; if either check fails, viewers know the copy is not the untouched original.
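The two‑part check can be sketched in a few lines. This version assumes SHA‑256 as the fingerprint function and replaces the blockchain with a hypothetical in‑memory, hash‑chained list (`ToyLedger`), which shows the tamper‑evidence property but none of a real blockchain's distribution or consensus. The watermark result is passed in as a simple `watermark_ok` flag; all names and the record fields are illustrative.

```python
import hashlib
import json


def fingerprint(data: bytes) -> str:
    """SHA-256 digest of the whole file (the choice of hash is an assumption)."""
    return hashlib.sha256(data).hexdigest()


class ToyLedger:
    """Hypothetical append-only, hash-chained list standing in for a blockchain."""

    GENESIS = "0" * 64

    def __init__(self):
        self.blocks = []

    @staticmethod
    def _block_hash(prev: str, record: dict) -> str:
        body = json.dumps({"prev": prev, "record": record}, sort_keys=True)
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def append(self, record: dict) -> None:
        prev = self.blocks[-1]["hash"] if self.blocks else self.GENESIS
        self.blocks.append({"prev": prev, "record": record,
                            "hash": self._block_hash(prev, record)})

    def is_intact(self) -> bool:
        """Editing a record changes its recomputed hash; rewriting that hash
        breaks the next block's prev link."""
        prev = self.GENESIS
        for block in self.blocks:
            if block["prev"] != prev or block["hash"] != self._block_hash(prev, block["record"]):
                return False
            prev = block["hash"]
        return True

    def find(self, video_id: str):
        for block in self.blocks:
            if block["record"]["video_id"] == video_id:
                return block["record"]
        return None


def verify(ledger: ToyLedger, video_id: str, data: bytes, watermark_ok: bool) -> bool:
    """Authentic only if the ledger is intact, the fingerprint matches, and the watermark decodes."""
    record = ledger.find(video_id)
    if record is None or not ledger.is_intact():
        return False
    return watermark_ok and fingerprint(data) == record["sha256"]


ledger = ToyLedger()
original = b"stand-in for the watermarked video file"
ledger.append({"video_id": "clip-001", "sha256": fingerprint(original), "frames": 300})
print(verify(ledger, "clip-001", original, watermark_ok=True))         # True
print(verify(ledger, "clip-001", original + b"!", watermark_ok=True))  # False
print(verify(ledger, "clip-001", original, watermark_ok=False))        # False
```

Because the fingerprint covers the exact bytes, any re‑encoded copy fails the hash check even if its frames look identical; the watermark is the part of the design that can survive such processing.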

What this means for the videos you see

In plain terms, this work shows that we can shift from guessing whether a video is fake to proving that a video is real. By quietly weaving an intelligent, hard‑to‑forge mark into the most meaningful parts of each frame and backing it up with a tamper‑proof ledger entry, the framework flags all 50 tested face‑swap clips and survives many everyday distortions. While it still struggles under extreme visual noise and needs broader testing, it points toward a future where cameras, platforms, and newsrooms can ship videos with built‑in proof of authenticity, making it much harder for deepfakes to pass as the truth.

Citation: Hajjej, F., Hamid, M. & Alluhaidan, A.S. An integrated framework for proactive deepfake mitigation via attention-driven watermarking and blockchain-based authenticity verification. Sci Rep 16, 9545 (2026). https://doi.org/10.1038/s41598-026-40166-6

Keywords: deepfake protection, video authenticity, digital watermarking, blockchain verification, media security