Clear Sky Science

Characterizing stroke-affected speech using F0 and duration-based features

Why stroke changes the sound of a voice

When a person has a stroke, doctors focus first on saving brain tissue and restoring movement. But one of the most personal losses often comes later: the clear, familiar sound of their own voice. This study asks a simple but powerful question: can we measure those changes in speech in a way that helps us better detect, understand, and eventually monitor stroke-related damage?

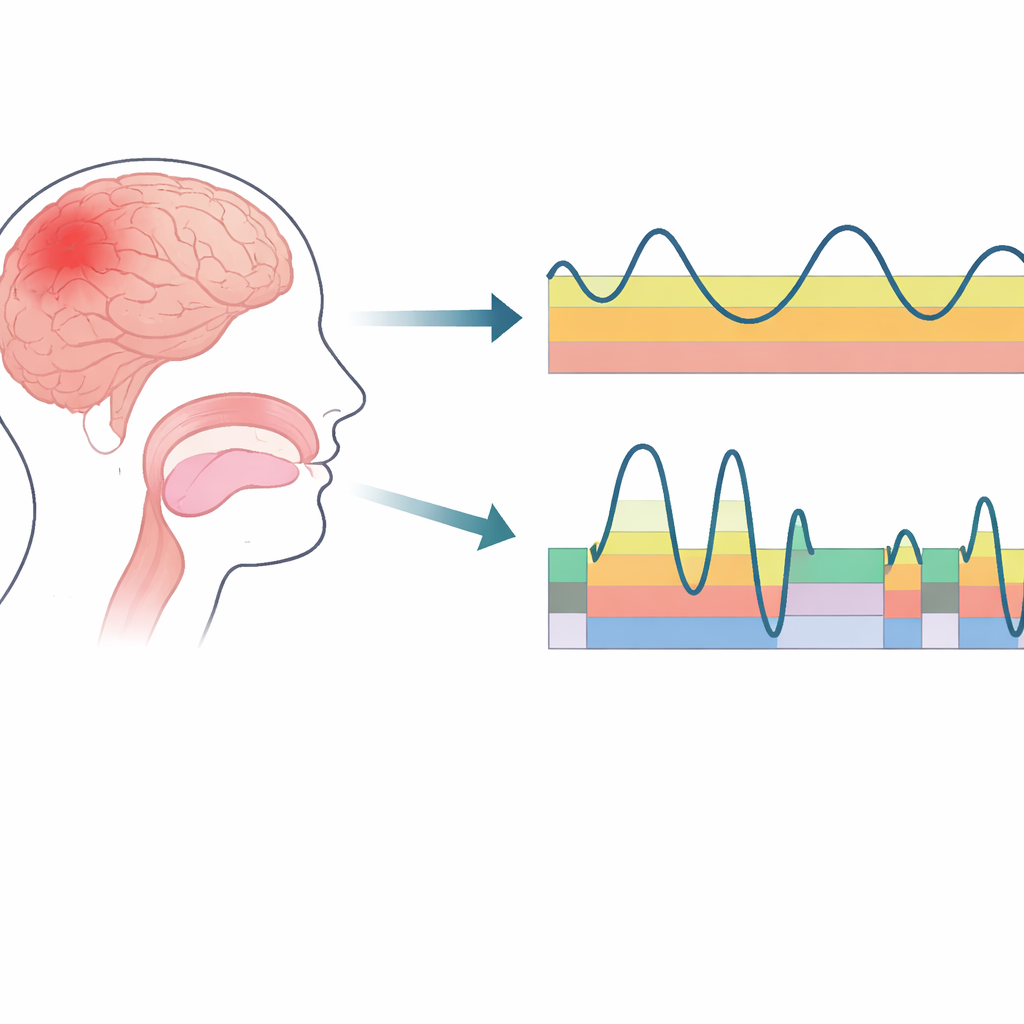

Listening to pitch and timing, not just words

Our ears do more than decode words; they track the musical “shape” and rhythm of speech, known as prosody. Two basic ingredients shape this sound pattern: pitch (how high or low the voice is) and timing (how long parts of sounds last and how quickly we move from one sound to another). The researchers focused on these two elements to see how speech from people who had experienced a stroke differs from that of healthy speakers. To do this, they built a dedicated speech database in a hospital ward in India, recording five sustained vowel sounds and short three-word sentences from 50 stroke patients and 50 healthy volunteers who spoke Telugu as their first language.

Capturing the voice’s hidden music

To track pitch, the team used a fine-grained method that follows the tiny, rapid vibrations of the vocal folds cycle by cycle, rather than averaging over several cycles. This allowed them to build a detailed contour of how pitch moves over time, even in the noisy environment of a busy hospital. From these contours, they measured simple statistics such as the average pitch, the middle (median) pitch, and how much the pitch wobbled around that center. When they compared stroke patients with healthy speakers, a striking pattern emerged that depended on gender: male stroke patients tended to speak with a slightly higher typical pitch than healthy men, while female stroke patients tended to speak with a clearly lower typical pitch than healthy women. These differences were strong enough to show up both in the full dataset and in a carefully age-matched subgroup.
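For readers curious how cycle-by-cycle pitch turns into the statistics mentioned above, here is a minimal Python sketch. It assumes the instants at which each vocal-fold cycle begins (often called glottal epochs) have already been located by some epoch-detection step that is not shown. The function names and the 50 to 500 Hz plausibility bounds are illustrative choices, not the study's published settings. Each cycle's pitch is simply the inverse of that cycle's duration, and the mean, median, and standard deviation then summarize the contour.

```python
import numpy as np


def f0_from_epochs(epoch_samples, fs, f0_min=50.0, f0_max=500.0):
    """Cycle-by-cycle F0 contour (Hz) from glottal epoch locations.

    epoch_samples: strictly increasing sample indices, one per vocal-fold cycle,
        assumed to come from a separate epoch-detection step (not shown).
    fs: sampling rate in Hz.
    f0_min, f0_max: illustrative plausibility bounds (an assumption); cycles
        outside them are treated as detection errors and dropped.
    """
    epochs = np.asarray(epoch_samples, dtype=float)
    periods = np.diff(epochs) / fs   # length of each glottal cycle in seconds
    f0 = 1.0 / periods               # one pitch value per cycle, no averaging
    return f0[(f0 >= f0_min) & (f0 <= f0_max)]


def f0_summary(f0):
    """Typical pitch (mean, median) and its spread (standard deviation)."""
    f0 = np.asarray(f0, dtype=float)
    return {
        "mean": float(np.mean(f0)),
        "median": float(np.median(f0)),
        "std": float(np.std(f0)),
    }
```

For example, epochs 100 samples apart in a 16 kHz recording mark cycles lasting 6.25 ms, which is a pitch of 160 Hz. Reporting the median alongside the mean keeps a few mis-detected cycles from pulling the "typical" pitch up or down.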

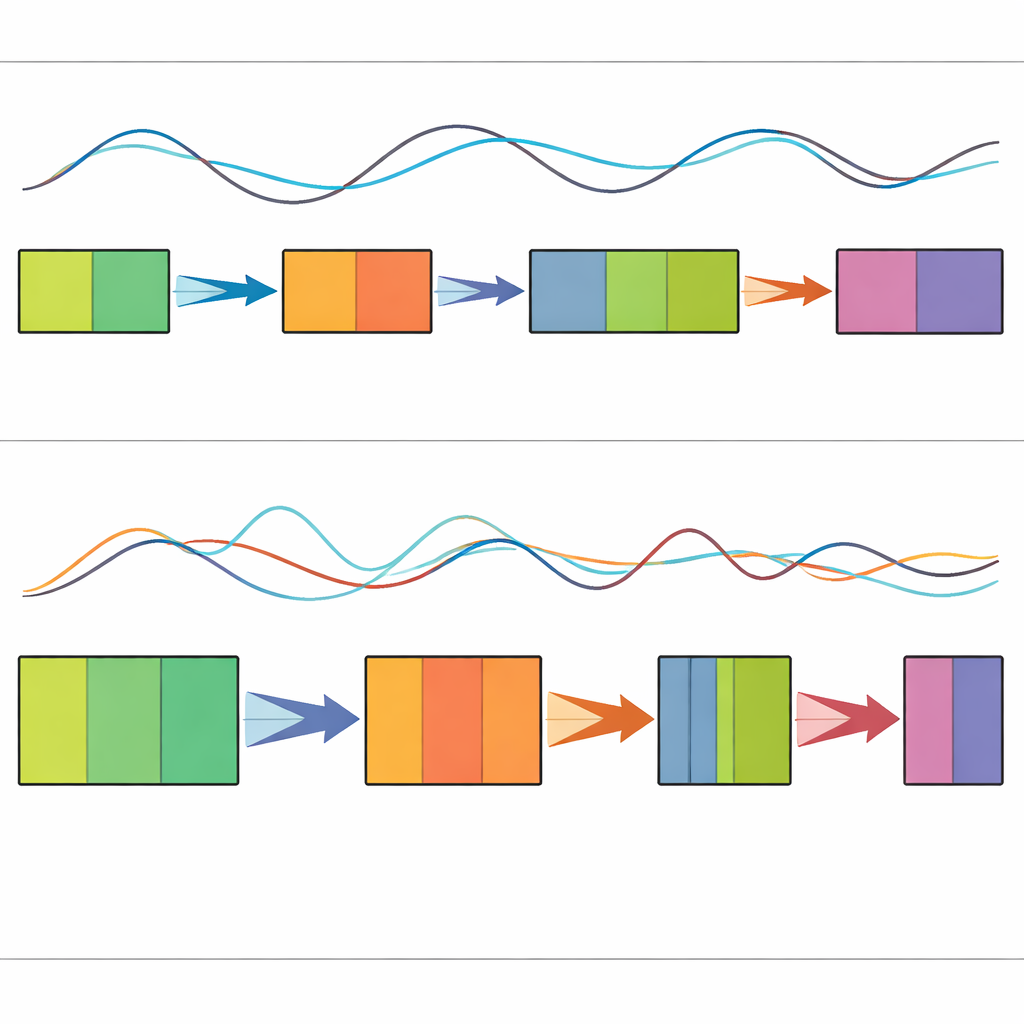

Timing the glide between sounds

Speech is not just a stream of steady notes. Our mouths glide from one sound to the next, passing through brief “transition” regions where the shape of the vocal tract changes quickly, and “steady” regions where a single sound is held more or less constant. The researchers developed automatic measures that identify these two types of regions by tracking how rapidly the acoustic fingerprint of the voice shifts from moment to moment. In healthy speakers, transitions and steady segments are relatively balanced. In stroke patients, however, the pattern changed: the transitions between sounds were shorter overall, but the changes during those brief moments were more abrupt, while the steady portions in between became noticeably longer.
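The idea of separating fast-changing "transition" regions from "steady" regions can be sketched in Python as follows. This is an illustration under stated assumptions, not the researchers' algorithm: it uses log-magnitude spectra of short overlapping frames as the voice's "acoustic fingerprint," takes the distance between consecutive frames as the rate of change, and labels anything above a chosen threshold as a transition. The 25 ms frames, 10 ms hop, threshold, and all function names are hypothetical.

```python
import numpy as np


def frame_spectra(signal, fs, frame_ms=25.0, hop_ms=10.0):
    """Log-magnitude spectra of Hann-windowed, overlapping frames (one row per frame)."""
    x = np.asarray(signal, dtype=float)
    frame = int(round(fs * frame_ms / 1000))
    hop = int(round(fs * hop_ms / 1000))
    if len(x) < frame:
        raise ValueError("signal is shorter than one analysis frame")
    n_frames = 1 + (len(x) - frame) // hop
    window = np.hanning(frame)
    frames = np.stack([x[i * hop:i * hop + frame] * window for i in range(n_frames)])
    # Small constant avoids log(0) in silent frequency bins.
    return np.log(np.abs(np.fft.rfft(frames, axis=1)) + 1e-10)


def spectral_change_rate(spectra):
    """Euclidean distance between each pair of consecutive frame spectra."""
    return np.linalg.norm(np.diff(spectra, axis=0), axis=1)


def label_transitions(rate, threshold):
    """True where the spectrum changes faster than `threshold` (transition), else steady."""
    return np.asarray(rate) > threshold


def segment_durations(labels, hop_s):
    """Durations in seconds of each run of transition labels and of steady labels.

    Each label covers one hop between frames, so run length * hop_s
    approximates the run's duration.
    """
    labels = np.asarray(labels, dtype=bool)
    if labels.size == 0:
        return [], []
    # A run boundary is wherever the label flips.
    boundaries = np.flatnonzero(labels[1:] != labels[:-1]) + 1
    starts = np.concatenate(([0], boundaries))
    ends = np.concatenate((boundaries, [labels.size]))
    transitions, steady = [], []
    for start, end in zip(starts, ends):
        target = transitions if labels[start] else steady
        target.append((end - start) * hop_s)
    return transitions, steady


def transition_abruptness(rate, labels):
    """Mean rate of spectral change inside transition regions (nan if there are none)."""
    rate = np.asarray(rate, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    return float(rate[labels].mean()) if labels.any() else float("nan")
```

In practice the threshold would need tuning, for instance relative to each recording's typical rate of change. Under this sketch, the pattern the study reports for stroke patients would appear as shorter transition runs with a higher `transition_abruptness`, together with longer steady runs.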

What the patterns reveal about slurred speech

Putting these findings together paints a picture of how stroke reshapes speaking. Many patients live with weakness or partial paralysis on one side of the body, which can make it harder to smoothly control the muscles of the lips, tongue, and jaw. The study’s results suggest that, instead of gently gliding between sounds, articulators may remain in one position a bit too long and then shift more suddenly, creating shorter, more intense transitions and extended steady stretches. These longer steady regions line up well with what listeners describe as “slurred” or drawn-out speech.

From careful listening to clinical tools

To a lay listener, the main conclusion is this: stroke does not just weaken speech; it leaves a measurable fingerprint on the voice's pitch and rhythm. Male and female patients show opposite shifts in typical pitch, and the stroke patients in the study, as a group, tended to have shorter, sharper transitions between sounds and longer held portions in between. Because these patterns can be captured with simple numerical features, they could power future computer-based tools that help clinicians detect stroke-related speech problems earlier, track recovery over time, and possibly even estimate stroke severity from voice alone. In short, by turning careful listening into data, this research takes a step toward making the sound of a person's voice a practical window into their brain health.

Citation: Jyothi, M.V.S., Banerjee, O., Govind, D. et al. Characterizing stroke-affected speech using F0 and duration-based features. Sci Rep 16, 9146 (2026). https://doi.org/10.1038/s41598-026-40155-9

Keywords: stroke speech, dysarthria, voice analysis, speech prosody, clinical speech database