Clear Sky Science

Ensemble machine learning strategies for mineral prospectivity mapping under data scarcity

Finding Ore With Fewer Clues

Modern society depends on metals like lead and zinc for batteries, electronics, and infrastructure, yet the easiest deposits have already been found. In new regions, geologists often have only a handful of confirmed mineral discoveries, scattered chemical samples, and patchy maps to guide them. This study shows how to use machine learning not to chase the highest possible score on past data, but to deliver predictions that decision‑makers can actually trust when information is scarce.

Why Data Are Thin in the Real World

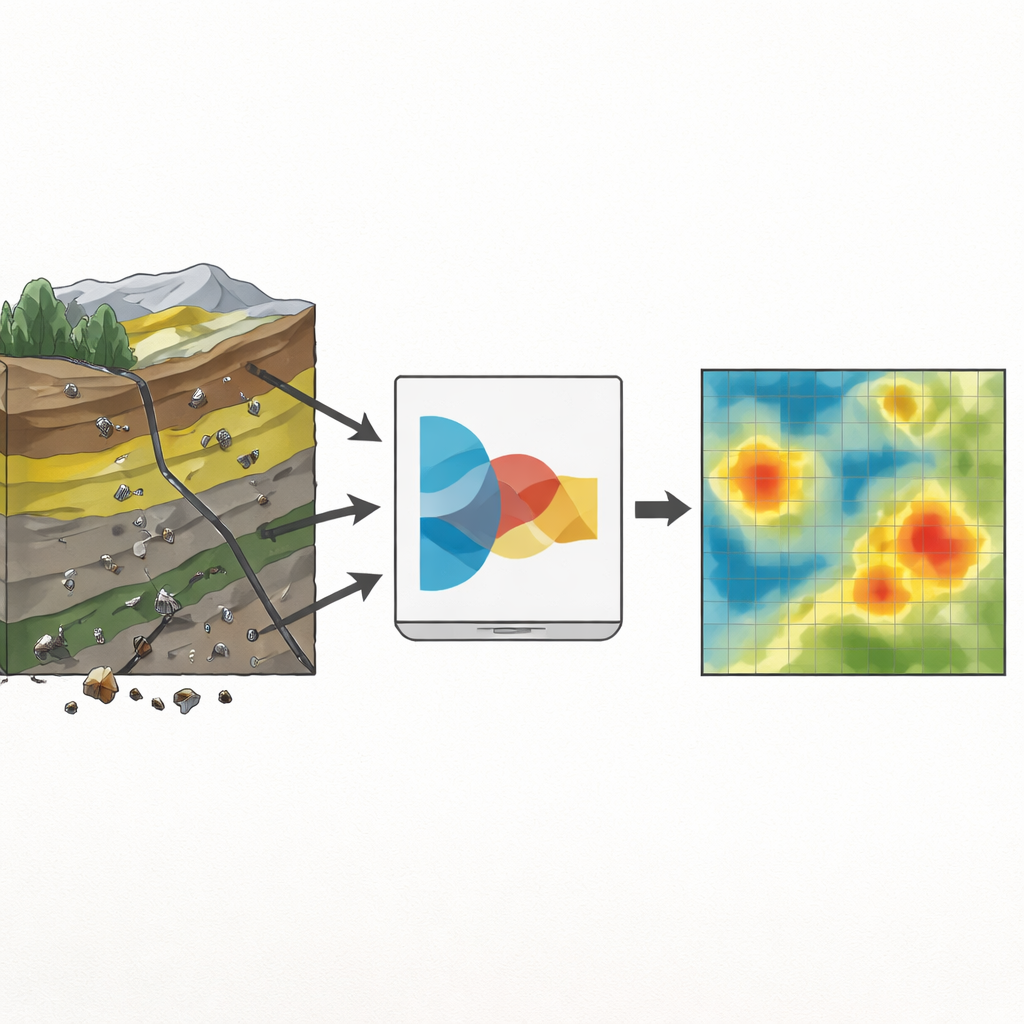

Mineral prospectivity mapping aims to highlight parts of a landscape that are more likely to contain ore. It combines layers of information, such as rock types, faults, satellite images, and stream‑sediment chemistry, into a probability map that guides field work and drilling. In early‑stage projects, however, only a few deposits are known and many parts of the map have never been sampled. Standard machine learning tools thrive on large, well‑labeled datasets; when faced with only a few dozen positive examples, they can become unstable and overconfident, giving numbers that look precise but are poorly tied to reality.

Turning Sparse Clues Into Usable Signals

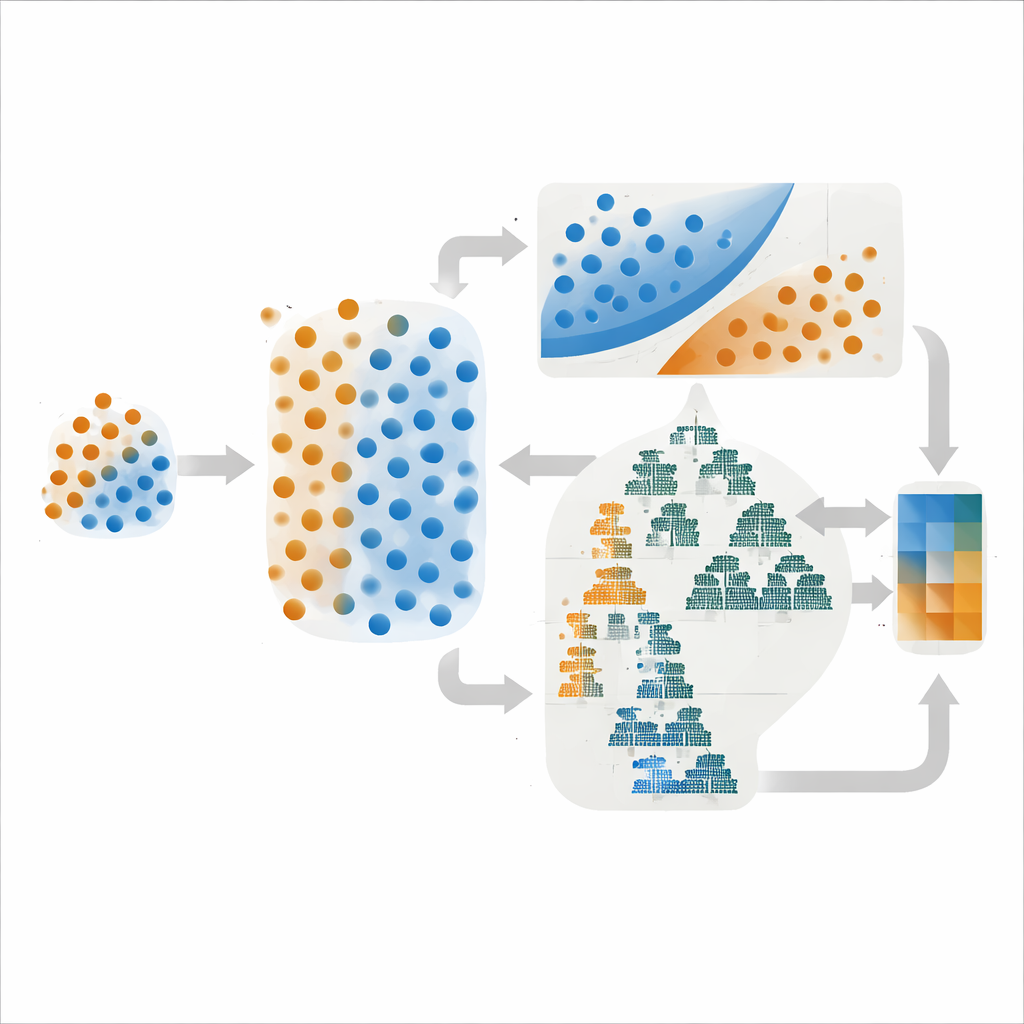

The authors worked in the Dehaq lead–zinc district of central Iran, a region where mineralization is tied to specific limestone layers, faults, and zones of chemical alteration. They built digital maps of host rocks, fracture density, and alteration from geological surveys and satellite images, and extracted geochemical anomalies from 624 sediment samples. From this rich but uneven evidence, they distilled just 108 labeled locations: 27 with known deposits and 81 without. To avoid the majority class overwhelming the few ore examples, they used a technique that creates realistic synthetic deposit points by interpolating between existing ones, evening up the classes only within the training data. This provided a more balanced set of examples while keeping separate validation and test sets that mirror real‑world rarity.
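The interpolation step described above resembles SMOTE-style oversampling. The summary does not name the exact algorithm, so the following is only a minimal sketch under that assumption. The function `oversample_minority`, the neighbor count `k`, and all feature values are hypothetical and are not taken from the study.

```python
import math
import random


def oversample_minority(minority, n_new, k=3, seed=0):
    """Create n_new synthetic points by interpolating between a randomly
    chosen minority point and one of its k nearest minority neighbors."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Rank the other minority points by Euclidean distance to the base.
        others = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )
        neighbor = rng.choice(others[:k])
        # Pick a random position on the segment between base and neighbor.
        t = rng.random()
        synthetic.append(tuple(b + t * (n - b) for b, n in zip(base, neighbor)))
    return synthetic


# Hypothetical training split: 4 deposit points and 12 barren points,
# each described by two made-up evidence-layer values.
train_deposits = [(0.9, 0.8), (0.8, 0.9), (0.7, 0.7), (0.95, 0.6)]
train_barren = [(0.04 * i, 0.03 * i) for i in range(12)]

# Balance the classes inside the training split only. Validation and test
# splits are left untouched so they keep their real-world class ratio.
new_points = oversample_minority(
    train_deposits, n_new=len(train_barren) - len(train_deposits)
)
balanced_deposits = train_deposits + new_points
```

Because every synthetic point lies on a segment between two real deposit points, it stays inside the range of values already observed for deposits, which is what keeps the added examples plausible.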

Building Teams of Models Instead of One Hero

Rather than rely on a single algorithm, the study paired methods with different strengths. One ensemble combined a support vector machine, which seeks the boundary that separates the classes with the widest possible margin, with a simple probabilistic model called Gaussian Naive Bayes. The other blended two tree‑based methods, LightGBM and AdaBoost, that excel at capturing complex patterns in many variables. In both cases, the final prediction was an average of the component models’ probability estimates, a strategy that often reduces wild swings in performance. Crucially, the authors compared not only how often these models were right, but also how well their predicted probabilities matched reality, a property known as calibration.
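Averaging the component models' probability estimates is often called soft voting. The sketch below shows only the averaging step. The helper `soft_vote` and the probability values are hypothetical stand-ins for the outputs of two fitted models.

```python
def soft_vote(*model_probs):
    """Average per-sample probabilities from several models (soft voting)."""
    n_models = len(model_probs)
    return [sum(sample) / n_models for sample in zip(*model_probs)]


# Hypothetical deposit probabilities for five map cells from two models.
svm_probs = [0.92, 0.10, 0.55, 0.30, 0.80]
gnb_probs = [0.99, 0.01, 0.35, 0.60, 0.70]

# Where the two models disagree (cells 3 and 4), the average is pulled
# toward the middle, which damps the swings of either model alone.
ensemble_probs = soft_vote(svm_probs, gnb_probs)
```

In scikit-learn, `VotingClassifier(voting="soft")` performs the same averaging over fitted estimators. Whether the authors used that class is not stated in this summary.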

Tuning for Trust, Not Just for Score

Choosing the settings of a model—how strongly it penalizes errors, how many trees it grows, and so on—can dramatically change its behavior. The team tested three common tuning strategies: Grid Search, which systematically scans a fixed menu of options; Random Search, which samples combinations at random; and Bayesian Optimization, which uses previous trials to guess promising new ones. On paper, Bayesian Optimization delivered the single highest discrimination score (an ROC–AUC of 0.95) for the support‑vector‑based ensemble. Yet when the authors examined calibration curves, which compare predicted probabilities with actual outcomes, the Grid Search versions of both ensembles produced smoother, more stable results, especially in the middle‑probability range where exploration cutoffs are usually set.
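A calibration curve of the kind described above can be built by grouping predictions into probability bins and comparing each bin's average prediction with the fraction of true deposits in it. The following is a minimal sketch with equal-width bins. The function name, the bin count, and the labels and probabilities are illustrative assumptions, not the study's data.

```python
def calibration_curve(y_true, y_prob, n_bins=5):
    """Return (mean predicted probability, observed positive rate, count)
    for each non-empty, equal-width probability bin."""
    bins = [[] for _ in range(n_bins)]
    for label, prob in zip(y_true, y_prob):
        # A probability of exactly 1.0 is placed in the last bin.
        idx = min(int(prob * n_bins), n_bins - 1)
        bins[idx].append((label, prob))
    curve = []
    for members in bins:
        if members:
            mean_pred = sum(p for _, p in members) / len(members)
            observed = sum(y for y, _ in members) / len(members)
            curve.append((mean_pred, observed, len(members)))
    return curve


# Hypothetical test labels (1 = deposit) and predicted probabilities.
y_true = [0, 0, 0, 1, 0, 1, 1, 0, 1, 1]
y_prob = [0.05, 0.15, 0.25, 0.35, 0.45, 0.55, 0.65, 0.75, 0.85, 0.95]

curve = calibration_curve(y_true, y_prob)
for mean_pred, observed, count in curve:
    print(f"predicted {mean_pred:.2f}  observed {observed:.2f}  n={count}")
```

For a well-calibrated model the two columns track each other. With only a few dozen positives, each bin holds very few points, so the curve is noisy, and that is one reason stability in the middle-probability range is hard to achieve.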

From Numbers to Field Decisions

For early exploration, where every drill hole is expensive, the authors argue that well‑behaved probabilities matter more than squeezing out a tiny gain in accuracy. Their most practical recommendation is the simpler support‑vector‑plus‑Bayes ensemble tuned by Grid Search. It achieves strong discrimination while offering the most reliable link between probability values and real discovery rates, which allows geologists to set thresholds that match their risk tolerance. As projects mature and more data accumulate, more complex tree‑based models such as the LightGBM–AdaBoost ensemble can be introduced to refine predictions, but always with an eye on calibration. In this way, machine learning becomes not a black‑box score generator, but a transparent partner in making risk‑aware decisions about where to look for the next generation of mineral resources.
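To illustrate why calibrated probabilities make thresholds meaningful, the sketch below selects map cells above a cutoff and reports the hit rate one would expect if the probabilities were perfectly calibrated. The cell names, probabilities, and the helper `select_targets` are hypothetical. The study's actual thresholds are not given in this summary.

```python
def select_targets(cell_probs, threshold):
    """Return the cells at or above the threshold and their expected hit rate.

    The expected hit rate is the mean predicted probability of the selected
    cells, which is only meaningful if the probabilities are calibrated.
    """
    selected = {cell: p for cell, p in cell_probs.items() if p >= threshold}
    if not selected:
        return selected, 0.0
    return selected, sum(selected.values()) / len(selected)


# Hypothetical calibrated deposit probabilities for five map cells.
cell_probs = {"A1": 0.9, "A2": 0.7, "B1": 0.5, "B2": 0.3, "C1": 0.1}

# A risk-averse team drills fewer cells with a higher expected hit rate.
cautious, cautious_rate = select_targets(cell_probs, threshold=0.7)

# A team willing to accept more misses covers more ground.
broad, broad_rate = select_targets(cell_probs, threshold=0.3)
```

Here the cautious cutoff keeps two cells with an expected hit rate of about 0.8, while the broad cutoff keeps four cells at about 0.6. If the model were overconfident, those expected rates would overstate the real discovery rate, and the trade-off could not be read off the map.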

Citation: Amirajlo, P., Hassani, H., Pour, A.B. et al. Ensemble machine learning strategies for mineral prospectivity mapping under data scarcity. Sci Rep 16, 9171 (2026). https://doi.org/10.1038/s41598-026-40125-1

Keywords: mineral prospectivity mapping, ensemble machine learning, data scarcity, model calibration, mineral exploration