Clear Sky Science

The impact of generative AI on social media: an experimental study

Why this matters for your online life

Every day, more of what we read and write on social media is quietly shaped by artificial intelligence. This study asks a question that affects anyone who posts, comments, or scrolls: when AI helps people write, does it make conversations better, or just louder? By recreating a social-media-style discussion space with hundreds of everyday users, the researchers show that AI tools can draw more people into the conversation, but may also make those conversations feel more generic, less trustworthy, and less human.

Setting up a realistic online conversation

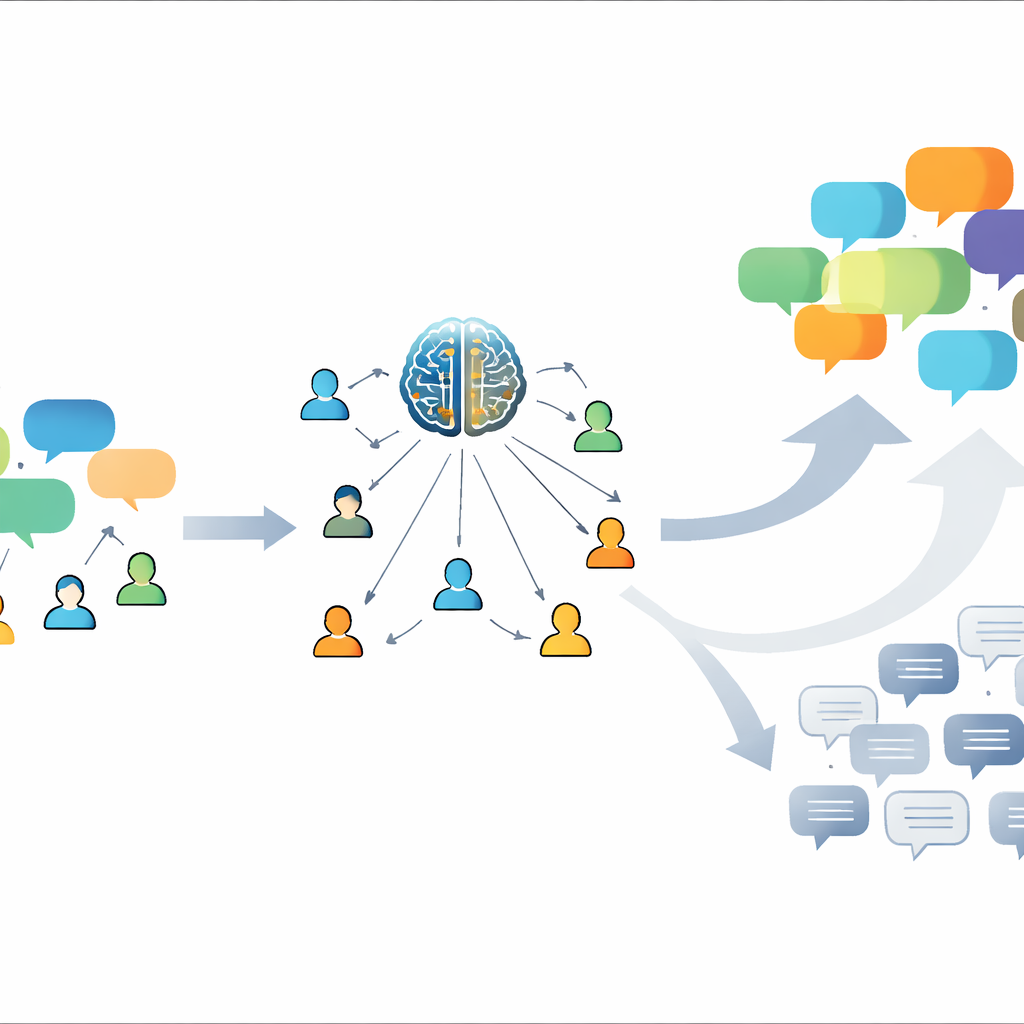

To probe these questions, the team built its own discussion platform modeled on popular forum sites. Six hundred eighty adults from across the United States were placed into small groups of five and asked to debate three kinds of topics: a light one (cats versus dogs), a science-related one (health benefits of oats), and a political one (universal basic income). Some groups had no technological help at all. Others used one of four different AI tools: an open chat assistant, short AI-written conversation starters, suggestions for replies, or feedback on drafts people had written themselves. This setup let the researchers compare how people behaved and how they felt about the discussion with and without AI support.

More voices and longer posts, but mixed feelings

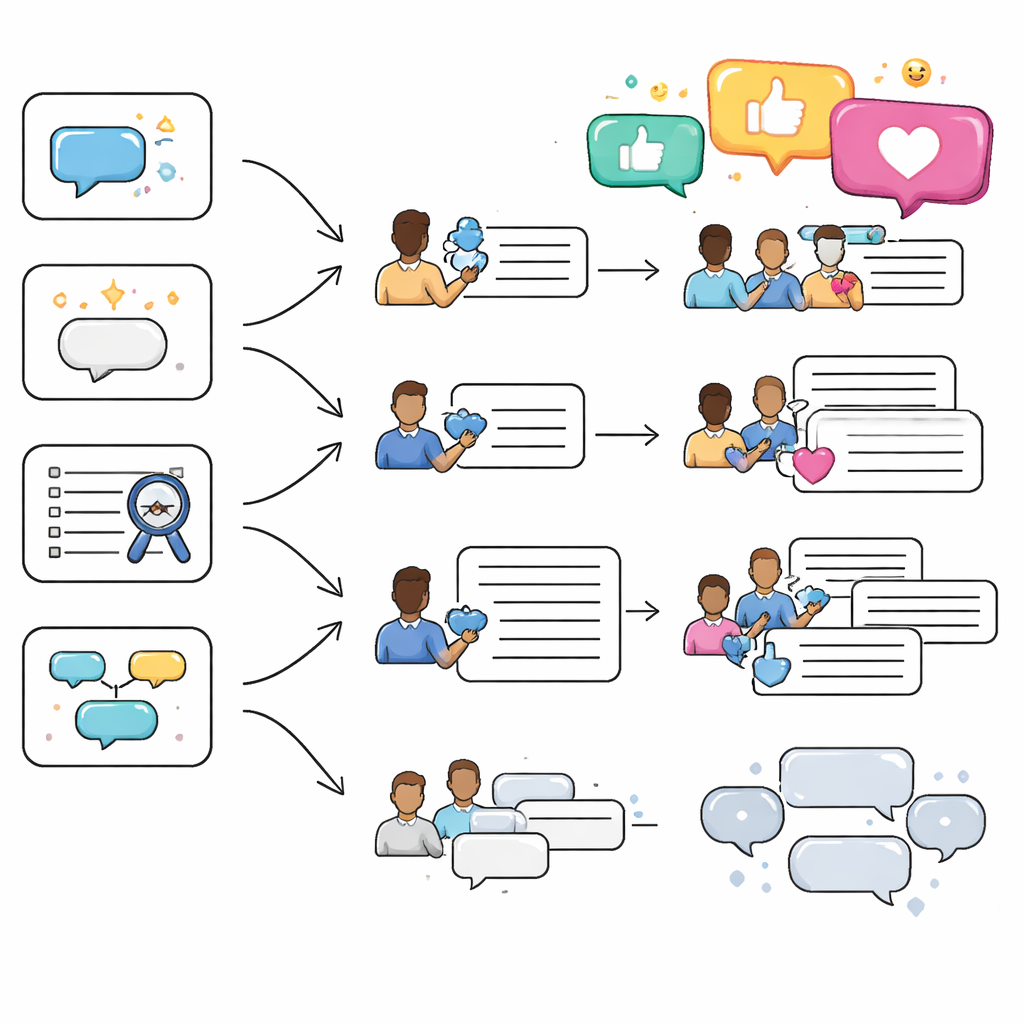

On one level, the AI tools did exactly what designers might hope. Participants with AI assistance wrote more and longer comments than those in the control groups. Some tools also encouraged a wider spread of participation, so that discussion was less dominated by just one or two people. For example, AI-generated opening prompts helped quieter users jump into the conversation, and the chat assistant made people feel more willing to post by supplying ideas, facts, or phrasing when they were unsure what to say.

When helpful turns into hollow

Yet when the same conversations were judged from the reader’s point of view, the picture changed. Across most AI conditions, people rated what they saw as less informative and lower quality than in human-only discussions. They reacted with more “dislikes” and described many AI-influenced comments as “robotic” or “generic.” While reply suggestions were sometimes appreciated, other tools made people feel that the tone of the discussion had become less authentic. Even those who did not use the AI themselves could feel its presence once it entered a thread, as it nudged the overall style of talk toward longer but less meaningful responses—a kind of “semantic garbage” that clutters the space without adding much substance.

How people actually used the tools

Looking closely at behavior revealed that participants did not treat all AI help the same way. The chat assistant was widely used, especially for fact-checking the science topic and for exploring arguments in the political one. Feedback on drafts was embraced when the stakes felt higher, such as when discussing health or political issues, and often led people to strengthen structure and arguments. Conversation starters lowered the barrier to getting involved but were just as often ignored when they did not match a user’s intent. Reply suggestions were used moderately, and on tense topics people strongly preferred suggestions that agreed rather than disagreed, hinting that AI might gently steer discussions toward safer, less confrontational ground.

Design lessons for a more human online future

From these experiments, the authors argue that the way forward is not to reject AI in social media, but to design it more carefully. People liked having AI as an optional helper, especially for brainstorming, checking information, and overcoming “writer’s block,” but they wanted tools that felt more personal and better tuned to the topic and to their own voice. The researchers recommend clear labeling when text is copied directly from AI, smarter personalization that adapts to each user, context-aware behavior that shifts tone between casual, scientific, and political talk, and simple, familiar interfaces. Without such guardrails, they warn, social platforms risk filling public spaces with smooth but shallow chatter that erodes trust. With them, AI could instead lower barriers to participation and support more inclusive, thoughtful conversations that still sound, and feel, like they come from real people.

Citation: Møller, A.G., Romero, D.M., Jurgens, D. et al. The impact of generative AI on social media: an experimental study. Sci Rep 16, 9376 (2026). https://doi.org/10.1038/s41598-026-40110-8

Keywords: social media, generative AI, online discussion, authenticity, human-computer interaction