Clear Sky Science

Chrysanthemum classification via color space fusion transformer

Why a flower’s hometown and color really matter

Chrysanthemums are more than pretty flowers for autumn bouquets. In China, they are also a classic herbal remedy and a valuable crop, but their healing properties and market price depend strongly on the plant’s variety and where it was grown. Today, telling one medicinal chrysanthemum from another often demands expert eyes, chemical tests, or genetic analysis—approaches that are slow, expensive, and hard to use in the field. This study introduces a camera‑based method that lets a computer sort chrysanthemums quickly and accurately, simply from images, by looking very carefully at color in a new way.

Seeing flowers the way a camera does

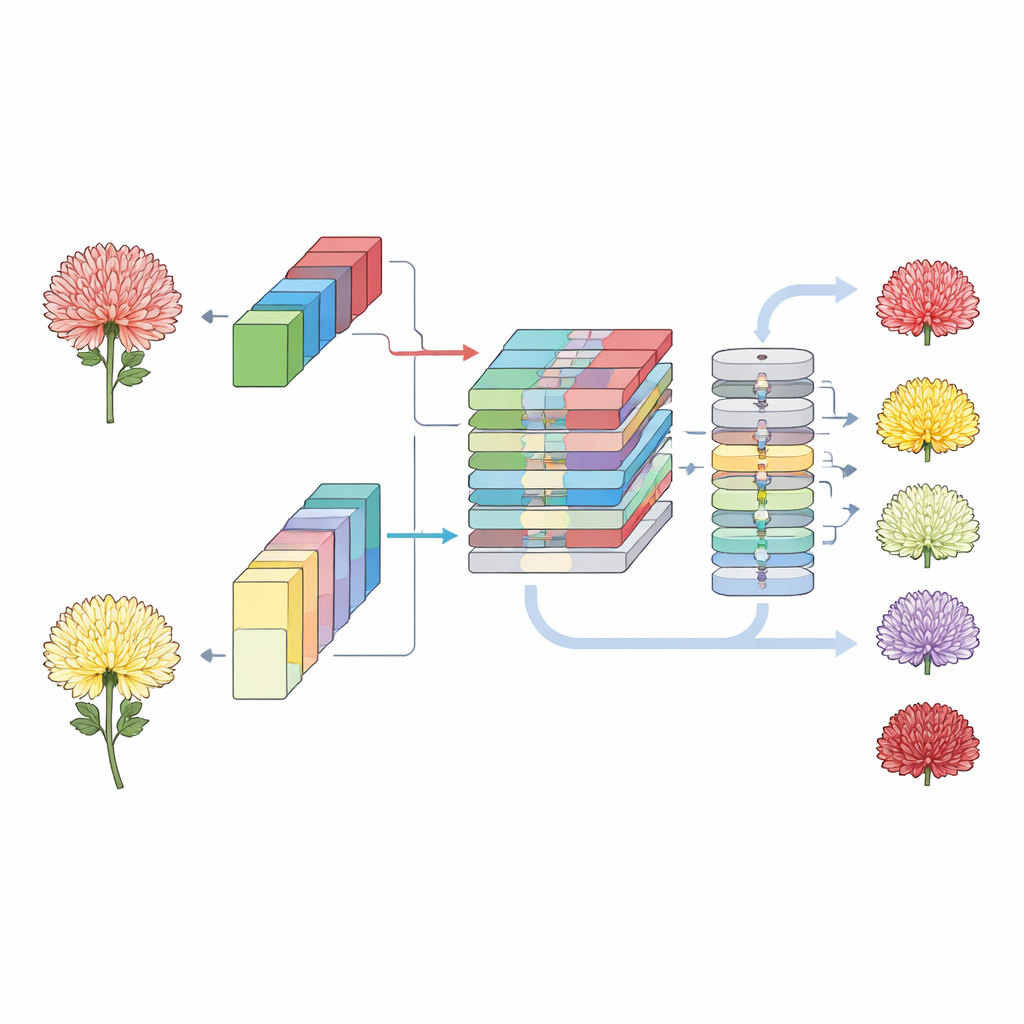

Most digital photos store color as mixtures of red, green, and blue (RGB). That works well for display, but it does not always match how humans perceive brightness and subtle color shifts, especially when lighting changes. The authors take the usual RGB images of chrysanthemum flower heads (especially the backs of the blossoms, which carry rich structural and color clues) and convert them into a second color system known as CIELAB, or LAB for short. In LAB, one channel (L) tracks light versus dark, while the other two (a and b) describe how colors differ along reddish–greenish and yellowish–bluish axes. By working in both systems at once, the method can keep the fine detail of the original photo while also capturing more stable, human‑like color differences between similar flowers.
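For readers who want to see what the RGB-to-LAB step involves, here is a minimal sketch of the standard conversion for a single pixel. The article does not say which conversion routine or reference white the authors used, so the sRGB encoding and the D65 white point below are assumptions, and the function name `srgb_to_lab` is purely illustrative.

```python
def srgb_to_lab(r, g, b):
    """Convert one 8-bit sRGB pixel to CIELAB (assumes a D65 reference white)."""

    def to_linear(channel):
        # Undo the sRGB gamma curve so values are proportional to light intensity.
        c = channel / 255.0
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    rl, gl, bl = to_linear(r), to_linear(g), to_linear(b)

    # Linear RGB -> CIE XYZ with the standard sRGB/D65 matrix.
    x = 0.4124564 * rl + 0.3575761 * gl + 0.1804375 * bl
    y = 0.2126729 * rl + 0.7151522 * gl + 0.0721750 * bl
    z = 0.0193339 * rl + 0.1191920 * gl + 0.9503041 * bl

    def f(t):
        # Cube root for most values, with a linear segment near zero.
        delta = 6.0 / 29.0
        if t > delta ** 3:
            return t ** (1.0 / 3.0)
        return t / (3 * delta ** 2) + 4.0 / 29.0

    # D65 reference white (assumed).
    xn, yn, zn = 0.95047, 1.0, 1.08883
    fx, fy, fz = f(x / xn), f(y / yn), f(z / zn)

    lightness = 116.0 * fy - 16.0   # L: dark (0) to light (100)
    a_axis = 500.0 * (fx - fy)      # a: greenish (negative) to reddish (positive)
    b_axis = 200.0 * (fy - fz)      # b: bluish (negative) to yellowish (positive)
    return lightness, a_axis, b_axis


# Example: a pale yellow pixel, as might appear on a petal (made-up value).
print(srgb_to_lab(240, 225, 150))
```

Pure white comes out at roughly L = 100 with a and b near zero, and pure black at L = 0, which is a quick sanity check on any implementation. In practice an image library would apply the same arithmetic to every pixel at once.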

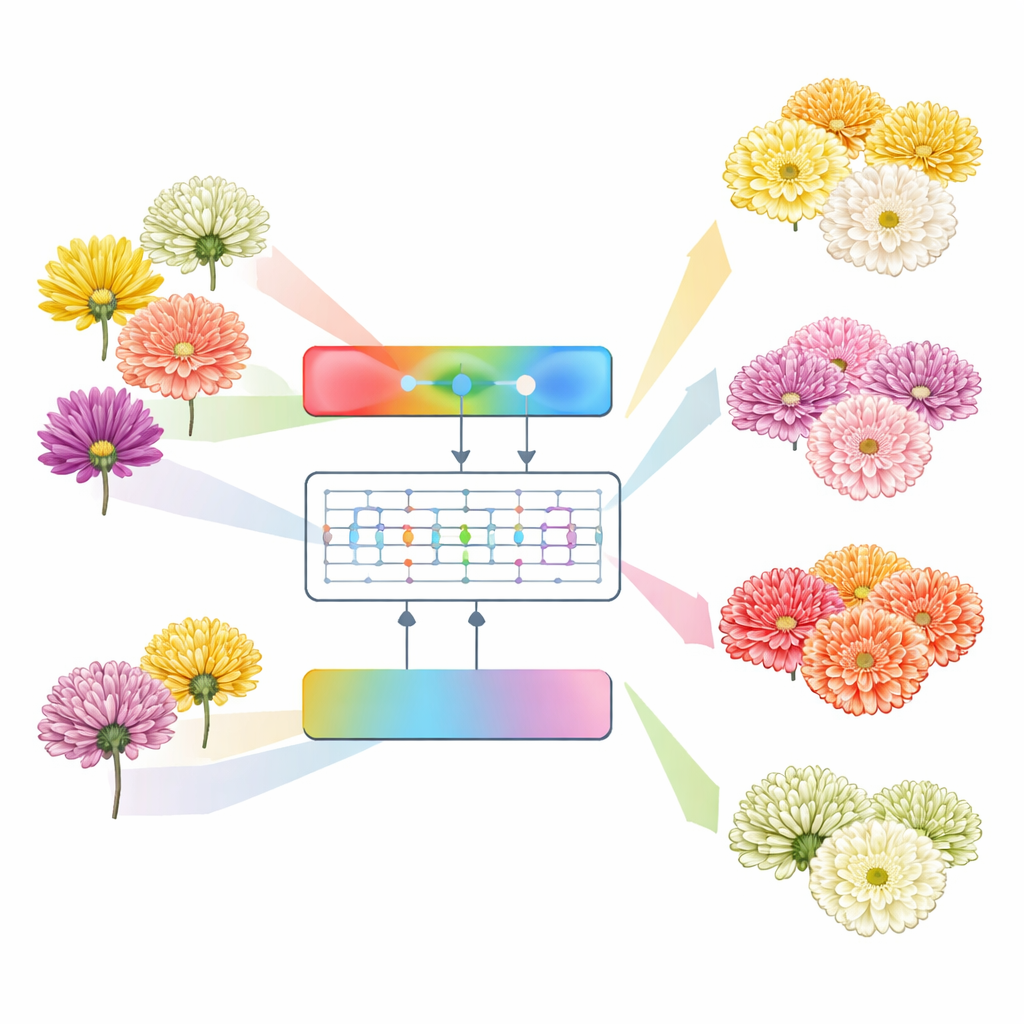

Two parallel views of the same flower

To make the most of these complementary views, the researchers design a “multi‑path” network—essentially two expert lanes working in parallel. One lane studies the RGB version of each image, and the other analyzes the LAB version. Each lane is built from modern convolutional blocks, a type of deep‑learning structure that excels at picking out edges, textures, and shapes. Early layers pay attention to petal outlines and small texture patterns, while deeper layers summarize broader structures. At several stages, the network fuses what each lane has learned by stacking their feature maps together. This lets the model weigh crisp outlines from RGB against smoother, lighting‑robust color structure from LAB, combining them into a richer internal picture of every blossom.
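The idea of two lanes whose feature maps are stacked together can be sketched in a few lines of NumPy. This is a toy illustration under stated assumptions, not the authors' network: each lane here is a single 3x3 convolution with random weights followed by a ReLU, and the helper names `conv3x3_relu` and `fuse` are hypothetical. The real model uses deeper blocks and fuses at several stages.

```python
import numpy as np


def conv3x3_relu(x, weights):
    """Toy lane: 3x3 convolution (stride 1, zero padding) followed by ReLU.

    x:       array of shape (C_in, H, W)
    weights: array of shape (C_out, C_in, 3, 3)
    """
    _, h, w = x.shape
    padded = np.pad(x, ((0, 0), (1, 1), (1, 1)))
    out = np.zeros((weights.shape[0], h, w))
    for dy in range(3):
        for dx in range(3):
            window = padded[:, dy:dy + h, dx:dx + w]
            # Mix input channels into output channels for this kernel offset.
            out += np.einsum("oc,chw->ohw", weights[:, :, dy, dx], window)
    return np.maximum(out, 0.0)


def fuse(rgb_features, lab_features):
    """Stack the two lanes' feature maps along the channel axis."""
    return np.concatenate([rgb_features, lab_features], axis=0)


rng = np.random.default_rng(0)
rgb_image = rng.random((3, 32, 32))   # stand-in for an RGB photo
lab_image = rng.random((3, 32, 32))   # stand-in for its LAB version

rgb_features = conv3x3_relu(rgb_image, rng.normal(size=(8, 3, 3, 3)))
lab_features = conv3x3_relu(lab_image, rng.normal(size=(8, 3, 3, 3)))

fused = fuse(rgb_features, lab_features)
print(fused.shape)  # (16, 32, 32): 8 channels from each lane
```

Stacking along the channel axis keeps both views intact side by side, so later layers can learn how much weight to give each one rather than having the mix fixed in advance.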

Letting attention find the telling details

After the parallel lanes have distilled the flower images into compact feature maps, a second kind of model takes over: a vision transformer. Transformers were originally invented for language, where they excel at spotting long‑range relationships, and they now play a growing role in image analysis. Here, the fused chrysanthemum features are cut into many small patches and fed to the transformer, which uses an “attention” mechanism to decide which patches matter most for telling varieties apart. This global view helps the network connect subtle color differences near the base of the petals with patterns further out on the flower head, leading to a more reliable judgment of each flower’s type and origin.
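The two steps described here, cutting a feature map into patches and letting attention weigh them against each other, can be sketched as follows. This is a simplified single-head version with random weights and made-up sizes. A full vision transformer also adds positional information, multiple attention heads, and further layers, none of which are shown, and the helper names are hypothetical rather than taken from the paper.

```python
import numpy as np


def patchify(feature_map, patch):
    """Cut a (C, H, W) feature map into non-overlapping patch x patch tokens."""
    c, h, w = feature_map.shape
    assert h % patch == 0 and w % patch == 0, "patch size must divide H and W"
    grid = feature_map.reshape(c, h // patch, patch, w // patch, patch)
    # Reorder to (rows, cols, C, patch, patch), then flatten each patch to a vector.
    return grid.transpose(1, 3, 0, 2, 4).reshape(-1, c * patch * patch)


def softmax(scores):
    # Subtract the row maximum for numerical stability.
    shifted = scores - scores.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over patch tokens."""
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    # weights[i, j] says how strongly patch i attends to patch j.
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))
    return weights @ v, weights


rng = np.random.default_rng(0)
fused = rng.random((16, 8, 8))        # stand-in for the fused feature map
tokens = patchify(fused, patch=4)     # 4 patches, each a 256-number vector

dim = 32                              # illustrative attention width
w_q, w_k, w_v = (rng.normal(scale=0.05, size=(tokens.shape[1], dim)) for _ in range(3))

attended, weights = self_attention(tokens, w_q, w_k, w_v)
print(tokens.shape, attended.shape, weights.shape)
```

Each row of `weights` sums to 1, so it can be read as how one patch distributes its attention across all the others. That is what allows a patch near the petal base to draw on information from patches further out on the flower head.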

Putting the system to the test

The team assembled a substantial image collection: more than 9,000 photos of the backs and fronts of flowers from 18 chrysanthemum types and 15 growing regions, with some varieties—such as Hangbai chrysanthemum—represented across many different locales. They trained and evaluated their model on this dataset and compared it with well‑known deep‑learning architectures that are widely used in image recognition. The results are striking: when working on back‑view images, the new method reached an accuracy of about 96–97% on their own chrysanthemum dataset and more than 99% on a standard public flower‑image benchmark. It surpassed several strong competitors, including both pure convolutional networks and pure transformer models, and maintained not only high accuracy but also stable performance across many different chrysanthemum categories.

What this means for growers and herbal medicine

In everyday terms, the study shows that a carefully designed image‑analysis system can match—and in some cases exceed—the reliability of more complicated laboratory approaches for recognizing medicinal chrysanthemums. By combining two ways of representing color with two complementary types of neural network, the method can spot fine visual cues that distinguish look‑alike flowers from different regions. This could support rapid quality checks in markets, help trace where dried flower heads really came from, and eventually extend to other herbal plants that rely on precise variety identification. As such tools move from the lab to handheld devices or sorting machines, they promise to make the “trained eye” of an expert available wherever medicinal plants are grown, traded, or prescribed.

Citation: Jiang, J., Yang, X., Wang, T. et al. Chrysanthemum classification via color space fusion transformer. Sci Rep 16, 9397 (2026). https://doi.org/10.1038/s41598-026-40027-2

Keywords: chrysanthemum classification, plant image recognition, color space fusion, vision transformer, medicinal herbs