Clear Sky Science

UAV photogrammetry and lidar integration for high-fidelity 3D campus mapping at KFUPM

Why turning a campus into a digital world matters

Imagine exploring a university campus online as if you were walking through it in person—peeking at building facades, checking wheelchair‑friendly routes, or inspecting roofs without ever climbing a ladder. This paper shows how researchers turned the campus of King Fahd University of Petroleum and Minerals (KFUPM) into a highly detailed three‑dimensional “digital twin” using camera‑equipped drones, laser scanners, and clever image‑enhancement software. Their goal is not just pretty pictures, but a practical, updatable 3D map that can support safety, maintenance, navigation, and virtual visits.

Flying robots as campus cartographers

At the heart of the project are unmanned aerial vehicles—drones—that fly over the campus following carefully planned routes. Some flights use a straight, lawn‑mower‑style grid where the camera looks straight down, ideal for capturing roofs, streets, and open areas. Other flights circle building clusters with the camera tilted at an angle, which reveals vertical walls, balconies, and hidden corners that a top‑down view would miss. Mounted on the same drone are a high‑resolution color camera and a laser scanner. The camera records detailed images, while the laser scanner measures millions of distances to create a cloud of 3D points describing the shape of the ground and buildings.
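
The two flight styles described above can be sketched as simple waypoint generators. This is only an illustrative sketch, not the planner used in the study: the function names, the flat local coordinate frame in metres, and the spacing and radius parameters are all assumptions made for the example.

```python
import math


def lawnmower_waypoints(width_m, height_m, line_spacing_m):
    """Corner waypoints for a back-and-forth (lawn-mower) grid.

    Hypothetical helper: covers a width_m x height_m rectangle with
    parallel flight lines spaced line_spacing_m apart.
    """
    if line_spacing_m <= 0:
        raise ValueError("line_spacing_m must be positive")
    waypoints = []
    x = 0.0
    heading_up = True
    while x <= width_m + 1e-9:
        # Alternate direction on each line so the drone never flies back empty.
        if heading_up:
            start_y, end_y = 0.0, float(height_m)
        else:
            start_y, end_y = float(height_m), 0.0
        waypoints.append((x, start_y))
        waypoints.append((x, end_y))
        x += line_spacing_m
        heading_up = not heading_up
    return waypoints


def orbit_waypoints(center_x, center_y, radius_m, count):
    """Waypoints on a circle, each with a yaw (radians) facing the centre.

    Hypothetical helper for the tilted-camera flights around a building
    cluster; camera tilt itself is not modelled here.
    """
    if count < 1:
        raise ValueError("count must be at least 1")
    waypoints = []
    for i in range(count):
        angle = 2.0 * math.pi * i / count
        x = center_x + radius_m * math.cos(angle)
        y = center_y + radius_m * math.sin(angle)
        yaw = math.atan2(center_y - y, center_x - x)  # look at the centre
        waypoints.append((x, y, yaw))
    return waypoints
```

For example, `lawnmower_waypoints(100, 50, 50)` gives three flight lines (six corner points), while `orbit_waypoints(0, 0, 10, 4)` gives four positions around a circle, each facing inward.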

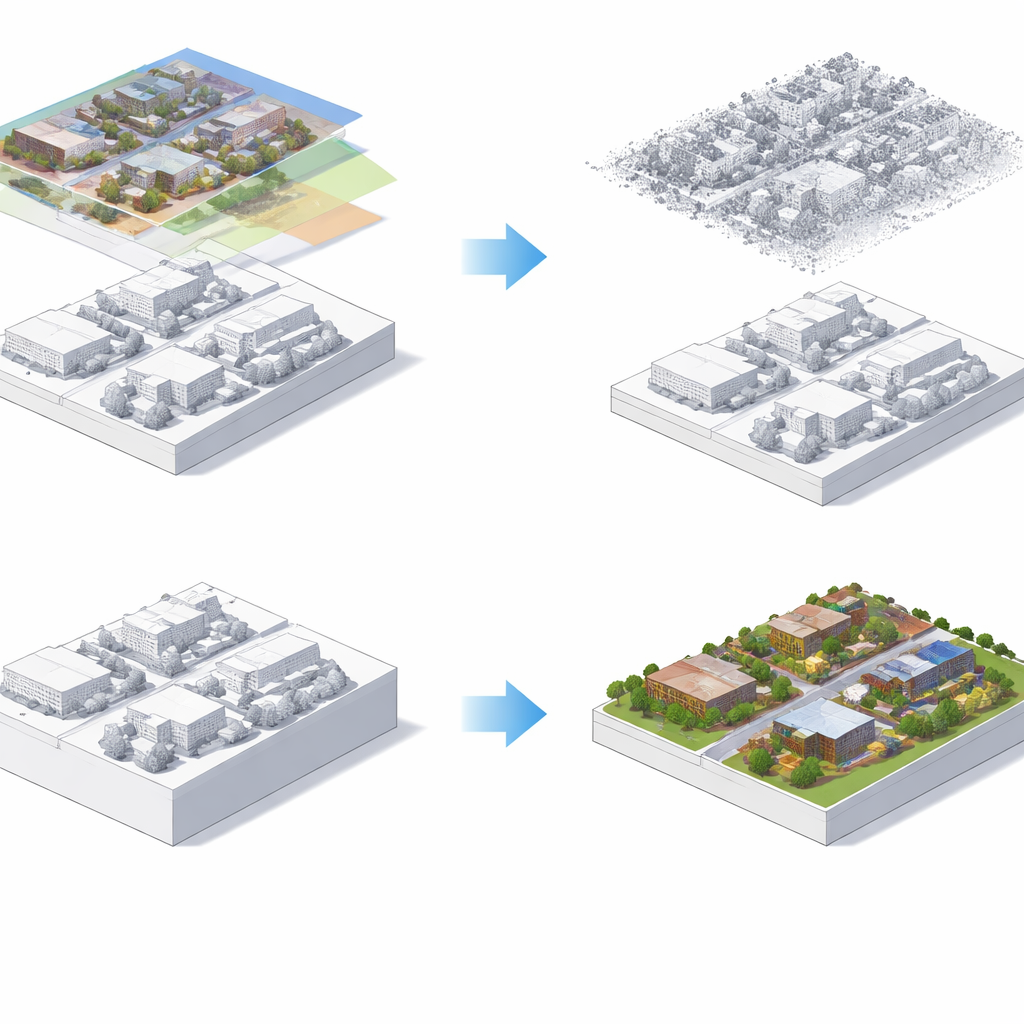

Building a virtual campus from points and pictures

Once the flights are complete, the heavy lifting moves to software. Algorithms first reconstruct a 3D model from overlapping photos, a process that figures out where each image was taken and how surfaces line up. In parallel, the laser data are cleaned, aligned, and classified into ground, buildings, and vegetation, then thinned so they are dense enough for detail but light enough to process efficiently. The two 3D worlds—one born from images and one from laser measurements—are then brought into the same geographic frame and gently snapped together so that roofs and walls match as closely as possible. The laser points provide the trustworthy shape of the campus, while the photos contribute color and material appearance, which are “baked” onto a surface mesh like wrapping a skin around a sculpture. This separation keeps measurements accurate while still delivering a visually rich model.
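
As a rough illustration of the "thinning" step, one common approach is voxel-grid downsampling: space is divided into small cubes and all points in a cube are replaced by their average. The summary does not say which thinning method the authors used, so the technique, the function name, and the `voxel_size` parameter below are assumptions made for the example.

```python
import math
from collections import defaultdict


def voxel_thin(points, voxel_size):
    """Thin a point cloud by keeping one centroid per occupied voxel.

    points: iterable of (x, y, z) tuples in metres.
    voxel_size: cube edge length in metres (hypothetical parameter).
    Returns a list of centroid tuples, ordered by voxel index.
    """
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    cells = defaultdict(list)
    for x, y, z in points:
        # Integer cube index that contains this point.
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        cells[key].append((x, y, z))
    thinned = []
    for key in sorted(cells):
        members = cells[key]
        n = len(members)
        # Average each coordinate axis over the points in the cube.
        thinned.append(tuple(sum(axis) / n for axis in zip(*members)))
    return thinned
```

A larger `voxel_size` gives a lighter cloud with less detail; a smaller one keeps more detail at a higher processing cost, which is the trade-off the paragraph above describes.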

Sharpening the view without bending reality

For users who zoom in closely on building facades, simple textures can start to look blurry or blocky. To combat this, the researchers add a lightweight “super‑resolution” step: a compact deep‑learning network that takes each aerial photo and produces a sharper, more detailed version at twice the resolution. Crucially, this sharpening is applied only to the image textures, after the 3D geometry has already been fixed by the laser data. That means the walls and roofs do not move; only their visual clothing becomes crisper. Tests on sample facades show clearer edges and more legible fine details, such as windows and small structural elements, with modest extra processing time. The team also compares this learned sharpening with traditional tricks like contrast boosts, finding that the learned method offers more consistent gains without overly amplifying noise.
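
The "texture only" idea can be made concrete with a small sketch. Here a mesh stores vertex positions, normalised texture coordinates, and a texture image as separate fields, and the sharpening step replaces only the image. The data structure and all names are hypothetical, and the upscaler is a plain pixel-repeat placeholder standing in for the learned network, whose architecture is not described in this summary.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TexturedMesh:
    vertices: tuple  # (x, y, z) positions, fixed by the laser-based geometry
    uvs: tuple       # normalised (u, v) in [0, 1], independent of image size
    texture: tuple   # rows of pixel values


def upscale_2x_placeholder(texture):
    """Placeholder upscaler: repeats every pixel in a 2x2 block.

    A real pipeline would call a trained super-resolution model here.
    """
    out = []
    for row in texture:
        wide = tuple(pixel for pixel in row for _ in range(2))
        out.append(wide)
        out.append(wide)
    return tuple(out)


def sharpen_texture_only(mesh, upscaler=upscale_2x_placeholder):
    """Return a copy of the mesh with a new texture and untouched geometry."""
    return replace(mesh, texture=upscaler(mesh.texture))
```

Because the texture coordinates are normalised, doubling the image resolution needs no change to the vertices or their mapping, so any measurement taken on the model gives the same result before and after sharpening.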

From research model to everyday campus tool

The finished 3D campus is exported into a web‑based mapping platform, where users can pan, zoom, tilt, and take measurements inside a browser.

What this means for future digital campuses

The study demonstrates that combining angled and top‑down drone views with laser scanning yields a more complete and accurate 3D campus than relying on overhead photos alone, especially for complex building facades. It also shows how image sharpening can safely improve visual quality without undermining measurement accuracy, as long as it is applied only to textures, not geometry. Beyond KFUPM, the same recipe could be reused for hospitals, industrial parks, or city districts that need regularly updated, web‑ready 3D maps. In short, the work points toward a future where campuses maintain living digital twins that serve inspectors, planners, students, and visitors alike—making the built environment easier to understand, manage, and explore.

Citation: Keshk, H.M., Abdallah, A.M., Almutairi, S. et al. UAV photogrammetry and lidar integration for high-fidelity 3D campus mapping at KFUPM. Sci Rep 16, 8328 (2026). https://doi.org/10.1038/s41598-026-39888-4

Keywords: smart campus, 3D mapping, drone imaging, LiDAR, digital twin