Clear Sky Science

An intelligent healthcare framework for hepatocellular carcinoma diagnosis based on aggregated learners from biomedical data utilising explainable artificial intelligence

Why smarter liver cancer checks matter

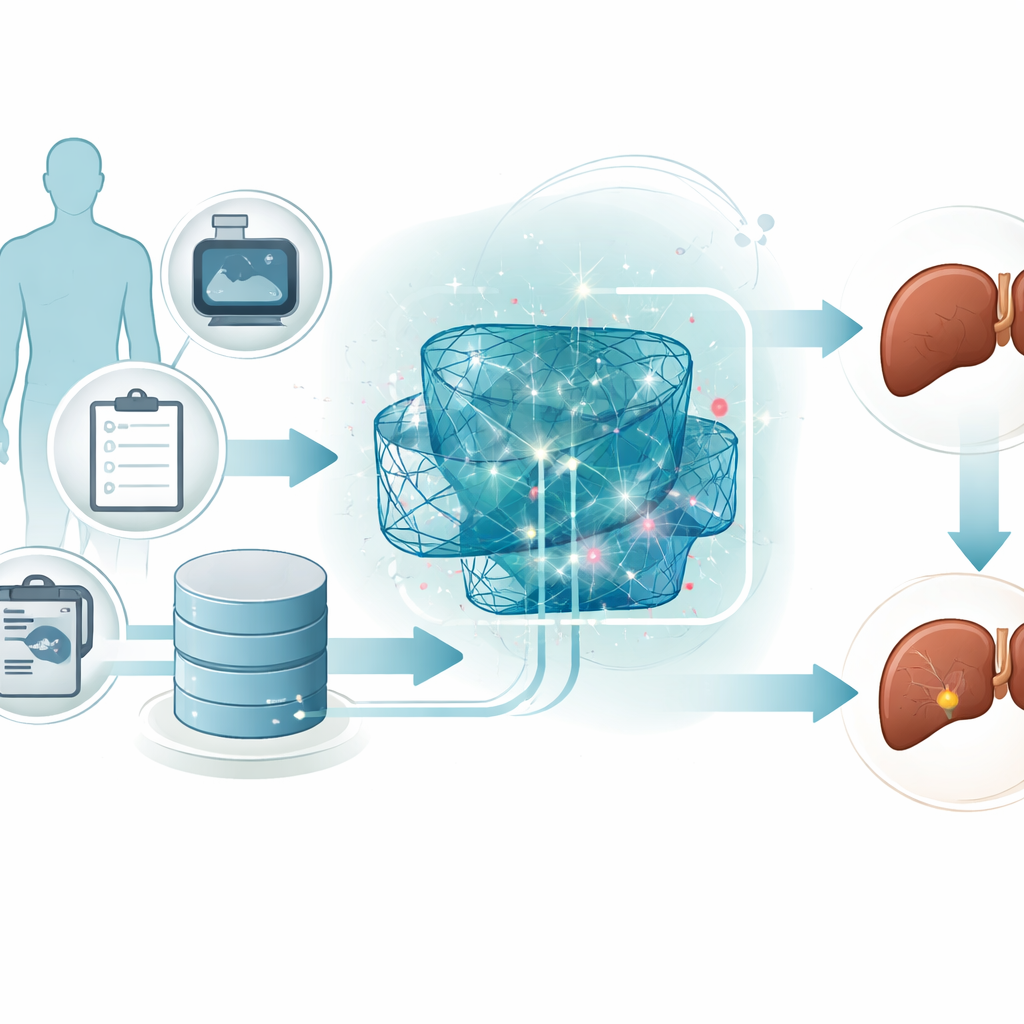

Liver cancer, especially a common type called hepatocellular carcinoma, often grows quietly until it is difficult to treat. Doctors already collect a wealth of routine test results from patients, but turning all those numbers into an early warning is hard. This study explores how advanced computer programs can sift through everyday medical data to spot which patients are at high risk, while also explaining their reasoning in ways doctors can trust.

Turning routine tests into early warnings

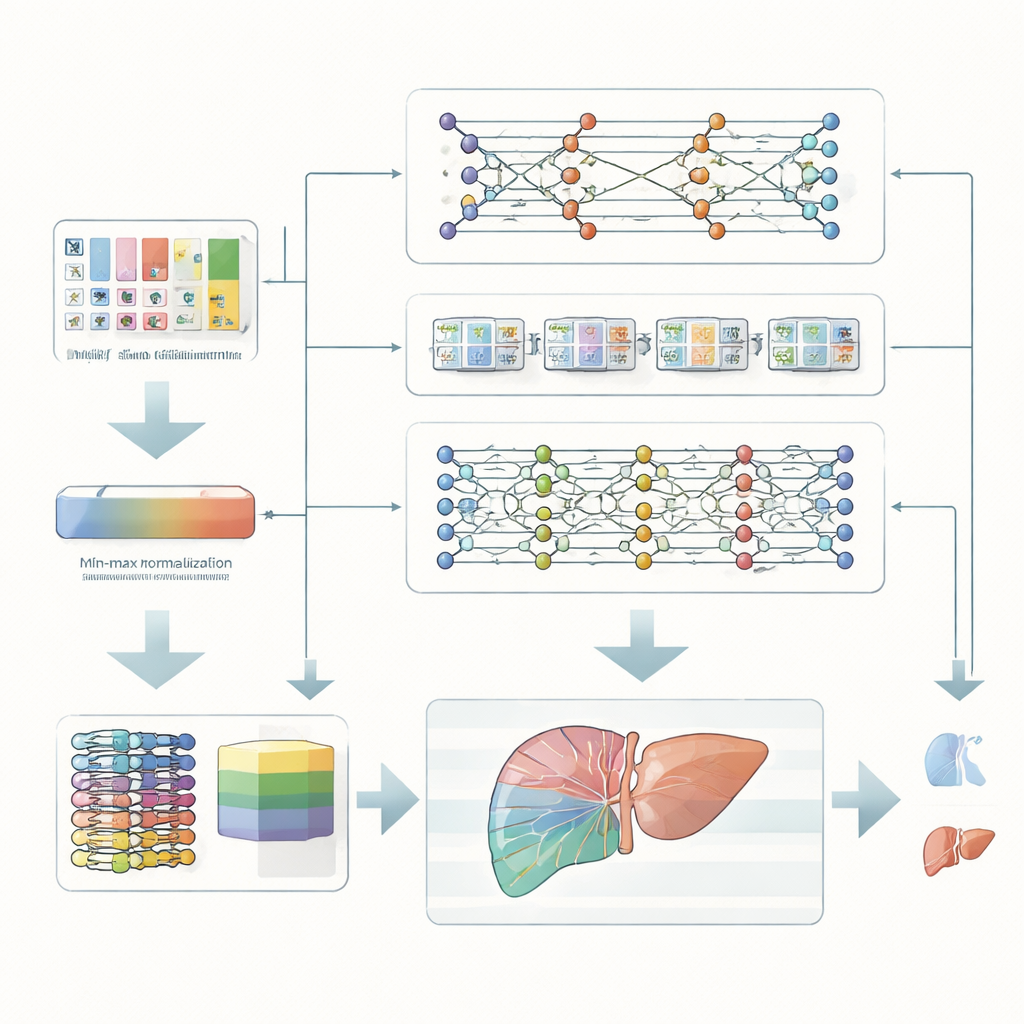

The researchers focus on patients with chronic liver problems, who face a higher chance of developing serious liver cancer. Instead of relying on expensive scans or complex genetic tests, they use standard clinical measurements such as blood chemistry, liver enzymes, and basic health information. These measurements are first rescaled so that all features fall into the same numerical range. This simple but important preprocessing step helps computer models learn patterns more reliably and keeps any one feature with unusually large values from dominating the predictions.
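To make the rescaling step concrete, here is a minimal sketch. It assumes min-max scaling to the range 0 to 1, which is one common way to put features on the same footing; this summary does not name the exact formula the authors used. The function name `min_max_scale` and the patient values are hypothetical and chosen only for illustration.

```python
def min_max_scale(rows):
    """Rescale each column of `rows` (a list of equal-length lists) to [0, 1].

    Assumption: min-max scaling, x' = (x - min) / (max - min), per feature.
    """
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    scaled = []
    for row in rows:
        scaled_row = []
        for value, low, high in zip(row, lows, highs):
            span = high - low
            # A constant column carries no information; map it to 0.0.
            scaled_row.append((value - low) / span if span else 0.0)
        scaled.append(scaled_row)
    return scaled


# Hypothetical values: [albumin in g/dL, ALT in U/L, age in years]
patients = [
    [3.5, 40.0, 50.0],
    [2.5, 120.0, 70.0],
    [4.5, 80.0, 60.0],
]
scaled_patients = min_max_scale(patients)
for row in scaled_patients:
    print(row)
```

Before scaling, the enzyme column spans 80 units while albumin spans only 2; afterwards every column runs from 0 to 1, so no feature outweighs the others simply because of its units.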

Many digital “second opinions” working together

Rather than depending on a single algorithm, the team builds an ensemble, or team, of three different deep learning models. One model compresses the data to uncover the most informative combinations of features. A second model is designed to recognize patterns that unfold like sequences, capturing how several measurements together may hint at risk. A third model stacks several simple layers to capture complex, non‑linear relationships hidden in the data. Each model makes its own judgment about whether a patient belongs to a high‑risk or low‑risk group, and a higher‑level combiner weighs and merges these opinions into one final decision.
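The idea of a higher-level combiner can be sketched in a few lines. The example below assumes that each of the three base models outputs a probability of high risk, and that the combiner is a small logistic regression trained on those probabilities (a technique often called stacking). Both the combiner type and the numbers are assumptions for illustration; this summary does not specify how the authors' combiner is built.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_combiner(base_probs, labels, epochs=2000, lr=0.5):
    """Fit a logistic-regression combiner on base-model probabilities
    using plain batch gradient descent."""
    n_models = len(base_probs[0])
    n_samples = len(labels)
    weights = [0.0] * n_models
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_models
        grad_b = 0.0
        for probs, label in zip(base_probs, labels):
            pred = sigmoid(sum(w * p for w, p in zip(weights, probs)) + bias)
            error = pred - label
            for j, p in enumerate(probs):
                grad_w[j] += error * p
            grad_b += error
        weights = [w - lr * g / n_samples for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n_samples
    return weights, bias


def combine(probs, weights, bias):
    """Merge the three base-model opinions into one probability."""
    return sigmoid(sum(w * p for w, p in zip(weights, probs)) + bias)


# Hypothetical outputs of three base models (stand-ins, not the paper's models).
base_probs = [
    [0.9, 0.8, 0.7], [0.8, 0.6, 0.9], [0.7, 0.9, 0.6],  # high-risk patients
    [0.2, 0.3, 0.1], [0.1, 0.4, 0.3], [0.3, 0.2, 0.2],  # low-risk patients
]
labels = [1, 1, 1, 0, 0, 0]

weights, bias = train_combiner(base_probs, labels)
new_patient = [0.85, 0.75, 0.8]
print("combined risk:", round(combine(new_patient, weights, bias), 3))
```

A learned combiner like this can give more say to whichever base model proves more reliable, rather than treating all three votes as equal.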

Opening the black box for doctors

Powerful as they are, deep learning systems are often criticized for acting like mysterious “black boxes.” To tackle this, the authors add an explainable artificial intelligence layer based on a method known as SHAP. This technique estimates how much each input feature pushes an individual prediction toward a safer or riskier outcome. For example, certain liver enzyme levels, markers of liver function, and signs of spread outside the liver emerge as especially influential. Doctors can see not only that the system flags a patient as high risk, but also which specific measurements drove that decision and in which direction, creating a more transparent partnership between clinician and machine.
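SHAP builds on Shapley values, a concept from game theory for sharing credit fairly among contributors. The sketch below computes exact Shapley values by brute force for a toy three-feature risk score. It is not the SHAP library and not the authors' model: `toy_risk_score`, the feature names, and the reference patient are all hypothetical, and "removing" a feature is modeled by swapping in the reference value. Brute force is only practical for a handful of features, which is why SHAP relies on faster approximations in practice.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, instance, baseline):
    """Exact Shapley values; absent features take their baseline value."""
    n = len(instance)

    def value(subset):
        mixed = [instance[i] if i in subset else baseline[i] for i in range(n)]
        return model(mixed)

    contributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                phi += weight * (value(members | {i}) - value(members))
        contributions.append(phi)
    return contributions


def toy_risk_score(features):
    # Hypothetical score, not the paper's model: enzyme and bilirubin interact.
    enzyme, bilirubin, albumin = features
    return 0.4 * enzyme + 0.3 * bilirubin - 0.5 * albumin + 0.2 * enzyme * bilirubin


patient = [1.0, 1.0, 0.2]    # scaled feature values for one patient
reference = [0.0, 0.0, 1.0]  # a hypothetical low-risk reference patient

phi = shapley_values(toy_risk_score, patient, reference)
for name, contribution in zip(["enzyme", "bilirubin", "albumin"], phi):
    print(f"{name}: {contribution:+.2f}")
```

The contributions add up exactly to the gap between this patient's score and the reference score, so each number can be read as that feature's share of the extra risk, with the sign giving the direction of the push.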

How well does the approach work?

The team tests its framework on a public dataset of 165 patients tracked for at least a year, each labeled as surviving or not surviving. Despite the modest size of the dataset, the combined model learns to separate high‑risk from low‑risk patients with striking accuracy: by the final stages of training, it correctly classifies roughly 98 out of every 100 cases. Compared with a range of existing methods, including classic statistical models and several modern neural networks, this approach matches or exceeds their accuracy, precision, and balance between missed cases and false alarms, and it does so with relatively low computing time. An ablation study, in which each of the three component models is tried alone, shows that each contributes value, yet their combination performs best.
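For readers curious how such scores are calculated, the sketch below derives accuracy, precision, recall, and the F1 score from a list of true and predicted labels. It assumes that the "balance" between missed cases and false alarms refers to something like the F1 score, which is a common choice but is not confirmed by this summary. The labels are made up for illustration and are not results from the study.

```python
def classification_metrics(y_true, y_pred):
    """Return accuracy, precision, recall and F1 for binary labels (1 = high risk)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # correctly flagged
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # correctly cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed cases

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Made-up labels for ten patients (illustration only).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]

metrics = classification_metrics(y_true, y_pred)
for name, score in metrics.items():
    print(f"{name}: {score:.2f}")
```

Precision drops when the system raises false alarms, recall drops when it misses true cases, and F1 is high only when both are high, which makes it a useful single number for small, unevenly balanced datasets.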

What this could mean for patient care

For everyday medical practice, this work points toward decision tools that are both sharp and understandable. A system built along these lines could help flag liver patients who quietly drift into a danger zone long before symptoms appear, using data already collected in many clinics. At the same time, its explanations—highlighting which test results and clinical signs matter most for a given person—could support doctors in refining treatment plans and discussing risks with patients. While the study still relies on a relatively small, single‑source dataset and omits imaging and genetic data, it offers a roadmap for smarter, more transparent cancer risk models that, with larger and more diverse data, could one day become routine allies in the fight against liver cancer.

Citation: Alqaralleh, B.A.Y., Alksasbeh, M.Z., Kulakli, A. et al. An intelligent healthcare framework for hepatocellular carcinoma diagnosis based on aggregated learners from biomedical data utilising explainable artificial intelligence. Sci Rep 16, 9357 (2026). https://doi.org/10.1038/s41598-026-39871-z

Keywords: liver cancer, medical AI, early diagnosis, explainable AI, clinical decision support