Clear Sky Science

A machine learning based scheme for enhancing the detection of position falsification attacks in vehicular ad hoc networks

Smarter Cars Watching Out for Cheats

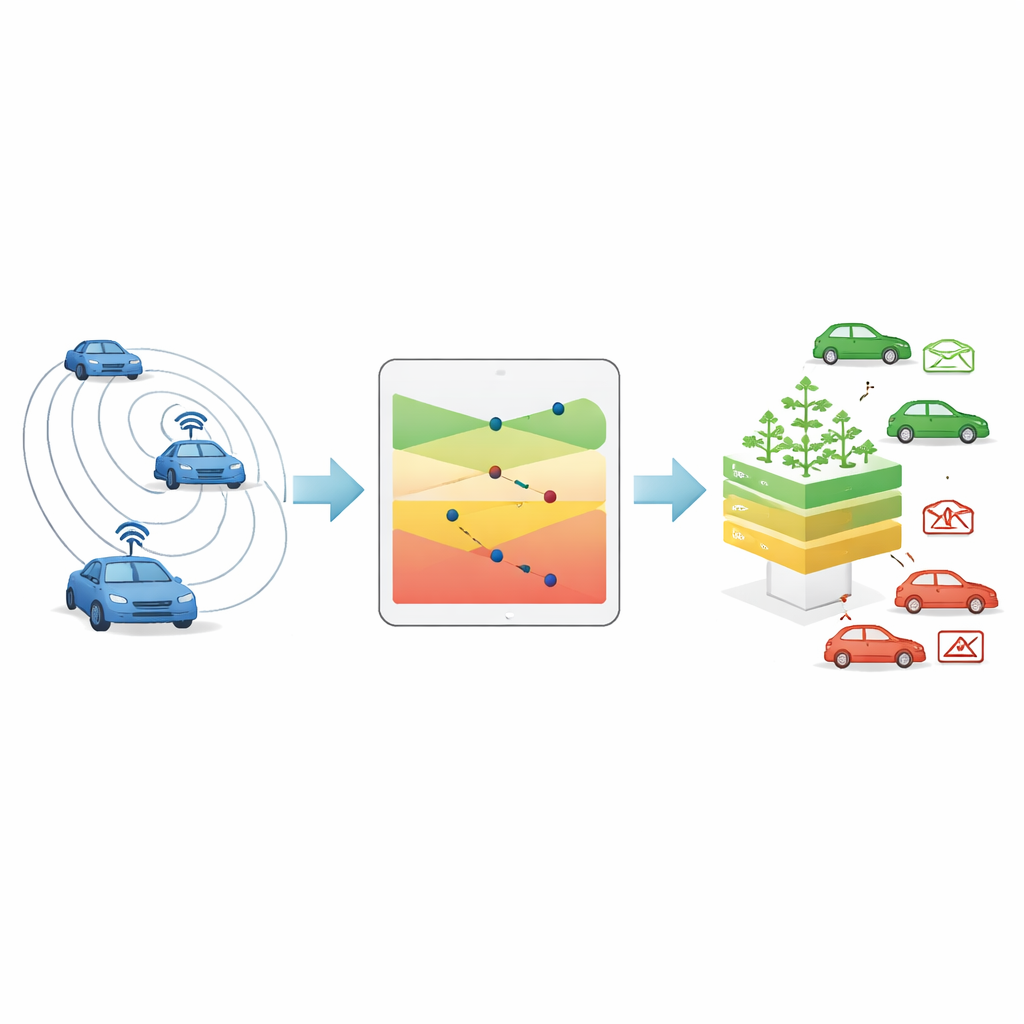

Modern cars are starting to talk to each other, warning about sudden braking, nearby crashes, or blocked lanes. These wireless conversations can make roads safer, but only if the shared information is honest. This study tackles a serious problem: what happens when a car lies about where it is? The authors show how a tailored form of machine learning can spot vehicles that fake their position, making connected-car networks more trustworthy and potentially preventing accidents triggered by false data.

Why Lying Cars Are So Dangerous

Vehicles in vehicular ad hoc networks (VANETs) constantly broadcast short safety messages that include their location, speed, and direction. Nearby cars and roadside units use this stream of updates to judge when to warn drivers or trigger automatic responses. If a malicious vehicle reports a fake position, it can mislead others into slowing down, changing lanes, or rerouting unnecessarily. In the worst case, it could prevent a collision warning from being issued in time. Because cars move quickly and connections change from moment to moment, detecting such misbehavior is challenging, and existing methods still miss too many attacks for comfort.

Turning Radio Signals into a Trust Clue

The core idea of the paper is to cross‑check what a car claims with what the radio signal quietly reveals. Every wireless message arrives with a measurable signal strength. In general, signals weaken as distance grows, though real streets add noise through reflections, buildings, and traffic. Instead of naively turning signal strength into an exact distance, the authors first study many honest messages to learn how strong the signal tends to be at different ranges. For each distance band they compute three nested zones of plausible signal values: a tight, a medium, and a wide confidence range. When a new message arrives, the system checks which of these ranges, if any, contains its signal strength for the claimed distance and assigns a simple trust score accordingly, from clearly plausible to highly suspicious.
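The banding idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' implementation: the 50 m band width, the use of mean plus or minus one, two, and three standard deviations as the tight, medium, and wide zones, the 3-to-0 scoring, and every function name here are hypothetical choices made for the example.

```python
from collections import defaultdict
from statistics import mean, stdev

BAND_WIDTH_M = 50.0             # assumed width of each distance band
ZONE_WIDTHS = (1.0, 2.0, 3.0)   # assumed tight, medium, wide zones (in standard deviations)


def band_index(distance_m):
    """Map a distance in metres to the index of its distance band."""
    return int(distance_m // BAND_WIDTH_M)


def fit_bands(honest_samples):
    """Learn the mean and spread of signal strength for each distance band.

    honest_samples: iterable of (distance_m, rssi_dbm) pairs from honest messages.
    """
    grouped = defaultdict(list)
    for distance_m, rssi_dbm in honest_samples:
        grouped[band_index(distance_m)].append(rssi_dbm)
    # A band needs at least two samples before a standard deviation exists.
    return {b: (mean(v), stdev(v)) for b, v in grouped.items() if len(v) >= 2}


def trust_score(bands, claimed_distance_m, rssi_dbm):
    """Return 3 (tight zone), 2 (medium), 1 (wide), or 0 (outside every zone)."""
    stats = bands.get(band_index(claimed_distance_m))
    if stats is None:
        return 0  # assumed policy: no reference data for this band means no trust
    mu, sigma = stats
    deviation = abs(rssi_dbm - mu)
    for score, k in zip((3, 2, 1), ZONE_WIDTHS):
        if deviation <= k * sigma:
            return score
    return 0


# Made-up honest measurements: strong signals near 20 m, weak ones near 170 m.
honest = [(20, -60), (30, -62), (10, -58), (40, -61), (25, -59),
          (170, -80), (160, -82), (180, -78), (190, -81), (155, -79)]
bands = fit_bands(honest)

print(trust_score(bands, 20, -60.5))  # signal matches the claimed distance
print(trust_score(bands, 20, -80.0))  # claims 20 m but the signal looks far away
```

The score deliberately stays coarse. It never converts signal strength into a distance estimate; it only says how typical the observed strength is for the distance the sender claims.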

Teaching a Digital Forest to Spot Fakes

Signal strength alone is not enough, so the authors combine this trust score with other straightforward information from safety messages—such as the car’s reported position and speed, how those change over time, and how far apart the sender and receiver actually are. From these they build three alternative bundles of input features and train several common machine‑learning algorithms on a public dataset that simulates realistic traffic and five styles of position cheating. Among the tested models, a technique called a random forest—essentially a voting committee of many simple decision trees—paired with one particular feature bundle gave the best balance of accuracy and speed. It correctly identified nearly all fake‑position messages across all attack types while keeping the computing burden low enough for use inside moving vehicles.
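To make the "voting committee" picture concrete, here is a toy ensemble written from scratch. It is a deliberately simplified stand-in for a random forest, not the authors' model: each "tree" is a single-split decision stump trained on a bootstrap resample and one randomly chosen feature. The two features (a trust score and a position jump in metres), the made-up training rows, and all function names are assumptions for illustration.

```python
import random
from collections import Counter


def fit_stump(rows, labels, feature):
    """Find the threshold on one feature that misclassifies the fewest rows."""
    values = sorted({row[feature] for row in rows})
    majority = Counter(labels).most_common(1)[0][0]
    # (errors, threshold, label at or below threshold, label above threshold)
    best = (len(rows) + 1, None, majority, majority)
    for lo, hi in zip(values, values[1:]):
        threshold = (lo + hi) / 2
        low = [y for row, y in zip(rows, labels) if row[feature] <= threshold]
        high = [y for row, y in zip(rows, labels) if row[feature] > threshold]
        low_label = Counter(low).most_common(1)[0][0]
        high_label = Counter(high).most_common(1)[0][0]
        errors = sum(y != low_label for y in low) + sum(y != high_label for y in high)
        if errors < best[0]:
            best = (errors, threshold, low_label, high_label)
    return feature, best[1], best[2], best[3]


def fit_forest(rows, labels, n_trees=25, seed=0):
    """Train n_trees stumps, each on a bootstrap sample and one random feature."""
    rng = random.Random(seed)
    n_features = len(rows[0])
    forest = []
    for _ in range(n_trees):
        picks = [rng.randrange(len(rows)) for _ in rows]  # sample with replacement
        feature = rng.randrange(n_features)
        forest.append(fit_stump([rows[i] for i in picks],
                                [labels[i] for i in picks], feature))
    return forest


def predict(forest, row):
    """Let every stump vote and return the most common label."""
    votes = Counter()
    for feature, threshold, low_label, high_label in forest:
        if threshold is None or row[feature] <= threshold:
            votes[low_label] += 1
        else:
            votes[high_label] += 1
    return votes.most_common(1)[0][0]


# Made-up rows: (trust_score, position_jump_m). Real feature bundles are richer.
honest = [(3, 2.0), (3, 4.0), (2, 3.0), (3, 1.0), (2, 5.0), (3, 2.5)]
fake = [(0, 300.0), (1, 250.0), (0, 400.0), (1, 200.0), (0, 350.0), (0, 220.0)]
rows = honest + fake
labels = ["honest"] * len(honest) + ["fake"] * len(fake)

forest = fit_forest(rows, labels)
print(predict(forest, (3, 1.5)))    # plausible signal, small movement
print(predict(forest, (0, 380.0)))  # implausible signal, huge jump
```

In practice a library implementation such as scikit-learn's `RandomForestClassifier`, which grows deeper trees and considers several features per split, would replace this toy.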

Putting the New Feature to the Test

To show that their signal‑based trust score really adds value, the researchers compared the full model with a version that uses exactly the same information except for this new feature. Evaluated on a separate simulation run that neither version had seen during training, the full model stayed noticeably more accurate, especially for attacks where a car keeps broadcasting one fixed false position or pretends to stop suddenly. In some of these cases the improvement in a key performance measure was dramatic, meaning the system missed far fewer bad messages without a large increase in false alarms. Statistical tests confirmed that the difference between the two models is unlikely to be due to chance.
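One common way to check whether two classifiers evaluated on the same messages really differ is McNemar's test, sketched below in its exact form. The summary does not say which statistical test the authors used, so this is an illustrative assumption rather than their procedure; the function name and the tiny made-up predictions are likewise hypothetical.

```python
from math import comb


def mcnemar_exact(truth, pred_full, pred_ablated):
    """Exact two-sided McNemar test on paired predictions.

    Returns (full_only_correct, ablated_only_correct, p_value).
    """
    triples = list(zip(truth, pred_full, pred_ablated))
    # Count only the messages on which exactly one model is right.
    full_only = sum(1 for t, f, a in triples if f == t and a != t)
    ablated_only = sum(1 for t, f, a in triples if f != t and a == t)
    n = full_only + ablated_only
    if n == 0:
        return full_only, ablated_only, 1.0  # the models never disagree
    k = min(full_only, ablated_only)
    # Under the null hypothesis, each disagreement favours either model
    # with probability 0.5, so the smaller count follows Binomial(n, 0.5).
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return full_only, ablated_only, min(1.0, 2 * tail)


# Made-up example: 1 = fake-position message, 0 = honest message.
truth = [1] * 10 + [0] * 10
pred_full = list(truth)                      # full model gets everything right
pred_ablated = [0] * 8 + [1] * 2 + [0] * 10  # ablated model misses 8 fakes

full_only, ablated_only, p_value = mcnemar_exact(truth, pred_full, pred_ablated)
print(full_only, ablated_only, p_value)
```

The test looks only at messages where the two models disagree, which suits an ablation like this one because both models are scored on exactly the same data.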

What This Means for Safer Roads

From a non‑specialist’s perspective, the work shows that cars can use the natural behavior of radio signals as an independent reality check on what neighboring vehicles claim about themselves. By folding that check into a lightweight machine‑learning model that runs on each car, the system can spot lying vehicles far more reliably than earlier methods tested on the same benchmark data. While the results come from simulations rather than real‑world trials, they suggest a clear path toward smarter, self‑protecting traffic networks where even small gains in catching misbehavior could translate into lives saved.

Citation: Abdelkreem, E., Hussein, S. & Tammam, A. A machine learning based scheme for enhancing the detection of position falsification attacks in vehicular ad hoc networks. Sci Rep 16, 8950 (2026). https://doi.org/10.1038/s41598-026-39867-9

Keywords: connected vehicles, wireless road safety, machine learning security, location spoofing, vehicular networks