
Robust place recognition under illumination changes using pseudo-LiDAR from omnidirectional images

Robots That Never Get Lost in the Dark

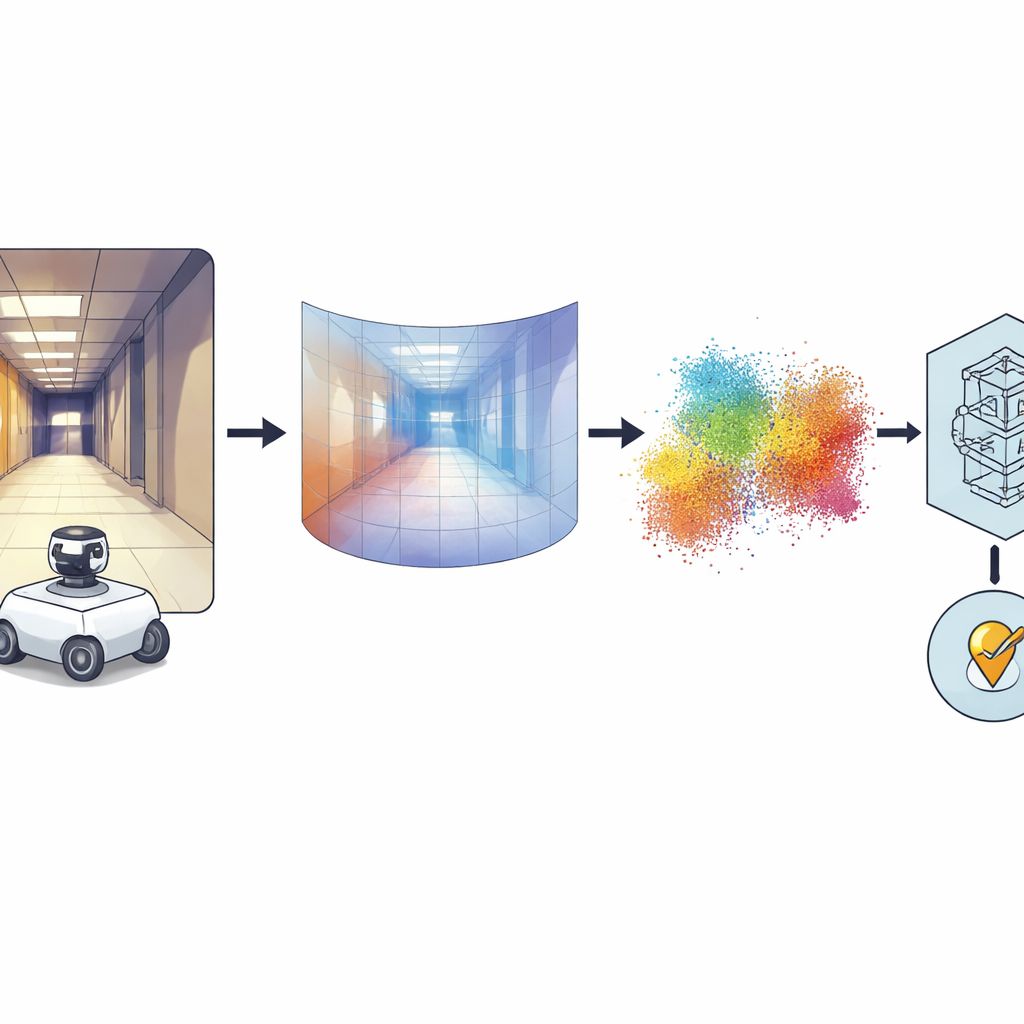

Imagine a robot that can recognize where it is in a building, whether it’s midday with sun streaming through the windows or late at night with only a few lamps on. This paper presents a new way to give robots that kind of reliable sense of place using just a single, relatively cheap camera. By turning flat images into 3D information, the researchers make robot navigation far less sensitive to shadows, glare, and other tricky lighting changes that usually confuse vision-based systems.

Why Finding the Same Place Twice Is Hard

For a robot, “place recognition” means realizing, “I’ve been here before,” so it can localize itself on a map and navigate safely. Traditional systems rely on either regular cameras or laser-based range sensors known as LiDAR. Cameras are inexpensive and capture rich color and texture, but the images they produce change drastically between cloudy, sunny, and night-time conditions. LiDAR is much more stable because it measures distance directly, but it is bulky and costly. Some robots combine several sensors, but this raises the price and complexity of the system. The authors of this work take a different route: they keep the hardware simple, using only one omnidirectional camera that sees all around the robot, and upgrade the software so that the robot reasons about 3D structure instead of raw appearance.

From All-Around Photos to 3D Shapes

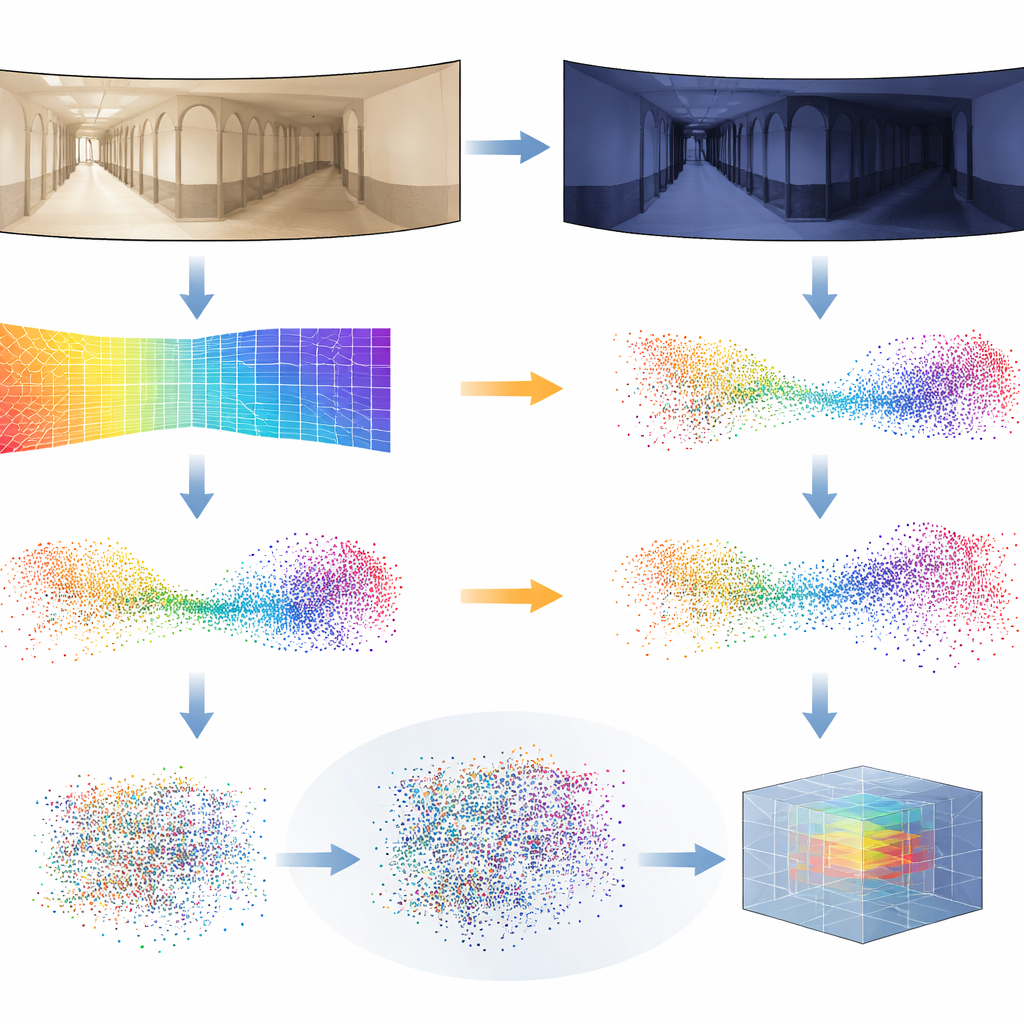

The key idea is to convert each panoramic image into a dense picture of depth, where every pixel encodes how far that part of the scene is from the camera. To do this, the authors rely on a powerful “foundation” model called Distill Any Depth, which has learned to infer depth from enormous collections of images. The resulting depth map is then turned into a cloud of 3D points—a kind of virtual LiDAR, or pseudo-LiDAR—without needing a real laser scanner. Extra processing cleans up artifacts introduced by the special mirror used for the 360-degree camera, so missing or occluded regions are filled in. Finally, a neural network called MinkUNeXt, designed to work directly on 3D point clouds, compresses each cloud into a compact fingerprint that captures the overall layout of the place.
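
To make the depth-to-points step concrete, here is a minimal sketch in Python with NumPy. It assumes the depth map is laid out as a full panorama in which columns correspond to the horizontal angle around the robot and rows to the vertical angle. That layout, the function name `panorama_depth_to_points`, and the angle limits are illustrative assumptions; the summary above does not spell out the exact projection the authors use for their mirror-based camera.

```python
import numpy as np

def panorama_depth_to_points(depth, elev_min=-np.pi / 2, elev_max=np.pi / 2):
    """Turn a panoramic depth map of shape (H, W) into an (N, 3) point cloud.

    Assumption (not from the paper): each column is an azimuth angle in
    [-pi, pi) and each row an elevation angle between elev_max (top row)
    and elev_min (bottom row). Depth is the distance along each viewing ray.
    """
    h, w = depth.shape
    # Angles at pixel centres.
    azimuth = (np.arange(w) + 0.5) / w * 2.0 * np.pi - np.pi
    elevation = elev_max - (np.arange(h) + 0.5) / h * (elev_max - elev_min)
    az, el = np.meshgrid(azimuth, elevation)

    # Spherical-to-Cartesian conversion, scaled by the estimated distance.
    x = depth * np.cos(el) * np.cos(az)
    y = depth * np.cos(el) * np.sin(az)
    z = depth * np.sin(el)
    points = np.stack([x, y, z], axis=-1).reshape(-1, 3)

    # Drop pixels with no usable depth (non-finite or non-positive values).
    flat_depth = depth.reshape(-1)
    valid = np.isfinite(points).all(axis=1) & (flat_depth > 0)
    return points[valid]
```

Every surviving point lies at its estimated distance from the camera, so a depth map filled with a constant value produces points on a sphere of that radius. The resulting cloud is what a 3D network such as MinkUNeXt would then take as input.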

Teaching the System to Ignore Lighting Tricks

Depth estimates are not perfect, especially when lighting changes drastically from one moment to the next. To make the system robust, the researchers introduce a new training trick they call Distilled Depth Variations. Instead of trusting a single depth model, they deliberately mix in depth predictions from several smaller, less accurate versions of the depth estimator. This controlled “noise” mimics the kinds of distortions that appear under different illumination conditions, forcing the 3D network to learn what really matters about a place’s geometry and what can be safely ignored. They also enrich each 3D point with information about image edges and texture strength—features that tend to be more stable across lighting changes than raw color.
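
The two ideas in this paragraph can be sketched roughly as follows. The code assumes that depth maps for the same image have already been computed by several estimator variants, and it uses plain image-gradient magnitude as a stand-in for the edge and texture cues. Both function names and the choice of gradient magnitude are hypothetical illustrations, not the authors' implementation.

```python
import numpy as np

def pick_depth_variant(depth_variants, rng):
    """Randomly choose one depth map for a training sample.

    depth_variants: list of (H, W) arrays for the SAME image, each produced
    by a different depth estimator (for example a large model and smaller,
    less accurate ones). Choosing at random exposes the 3D network to
    varied depth errors during training.
    """
    index = int(rng.integers(len(depth_variants)))
    return index, depth_variants[index]

def gradient_magnitude(gray):
    """Per-pixel edge strength of a grayscale image of shape (H, W).

    Assumption: simple finite-difference gradients stand in for the
    edge/texture features mentioned in the text.
    """
    gy, gx = np.gradient(gray.astype(np.float64))
    return np.hypot(gx, gy)

def attach_feature(points_per_pixel, feature_map):
    """Append one feature value per pixel to per-pixel 3D points.

    points_per_pixel: (H, W, 3) array of 3D points, one per pixel.
    feature_map: (H, W) array, e.g. the output of gradient_magnitude.
    Returns an (H*W, 4) array of [x, y, z, feature] rows.
    """
    h, w, _ = points_per_pixel.shape
    flat_points = points_per_pixel.reshape(h * w, 3)
    flat_feature = feature_map.reshape(h * w, 1)
    return np.concatenate([flat_points, flat_feature], axis=1)
```

In a training loop, `pick_depth_variant` would be called once per sample with a seeded generator such as `np.random.default_rng(0)`, so that the same place is seen with slightly different geometry from one epoch to the next.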

Proving It Works in the Real World

To test their approach, the team turned to demanding public datasets of indoor robot journeys. In these collections, a robot roams corridors and rooms multiple times under cloudy daylight, bright sunshine, and at night, while furniture and people move around. The authors trained their system using only cloudy images from one building and then evaluated it across all buildings and lighting conditions, including scenes it had never seen before. Their pseudo-LiDAR method consistently matched or outperformed leading 2D image-based techniques and other 3D systems, especially in the toughest cases such as night-time runs or transfers to entirely new environments. They also showed that the same pipeline works with ordinary forward-facing cameras, not just panoramic ones, by swapping in the appropriate projection from depth to 3D.
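
For an ordinary forward-facing camera, the projection that gets swapped in is typically the standard pinhole model. The sketch below shows that back-projection in NumPy, assuming the depth map stores distance along the optical axis and that the focal lengths `fx`, `fy` and principal point `cx`, `cy` are known from calibration. The function name is illustrative, and the summary does not state which camera model the authors used.

```python
import numpy as np

def pinhole_depth_to_points(depth, fx, fy, cx, cy):
    """Back-project a (H, W) depth map into an (N, 3) point cloud.

    Assumption: depth[v, u] is the z coordinate (distance along the
    optical axis) of the scene point seen at pixel column u, row v.
    fx, fy are focal lengths in pixels; (cx, cy) is the principal point.
    """
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))

    # Standard pinhole back-projection: X = (u - cx) * Z / fx, and so on.
    z = depth
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    points = np.stack([x, y, z], axis=-1).reshape(-1, 3)

    # Keep only points with finite coordinates in front of the camera.
    valid = np.isfinite(points).all(axis=1) & (points[:, 2] > 0)
    return points[valid]
```

Only this projection step changes between camera types; the point cloud that comes out can feed the same 3D network as before.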

What This Means for Future Robots

In everyday terms, this work shows that a robot can gain LiDAR-like awareness of its surroundings using only a single camera and clever software. By focusing on 3D structure rather than the fickle details of lighting and color, the system can recognize places reliably across day, night, and weather changes, all while keeping the hardware simple and affordable. This could make robust indoor navigation more accessible for service robots, warehouse vehicles, and assistive devices, and it opens the door to future systems that blend depth with higher-level scene understanding for even more reliable autonomy.

Citation: Cabrera, J.J., Alfaro, M., Gil, A. et al. Robust place recognition under illumination changes using pseudo-LiDAR from omnidirectional images. Sci Rep 16, 8817 (2026). https://doi.org/10.1038/s41598-026-39848-y

Keywords: robot localization, 3D vision, place recognition, depth estimation, omnidirectional cameras