Clear Sky Science

Enhancing remote sensing road extraction via DS-Unet with complementary attention and surrogate gradients

Sharper Maps from Space

Modern digital maps rely heavily on satellite and aerial photos, but automatically tracing roads in these images is surprisingly hard. Shadows, trees, dirt tracks, and seasonal changes can confuse computer programs, leading to broken or false roads on the map. This paper introduces a new image-analysis method, called DS-Unet, that aims to draw cleaner, more complete road networks from remote sensing images, making future maps more reliable for navigation, planning, and disaster response.

Why Finding Roads Is So Tricky

From high above, real-world roads weave through cities, farmlands, and factories, often hidden by buildings, vegetation, and changing light. Traditional deep-learning systems, which already power many mapping services, look at images piece by piece. They are good at spotting local patterns, such as a strip of asphalt, but they struggle to understand how far-apart pieces connect into a continuous road. As a result, they may miss skinny streets in dense villages, break long highways into fragments, or mistake similar-looking features, like dirt paths or parking-lot markings, for real roads.

A New Way to Combine What the Network Sees

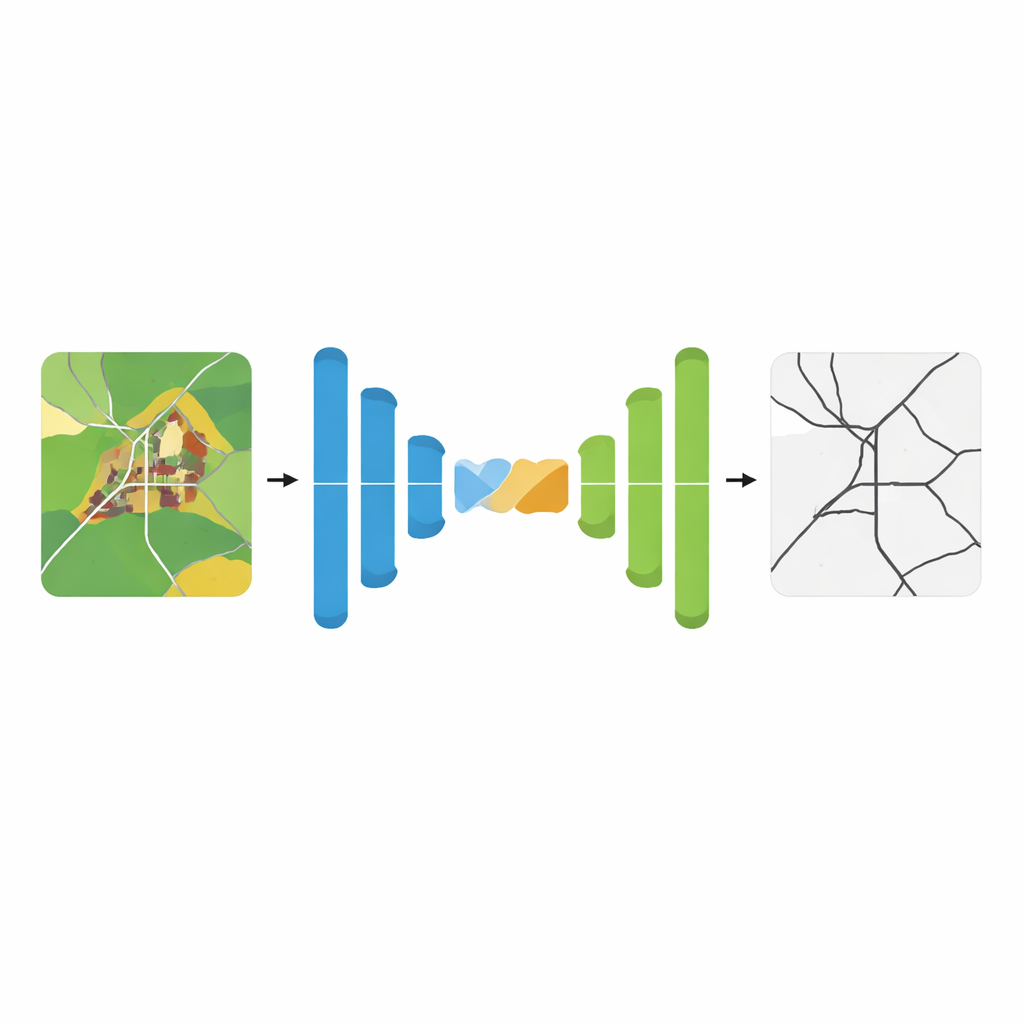

DS-Unet builds on a popular neural network design that processes an image through a contracting path (which summarizes details) and an expanding path (which rebuilds a full-resolution prediction). Classic designs link these paths with simple shortcuts that pass along early visual details. The authors argue that these shortcuts mix information in a crude way, often blending useful road edges with distracting background patterns. DS-Unet replaces them with a smarter connector, the Complementary Attention Fusion Module, which tries to highlight the right details while also keeping track of the big picture.

Letting the Network Focus and Look Wide

The new fusion module works in two stages that complement each other. First, a “discriminative” stage focuses on what makes roads stand out from their surroundings. It effectively subtracts broad, low-detail background patterns from the feature maps, acting like a high-pass filter that sharpens road boundaries and textures while suppressing clutter such as fields or rooftops. Next, a “global context” stage gathers information from across the entire image so that distant road segments can be treated as part of a single network. By combining these two views, the model is better at preserving tiny grid-like streets in villages and maintaining continuous loops and curves in industrial zones.
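The two stages can be illustrated with a toy sketch. This is not the authors' Complementary Attention Fusion Module, whose exact layers are not described here. It assumes a simple box blur as the "broad, low-detail background", subtracts it to get a high-pass response, and then uses an image-wide average of that response as a stand-in for global context. The function names `box_blur`, `high_pass`, and `global_context_weights` are hypothetical.

```python
import math


def box_blur(grid, radius=1):
    """Low-pass filter: each cell becomes the mean of its neighbourhood (clipped at edges)."""
    h, w = len(grid), len(grid[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            vals = [
                grid[j][i]
                for j in range(max(0, y - radius), min(h, y + radius + 1))
                for i in range(max(0, x - radius), min(w, x + radius + 1))
            ]
            out[y][x] = sum(vals) / len(vals)
    return out


def high_pass(grid, radius=1):
    """Discriminative step (assumed form): original minus its blurred background."""
    low = box_blur(grid, radius)
    return [[g - l for g, l in zip(g_row, l_row)] for g_row, l_row in zip(grid, low)]


def global_context_weights(channels):
    """Global step (assumed form): one sigmoid weight per channel, computed
    from the mean absolute response over the whole image."""
    weights = []
    for grid in channels:
        mean_abs = sum(abs(v) for row in grid for v in row) / (len(grid) * len(grid[0]))
        weights.append(1.0 / (1.0 + math.exp(-mean_abs)))
    return weights


# A 5x5 patch with a one-pixel-wide vertical "road" in column 2.
road = [[1.0 if x == 2 else 0.0 for x in range(5)] for _ in range(5)]
# A featureless background patch, such as an open field.
flat = [[0.5] * 5 for _ in range(5)]

sharp_road = high_pass(road)   # positive on the road, negative right beside it
sharp_flat = high_pass(flat)   # zero everywhere: uniform background is removed
weights = global_context_weights([sharp_road, sharp_flat])
```

In this sketch the uniform patch vanishes after the subtraction while the thin line stands out against its neighbours, and the channel that responds strongly anywhere in the image receives the larger weight everywhere. A real network would learn both the filter and the weighting rather than hard-coding them.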

Keeping the Learning Process Alive

Deep networks learn by adjusting many internal “neurons,” but a common activation rule, known for its simplicity and speed, can cause some neurons to stop updating altogether. When too many go silent, training becomes unstable and the final predictions lose fine detail. To avoid this, the authors adopt a technique they call SUGAR, which keeps the simple rule for forward calculations but uses a smoother, artificial gradient behind the scenes when the model updates itself. This trick keeps gradient signals flowing even when inputs are weak, so more neurons remain active and can contribute to learning subtle road patterns.
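A minimal single-neuron sketch shows why a surrogate gradient helps. It assumes the "simple rule" is the ReLU activation, which the dead-neuron description suggests, and it uses a sigmoid as the smooth stand-in gradient. The article does not give the exact surrogate function, so that choice and the helper names below are assumptions for illustration only.

```python
import math


def relu(x):
    """Forward rule: pass positive inputs, zero out the rest."""
    return max(0.0, x)


def relu_grad(x):
    """True ReLU derivative: exactly zero for non-positive inputs."""
    return 1.0 if x > 0 else 0.0


def surrogate_grad(x):
    """Assumed smooth stand-in for the derivative: a sigmoid, nonzero everywhere."""
    return 1.0 / (1.0 + math.exp(-x))


def train_neuron(w, x, target, grad_fn, steps=200, lr=0.1):
    """Gradient descent on y = relu(w * x) with squared error.

    The forward pass always uses relu; only the backward pass
    swaps in grad_fn for the activation's derivative.
    """
    for _ in range(steps):
        pre = w * x
        err = relu(pre) - target
        w -= lr * 2.0 * err * grad_fn(pre) * x  # chain rule
    return w


# Start with a negative weight, so the neuron outputs 0 and is "silent".
dead_w = train_neuron(-1.0, 1.0, 1.0, relu_grad)        # stays stuck at -1.0
alive_w = train_neuron(-1.0, 1.0, 1.0, surrogate_grad)  # recovers and approaches 1.0
```

With the true derivative the update is always zero, so the weight never moves. With the surrogate, a small signal still reaches the weight, pushes it into the active region, and training then proceeds normally.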

Proving It Works in the Real World

To test DS-Unet, the team used two well-known collections of satellite road images from different regions and landscapes. They cut the large images into manageable tiles, applied realistic variations in brightness, color, and orientation, and then compared their system against 17 leading road-extraction and segmentation methods, including both classic convolutional networks and newer transformer-based designs. Across all key measures of accuracy (how much of the true road area is captured, how often false roads are avoided, and how well the predicted and true road maps overlap), DS-Unet consistently came out on top, while still running fast enough to be practical for large-scale mapping.
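The three measures described above correspond to what are usually called recall, precision, and intersection over union (IoU). That mapping is an interpretation of the plain-language description, not a statement of the paper's exact evaluation code. A minimal sketch on binary masks, where 1 marks a road pixel and `road_metrics` is a hypothetical helper name:

```python
def road_metrics(pred, truth):
    """Compare a predicted road mask with the true mask, pixel by pixel."""
    tp = fp = fn = 0
    for p_row, t_row in zip(pred, truth):
        for p, t in zip(p_row, t_row):
            if p and t:
                tp += 1      # road correctly found
            elif p and not t:
                fp += 1      # false road
            elif t and not p:
                fn += 1      # missed road
    recall = tp / (tp + fn) if tp + fn else 0.0        # share of true road captured
    precision = tp / (tp + fp) if tp + fp else 0.0     # share of predicted road that is real
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0  # overlap of the two maps
    return {"recall": recall, "precision": precision, "iou": iou}


pred = [[1, 1, 0],
        [0, 1, 0]]
truth = [[1, 0, 0],
         [0, 1, 1]]
scores = road_metrics(pred, truth)
```

Here two pixels are correct, one is a false road, and one true road pixel is missed, so recall and precision are both 2/3 and IoU is 2/4.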

What This Means for Better Maps

In plain terms, this work shows that teaching a neural network to both filter out background clutter and understand the broader layout of a scene can deliver cleaner, more connected road maps from satellite imagery. Paired with a more stable learning rule that keeps the model’s internal units actively improving, DS-Unet traces narrow village streets, avoids mistaking dirt tracks for real roads, and links scattered road fragments into coherent networks better than the existing systems it was tested against. As mapping agencies and tech companies push toward fully automated, frequently updated maps, approaches like DS-Unet could play a key role in turning raw imagery into accurate, usable road information for everyday life.

Citation: Wang, J., Huang, Z., Ren, C. et al. Enhancing remote sensing road extraction via DS-Unet with complementary attention and surrogate gradients. Sci Rep 16, 9044 (2026). https://doi.org/10.1038/s41598-026-39811-x

Keywords: remote sensing roads, satellite mapping, deep learning segmentation, attention-based networks, aerial imagery analysis