Clear Sky Science

ACFM: adaptive channel weighted fusion algorithm for improving small object detection performance in UAV traffic

Seeing More from the Sky

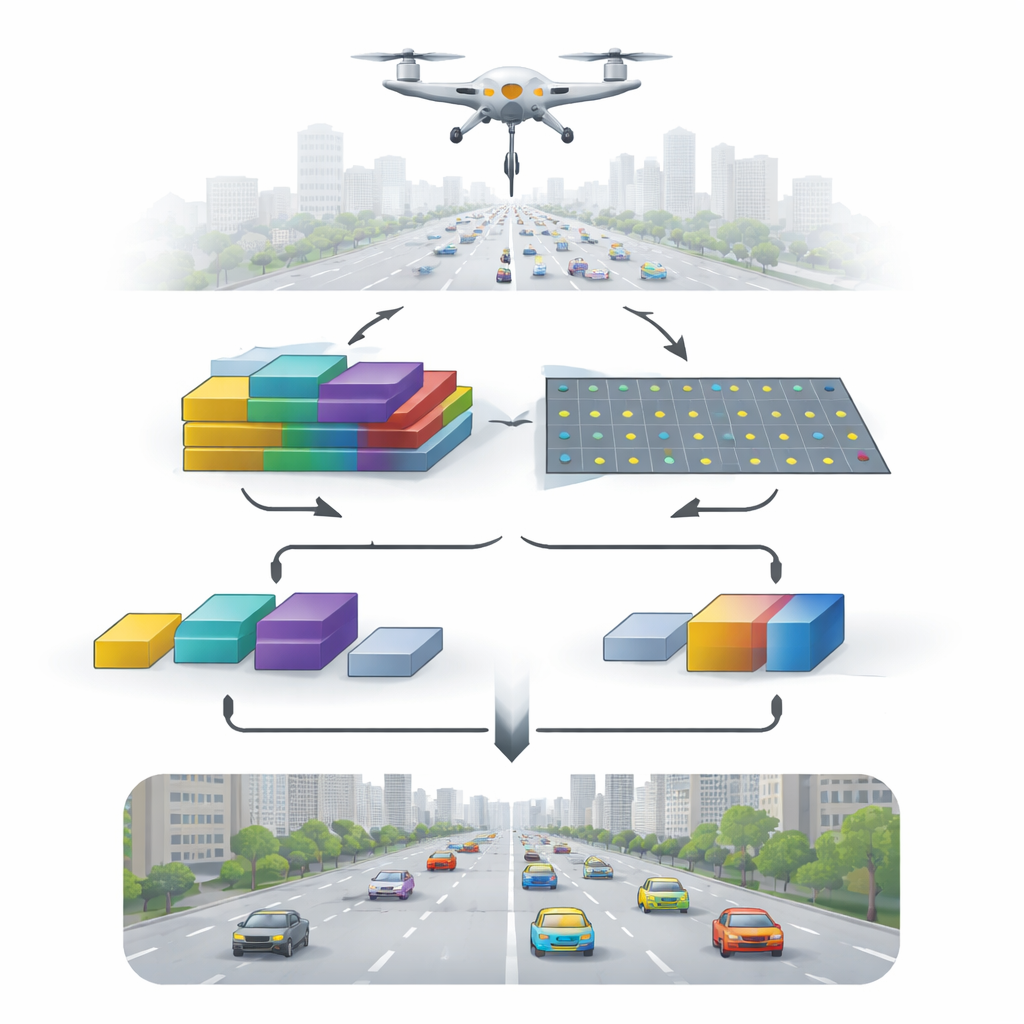

As drones increasingly monitor traffic, crowd safety, and disaster zones, they face a simple but stubborn problem: from high above, the things we care about—cars, buses, even people—often occupy just a few pixels. These tiny specks are easy for algorithms to miss, especially in busy city streets full of shadows, signs, and motion blur. This paper introduces a new way to help computers “see” such small objects more clearly in drone footage, without slowing detection to a crawl.

Why Tiny Dots Matter

Drone cameras capture wide scenes from high altitudes, so a single image can contain highways, buildings, trees, and dozens of vehicles. Most of those vehicles appear very small and may overlap or hide behind one another. Traditional deep-learning detectors are excellent at finding large, clear objects, but they tend to lose track of fine details as information flows through deeper layers of the network. The result is that small vehicles blend into the background, particularly in crowded junctions, low light, or slightly blurred footage. Existing multi-scale methods help somewhat by combining information from different layers of the network, but they usually rely on fixed, pre-set rules and struggle to adapt when the scene becomes especially cluttered or complex.

A Smarter Way to Blend Clues

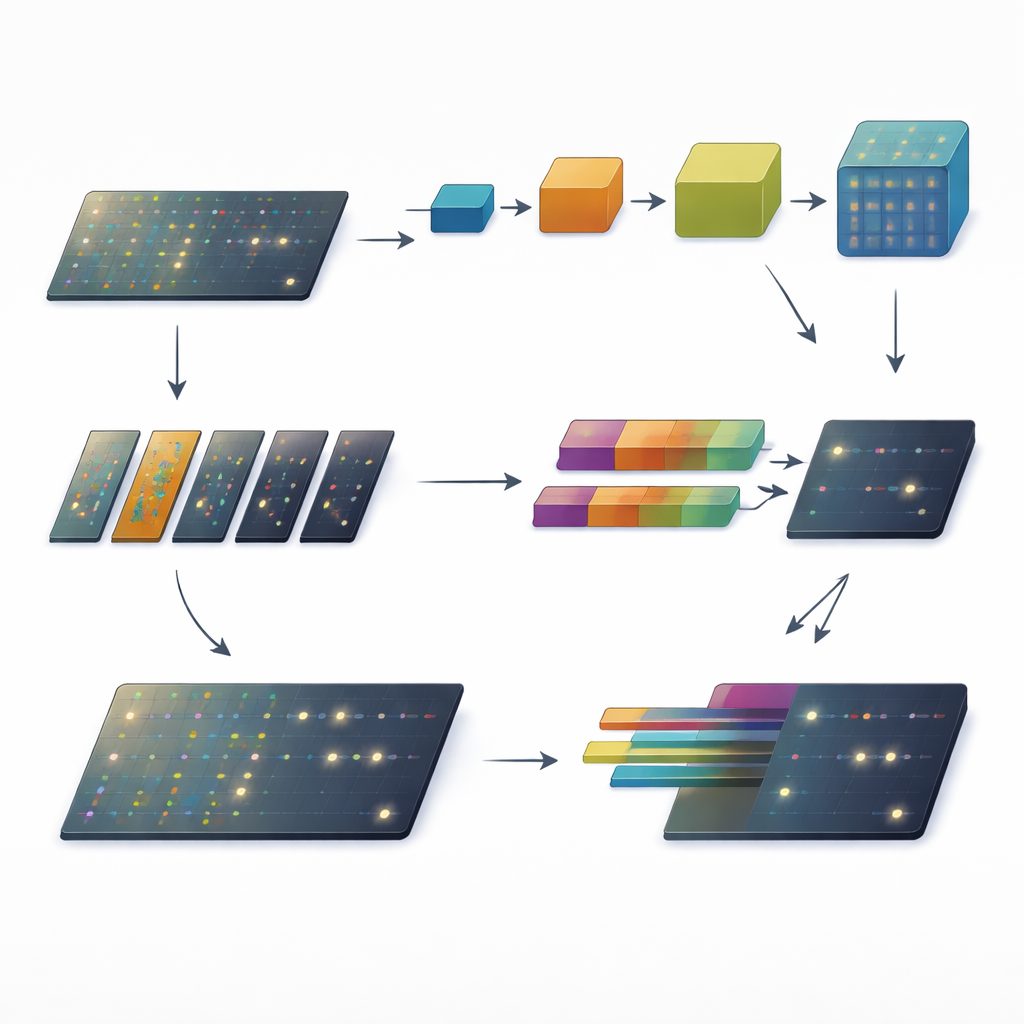

The authors propose an adaptive channel weighted fusion module, or ACFM, designed to plug into existing object detectors and make them better at spotting small targets in drone traffic scenes. Instead of treating all visual information equally, ACFM runs two specialized branches in parallel and then fuses their outputs in a scene-dependent way. One branch refines how features are handled across scales so that fine spatial details are not washed out. The other branch acts like a spotlight, boosting truly important small-object cues while suppressing distracting background patterns. Crucially, the fusion between these branches is not fixed. ACFM adjusts how much it trusts each branch according to the current image, allowing the system to respond differently to a quiet highway than to a dense intersection.

Keeping Details Across Scales

In the first branch, called the multi-scale refinement block, the network sends features through a pair of paths. One path simply preserves the original information, ensuring that the fine, high-resolution details survive. The other path compresses and then expands the image representation, encouraging the model to understand the broader context of where small vehicles are located within the scene. At the end, these paths are merged so that each output pixel benefits both from sharp local detail and from an understanding of the surrounding area. This makes it easier for the detector to draw tighter, more consistent bounding boxes around small cars and buses of different sizes, even when the background is busy or partially obscured.
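The two-path idea can be illustrated with a small NumPy sketch. This is not the authors' implementation: the function names, the 2x2 average pooling, the nearest-neighbour upsampling, and the plain average used to merge the paths are all assumptions chosen to keep the example short. In a real detector these steps would be learned convolutional layers.

```python
import numpy as np

def avg_pool2x(x):
    """Halve height and width by averaging each 2x2 window.
    x has shape (channels, height, width); height and width must be even."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).mean(axis=(2, 4))

def upsample2x(x):
    """Double height and width by repeating each value (nearest neighbour)."""
    return x.repeat(2, axis=1).repeat(2, axis=2)

def multi_scale_refine(x):
    """Hypothetical stand-in for the multi-scale refinement block."""
    detail = x                             # path 1: keep the original features
    context = upsample2x(avg_pool2x(x))    # path 2: compress, then expand
    return 0.5 * (detail + context)        # merge: simple average (assumption)

# Toy feature map: one channel, 4x4, with a single bright "small object" pixel.
feat = np.zeros((1, 4, 4))
feat[0, 1, 1] = 4.0

refined = multi_scale_refine(feat)
print(refined[0])
# The object pixel stays the strongest response (2.5), while its 2x2
# neighbourhood picks up a weaker context signal (0.5).
```

The point of the sketch is that the merged output still peaks at the object's exact location while also carrying a coarser hint about where in the scene that object sits.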

Turning Down the Background Noise

The second branch focuses on attention. It splits the feature channels into groups and, for each group, learns a sparse “mask” that highlights only the most promising regions. Areas that look like background—road surfaces, building roofs, tree canopies—are dialed down, while tiny but meaningful signals, such as the reflections and edges of vehicles, are amplified. By combining these sharpened details back with the original features in a controlled way, this grouped sparse attention branch produces a cleaner, more discriminative view of the scene. This makes it less likely that the detector will confuse patterns on the asphalt or shadows cast by buildings with real vehicles.
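A rough sketch of grouped sparse attention is shown below, under loudly stated assumptions. The paper's mask is learned; here a hand-written top-k rule stands in for it, keeping only the `k` most active positions in each channel group. The names `grouped_sparse_attention`, `groups`, `k`, and `alpha` are hypothetical and do not come from the source.

```python
import numpy as np

def grouped_sparse_attention(x, groups=2, k=1, alpha=1.0):
    """Illustrative only: boost the k most active positions in each channel group.
    x has shape (channels, height, width); channels must divide evenly by groups."""
    c, h, w = x.shape
    assert c % groups == 0, "channels must split evenly into groups"
    out = np.empty_like(x)
    for idx in np.split(np.arange(c), groups):
        # Per-position activity of this group, averaged over its channels.
        saliency = np.abs(x[idx]).mean(axis=0)
        # Value of the k-th largest position; ties can keep more than k.
        threshold = np.sort(saliency, axis=None)[-k]
        mask = (saliency >= threshold).astype(x.dtype)   # sparse 0/1 mask
        # Add the masked features back onto the originals (residual style).
        out[idx] = x[idx] + alpha * mask * x[idx]
    return out

# Toy input: 4 channels of faint "asphalt" (0.1) with one vehicle-like peak.
x = np.full((4, 4, 4), 0.1)
x[:, 2, 3] = 1.0

out = grouped_sparse_attention(x, groups=2, k=1, alpha=1.0)
print(out[0])
# The peak at row 2, column 3 is doubled; background positions are unchanged,
# so they shrink relative to the object.
```

In this simplified version the background is left untouched rather than actively reduced; the effect is relative. The `alpha` factor plays the role of the "controlled way" in which sharpened details are added back to the original features.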

Letting the Scene Choose the Mix

The final piece of ACFM is a channel-level adaptive weighting mechanism that decides, channel by channel, how much to rely on each branch. It first summarizes what is happening in the whole image, then uses a lightweight operation to infer a set of weights between zero and one. If the scene is simple and the objects are well separated, the network may lean more on the multi-scale refinement. If the scene is dense, cluttered, or noisy, it can shift more emphasis to the attention branch that suppresses background distractions. This dynamic balancing replaces rigid, hand-crafted fusion rules with an automatic, data-driven strategy, enabling the detector to respond flexibly as conditions change from one frame to the next.
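The weighting step can be sketched as a per-channel gate. The details here are assumptions made for illustration: the image summary is taken to be global average pooling, the lightweight operation is a single linear layer followed by a sigmoid, and the two branches are blended as a weighted average whose weights sum to one. The parameters `weight` and `bias` are hypothetical; in a trained model they would be learned.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def adaptive_channel_fusion(refined, attended, weight, bias):
    """Illustrative channel-wise gate between two branch outputs.
    refined, attended: (channels, height, width)
    weight: (channels, channels), bias: (channels,)"""
    # Summarize the whole image: one number per channel.
    summary = (refined + attended).mean(axis=(1, 2))
    # Lightweight operation: linear layer + sigmoid gives weights in [0, 1].
    gate = sigmoid(weight @ summary + bias)
    g = gate[:, None, None]                       # broadcast over height, width
    fused = g * refined + (1.0 - g) * attended    # per-channel blend
    return fused, gate

channels = 2
refined = np.ones((channels, 2, 2))         # stand-in for the refinement branch
attended = 3.0 * np.ones((channels, 2, 2))  # stand-in for the attention branch

# With zero parameters the gate is 0.5 everywhere: an even mix of both branches.
fused_even, gate_even = adaptive_channel_fusion(
    refined, attended, np.zeros((channels, channels)), np.zeros(channels))

# A strongly skewed bias makes channel 0 trust the refinement branch and
# channel 1 trust the attention branch.
fused_skew, gate_skew = adaptive_channel_fusion(
    refined, attended, np.zeros((channels, channels)), np.array([50.0, -50.0]))

print(gate_even, gate_skew)
```

Because the gate depends on the image summary, two different frames passing through the same trained parameters would generally receive different mixes, which is the scene-dependent behaviour described above.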

Sharper Eyes for Drone Traffic

When plugged into GFL (Generalized Focal Loss), a widely used object detector, and tested on three public drone traffic datasets, ACFM consistently improved detection scores, especially on challenging sets with many small, overlapping vehicles. The gains in accuracy came with little extra computational cost, so the enhanced system can still operate close to real time, a critical requirement for practical traffic surveillance. For non-specialists, the takeaway is straightforward: by preserving details, suppressing noise, and adapting how features are combined based on the scene, ACFM helps drones act more like attentive human observers and less like rigid pattern matchers, offering a more reliable foundation for future smart-city and aerial monitoring applications.

Citation: Liu, S., Zhu, H., Yuan, Z. et al. ACFM: adaptive channel weighted fusion algorithm for improving small object detection performance in UAV traffic. Sci Rep 16, 8366 (2026). https://doi.org/10.1038/s41598-026-39789-6

Keywords: drone traffic monitoring, small object detection, computer vision, attention mechanisms, multi-scale feature fusion