Clear Sky Science · en

Measurement noise attenuation in modified Smith predictor and automatic offset controllers for integrator plus dead-time system

Why this matters for everyday technology

Many devices we rely on—from chemical plants and power systems to cars and small lab rigs—must react to sensor readings that arrive late and are polluted by noise. This paper asks a simple but crucial question: when signals are delayed and noisy, which type of automatic controller keeps things stable, accurate, and gentle on the equipment? The authors compare a popular prediction-based method with a newer approach that actively estimates and cancels disturbances, revealing why one is far more reliable in the messy real world.

Delayed reactions and shaky sensor readings

In many processes, changing an input (like a heater power or valve position) does not affect the output immediately. There is a built-in delay while heat spreads, chemicals mix, or mechanical parts move. Engineers often describe such systems as an “integrator plus dead time”: the output keeps accumulating the effect of the input, but only after a waiting period. At the same time, sensors measuring temperature, flow, or position always contain some random noise. A controller must therefore steer a system whose response is both delayed and observed through a jittery lens. If this is done poorly, the control signal can swing wildly, wear out actuators, and still fail to reach the desired target value.
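To make the "integrator plus dead time" idea concrete, here is a minimal Python sketch. It assumes the common textbook form y'(t) = K·u(t - L), i.e. the transfer function K·e^(-Ls)/s, simulated with a simple forward-Euler step. The function name `simulate_ipdt` and its parameters (gain `K`, sampling period `Ts`, dead time expressed as `dead_steps` samples, Gaussian sensor noise `noise_std`) are illustrative choices, not taken from the paper.

```python
import random


def simulate_ipdt(u_seq, K=1.0, dead_steps=10, Ts=0.1, noise_std=0.0, seed=0):
    """Forward-Euler simulation of an integrator plus dead time, y'(t) = K*u(t - L).

    The dead time is L = dead_steps * Ts. Returns the true output and a noisy
    measurement of it, one sample per input sample.
    """
    rng = random.Random(seed)
    y = 0.0
    true_y, meas_y = [], []
    for k in range(len(u_seq)):
        # The input reaches the integrator only after dead_steps samples.
        u_delayed = u_seq[k - dead_steps] if k >= dead_steps else 0.0
        # Integrator: the output keeps accumulating the (delayed) input.
        y += Ts * K * u_delayed
        true_y.append(y)
        # Sensor: true output plus zero-mean Gaussian noise.
        meas_y.append(y + rng.gauss(0.0, noise_std))
    return true_y, meas_y


# Usage: unit step input, 1 s of dead time, 5 s of simulated time.
true_y, _ = simulate_ipdt([1.0] * 50)
print(true_y[9], round(true_y[-1], 6))  # prints: 0.0 4.0
```

With `K=1`, `Ts=0.1` and `dead_steps=10`, the output stays at zero for the first ten samples (one second of dead time) and then ramps up by 0.1 per sample. Setting `noise_std` above zero shows the jittery signal a controller would actually receive.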

Old predictor versus new offset remover

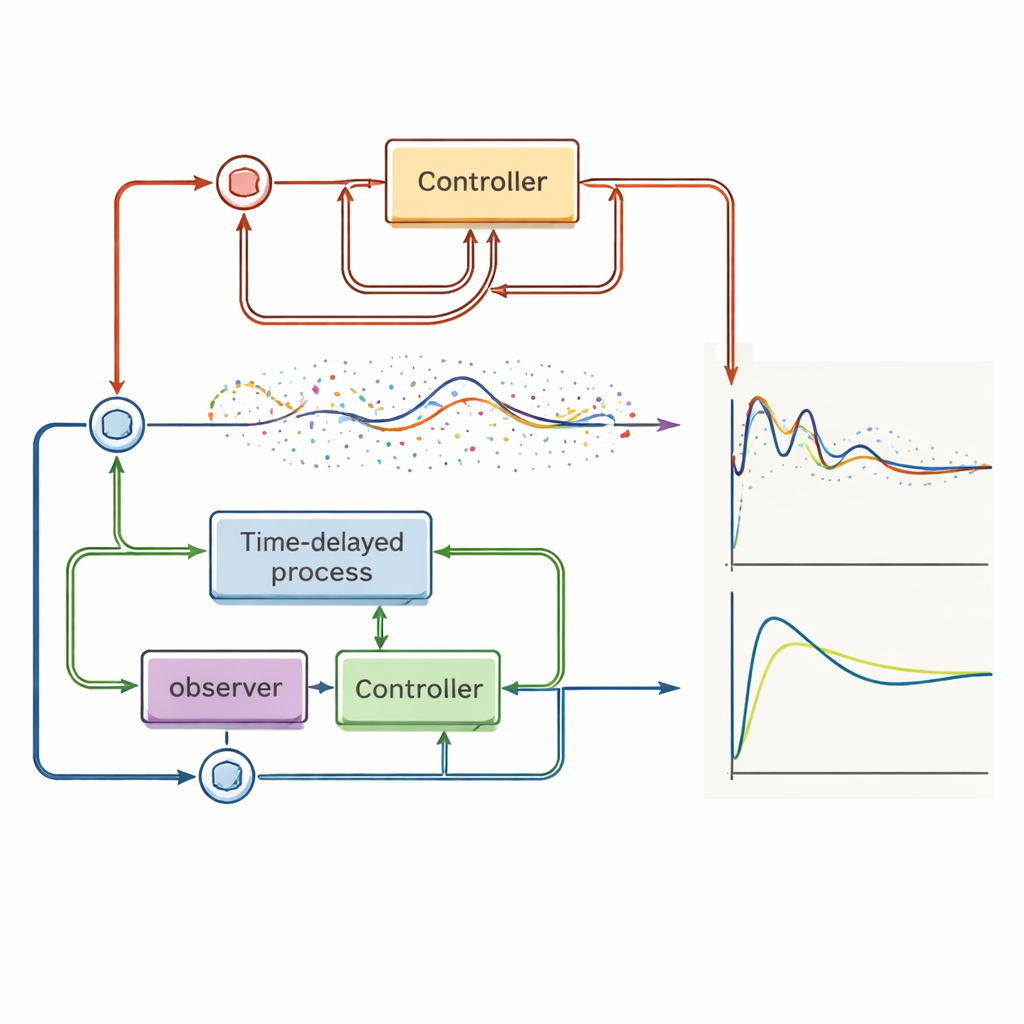

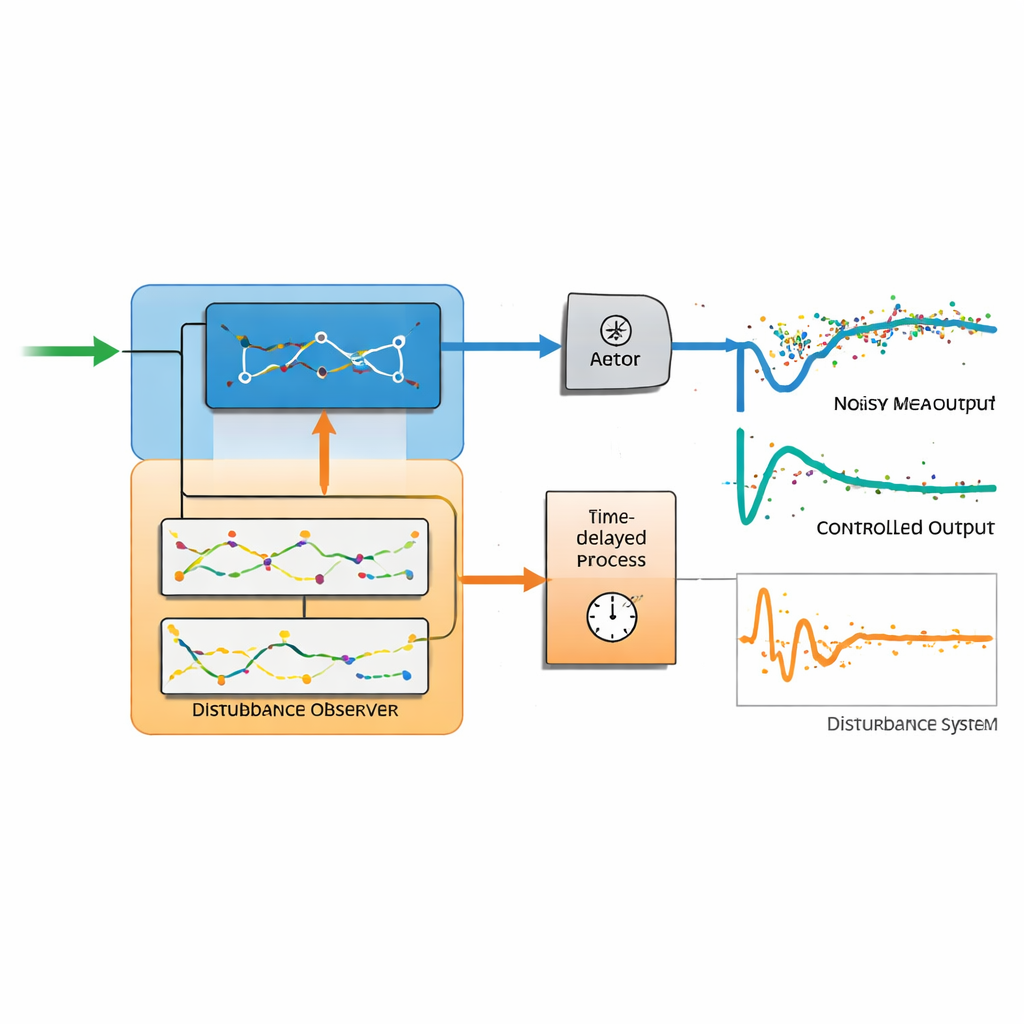

The classic Smith predictor and its modern variant, the Åström–Smith predictor, tackle delay by building an internal model of the process and using it to predict the future output. Under ideal conditions, this can give fast, sharp responses. The competing design examined here, called the automatic offset controller, takes a different route. It combines an ordinary stabilizing controller with a disturbance observer, a module that infers the hidden disturbances acting on the input and cancels them automatically. The key twist is that this observer uses a full internal model of the delayed system, along with carefully designed low‑pass filters and, if needed, higher‑order derivatives of the output. This structure lets engineers tune how quickly disturbances are reconstructed without affecting how the system tracks a desired setpoint.
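The observer idea can be illustrated with a deliberately simplified discrete-time loop. This is a sketch under stated assumptions, not the authors' automatic offset controller. The plant is an integrator plus dead time with a step input disturbance. The stabilizing controller is a plain P controller tuned with the textbook double-real-pole rule Kp = 1/(e·K·L). The observer inverts the model by dividing each output increment by Ts·K, subtracting the known delayed control input, and smoothing the result with a first-order low-pass filter of time constant `Tf`. All names (`run_loop`, `use_dob`, `d_hat`, `Tf`) are hypothetical.

```python
import math
import random


def run_loop(steps=800, r=1.0, d_value=0.5, d_start=200, use_dob=True,
             K=1.0, dead_steps=10, Ts=0.1, Tf=1.0, noise_std=0.0, seed=0):
    """P-controlled IPDT loop with an optional input-disturbance observer (simplified sketch)."""
    rng = random.Random(seed)
    L = dead_steps * Ts
    Kp = 1.0 / (math.e * K * L)  # textbook double-real-pole P tuning for an IPDT plant
    y, y_meas_prev, d_hat = 0.0, 0.0, 0.0
    u_hist, d_hist, y_hist = [], [], []
    for k in range(steps):
        d_hist.append(d_value if k >= d_start else 0.0)  # step input disturbance
        j = k - dead_steps
        u_del = u_hist[j] if j >= 0 else 0.0
        d_del = d_hist[j] if j >= 0 else 0.0
        # Plant: integrator driven by the delayed control input plus disturbance.
        y += Ts * K * (u_del + d_del)
        y_meas = y + rng.gauss(0.0, noise_std)
        if use_dob:
            # Model inversion: output increment / (Ts*K) equals the delayed total input;
            # removing the known delayed control leaves the delayed disturbance.
            v = (y_meas - y_meas_prev) / (Ts * K) - u_del
            # First-order low-pass filter Q(s) = 1/(Tf*s + 1), forward Euler.
            d_hat += (Ts / Tf) * (v - d_hat)
        y_meas_prev = y_meas
        # Stabilizing P action plus cancellation of the estimated disturbance.
        u = Kp * (r - y_meas) - d_hat
        u_hist.append(u)
        y_hist.append(y)
    return y_hist, u_hist


y_dob, _ = run_loop(use_dob=True)
y_p, _ = run_loop(use_dob=False)
print(round(y_dob[-1], 3), round(y_p[-1], 3))
```

Without the observer, a constant input disturbance `d` leaves the P-controlled loop with a permanent offset: the output should settle at r + d/Kp instead of r. With the observer, `d_hat` converges to the delayed disturbance and the output returns to the setpoint. In this noise-free, perfectly modeled case the estimate does not depend on the control input at all, so `Tf` changes only how fast disturbances are rejected, not the setpoint response. This loosely mirrors the decoupling described above.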

What happens when noise is real, not ideal

When the authors add realistic measurement noise in simulations and experiments, the difference between the two approaches becomes stark. The predictor-based controller, which relies on several marginally stable integrator blocks, becomes extremely sensitive to noise. Even for noise levels as low as about one percent of the signal, the control effort explodes—hundreds to thousands of times larger than with the automatic offset controller—and the actuator signal becomes violently jittery. Worse, the predictor can no longer guarantee that the output eventually matches the setpoint: persistent offsets and even instability appear, especially when actuators hit their saturation limits. By contrast, the automatic offset controller maintains smooth control signals, effectively rejects constant disturbances, and keeps the output close to the target value, thanks to its filtering and disturbance-estimation structure.
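Why filtering matters can be seen by feeding pure sensor noise into the kind of output differentiation an observer relies on. The sketch below assumes white Gaussian noise and a first-order filter Q(s) = 1/(Tf·s + 1). It compares the total variation (the sum of absolute sample-to-sample changes, a common measure of how jittery a signal is) of a raw backward-difference derivative against its filtered version. The function names and parameter values are illustrative assumptions. The paper's own noise measures and filter designs may differ.

```python
import random


def total_variation(signal):
    """Sum of absolute sample-to-sample changes, a common jitter measure."""
    return sum(abs(b - a) for a, b in zip(signal, signal[1:]))


def noisy_derivative_demo(n=5000, Ts=0.01, noise_std=0.01, Tf=1.0, seed=1):
    """Differentiate pure measurement noise with and without low-pass filtering."""
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, noise_std) for _ in range(n)]
    # Raw backward difference: the noise is divided by Ts, so it grows as Ts shrinks.
    raw = [(b - a) / Ts for a, b in zip(noise, noise[1:])]
    # Same estimate passed through Q(s) = 1/(Tf*s + 1), forward-Euler discretized.
    alpha = Ts / Tf
    filtered, state = [], 0.0
    for x in raw:
        state += alpha * (x - state)
        filtered.append(state)
    return total_variation(raw), total_variation(filtered)


tv_raw, tv_filtered = noisy_derivative_demo()
print(f"raw TV: {tv_raw:.1f}, filtered TV: {tv_filtered:.1f}")
```

With these defaults, the filtered estimate's total variation should come out far more than an order of magnitude smaller than the raw one. The price of heavier filtering (a larger `Tf`) is a slower disturbance estimate, and that is the trade-off the filter design has to balance.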

Putting the methods to practical tests

The paper does not stop at abstract models. The authors apply both controllers to an unstable chemical reactor approximated by a simple delay-dominated model, and to a real thermal laboratory setup built around a lamp, temperature sensor, and cooling fan. In the unstable case, the automatic offset controller still works reliably, although its tuning must be softened to avoid overshoot, while the predictor-based method suffers from growing errors as noise intensifies. On the thermal plant, the automatic offset controller produces nearly time-optimal responses that are smooth in both temperature and control effort, even when the fan introduces sudden changes. The predictor-based controller, in contrast, shows visible steady-state errors and noticeably slower, less trustworthy behavior whenever realistic noise and actuator limits are present.

What this means for future controllers

From a lay perspective, the takeaway is clear: a controller that merely predicts the future based on an ideal model can look impressive on paper, but may misbehave badly once real‑world noise and limits appear. The automatic offset controller, with its built‑in disturbance observer and carefully filtered internal model, proves more robust, more accurate, and easier to tune across a wide range of delayed processes. The authors conclude that while a modified Smith predictor can still be useful in niche, low‑noise situations, a disturbance‑observer‑based design is a simpler and more reliable default choice for modern control systems where sensors are imperfect and stability truly matters.

Citation: Huba, M., Bistak, P. & Vrancic, D. Measurement noise attenuation in modified Smith predictor and automatic offset controllers for integrator plus dead-time system. Sci Rep 16, 8335 (2026). https://doi.org/10.1038/s41598-026-39732-9

Keywords: time-delay control, disturbance observer, measurement noise, automatic offset controller, Smith predictor