Clear Sky Science

Enhanced breast cancer detection framework based on YOLOv11n with multi-scale feature calibration

Why finding tiny warning signs matters

Breast cancer is far easier to treat when it is caught early, but the earliest warning signs can be almost invisible even to trained experts. On microscope slides, dangerous cells may be tiny, oddly shaped, and blurred into the surrounding tissue. This study introduces an artificial intelligence (AI) system designed specifically to spot these subtle changes more reliably and quickly, potentially helping doctors catch cancers sooner and with greater confidence.

The challenge of seeing the nearly invisible

Reading medical images, from mammograms to microscope slides, depends heavily on a doctor’s experience and moment-to-moment focus. Small tumors or borderline cases can hide in dense tissue or look very similar to harmless changes. Computer vision tools have begun to assist, but many existing systems struggle with the tiniest lesions, with tumors of unusual shapes, and with fuzzy edges that do not clearly separate healthy and abnormal tissue. These weaknesses are especially serious for intermediate-grade tumors, which are both common and clinically important but hard to tell apart from neighboring grades.

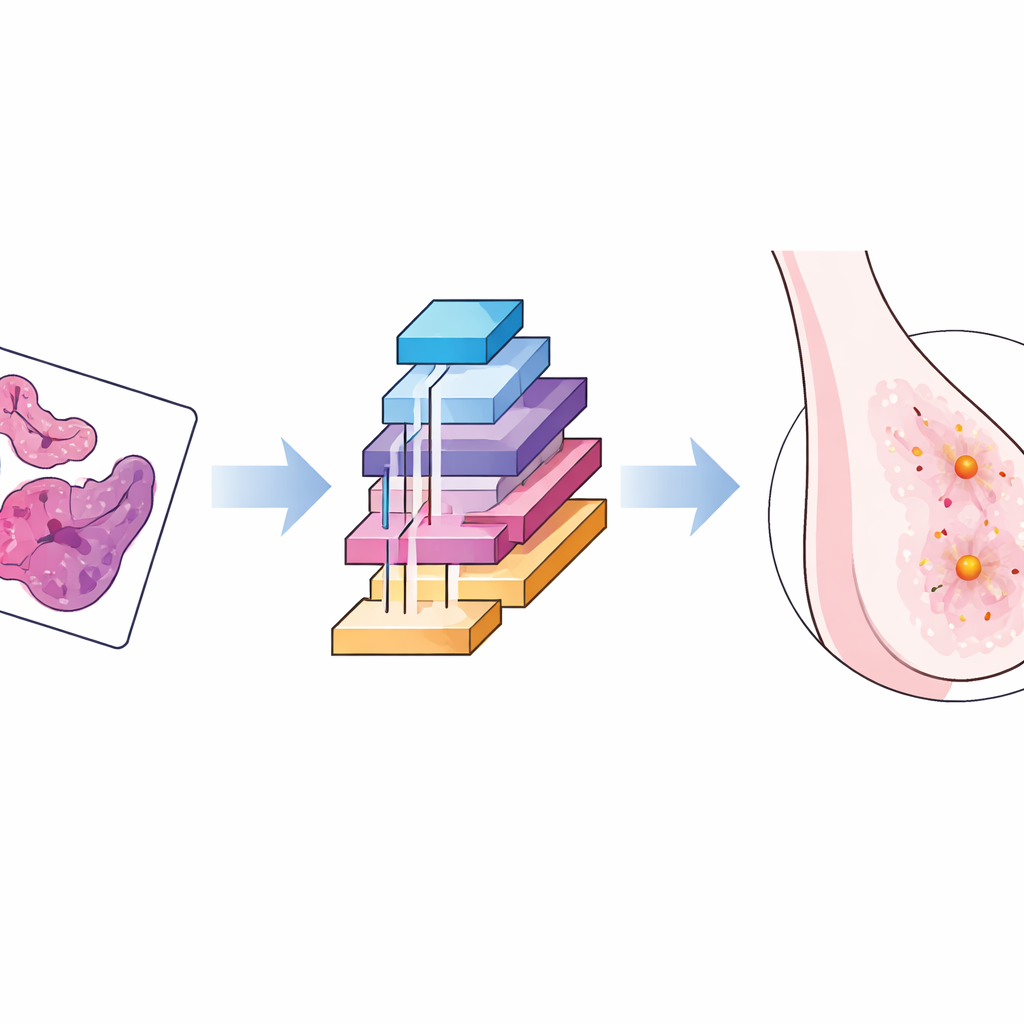

An AI model tailored for breast tissue images

The researchers built on YOLO, a fast family of object-detection algorithms, selecting a lightweight version (YOLOv11n) that can run quickly even on modest hardware. They then reshaped its inner workings to better match the quirks of breast cancer images taken under the microscope. The new framework adds three key building blocks that work together: one that adapts to distortions and changes in scale, one that learns to focus on the most informative channels of visual data while ignoring background clutter, and one that carefully calibrates context and spatial detail so that small lesions stand out more clearly from surrounding structures.

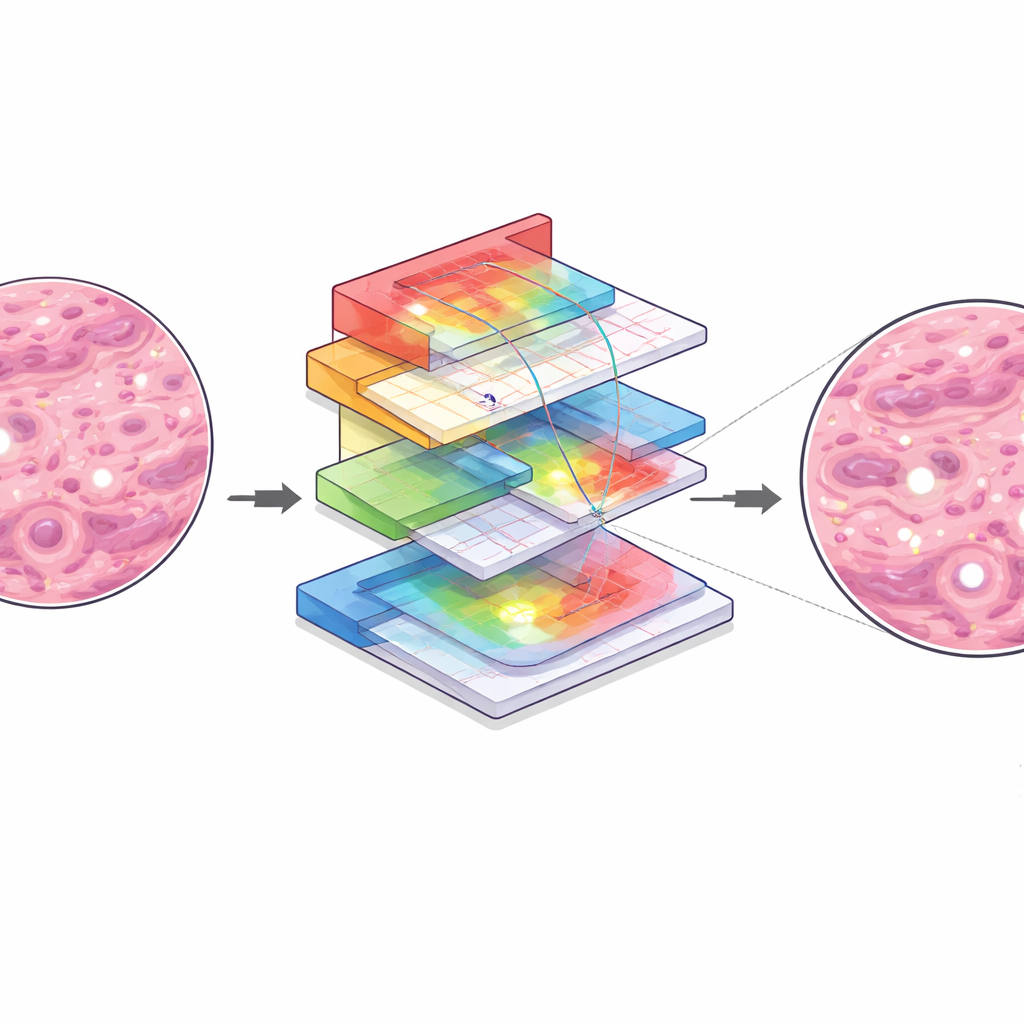

How the smarter vision system works inside

In simple terms, the first module lets the AI “flex” its viewing window, adjusting how it samples an image so that both tiny spots and larger structures are analyzed with equal care. The second module acts like a set of tunable spotlights, emphasizing image patterns most likely to indicate disease while dimming unhelpful textures. The third module looks at the broader neighborhood around each pixel and then fine-tunes the alignment between coarse, high-level patterns and fine-grained details, so that the system’s internal map of “suspicious” regions lines up closely with the true lesion boundaries. Together, these steps help the AI distinguish between very similar tumor grades and reduce confusion between abnormal tissue and normal background.
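To make the "tunable spotlights" idea concrete, here is a minimal sketch of channel gating in plain Python. It is an illustration only, not the authors' module: it assumes a simplified squeeze-and-excitation-style gate with one weight and one bias per channel, and the names `channel_gate`, `weights`, and `biases` are hypothetical.

```python
import math


def channel_gate(feature_maps, weights, biases):
    """Rescale each channel by a sigmoid gate computed from its global mean.

    feature_maps: list of channels, each a 2D list of floats (H x W).
    weights, biases: one scalar per channel (hypothetical stand-ins for
    parameters a real network would learn during training).
    """
    gated, gates = [], []
    for channel, w, b in zip(feature_maps, weights, biases):
        # "Squeeze": summarise the whole channel as a single number.
        values = [v for row in channel for v in row]
        mean = sum(values) / len(values)
        # "Excite": map that summary to a gate strictly between 0 and 1.
        gate = 1.0 / (1.0 + math.exp(-(w * mean + b)))
        gates.append(gate)
        # Brighten or dim every pixel of the channel by its gate.
        gated.append([[v * gate for v in row] for row in channel])
    return gated, gates


# Two identical 2x2 channels; the assumed weights favour the first one.
maps = [[[1.0, 1.0], [1.0, 1.0]], [[1.0, 1.0], [1.0, 1.0]]]
gated, gates = channel_gate(maps, weights=[4.0, -4.0], biases=[0.0, 0.0])
```

In this toy case the first channel keeps almost all of its signal while the second is nearly switched off, which is the "emphasize and dim" behavior described above. A trained network would learn the gate parameters from data rather than having them set by hand.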

Putting the system to the test

To evaluate their approach, the authors used a public collection of over five thousand high-resolution breast pathology images, covering benign samples and several grades of malignant tumors. They trained and tested their model under the same conditions as several state-of-the-art detectors, including newer YOLO versions and a popular transformer-based method. The enhanced system achieved the best overall accuracy, with higher precision and a higher score averaged across all categories. It was especially effective for the difficult mid-grade tumors, where its detection scores rose sharply compared with the unmodified YOLOv11n baseline. Importantly, it maintained very high processing speed, suggesting it could handle large slide sets or real-time workloads in clinics.
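For readers curious how a detector's precision is usually scored, the sketch below shows one common recipe: a predicted box counts as correct when it overlaps an unmatched true lesion box by enough, measured as intersection over union (IoU). The 0.5 threshold, the single-class setup, and the function names are assumptions for illustration; the summary does not state the exact evaluation protocol the authors used.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(predictions, ground_truth, iou_threshold=0.5):
    """Score single-class detections.

    predictions: list of (confidence, box); ground_truth: list of boxes.
    Each true box can be claimed by at most one prediction, and
    higher-confidence predictions get first pick.
    """
    matched = set()
    true_pos = 0
    for _, box in sorted(predictions, key=lambda p: p[0], reverse=True):
        best_idx, best_iou = None, 0.0
        for idx, gt in enumerate(ground_truth):
            if idx in matched:
                continue
            overlap = iou(box, gt)
            if overlap > best_iou:
                best_idx, best_iou = idx, overlap
        if best_idx is not None and best_iou >= iou_threshold:
            matched.add(best_idx)
            true_pos += 1
    precision = true_pos / len(predictions) if predictions else 0.0
    recall = true_pos / len(ground_truth) if ground_truth else 0.0
    return precision, recall
```

Precision answers "of everything the system flagged, how much was real?", while recall answers "of everything real, how much did it flag?". Averaged scores across categories are typically built by repeating this kind of calculation per class and per confidence level.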

Resilience, shortcomings, and next steps

The team also examined how the system behaves when images are corrupted by noise, blur, or brightness changes, all common problems in everyday clinical practice. While performance dropped somewhat, as expected, the new modules helped the AI degrade more gracefully than the baseline model, retaining more correct detections of small lesions. At the same time, the authors highlight remaining weaknesses: the system can still struggle on borderline cases between certain tumor grades, may misplace lesion boundaries when tissue structures overlap, and can occasionally mistake staining artifacts for cancer. They note that the study relies on a single dataset and retrospective testing, so wider clinical trials and multi-hospital data are needed before routine use.
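A robustness check of this kind can be sketched by deliberately damaging test images and re-running the detector on them. The snippet below is a rough illustration on a tiny grayscale image with pixel values between 0 and 1; the three corruption functions, their parameters, and the 3x3 blur are assumptions chosen for simplicity, not the settings used in the study.

```python
import random


def clamp(value, low=0.0, high=1.0):
    """Keep a pixel value inside the valid intensity range."""
    return max(low, min(high, value))


def add_gaussian_noise(image, sigma, seed=0):
    """Add random grain to every pixel (seeded so runs are repeatable)."""
    rng = random.Random(seed)
    return [[clamp(p + rng.gauss(0.0, sigma)) for p in row] for row in image]


def shift_brightness(image, delta):
    """Lighten (delta > 0) or darken (delta < 0) the whole image."""
    return [[clamp(p + delta) for p in row] for row in image]


def box_blur(image):
    """3x3 mean filter; edge pixels average over the neighbours that exist."""
    h, w = len(image), len(image[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [image[j][i]
                      for j in range(max(0, y - 1), min(h, y + 2))
                      for i in range(max(0, x - 1), min(w, x + 2))]
            row.append(sum(window) / len(window))
        out.append(row)
    return out


# A single bright "lesion" pixel on a dark background.
image = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]
blurred = box_blur(image)
brighter = shift_brightness(image, 0.5)
noisy = add_gaussian_noise(image, sigma=0.1)
```

Blurring spreads the single bright pixel into its neighbours, which is exactly why small lesions are the first thing to disappear when image quality drops. Comparing detection scores on the clean and damaged versions shows how gracefully a model degrades.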

What this means for patients and doctors

For a lay reader, the main message is that this work refines an AI “second pair of eyes” to better catch small and subtle breast cancer lesions, particularly those that are hardest for both humans and machines to classify. By more reliably flagging suspicious areas on pathology slides, and doing so at very high speed, such systems could support pathologists in making earlier and more accurate diagnoses. While this tool does not replace expert judgment, it represents a step toward safer, more consistent screening and could ultimately contribute to better outcomes by reducing missed cancers and guiding timely treatment.

Citation: He, Z., Zhang, C., Liang, C. et al. Enhanced breast cancer detection framework based on YOLOv11n with multi-scale feature calibration. Sci Rep 16, 8535 (2026). https://doi.org/10.1038/s41598-026-39723-w

Keywords: breast cancer detection, medical imaging AI, pathology deep learning, small lesion detection, YOLO object detection