Clear Sky Science

Gaussian-Haar transform fusion enhances DEIM for pomegranate maturity detection

Smarter Harvests for a Growing World

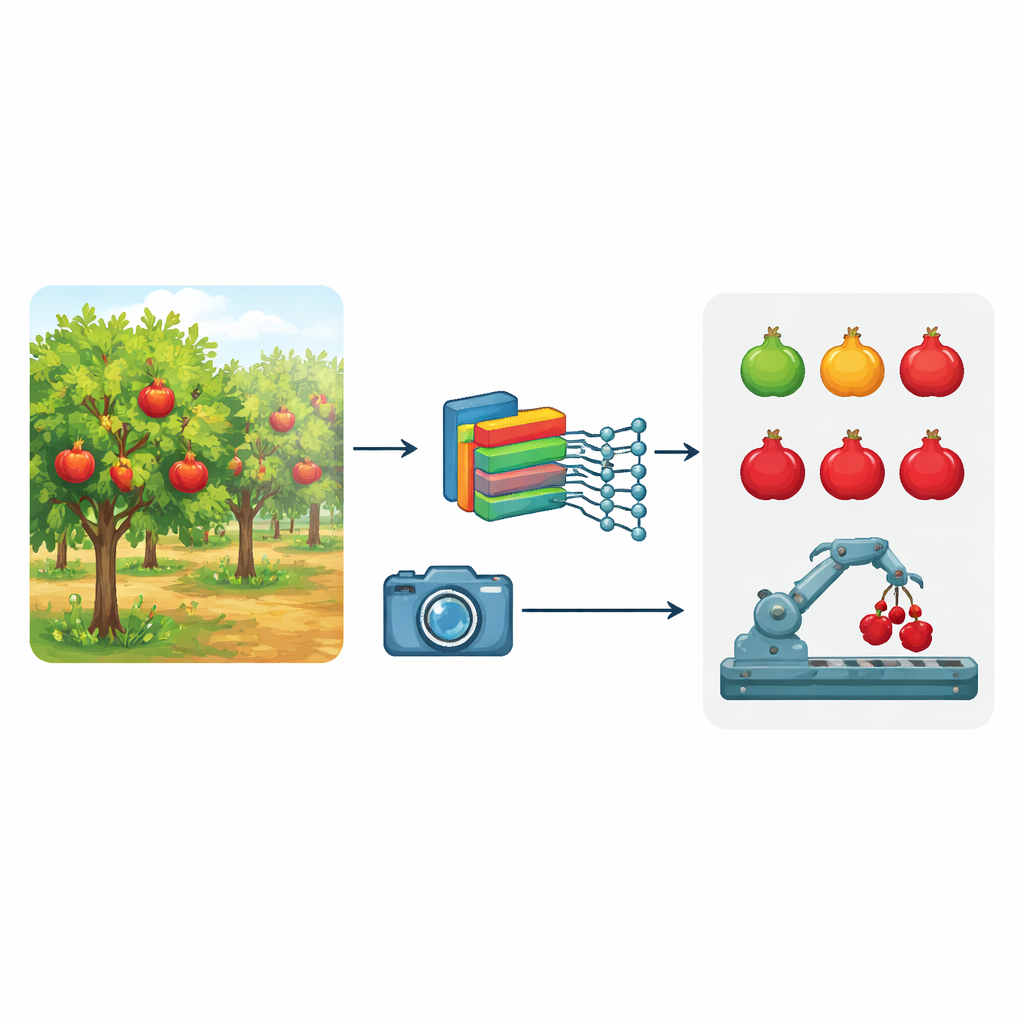

Knowing exactly when fruit is ready to pick is one of farming’s most important – and most difficult – decisions. This study tackles that problem for pomegranates, a crop of rising economic and nutritional importance. Instead of relying on human eyes or slow laboratory tests, the authors present a compact artificial intelligence system that can look at ordinary photos taken in real orchards and determine how far along each pomegranate is in its growth, from tiny buds to fully ripe fruit. Their goal is to make automated harvesting, yield prediction, and orchard management faster, more accurate, and practical even on low-power devices.

Why Pomegranate Growth Is Hard to See

Out in real orchards, spotting pomegranates is not as simple as it sounds. Early in the season, small green fruits almost disappear against dense green leaves, confusing many existing computer-vision methods that mostly look at color. Later, ripening fruit may be partly hidden by foliage or thrown into deep shadow by uneven sunlight, causing algorithms to place detection boxes in the wrong spot or miss fruits entirely. Most previous systems also focus on fruit after harvest or on a single point in the growth cycle, which limits their usefulness for planning irrigation, fertilization, and pest control across an entire season. On top of that, very accurate models are often too large and power-hungry to run on the small computers used in field robots and edge devices.

Teaching a Camera to See Beyond Color

To overcome these obstacles, the researchers built a new detection system they call GLMF-DEIM. First, they assembled a specialized dataset of 5,855 high-quality images from orchards in Shandong, China, captured from April to October under many lighting and weather conditions. Experts labeled 11,482 individual pomegranate buds, flowers, and fruits, dividing them into five growth stages and three size ranges. This rich collection lets the model learn what pomegranates look like at every step of development, from tiny, tightly closed buds to large, brightly colored ripe fruit, and how they appear under different times of day and degrees of leaf cover.

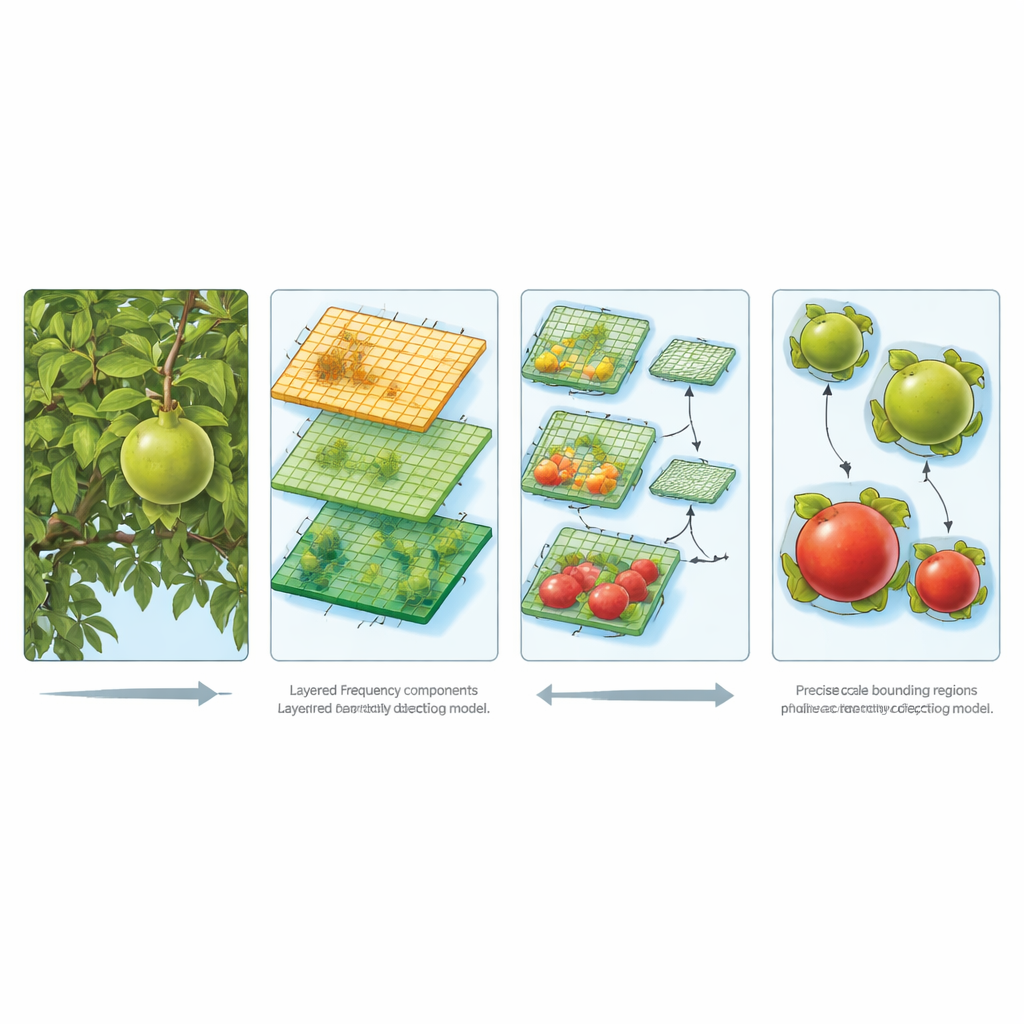

Looking at Texture and Detail, Not Just Color

The heart of GLMF-DEIM is a set of lightweight modules that help the computer distinguish fruit from foliage and pick up small, subtle features without wasting computation. A front-end module first gently smooths away tiny background noise, then applies a mathematical operation similar to splitting sound into low and high notes: it decomposes the image into smooth regions and sharp edges. Because pomegranate skins are relatively smooth while leaves form a busy, textured backdrop, this frequency-based view makes it easier to tell them apart even when they share the same shade of green. Other modules adapt how the image is reduced in size so that important surface details linked to maturity are preserved, and they learn to pay special attention to information spread across different spatial scales, from small buds to big ripe fruits.
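For readers who want to see the smooth-then-split idea in concrete terms, here is a minimal sketch. It assumes the front-end step corresponds to the Gaussian-Haar transform named in the paper's title: a Gaussian blur followed by a one-level 2D Haar wavelet decomposition. The function names, the `sigma` and `radius` values, and the use of a single grayscale channel are illustrative choices, not details taken from the paper.

```python
import numpy as np

def gaussian_kernel_1d(sigma=1.0, radius=2):
    """Normalized 1D Gaussian weights (sigma and radius are assumed values)."""
    x = np.arange(-radius, radius + 1, dtype=float)
    k = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return k / k.sum()

def gaussian_blur(img, sigma=1.0, radius=2):
    """Separable Gaussian blur: filter along rows, then along columns."""
    k = gaussian_kernel_1d(sigma, radius)
    padded = np.pad(img, radius, mode="reflect")
    rows = np.apply_along_axis(lambda r: np.convolve(r, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, k, mode="valid"), 0, rows)

def haar_dwt2(img):
    """One-level 2D Haar transform of a grayscale image.

    Returns four half-resolution bands: the smooth (low-frequency) part and
    three detail bands that respond to horizontal, vertical and diagonal edges.
    """
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2  # crop to even size
    img = img[:h, :w]
    a, b = img[0::2, 0::2], img[0::2, 1::2]  # top-left, top-right of each 2x2 block
    c, d = img[1::2, 0::2], img[1::2, 1::2]  # bottom-left, bottom-right
    low = (a + b + c + d) / 2.0
    horizontal_edges = (a + b - c - d) / 2.0  # top rows minus bottom rows
    vertical_edges = (a - b + c - d) / 2.0    # left columns minus right columns
    diagonal = (a - b - c + d) / 2.0
    return low, horizontal_edges, vertical_edges, diagonal
```

Calling `haar_dwt2(gaussian_blur(gray_image))` on a 2D array gives one smooth band and three edge bands. In a scene like the one described, smooth fruit skin would tend to contribute mostly to the first band, while textured foliage would show up more strongly in the other three.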

Seeing Every Fruit, Large or Small

Beyond recognizing individual textures, the system must handle fruits of many sizes scattered throughout the scene. To do this, the authors design a feature-fusion network that builds a kind of pyramid of image representations. At higher levels, the model captures broad shapes; at lower levels, it retains fine-grained edges and patterns. Information flows both up and down this pyramid so that each detection layer understands both context and local detail. The detection head then uses a modern “transformer” architecture – a way of modeling relationships between many points in an image at once – combined with a refined training strategy that feeds it densely varied examples and a loss function that punishes both overconfident mistakes and underconfident hits. Together, these choices help the system converge quickly and remain robust in difficult scenes with overlapping fruits and cluttered backgrounds.
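The up-and-down flow of information through the pyramid can be illustrated with a toy example. This is only a sketch under simplifying assumptions: real networks, including the one described here, use learned convolution layers to merge levels, whereas this version uses fixed averaging, and the function names are hypothetical.

```python
import numpy as np

def upsample2x(x):
    """Nearest-neighbour upsampling: each value becomes a 2x2 block."""
    return x.repeat(2, axis=0).repeat(2, axis=1)

def downsample2x(x):
    """Average each 2x2 block into one value (halves the resolution)."""
    h, w = x.shape[0] // 2 * 2, x.shape[1] // 2 * 2
    x = x[:h, :w]
    return (x[0::2, 0::2] + x[0::2, 1::2] + x[1::2, 0::2] + x[1::2, 1::2]) / 4.0

def fuse_pyramid(levels):
    """Bidirectional fusion over feature maps ordered fine -> coarse.

    Each level is assumed to be exactly half the resolution of the previous
    one. Fixed 50/50 averaging stands in for learned fusion layers.
    """
    n = len(levels)
    # Top-down pass: coarse context flows into the finer levels.
    top_down = [None] * n
    top_down[-1] = levels[-1]
    for i in range(n - 2, -1, -1):
        top_down[i] = 0.5 * (levels[i] + upsample2x(top_down[i + 1]))
    # Bottom-up pass: fine detail flows back into the coarser levels.
    fused = [top_down[0]]
    for i in range(1, n):
        fused.append(0.5 * (top_down[i] + downsample2x(fused[i - 1])))
    return fused
```

After both passes, every output level carries a contribution from every input level. That is the property the paragraph describes: each detection layer sees both broad context and local detail.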

Better Accuracy with Less Computing Power

In side-by-side tests against leading object-detection systems, the new approach comes out on top. It correctly identifies ripe pomegranates with about 93 percent precision at a standard evaluation setting and maintains strong performance even under stricter scoring rules. It shows especially notable gains for small, hard-to-spot targets, while still excelling at large fruits. At the same time, it uses far fewer calculations and parameters than heavy-duty models, making it suitable for deployment on field robots, drones, or low-cost monitoring stations. In everyday terms, this means a camera-equipped device could roam a pomegranate orchard, reliably track how each tree’s fruit is progressing, and help farmers decide when and where to harvest or intervene – all without needing a supercomputer in the barn.
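To make "evaluation setting" and "stricter scoring rules" concrete: detection benchmarks usually count a predicted box as correct only if it overlaps a labeled box by at least some threshold, measured as intersection over union (IoU). The sketch below assumes a threshold of 0.5 as the default, a common convention, since the summary does not state which setting was used. It also computes a simple single-image precision rather than the averaged metric a paper would report. The function names are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def precision_at_iou(preds, truths, thr=0.5):
    """Fraction of predicted boxes that match a distinct labeled box.

    preds is assumed to be sorted by confidence, highest first. Each labeled
    box can be matched at most once. thr=0.5 is an assumed default.
    """
    matched = set()
    true_positives = 0
    for p in preds:
        best_j, best_iou = -1, 0.0
        for j, t in enumerate(truths):
            if j in matched:
                continue
            v = iou(p, t)
            if v > best_iou:
                best_iou, best_j = v, j
        if best_j >= 0 and best_iou >= thr:
            matched.add(best_j)
            true_positives += 1
    return true_positives / len(preds) if preds else 0.0
```

Raising `thr` is what makes scoring stricter: a box that is slightly misplaced on a half-hidden fruit may pass at a low threshold but fail at a high one.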

Citation: Wang, Y., Liu, S., Hao, P. et al. Gaussian-Haar transform fusion enhances DEIM for pomegranate maturity detection. Sci Rep 16, 8246 (2026). https://doi.org/10.1038/s41598-026-39620-2

Keywords: pomegranate detection, fruit maturity, smart agriculture, computer vision, deep learning models