Clear Sky Science

AsynDBT: asynchronous distributed bilevel tuning for efficient in-context learning with large language models

Why smarter prompts matter for everyday AI

Large language models now power chatbots, search engines, and writing assistants that many people use daily. Yet getting useful answers still depends heavily on how we phrase our questions and which examples we show the model. This paper introduces a new way to automatically refine those prompts and examples across many devices, while keeping each user’s data private. The result is an AI system that learns to respond more accurately and efficiently, especially on specialized tasks like telecom network maintenance.

Teaching AI by showing, not retraining

Instead of constantly retraining gigantic AI models, a growing trend is to teach them “in the moment” by supplying a few carefully chosen examples in the prompt—a process known as in-context learning. For instance, to classify movie reviews as positive or negative, you might show the model a small set of labeled examples and then ask it to label a new review. The catch is that the choice of examples and the exact wording of the instructions can dramatically change the model’s performance. Finding good combinations by hand is slow and expensive, and sharing raw data among organizations is often impossible due to privacy rules.
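The movie-review example above can be sketched in a few lines of Python. This is only an illustration of how a few-shot prompt might be assembled; the function name `build_few_shot_prompt`, the `Review:`/`Sentiment:` layout, and the sample reviews are assumptions made here, not details from the paper.

```python
from typing import List, Tuple


def build_few_shot_prompt(instruction: str,
                          demonstrations: List[Tuple[str, str]],
                          query: str) -> str:
    """Assemble an in-context learning prompt from labeled examples."""
    lines = [instruction, ""]
    for text, label in demonstrations:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The final entry leaves the label blank for the model to complete.
    lines.append(f"Review: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)


# Hypothetical demonstrations; in practice these are the examples being tuned.
demos = [
    ("A moving story with superb acting.", "positive"),
    ("Two hours I will never get back.", "negative"),
]
prompt = build_few_shot_prompt(
    "Classify each movie review as positive or negative.",
    demos,
    "The plot dragged, but the ending was worth it.",
)
print(prompt)
```

Swapping the entries in `demos` or rewording the instruction string changes what the model sees, which is exactly the search space that automatic prompt tuning explores.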

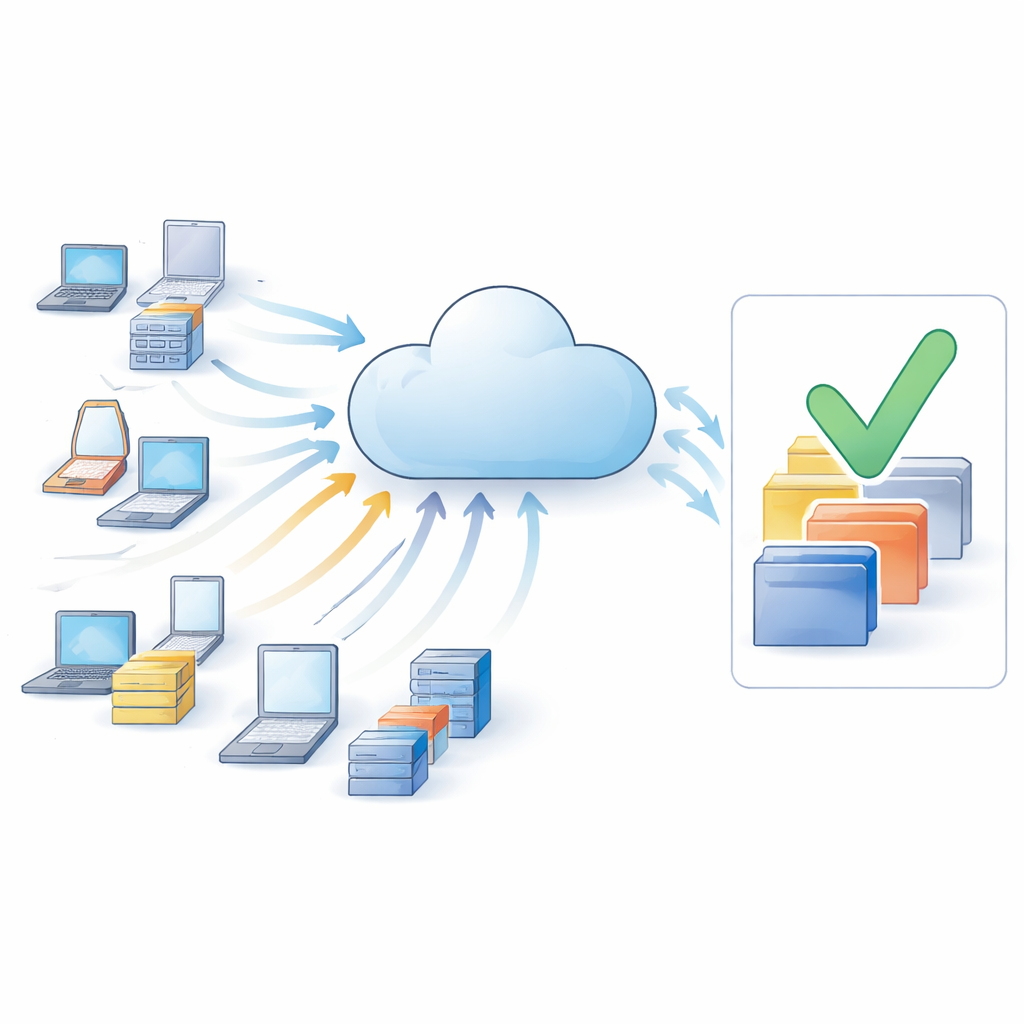

Cooperating without sharing private data

To get around data-sharing barriers, the authors build on federated learning, a setup where many separate devices or organizations keep their data locally but collaborate through a central server. Each worker—say, a telecom base station or a company server—talks to the same cloud-based language model, but never uploads its raw text. Instead, it sends back only feedback signals about how well different prompts and example choices seem to work. A new algorithm called AsynDBT (asynchronous distributed bilevel tuning) coordinates these workers so that they jointly improve a shared prompting strategy while respecting privacy and coping with slow or unreliable network connections.
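A rough sketch of this feedback-only collaboration is shown below. It is not the AsynDBT algorithm itself: the helper names, the toy stand-in for the language model, and the plain averaging on the server are all assumptions chosen to keep the example small. The point is only that each worker reports scores, never its local text.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-in for a call to the shared language model:
# takes a prompt and an input text, returns a predicted label.
ModelFn = Callable[[str, str], str]


def local_feedback(candidates: Dict[str, str],
                   private_data: List[Tuple[str, str]],
                   model: ModelFn) -> Dict[str, float]:
    """Score each candidate prompt on local data and return accuracies only."""
    scores = {}
    for name, prompt in candidates.items():
        correct = sum(model(prompt, text) == label for text, label in private_data)
        scores[name] = correct / len(private_data)
    return scores  # only these numbers are sent to the coordinating server


def aggregate(reports: List[Dict[str, float]]) -> str:
    """Server side: average the reported scores and pick the best candidate."""
    names = reports[0].keys()
    mean = {n: sum(r[n] for r in reports) / len(reports) for n in names}
    return max(mean, key=mean.get)


def toy_model(prompt: str, text: str) -> str:
    # Toy rule (an assumption): the "carefully" prompt makes the fake model
    # look at the text; any other prompt always answers "positive".
    if "carefully" in prompt:
        return "negative" if "bad" in text else "positive"
    return "positive"


candidates = {
    "plain": "Classify the review.",
    "careful": "Read carefully, then classify the review.",
}
worker_a = [("good film", "positive"), ("bad film", "negative")]
worker_b = [("bad plot", "negative"), ("bad acting", "negative")]

reports = [local_feedback(candidates, data, toy_model)
           for data in (worker_a, worker_b)]
best = aggregate(reports)
print(reports, best)
```

Here the server sees two small dictionaries of accuracies and selects the `careful` candidate without ever receiving the reviews held by either worker.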

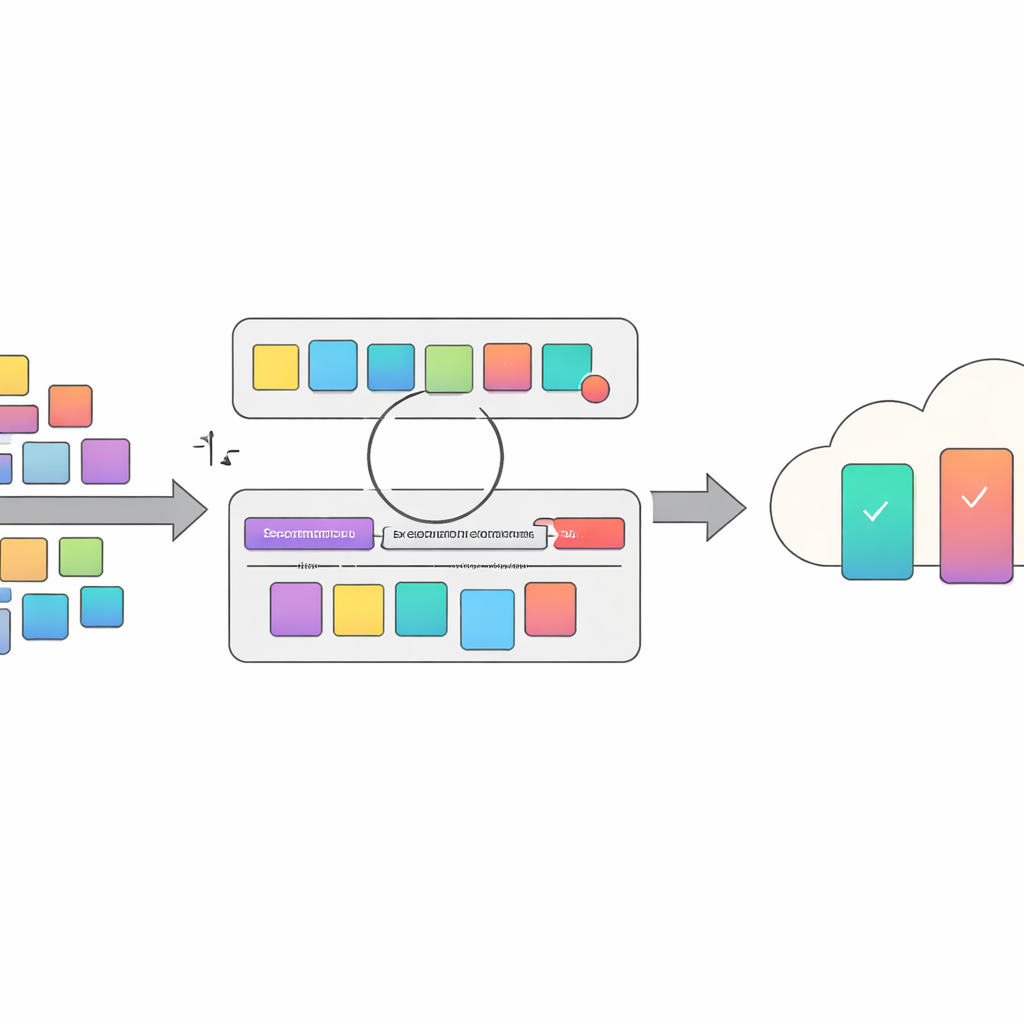

Optimizing both the question and the examples

A key idea of the paper is to treat prompt design as a two-layer optimization problem. At the lower layer, the system tweaks short fragments that get appended to the task instruction—small changes in wording that can nudge the model toward better reasoning. At the upper layer, it decides which labeled examples to include as demonstrations. These two layers interact: different example sets call for different prompt tweaks, and vice versa. AsynDBT formalizes this relationship mathematically and uses an efficient approximation method so that each worker can gradually update its local choices while a central server keeps a consistent global view of the lower-layer decisions.
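One generic way to write such a two-layer problem is given below. The notation is assumed here for illustration and is not taken from the paper: $x_i$ stands for worker $i$'s choice of demonstrations (upper layer), $y$ for the shared prompt fragment (lower layer), and $F_i$ and $f_i$ for that worker's upper-layer and lower-layer losses, with $N$ workers in total.

$$
\min_{x_1,\dots,x_N} \; \sum_{i=1}^{N} F_i\bigl(x_i,\, y^{*}(x_1,\dots,x_N)\bigr)
\quad \text{subject to} \quad
y^{*}(x_1,\dots,x_N) \in \arg\min_{y} \; \sum_{i=1}^{N} f_i(x_i,\, y)
$$

Read this way, the coupling is explicit: the best fragment $y^{*}$ depends on which examples every worker has selected, and the quality of each example set is judged with that fragment in place.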

Handling slow devices and malicious participants

In real networks, some devices respond late or drop out, creating “stragglers” that can stall standard synchronized training. AsynDBT instead works asynchronously: the server updates its variables whenever a subset of workers report in, without waiting for everyone. The method also guards against workers that might send misleading updates, intentionally or by mistake. By blending regularization techniques with robust aggregation rules, the algorithm reduces the impact of poisoned or low-quality example selections on the global strategy, helping the overall system remain stable and reliable even under attack.
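The two ideas in this section, updating without waiting for every worker and limiting the influence of bad reports, can be sketched together. The article does not say which robust aggregation rule the authors use, so the coordinate-wise median below is a swapped-in, commonly used choice; the class name `AsyncServer`, the `min_reports` threshold, and the step size are likewise assumptions, and the regularization component is not shown.

```python
from statistics import median
from typing import Dict, List


def coordinate_median(updates: List[List[float]]) -> List[float]:
    """Coordinate-wise median: a few extreme updates cannot drag the result far."""
    return [median(column) for column in zip(*updates)]


class AsyncServer:
    """Toy server that updates as soon as `min_reports` workers have reported."""

    def __init__(self, init: List[float], min_reports: int, step: float = 0.5):
        self.state = list(init)
        self.min_reports = min_reports
        self.step = step
        self.buffer: Dict[str, List[float]] = {}

    def receive(self, worker_id: str, update: List[float]) -> bool:
        """Store an update; apply an aggregate once enough workers reported."""
        self.buffer[worker_id] = update  # a newer report replaces an older one
        if len(self.buffer) < self.min_reports:
            return False
        target = coordinate_median(list(self.buffer.values()))
        # Move the shared state part of the way toward the robust aggregate.
        self.state = [s + self.step * (t - s) for s, t in zip(self.state, target)]
        self.buffer.clear()
        return True


# Four workers exist, but the server only needs three reports to proceed.
server = AsyncServer(init=[0.0, 0.0], min_reports=3)
server.receive("w1", [1.0, 1.0])
server.receive("w2", [1.2, 0.8])
updated = server.receive("w3", [100.0, -100.0])  # a poisoned report
# Worker "w4" is a straggler and never reports; the update happens anyway.
print(updated, server.state)
```

In this run the poisoned report from `w3` is ignored by the median in both coordinates, and the straggler `w4` does not block progress. A plain average of the same three reports would have been pulled far off by the outlier.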

Proven gains on language and telecom tasks

The researchers tested AsynDBT on six text classification problems, including a demanding 5G network dataset where the model had to judge whether specialized technical terms were related, using only fragments of telecom standards for context. Compared with a range of existing prompting and example-selection methods, the new approach achieved the best or second-best accuracy on nearly all tasks. On the 5G task in particular, it improved accuracy by about ten percentage points over the strongest baseline. At the same time, the asynchronous design cut training time by roughly 40 percent relative to a similar centralized method that does not distribute the work.

What this means for future AI tools

For non-experts, the takeaway is that better prompts and smarter example choices can noticeably improve how AI systems behave—without changing the underlying model. AsynDBT offers an automated, privacy-preserving way to do this across many collaborating devices, yielding more accurate and efficient language tools for domains like telecom operations, customer support, and other specialized fields. Looking ahead, the authors plan to combine their framework with graph-based knowledge retrieval so that prompts can also draw on up-to-date factual information, further reducing hallucinations and making AI assistants more trustworthy in high-stakes settings.

Citation: Ma, H., Dou, S., Liu, Y. et al. AsynDBT: asynchronous distributed bilevel tuning for efficient in-context learning with large language models. Sci Rep 16, 9381 (2026). https://doi.org/10.1038/s41598-026-39582-5

Keywords: in-context learning, prompt optimization, federated learning, large language models, privacy-preserving AI