Clear Sky Science

Automated cone photoreceptor detection using synthetic data and deep learning in confocal adaptive optics scanning laser ophthalmoscope images

Sharper Views of the Living Eye

Seeing the eye’s light-sensing cells one by one could transform how doctors detect and track blinding diseases. But today, experts must painstakingly mark these cells by hand in highly magnified images of the retina, a process that is slow, subjective, and hard to scale to thousands of patients. This study shows how computer models trained on realistic “fake” eye images can learn to find these cells automatically, opening the door to faster, more reliable eye checkups and better evaluation of new treatments.

Why Tiny Cells Matter

The back of the eye is lined with photoreceptors—specialized cells that turn light into the signals our brain interprets as vision. Cone photoreceptors, in particular, are essential for sharp central vision and color perception, and their loss is a hallmark of many retinal diseases. A powerful imaging technology called adaptive optics scanning laser ophthalmoscopy (AOSLO) can capture detailed pictures of these cells in living people. However, before doctors and researchers can measure cone density or track changes over time, they must first pinpoint each individual cone in the image. Manual marking not only takes a great deal of time but can also vary from person to person, limiting its usefulness in routine clinics and large trials.

From Handcrafted Rules to Learning from Data

Earlier computer programs tried to automate cone detection by following fixed rules: for example, looking for bright spots of a certain size or spacing. These rule-based methods could work well on clean images from healthy eyes, but they often struggled when images were noisy, slightly blurred, or came from patients with disease. Deep learning offers a different strategy. Instead of hand-designing rules, a neural network learns patterns directly from examples. The snag is that these models usually need huge numbers of images that have already been carefully labeled by experts—exactly the kind of data that is rare and expensive in AOSLO imaging.
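To make the rule-based idea concrete, here is a minimal sketch of a "bright spot" detector. It is an illustration only, not any of the published algorithms: the function name `detect_bright_spots` and the `threshold` parameter are hypothetical, and the rule (a pixel counts as a cone if it is brighter than a fixed threshold and brighter than all eight neighbors) is a simplified assumption.

```python
import numpy as np

def detect_bright_spots(image, threshold=0.5):
    """Return (row, col) positions of pixels that exceed `threshold`
    and are strictly brighter than all eight of their neighbors."""
    img = np.asarray(image, dtype=float)
    h, w = img.shape
    # Pad with -inf so border pixels can still be compared to "neighbors".
    padded = np.pad(img, 1, mode="constant", constant_values=-np.inf)
    is_peak = img > threshold
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if dr == 0 and dc == 0:
                continue
            neighbor = padded[1 + dr : 1 + dr + h, 1 + dc : 1 + dc + w]
            is_peak &= img > neighbor
    return [(int(r), int(c)) for r, c in np.argwhere(is_peak)]
```

A fixed rule like this has no way to adapt when noise creates false peaks or blur merges neighboring spots, which is the weakness described above.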

Building a Virtual Training Ground

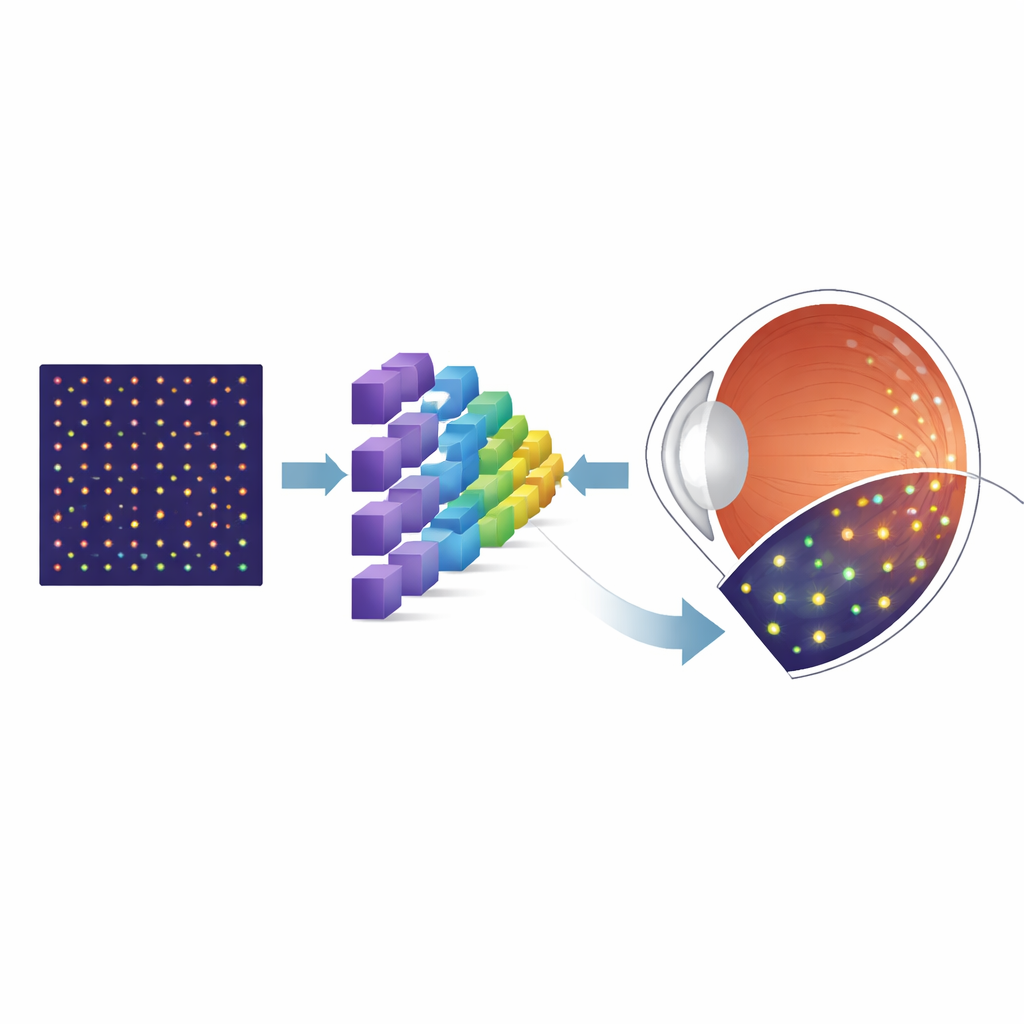

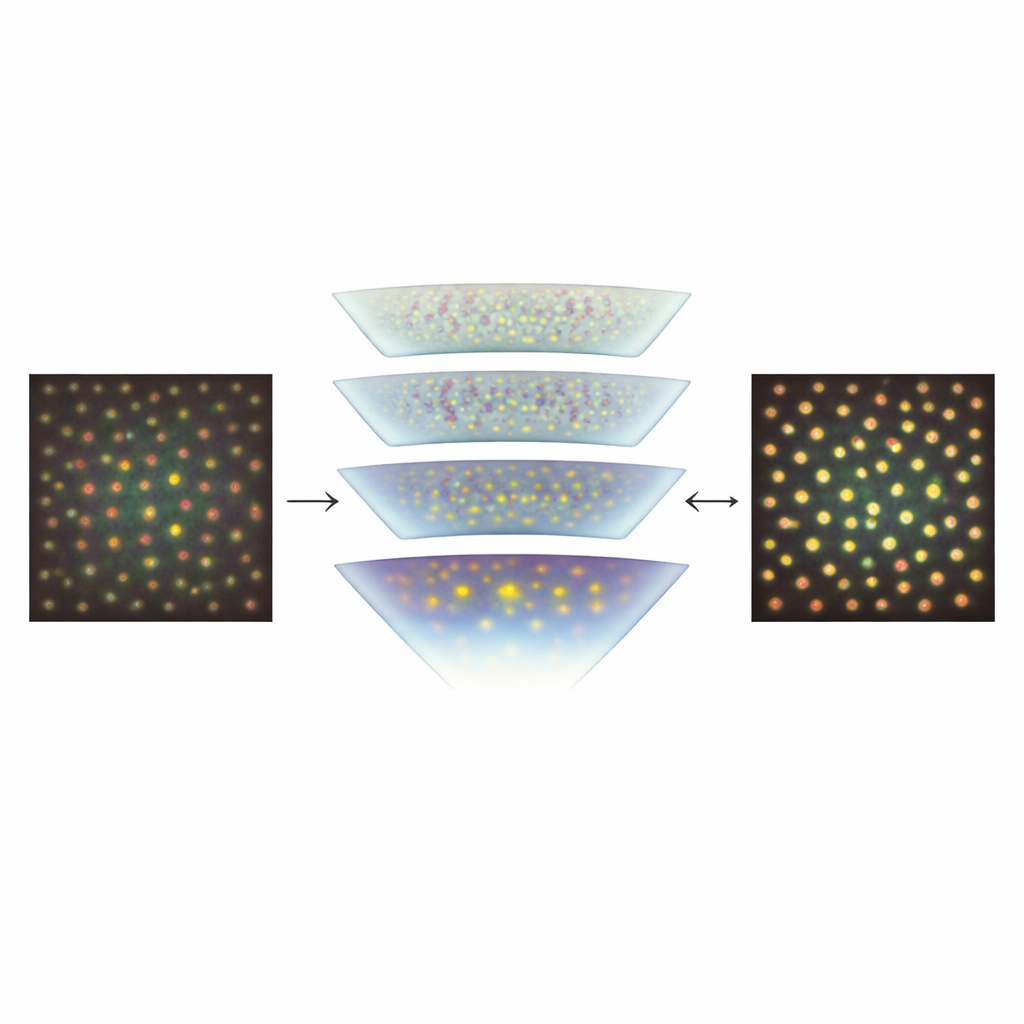

To sidestep the shortage of labeled real images, the researchers turned to a simulation tool called ERICA, which can generate realistic AOSLO-like pictures of cone mosaics along with perfect “ground truth” knowledge of where every cone lies. They created large sets of these synthetic images spanning many positions in the retina, while systematically varying key imperfections that affect real pictures, such as random noise and subtle optical blurring. They then trained a specialized neural network architecture, known as a U-Net, to transform each input image into a probability map showing where cones are most likely to be. After this initial training on synthetic data, the team fine-tuned the model using a much smaller collection of real AOSLO images from a well-known public dataset, and finally tested it on independent images from another lab to see how well it generalized.
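The appeal of synthetic data is that the image and its exact cone positions are produced together. The toy generator below illustrates that idea under strong simplifying assumptions. It is not ERICA and does not model real retinal optics: the function `make_toy_mosaic`, its parameters, and the jittered square grid of Gaussian spots are all hypothetical stand-ins.

```python
import numpy as np

def make_toy_mosaic(size=64, spacing=8.0, jitter=1.0,
                    spot_sigma=1.5, noise_sigma=0.05, seed=0):
    """Return (image, centers): a noisy image of bright spots plus the
    exact (row, col) position of every spot, i.e. its ground truth."""
    rng = np.random.default_rng(seed)
    # Place spot centers on a regular grid, then nudge each one slightly.
    ticks = np.arange(spacing / 2, size, spacing)
    rows, cols = np.meshgrid(ticks, ticks, indexing="ij")
    centers = np.stack([rows.ravel(), cols.ravel()], axis=1)
    centers = centers + rng.uniform(-jitter, jitter, size=centers.shape)
    # Draw each spot as a Gaussian blob; a larger spot_sigma mimics more blur.
    yy, xx = np.mgrid[0:size, 0:size]
    image = np.zeros((size, size))
    for r, c in centers:
        image += np.exp(-((yy - r) ** 2 + (xx - c) ** 2) / (2 * spot_sigma ** 2))
    image = np.clip(image, 0.0, 1.0)
    # Add random pixel noise, then keep intensities in [0, 1].
    image = np.clip(image + rng.normal(0.0, noise_sigma, image.shape), 0.0, 1.0)
    return image, centers
```

Calling the function with different `spot_sigma` and `noise_sigma` values yields images of varying quality, each with perfectly known labels, which is the property that makes simulated images useful for training.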

How Well the Computer Matches Human Experts

The team compared their automated method with painstaking manual labeling and with two leading cone-detection algorithms. Using a standard measure of overlap between predicted and manual cone markings, the new U-Net matched or nearly matched the performance of both the expert graders and the competing automated methods on the public dataset. Crucially, when tested on a separate set of images taken at different distances from the center of vision and collected with a different instrument, the model still performed very well. This suggests that training heavily on synthetic data covering a broad range of imaging conditions, such as different levels of noise and blur, helped the network learn features that transfer to real-world images, rather than overfitting to one specific camera or patient group.
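The summary does not name the overlap measure. One common choice for comparing two sets of point markings is the Dice coefficient, and the sketch below assumes that choice. The function `dice_score`, the greedy closest-pair matching, and the `max_dist` tolerance (in pixels) are illustrative assumptions, not details taken from the study.

```python
import numpy as np

def dice_score(predicted, manual, max_dist=2.0):
    """Dice coefficient between two lists of (row, col) cone markings.
    A predicted cone matches a manual one if they lie within `max_dist`
    pixels; each marking can be matched at most once."""
    predicted = np.asarray(predicted, dtype=float).reshape(-1, 2)
    manual = np.asarray(manual, dtype=float).reshape(-1, 2)
    if len(predicted) == 0 and len(manual) == 0:
        return 1.0
    if len(predicted) == 0 or len(manual) == 0:
        return 0.0
    # Pairwise distances: dists[i, j] is predicted i to manual j.
    dists = np.linalg.norm(predicted[:, None, :] - manual[None, :, :], axis=2)
    used_p, used_m = set(), set()
    matches = 0
    # Greedy matching: visit candidate pairs from closest to farthest.
    for flat in np.argsort(dists, axis=None):
        i, j = divmod(int(flat), dists.shape[1])
        if dists[i, j] > max_dist:
            break
        if i in used_p or j in used_m:
            continue
        used_p.add(i)
        used_m.add(j)
        matches += 1
    return 2.0 * matches / (len(predicted) + len(manual))
```

A score of 1 means every predicted cone pairs with a manual marking and vice versa; missed cones and false detections both pull the score toward 0.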

What This Could Mean for Future Eye Care

For non-specialists, the core message is that a computer program trained largely on “virtual” eye images can now find real cone cells in high-resolution retinal scans about as reliably as human experts. By making cone detection faster, more objective, and easier to apply across different scanners and clinics, this approach could help turn detailed retinal imaging into a routine tool for tracking diseases at the level of individual cells. In the longer term, similar synthetic-data-driven methods could be extended to detect other cell types and to model disease-related cell loss, supporting earlier diagnosis, better monitoring of progression, and more precise evaluation of new treatments aimed at preserving sight.

Citation: Shah, M., Young, L.K., Downes, S.M. et al. Automated cone photoreceptor detection using synthetic data and deep learning in confocal adaptive optics scanning laser ophthalmoscope images. Sci Rep 16, 8313 (2026). https://doi.org/10.1038/s41598-026-39570-9

Keywords: retinal imaging, cone photoreceptors, deep learning, synthetic data, adaptive optics