Clear Sky Science

Modified ShuffleNet trained on gradient pattern and shape-based features for lung cancer classification with improved M-SegNet segmentation

Why early lung checks matter

Lung cancer is one of the deadliest cancers worldwide, largely because it is often found too late. Doctors already use CT scans to look for suspicious spots in the lungs, but carefully examining hundreds of images per patient is slow and tiring work. This paper describes a computer system that learns to read these scans automatically, aiming to help doctors spot cancer earlier, more consistently, and in hospitals that may not have teams of specialists on hand.

A smart helper for reading lung scans

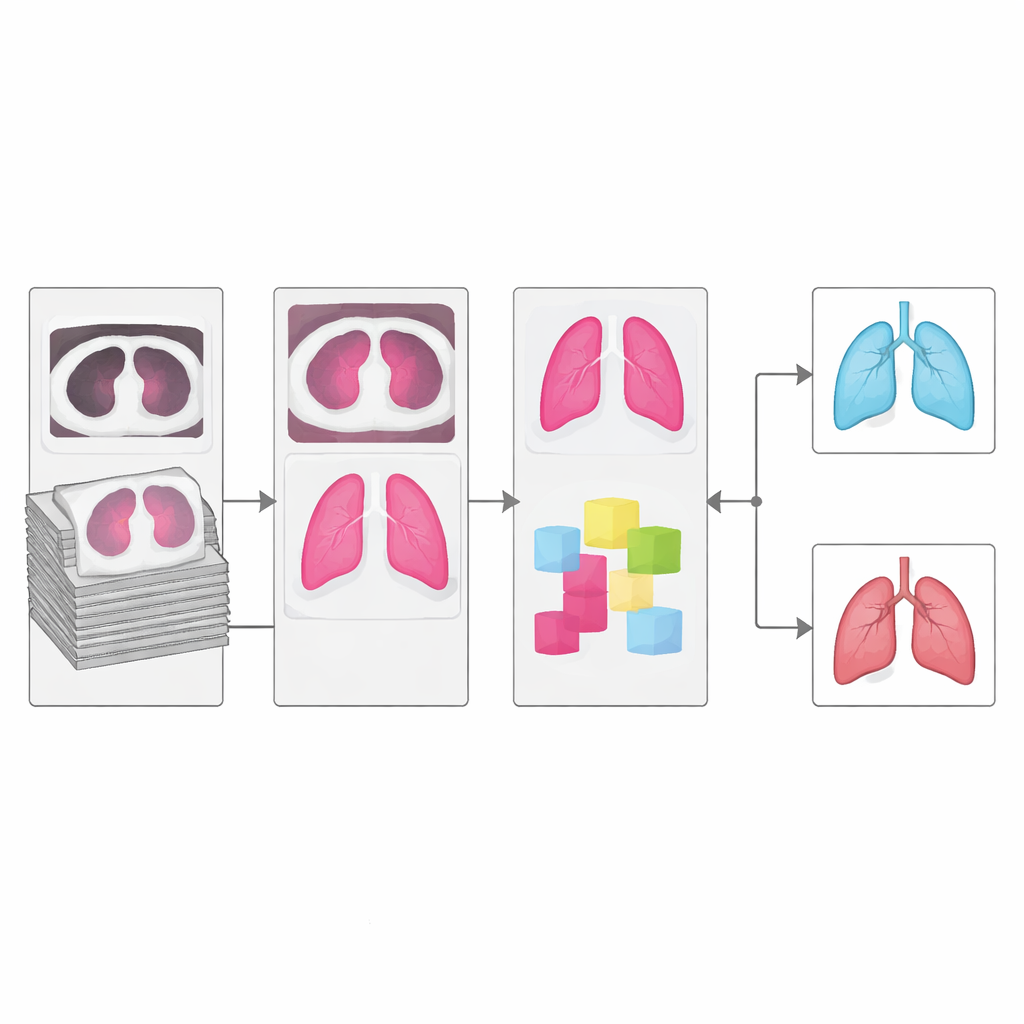

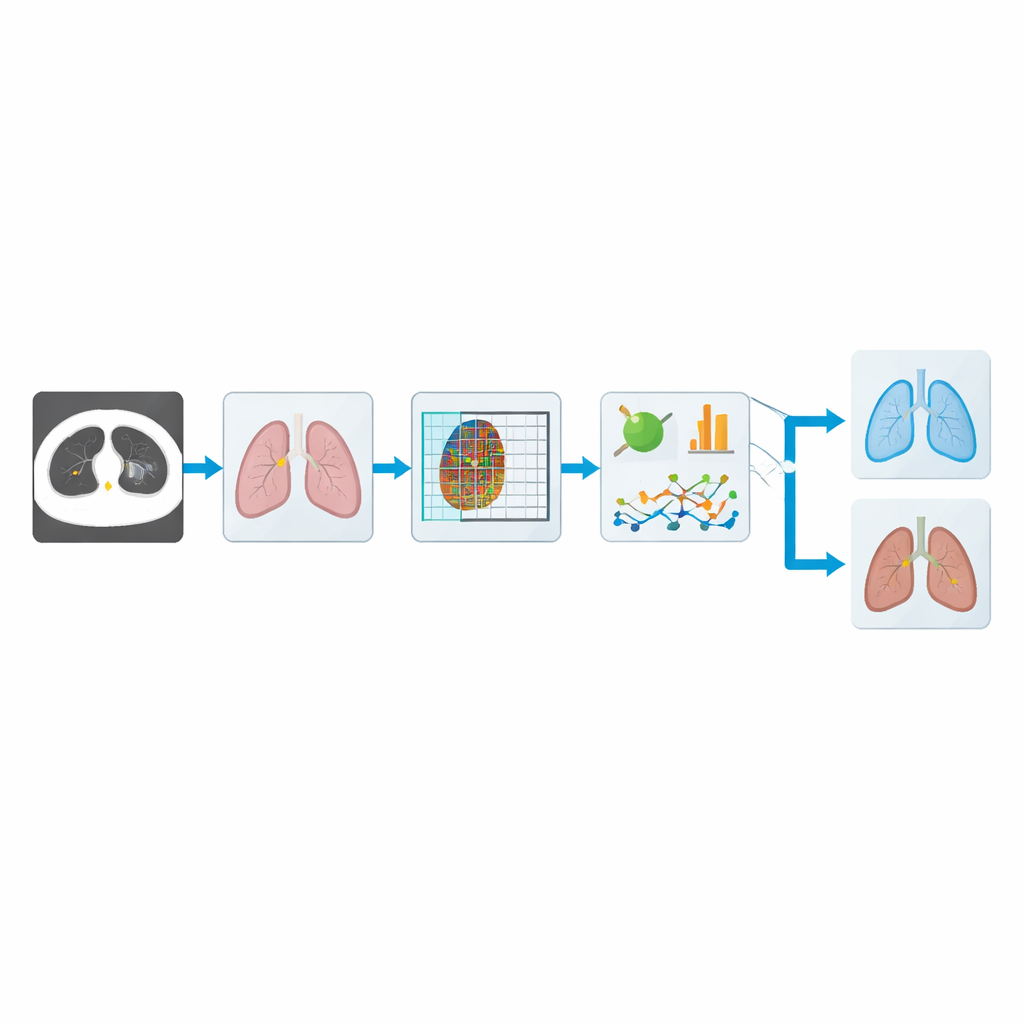

The authors build an automated pipeline that takes raw CT images of the chest and steadily refines them into a simple answer: likely cancer or not. First, the system improves the contrast of each image so that details in the lung tissue stand out more clearly. Then it carefully separates the lungs from the rest of the chest, focusing the analysis on the regions where tumors actually grow. From these cleaned lung images it extracts telltale patterns in texture and shape, and finally feeds this information into a compact deep‑learning model that makes the final judgment. The overall goal is not to replace physicians, but to give them a fast and reliable second opinion.
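To make the first step concrete, here is a minimal sketch of contrast enhancement. The article does not say which method the authors use, so global histogram equalization stands in as an assumption; the function name `equalize_histogram` and the tiny 3x3 image are purely illustrative.

```python
def equalize_histogram(image, levels=256):
    """Global histogram equalization for a 2-D list of integer grey levels."""
    pixels = [p for row in image for p in row]

    # Count how often each grey level occurs.
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1

    # Cumulative distribution: number of pixels at or below each level.
    cdf = []
    running = 0
    for count in hist:
        running += count
        cdf.append(running)

    cdf_min = next(c for c in cdf if c > 0)
    total = len(pixels)
    if total == cdf_min:
        # Flat image: there is no contrast to stretch.
        return [row[:] for row in image]

    # Look-up table that spreads the occupied range over 0..levels-1.
    lut = [
        round((c - cdf_min) / (total - cdf_min) * (levels - 1)) if c > 0 else 0
        for c in cdf
    ]
    return [[lut[p] for p in row] for row in image]


# Hypothetical low-contrast patch: all values sit between 100 and 104.
low_contrast = [
    [100, 101, 102],
    [101, 102, 103],
    [102, 103, 104],
]
enhanced = equalize_histogram(low_contrast)
print(enhanced)  # [[0, 64, 159], [64, 159, 223], [159, 223, 255]]
```

After equalization the same patch spans the full 0 to 255 range, which is the sense in which details "stand out more clearly".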

Teaching the system to see lung structure

One of the biggest hurdles in computer analysis of CT scans is segmentation: tracing out the true lung regions, and especially the boundaries of the lobes where small nodules may hide. The authors introduce an upgraded segmentation network called mRRB‑SegNet, which combines ideas from modern image recognition, including shortcut connections and recurrent loops that allow the model to look at both local detail and broader context. In tests against popular alternatives, this segmenter produced outlines that overlapped the expert‑defined lung regions far more closely, which is crucial because any error at this stage can ripple through all later steps.
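Overlap between a predicted outline and an expert outline is commonly scored with the Dice coefficient and intersection over union (IoU). The article does not name the exact metrics used in the paper, so treat the choice here as an assumption; the masks below are toy data.

```python
def overlap_scores(predicted, reference):
    """Return (dice, iou) for two binary masks given as 2-D lists of 0/1."""
    intersection = 0
    pred_area = 0
    ref_area = 0
    for pred_row, ref_row in zip(predicted, reference):
        for p, r in zip(pred_row, ref_row):
            if p and r:
                intersection += 1
            if p:
                pred_area += 1
            if r:
                ref_area += 1

    union = pred_area + ref_area - intersection
    if union == 0:
        # Both masks are empty: treat this as perfect agreement.
        return 1.0, 1.0

    dice = 2 * intersection / (pred_area + ref_area)
    iou = intersection / union
    return dice, iou


# Toy masks: 4 reference pixels, 4 predicted pixels, 3 in common.
reference = [
    [0, 1, 1, 0],
    [0, 1, 1, 0],
]
predicted = [
    [0, 1, 1, 0],
    [0, 1, 0, 1],
]
dice, iou = overlap_scores(predicted, reference)
print(dice, iou)  # 0.75 0.6
```

Both scores run from 0 (no overlap) to 1 (identical masks), so a segmenter whose outlines match the expert's more closely scores nearer to 1.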

Reading subtle texture and shape clues

Once the lungs are isolated, the system turns to the problem of recognizing what a cancerous nodule looks like. Instead of relying only on raw pixels, it computes several families of features. A refined “local gradient” measure focuses on tiny changes in brightness across neighboring pixels, which correspond to fine textures in the tissue. Additional shape measures capture how big, compact, or irregular a nodule is, and statistical summaries describe how intensities are distributed inside each region. Together, these cues help distinguish harmless round spots from more jagged, suspicious growths that are more typical of malignant tumors.
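The three feature families can be illustrated with simple stand-ins. This sketch is not the authors' descriptor: it uses a plain central-difference gradient, an edge-counting perimeter, and the standard compactness ratio 4πA/P². All function names and the toy masks are assumptions made for illustration.

```python
import math
from statistics import mean, pstdev


def gradient_magnitude(image, r, c):
    """Central-difference gradient magnitude at an interior pixel."""
    gx = (image[r][c + 1] - image[r][c - 1]) / 2
    gy = (image[r + 1][c] - image[r - 1][c]) / 2
    return math.hypot(gx, gy)


def shape_features(mask):
    """Area, perimeter (exposed pixel edges) and compactness of a binary mask."""
    rows, cols = len(mask), len(mask[0])
    area = 0
    perimeter = 0
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c]:
                continue
            area += 1
            # An edge counts toward the perimeter if the neighbor is background.
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                outside = not (0 <= nr < rows and 0 <= nc < cols)
                if outside or not mask[nr][nc]:
                    perimeter += 1
    compactness = 4 * math.pi * area / perimeter ** 2 if perimeter else 0.0
    return area, perimeter, compactness


def intensity_stats(image, mask):
    """Mean and population standard deviation of intensities inside the mask."""
    values = [
        image[r][c]
        for r in range(len(mask))
        for c in range(len(mask[0]))
        if mask[r][c]
    ]
    return mean(values), pstdev(values)


# Toy data: a horizontal brightness ramp and two region shapes.
ramp = [[10 * c for c in range(5)] for _ in range(5)]
square = [[1 if r in (1, 2) and c in (1, 2) else 0 for c in range(5)] for r in range(5)]
plus = [[1 if (r == 2 and 1 <= c <= 3) or (c == 2 and 1 <= r <= 3) else 0
         for c in range(5)] for r in range(5)]

print(gradient_magnitude(ramp, 2, 2))  # 10.0
print(shape_features(square)[:2])      # (4, 8)
print(shape_features(plus)[:2])        # (5, 12)
```

The compact square scores a higher compactness than the plus-shaped region, which mirrors the idea that irregular outlines are a warning sign.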

A lightweight brain for fast decisions

To turn these features into decisions, the authors adapt a deep‑learning architecture called ShuffleNet, originally designed to run quickly on mobile devices. They add a custom normalization step that stabilizes training on noisy medical data, and an attention module that learns to “look” harder at the most important channels and locations in the image. This upgraded CMN‑ShuffleNet keeps the network small and efficient, yet it learns to focus on the lung patterns that matter most for cancer. Because it uses relatively modest computing power, the system is a better fit for real‑world clinics, including those with limited hardware resources.
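The operation that gives ShuffleNet its name is the channel shuffle, which interleaves channels between groups so that information can mix across grouped convolutions. The sketch below shows only that step on a list of channel labels. The custom normalization and attention module are not described in enough detail here to reproduce, so they are left out.

```python
def channel_shuffle(channels, groups):
    """Interleave channels across groups, as in ShuffleNet's shuffle step."""
    n = len(channels)
    if n % groups != 0:
        raise ValueError("channel count must be divisible by groups")
    per_group = n // groups

    # View the flat list as a (groups, per_group) grid ...
    grid = [channels[g * per_group:(g + 1) * per_group] for g in range(groups)]
    # ... then read it out column by column, i.e. transpose and flatten.
    return [grid[g][i] for i in range(per_group) for g in range(groups)]


# Two groups of three channels each, labeled for readability.
shuffled = channel_shuffle(["a0", "a1", "a2", "b0", "b1", "b2"], groups=2)
print(shuffled)  # ['a0', 'b0', 'a1', 'b1', 'a2', 'b2']
```

In a real network the same reshape, transpose, and flatten would be applied to the channel axis of a feature tensor rather than to a list of labels.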

How well does it work in practice?

The team tested their approach on two widely used public datasets of lung CT scans. On the main set (LUNA16), their model correctly distinguished cancer from non‑cancer cases about 96% of the time, with particularly strong scores for sensitivity (its ability to catch true cancer cases) and for a balanced metric that weighs all types of errors. It also clearly outperformed a range of established deep‑learning models, including versions of VGG, DenseNet, and other recurrent and convolutional networks, while using less computation time than many of them. A separate cross‑validation test on an independent dataset showed similarly high performance, suggesting that the method is not just memorizing one collection of scans.
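The scores mentioned above all derive from four counts: true positives, false positives, true negatives, and false negatives. The sketch below computes accuracy, sensitivity, specificity, and the Matthews correlation coefficient (MCC). Treating MCC as the "balanced metric" is an assumption, and the counts are invented for illustration; they are not the paper's results.

```python
import math


def classification_metrics(tp, fp, tn, fn):
    """Common binary-classification scores from confusion-matrix counts."""
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    # Sensitivity: share of true cancer cases that were caught.
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    # Specificity: share of non-cancer cases correctly cleared.
    specificity = tn / (tn + fp) if tn + fp else 0.0
    # MCC uses all four counts, so it stays informative on imbalanced data.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "mcc": mcc,
    }


# Invented counts for 100 hypothetical scans (50 cancer, 50 non-cancer).
metrics = classification_metrics(tp=45, fp=10, tn=40, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these made-up counts, sensitivity (0.9) is higher than specificity (0.8), which is the trade-off a screening tool usually prefers: it is better to raise a few false alarms than to miss a cancer.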

What this means for patients and clinics

For a non‑specialist reader, the key message is that the authors have built a fast, compact artificial‑intelligence assistant that can pick up subtle signs of lung cancer on CT scans with accuracy comparable to, and in some cases better than, larger and slower systems. By combining careful image cleaning, precise lung outlining, and focused analysis of texture and shape, the method reduces missed cancers while keeping false alarms relatively low. Although it still depends on good‑quality scans and can be thrown off if the earlier segmentation step fails, this work moves automated lung cancer screening closer to routine clinical use, where it could help doctors detect disease earlier and improve outcomes for many patients.

Citation: R, N., C M, V. Modified ShuffleNet trained on gradient pattern and shape-based features for lung cancer classification with improved M-SegNet segmentation. Sci Rep 16, 11185 (2026). https://doi.org/10.1038/s41598-026-39492-6

Keywords: lung cancer, CT imaging, deep learning, medical AI, computer-aided diagnosis