Clear Sky Science

Evaluating Sentinel-2 gap filling techniques for cloud removal and data reconstruction

Clearing the View from Space

Satellites like Europe’s Sentinel-2 give farmers, water managers, and climate scientists a bird’s‑eye view of Earth at high detail. But there is a stubborn problem: clouds and sensor glitches punch holes in these images, just when decisions about irrigation, crop health, or drought must be made. This paper asks a practical question with big consequences for food and water security: among the many ways to “fill in” missing satellite pixels, which ones actually work best, and under what conditions?

Why Missing Pixels Matter

High‑resolution optical satellites record how fields, forests, and water bodies change every few days. For agriculture, this means tracking crop growth, spotting stress early, and planning irrigation before plants suffer. Yet clouds often hide large parts of the ground, and occasional sensor failures can create permanent stripes of missing data. In some regions, long stretches of time pass with only a few clear images. If these gaps are not repaired carefully, estimates of crop yield, water use, or land cover can be badly biased, undermining decisions that depend on accurate, continuous information.

Different Ways to Patch the Holes

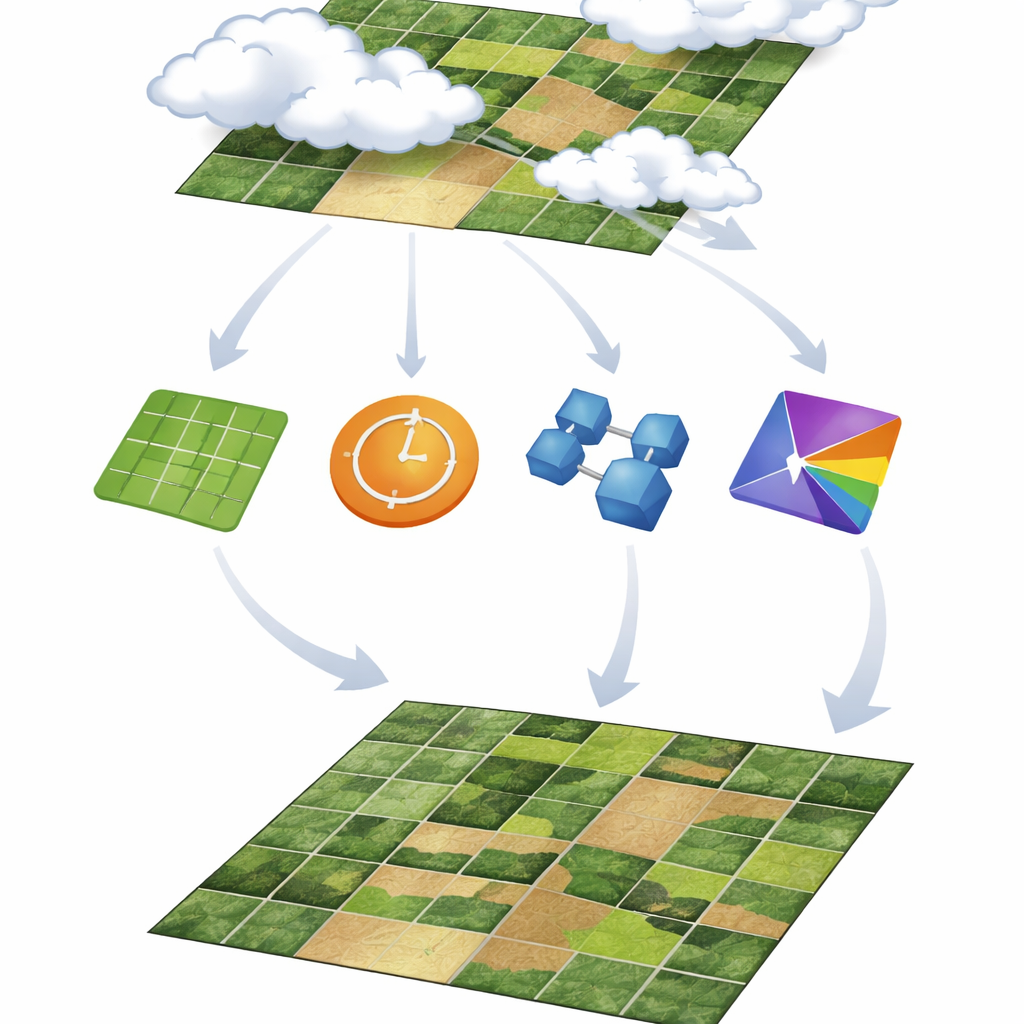

Scientists have developed a toolbox of gap‑filling methods, which the authors group into four families. Spatial methods look sideways, using nearby pixels in the same image to estimate missing values. Temporal methods look along the timeline of a single pixel, using earlier and later dates to fill gaps. Spatio‑temporal methods combine both directions, learning patterns across space and time at once. Finally, spatio‑spectral methods exploit relationships between the image’s spectral bands, using information recorded at other wavelengths to restore what is missing in one band. This study deliberately focuses on methods that use only Sentinel‑2 data, avoiding extra inputs such as weather records or imagery from other satellites, so that the solutions can be applied wherever Sentinel‑2 is available.
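As a rough illustration of the spatio‑spectral idea, the Python sketch below fills a missing stretch in one band from a second, complete band by fitting a straight line on the pixels where the target band is clear. It is a simplified stand‑in, not the study’s method: the authors’ SSRF uses random forests, and the linear model, the function names `fit_line` and `fill_band_from_reference`, and the toy values are all assumptions made for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


def fill_band_from_reference(target, reference):
    """Fill None pixels in `target` using a complete `reference` band.

    Hypothetical helper: both bands are flat lists with one value per pixel,
    and `reference` is assumed to have no missing values.
    """
    # Learn the band-to-band relationship only where the target band is clear.
    known = [(r, t) for r, t in zip(reference, target) if t is not None]
    slope, intercept = fit_line([r for r, _ in known], [t for _, t in known])
    # Keep clear pixels unchanged; predict the missing ones from the reference.
    return [t if t is not None else intercept + slope * r
            for r, t in zip(reference, target)]


# Toy integer reflectance values, invented for illustration.
reference = [100, 200, 300, 400, 500]
target = [200, 400, None, 800, 1000]
print(fill_band_from_reference(target, reference))  # → [200, 400, 600.0, 800, 1000]
```

A random forest would replace the single straight line with a flexible, nonlinear mapping that can use several predictor bands at once; the linear version only shows the shape of the idea.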

Testing Under Controlled Cloud Scenarios

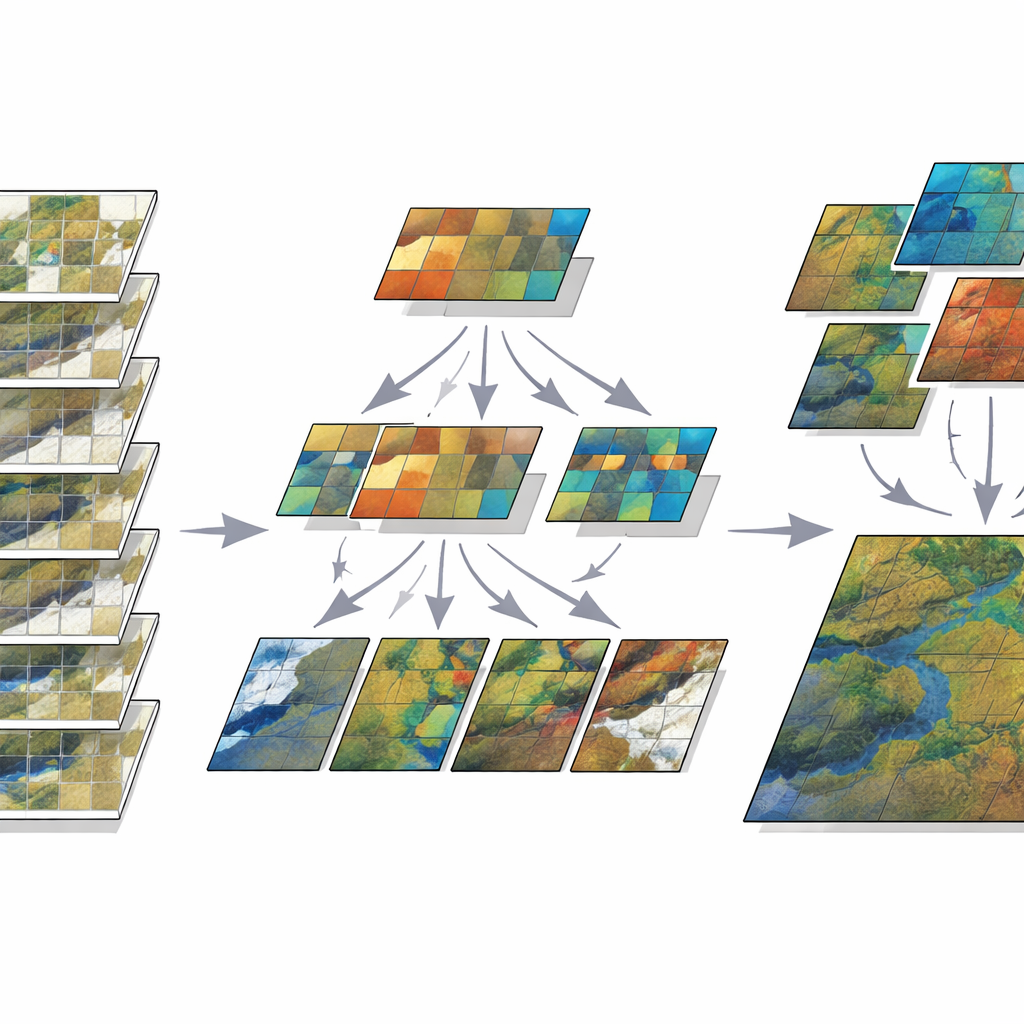

To compare these approaches fairly, the authors created artificial clouds over a mixed farming region in Morocco. They used a mostly cloud‑free Sentinel‑2 series from spring and summer 2022 and then “masked out” pixels to mimic different kinds of cloud cover. Some tests removed a single round patch in the middle of an image; others scattered several irregular patches to imitate more chaotic clouds. They also created time‑series gaps, both as long blocks of missing dates and as separate missing images sprinkled through the season. Six key Sentinel‑2 bands, from visible colors to shortwave infrared, were examined. For each method, the team measured how well the reconstructed pixels matched the original cloud‑free image, and they also judged visual quality and computing time.
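The mask‑then‑score procedure can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: a single circular patch centred on a small grid stands in for an artificial cloud, and root‑mean‑square error (RMSE) over the hidden pixels stands in for the accuracy measures. The helper names are hypothetical, and the paper’s exact masks and metrics may differ.

```python
import math


def circular_mask(rows, cols, radius):
    """Set of (row, col) pixels inside a circle centred on the image."""
    cr, cc = (rows - 1) / 2, (cols - 1) / 2
    return {(r, c) for r in range(rows) for c in range(cols)
            if (r - cr) ** 2 + (c - cc) ** 2 <= radius ** 2}


def apply_mask(image, mask):
    """Copy of `image` (a list of rows) with masked pixels set to None."""
    return [[None if (r, c) in mask else v for c, v in enumerate(row)]
            for r, row in enumerate(image)]


def masked_rmse(original, reconstructed, mask):
    """RMSE computed only over the pixels that were artificially hidden."""
    errors = [(original[r][c] - reconstructed[r][c]) ** 2 for r, c in mask]
    return math.sqrt(sum(errors) / len(errors))


# A 5 x 5 toy "image" and a radius-1 cloud covering the centre pixel and
# its four direct neighbours.
image = [[float(5 * r + c) for c in range(5)] for r in range(5)]
cloud = circular_mask(5, 5, 1)
cloudy = apply_mask(image, cloud)
# A deliberately poor "reconstruction" that is off by 2 wherever it guessed.
guess = [[image[r][c] + 2.0 if v is None else v for c, v in enumerate(row)]
         for r, row in enumerate(cloudy)]
print(len(cloud), masked_rmse(image, guess, cloud))  # → 5 2.0
```

Scattered, irregular clouds would simply be a different set of `(row, col)` pairs passed to the same functions, and time‑series gaps would be built the same way by hiding whole dates instead of pixels.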

Which Methods Come Out on Top

Simple spatial methods, such as kriging and distance‑based interpolation, did reasonably well for small, neat gaps but quickly broke down as clouds became larger or more irregular. They could also be very slow when applied to full high‑resolution images. Temporal methods, which follow each pixel over time, fared better, especially when gaps were short and broken up rather than long continuous blocks. However, their success depended on how stable the landscape was: smooth seasonal changes in crops or water were easier to handle than abrupt shifts in bare soil after rain or irrigation.
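To show the two baseline ideas side by side, here is a minimal Python sketch. It uses inverse distance weighting (IDW), a common distance‑based interpolator, as the spatial example (kriging additionally fits a model of spatial correlation and is not shown), and straight‑line interpolation between the nearest clear dates as the temporal example. The function names, the distance power of 2, and the toy values are assumptions, not the study’s settings.

```python
def idw_fill(image, power=2.0):
    """Spatial fill: each None pixel becomes an inverse-distance-weighted
    mean of every clear pixel in the same image (a list of rows)."""
    clear = [(r, c, v) for r, row in enumerate(image)
             for c, v in enumerate(row) if v is not None]
    filled = [row[:] for row in image]
    for r, row in enumerate(image):
        for c, v in enumerate(row):
            if v is None:
                # Weight = 1 / distance**power. The squared distance is never 0
                # because a missing pixel never appears in `clear`.
                weights = [((r - rr) ** 2 + (c - cc) ** 2) ** (-power / 2)
                           for rr, cc, _ in clear]
                total = sum(w * val for w, (_, _, val) in zip(weights, clear))
                filled[r][c] = total / sum(weights)
    return filled


def temporal_fill(days, values):
    """Temporal fill for one pixel: linear interpolation between the nearest
    clear dates before and after each gap; gaps at either end copy the nearest
    clear value. `days` must be sorted and at least one value must be clear."""
    clear = [(d, v) for d, v in zip(days, values) if v is not None]
    out = []
    for d, v in zip(days, values):
        if v is not None:
            out.append(v)
            continue
        before = [(dd, vv) for dd, vv in clear if dd < d]
        after = [(dd, vv) for dd, vv in clear if dd > d]
        if before and after:
            (d0, v0), (d1, v1) = before[-1], after[0]
            out.append(v0 + (v1 - v0) * (d - d0) / (d1 - d0))
        elif before:
            out.append(before[-1][1])
        else:
            out.append(after[0][1])
    return out


print(idw_fill([[1.0, None, 3.0]]))  # → [[1.0, 2.0, 3.0]]
print(temporal_fill([0, 5, 10, 15], [2.0, None, None, 5.0]))  # → [2.0, 3.0, 4.0, 5.0]
```

Two limitations described above show up directly in the sketch: `idw_fill` visits every clear pixel for every missing one, so its cost grows quickly on full high‑resolution scenes, and `temporal_fill` draws a straight line between clear dates, so it cannot reproduce an abrupt change such as soil wetting after rain that happens inside the gap.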

Power of Combining Space, Time, and Color

The most accurate and robust results came from methods that blend several kinds of information at once. A machine‑learning approach that clusters pixels with similar seasonal behavior and then applies linear regression (called CLR in the paper) consistently delivered low errors across many gap sizes, shapes, and bands. A deep learning model based on a U‑Net architecture also performed strongly, especially for complex spatial gaps, but was costly to train and struggled with long stretches of missing dates. Meanwhile, a spatio‑spectral method using random forests (SSRF) excelled at preserving fine detail and natural‑looking textures, particularly in visible and near‑infrared bands, as long as a nearby clear image in time was available for training.
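The cluster‑then‑regress idea behind CLR can be illustrated with a small Python sketch. This is a simplified reading, not the authors’ code: pixels are grouped by plain k‑means on their values at earlier clear dates, and within each group a straight line maps one reference date to the gap date using the pixels that are clear on the gap date. The number of clusters, the choice of reference date, the toy data, and all function names (`kmeans`, `fit_line`, `cluster_regression_fill`) are assumptions.

```python
def kmeans(features, k, iters=20):
    """Plain k-means; centroids start at evenly spaced input rows."""
    centroids = [list(features[i * len(features) // k]) for i in range(k)]
    labels = [0] * len(features)
    for _ in range(iters):
        # Assign each pixel to the nearest centroid (squared Euclidean distance).
        labels = [min(range(k), key=lambda j: sum((a - b) ** 2
                                                   for a, b in zip(f, centroids[j])))
                  for f in features]
        # Move each centroid to the mean of its current members.
        for j in range(k):
            members = [f for f, lab in zip(features, labels) if lab == j]
            if members:
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def fit_line(xs, ys):
    """Least-squares line; falls back to a flat line if all xs are equal."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx if sxx else 0.0
    return slope, my - slope * mx


def cluster_regression_fill(history, target, k=2, ref=-1):
    """history: per-pixel values on earlier clear dates (clustering features).
    target: per-pixel values on the gap date, None where cloudy.
    Each cluster gets its own line from history[i][ref] to the target date."""
    labels = kmeans(history, k)
    filled = list(target)
    for j in range(k):
        idx = [i for i, lab in enumerate(labels) if lab == j]
        train = [i for i in idx if target[i] is not None]
        if not train:
            continue  # no clear pixel in this cluster to learn from; leave None
        slope, intercept = fit_line([history[i][ref] for i in train],
                                    [target[i] for i in train])
        for i in idx:
            if target[i] is None:
                filled[i] = intercept + slope * history[i][ref]
    return filled


# Invented toy data: three "crop" pixels that brighten over the season and
# three "bare soil" pixels that stay flat; the last pixel of each group is
# cloudy on the gap date.
history = [[2000, 4000], [2200, 4200], [2400, 4400],
           [1000, 1000], [1200, 1200], [1400, 1400]]
target = [8000, 8400, None, 1500, 1700, None]
print(cluster_regression_fill(history, target))
# → [8000, 8400, 8800.0, 1500, 1700, 1900.0]
```

The two toy groups follow different relationships (a doubling for the crop pixels, a fixed offset for the soil pixels). A single line fitted to all six pixels would blend them, whereas clustering first lets each land‑cover type keep its own relationship, which is the intuition behind clustering before regression.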

What This Means for Real‑World Use

For non‑specialists who rely on satellite‑based products, such as irrigation planners, crop insurers, and environmental agencies, the message is clear. No single technique is best for every situation, but methods that combine spatial, temporal, and spectral information now clearly outperform older, simpler approaches that look only at neighboring pixels in a single image. The study shows that clustering‑plus‑regression and spatio‑spectral random forests offer a practical balance of accuracy, visual quality, and computing cost, while deep learning becomes attractive when powerful hardware and training data are available. By laying out a transparent testing framework and sharing their code openly, the authors provide a roadmap for choosing and improving gap‑filling tools, helping to turn cloudy, broken satellite records into reliable information for managing land and water.

Citation: Grich, S., Elfarkh, J., Ouaadi, N. et al. Evaluating Sentinel-2 gap filling techniques for cloud removal and data reconstruction. Sci Rep 16, 9464 (2026). https://doi.org/10.1038/s41598-026-39488-2

Keywords: Sentinel-2, cloud removal, gap filling, remote sensing, agricultural monitoring