Clear Sky Science

Benchmark evaluation of video large language models in quality assessment of science popularization videos for dry eye

Why this matters for everyday viewers

Short video apps are fast becoming people’s first stop for health advice, including advice on eye problems such as dry eye, which affects hundreds of millions of people worldwide. But alongside helpful clips, low‑quality or misleading videos are easy to find and hard for doctors to police. This study asks whether new artificial intelligence systems that can “watch” videos could help check the quality of these health clips automatically, and shows why, for now, such tools are not ready to replace expert judgment.

Dry eyes and the rise of health videos

Dry eye is more than a minor annoyance; it can blur vision, cause pain, and disrupt work and daily life. As the condition becomes more common, especially among older adults and heavy screen users, many people search online for explanations and self‑care tips. Platforms like TikTok host countless short videos about dry eye, but their open nature means anyone can post content, regardless of medical training. Poor or exaggerated advice can delay proper treatment or encourage unsafe home remedies, so reliable ways to check video quality at scale are urgently needed.

How the researchers tested AI video reviewers

The team collected 185 Chinese‑language TikTok videos about dry eye using a new, neutral account and strict rules to keep only original, educational clips. Two eye specialists then scored each video using three established tools often used in medical‑education research. One tool rated how easy the videos were to understand and how clearly they suggested concrete steps viewers could take. A second provided an overall quality grade from poor to excellent. The third broke quality down into aspects such as how smoothly information was presented, how accurate it was, how well extra elements like animations were used, and how closely the content matched the video’s title.

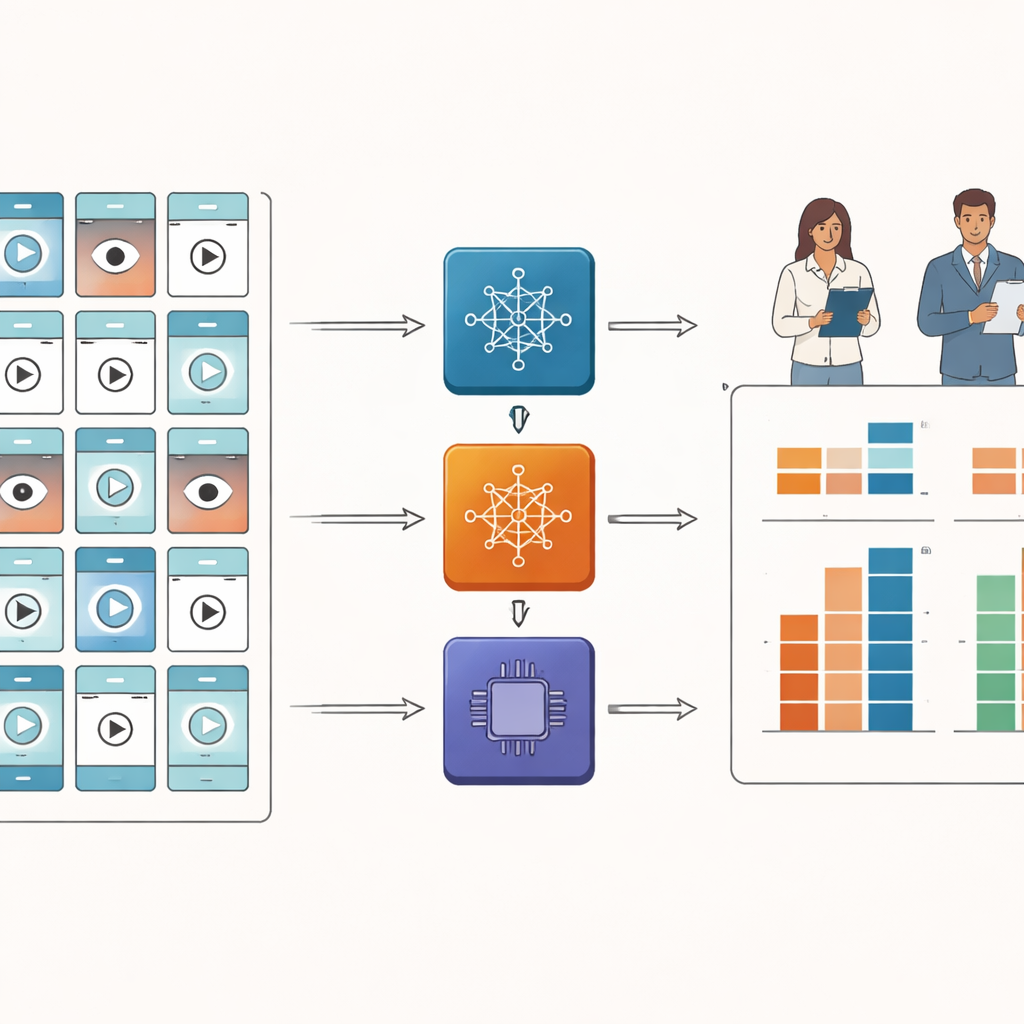

Putting video‑savvy AI models to the test

Next, the researchers fed the same videos into three advanced “video large language models,” AI systems designed to interpret visual information frame by frame and answer questions about what they see. They crafted detailed instructions so each model would mimic the doctors’ scoring tools as closely as possible. The key question was whether the AI and the human experts would give similar scores. To measure this, the team used a standard reliability statistic that captures how closely two different “judges” agree, not just in trends but in actual numbers.
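To make the difference between agreeing "in trends" and agreeing "in actual numbers" concrete, here is a minimal sketch of one widely used agreement statistic, the intraclass correlation coefficient in its two‑way, single‑rater, absolute‑agreement form. This summary does not name the statistic the team used, so the choice of this particular form is an assumption, and the function name and the scores below are invented purely for illustration.

```python
from statistics import mean


def icc_absolute_agreement(scores):
    """Two-way random-effects, single-rater, absolute-agreement ICC.

    `scores` is a list of rows, one row per video, with one score per
    rater in each row. Hypothetical helper, not the study's own code.
    """
    n = len(scores)        # number of videos
    k = len(scores[0])     # number of raters
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need at least 2 videos and 2 raters, no gaps")

    grand = mean(x for row in scores for x in row)
    row_means = [mean(row) for row in scores]
    col_means = [mean(row[j] for row in scores) for j in range(k)]

    # Split total variation into video, rater, and residual parts.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    denom = ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    if denom == 0:
        raise ValueError("scores do not vary, so agreement is undefined")
    return (ms_rows - ms_error) / denom


# Made-up scores: the "model" ranks videos exactly like the "expert"
# but is always two points more generous.
expert = [1, 2, 3, 4, 5]
model = [3, 4, 5, 6, 7]
shifted = icc_absolute_agreement([list(p) for p in zip(expert, model)])
same = icc_absolute_agreement([[s, s] for s in expert])
print(round(shifted, 3), round(same, 3))  # 0.556 1.0
```

In this toy case the two judges rank the videos identically, so a plain correlation would be perfect, yet the agreement score drops to about 0.56 because one judge is consistently more generous. That kind of systematic over‑ or under‑scoring is what an absolute‑agreement measure is meant to expose.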

What the AI got right—and wrong

Human raters largely agreed with each other, suggesting their scores were stable and trustworthy. By contrast, the three AI systems showed poor agreement with the experts in most areas. None of the models could reliably match doctors on overall video quality or on detailed features such as how well titles reflected content. One model tended to score higher than the experts, another tended to score lower, and the third sometimes landed in between. The single relative bright spot was “actionability” (how clearly videos told viewers what to do), where two models reached a moderate level of agreement that still fell short of what would be needed for real‑world decision‑making.

Why today’s AI falls short

The authors suggest several reasons for this gap. The tested AI systems were trained mainly on everyday scenes and generic video tasks, not on carefully structured health education. Many science videos rely heavily on spoken explanations, subtitles, charts, and metaphors rather than dramatic moving images. Yet the models in this study analyzed only the visual frames; they did not listen to the audio or read the titles and other descriptive information that humans use to judge relevance and accuracy. As a result, large portions of meaning never reached the AI, especially when key details were spoken rather than shown. Figurative language common in Chinese health education may also confuse systems that interpret statements literally.

What this means for patients and platforms

This work provides an early roadmap, not a ready‑made safety net. It shows that, in principle, familiar quality checklists for health information can be translated into instructions for AI models that watch videos. It also makes clear that current general‑purpose systems are not yet dependable enough to grade medical videos or police misinformation without human oversight. By releasing their evaluation framework and annotated video dataset, the authors hope to spur better, more specialized models that can combine visuals, sound, and extra context, and that can work across diseases and languages. For now, viewers should continue to treat short health videos as starting points, not medical advice, and platforms should not rely on AI alone to guarantee trustworthy information.

Citation: Zhou, S., Huang, M., Wei, J. et al. Benchmark evaluation of video large language models in quality assessment of science popularization videos for dry eye. Sci Rep 16, 8756 (2026). https://doi.org/10.1038/s41598-026-39444-0

Keywords: dry eye disease, health videos, artificial intelligence, misinformation, TikTok