Clear Sky Science · en

A cascaded interferometer-microresonator structure for photonic reservoir computing

Light as an ultra-fast problem solver

Modern life runs on data: from streaming video to high-speed internet backbones, we constantly push electronics to move information faster. But traditional computer chips struggle to keep up without overheating or wasting huge amounts of energy. This work explores a different approach—using light on a chip to do part of the computing. The authors show how a clever combination of tiny optical circuits can process complex time-varying signals at tens of billions of operations per second, while remaining simpler and more practical than previous designs.

Turning a physics trick into a thinking machine

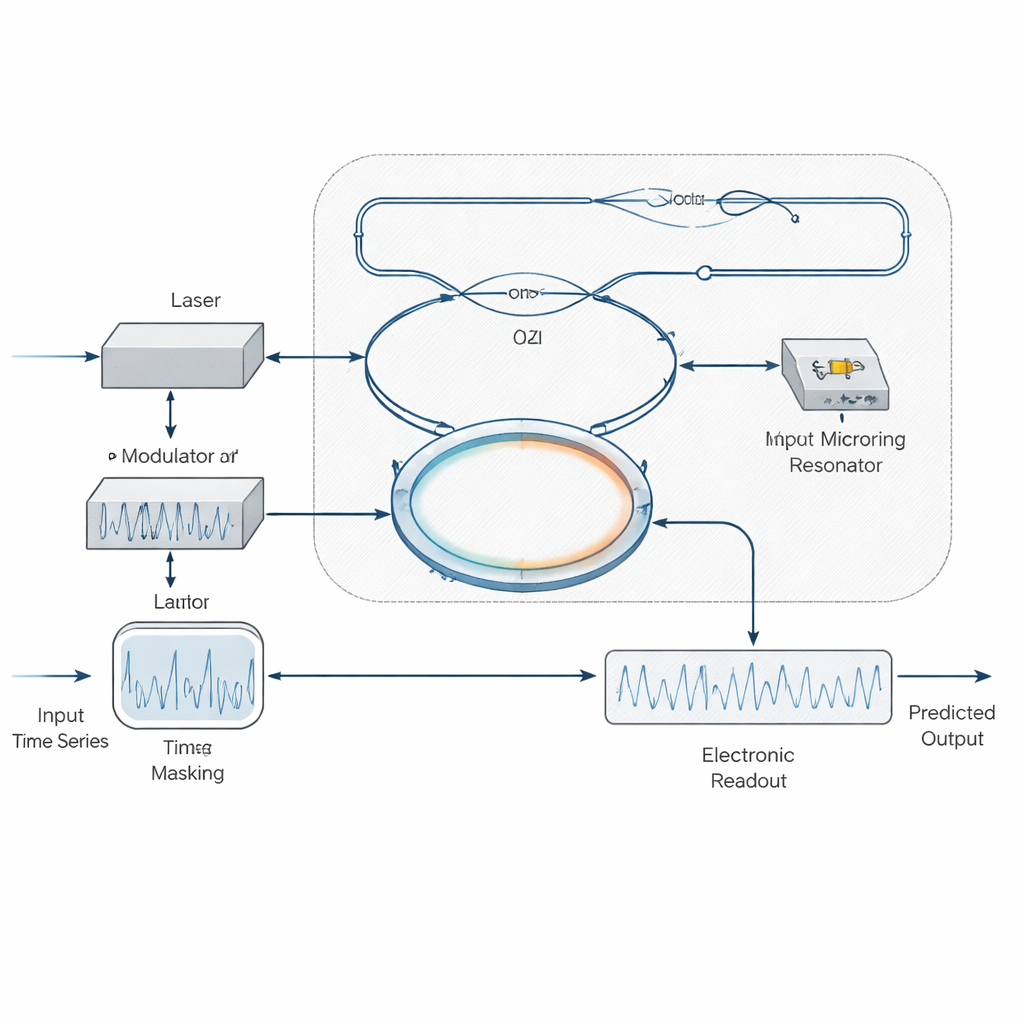

The core idea behind this research is a computing method called “reservoir computing.” Instead of building a large, carefully wired neural network, you send an input signal into a fixed, complex system—here, a network of tiny optical components on a chip. Because of the way waves of light interfere and mix inside this network, the system naturally transforms the input into a rich pattern of internal states. A simple electronic circuit at the output then learns how to combine those states to predict or classify signals, such as complicated time series used in machine learning benchmarks or distorted data streams in fiber-optic links.
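The split between a fixed reservoir and a trained readout can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the reservoir here is a generic software stand-in (a random recurrent network with a tanh nonlinearity), not the photonic circuit from the paper, and the function names, the 50-node size, the delay-recall task, and the ridge parameter are all hypothetical choices. The point it shows is that only the readout weights are learned, using a single linear regression.

```python
import numpy as np

def run_reservoir(u, n_nodes=50, seed=0):
    """Drive a fixed random reservoir with the input u and record its states."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-1.0, 1.0, n_nodes)             # fixed input weights
    w = rng.normal(0.0, 1.0, (n_nodes, n_nodes))       # fixed internal weights
    w *= 0.9 / np.max(np.abs(np.linalg.eigvals(w)))    # scale spectral radius to 0.9
    x = np.zeros(n_nodes)
    states = np.empty((len(u), n_nodes))
    for t, u_t in enumerate(u):
        x = np.tanh(w @ x + w_in * u_t)                # the reservoir is never trained
        states[t] = x
    return states

def add_bias(states):
    """Append a constant column so the readout can learn an offset."""
    return np.hstack([states, np.ones((len(states), 1))])

def train_readout(states, target, ridge=1e-6):
    """Ridge regression: the only learned part of the system."""
    X = add_bias(states)
    return np.linalg.solve(X.T @ X + ridge * np.eye(X.shape[1]), X.T @ target)

# Toy task (an assumption for illustration): recall the input from 3 steps ago.
rng = np.random.default_rng(1)
u = rng.uniform(-0.5, 0.5, 1200)
target = np.concatenate([np.zeros(3), u[:-3]])

states = run_reservoir(u)
washout, split = 100, 900                              # skip start-up, then train/test split
weights = train_readout(states[washout:split], target[washout:split])
prediction = add_bias(states[split:]) @ weights
nmse = np.mean((prediction - target[split:]) ** 2) / np.var(target[split:])
print(f"test NMSE on the delay-recall task: {nmse:.4f}")
```

In a photonic implementation, `run_reservoir` would be replaced by the physical chip and its detector samples, while a routine like `train_readout` would stay in electronics.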

Why previous photonic approaches hit a speed limit

Earlier optical reservoir computers often relied on the intrinsic nonlinear effects of silicon microring resonators, microscopic racetrack-like loops that trap and delay light. In these devices, intense light changes the material’s properties, which in turn changes how the ring behaves. While this provides the nonlinearity needed for computing, the key effects are tied to slow physical processes, such as the motion of charge carriers and heat flow, which unfold over billionths to hundreds of billionths of a second (nanoseconds to hundreds of nanoseconds). To match these slow timescales, engineers must add long delay lines on the chip, which are hard to fabricate, lossy, and ultimately cap the overall processing speed.

A simpler, faster way: keep the optics linear, move nonlinearity to the edges

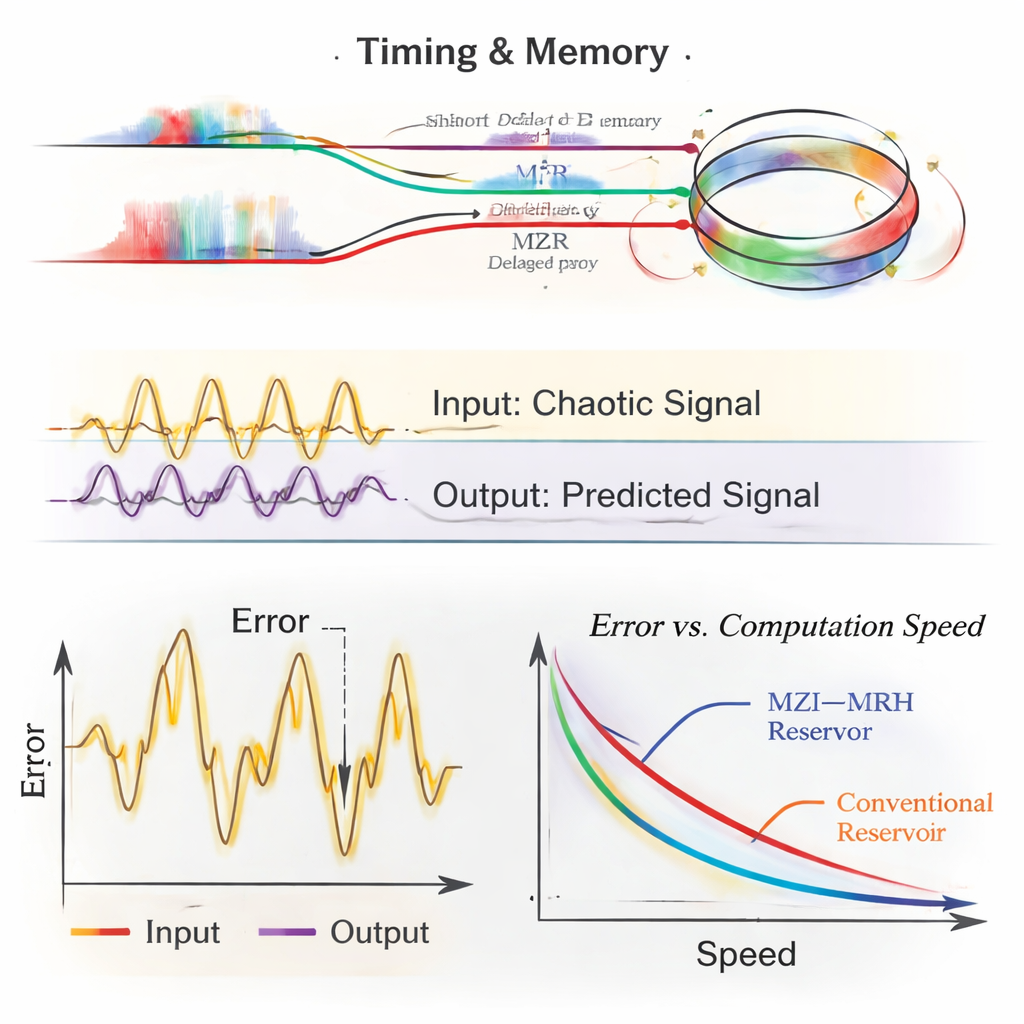

The authors propose a different strategy: operate the microring resonator in a purely linear regime, at extremely low optical powers where those slow material changes never kick in. Instead of asking the ring itself to behave nonlinearly, they place the nonlinear behavior at the modulation and detection stages. A continuous-wave laser is first imprinted with a masked version of the input signal, by varying either the light’s brightness or its phase, and then sent through an on-chip interferometer (a Mach–Zehnder structure) followed by the microring. These linear components create multiple delayed and filtered copies of the signal that interfere with one another. When this complex optical pattern hits a photodetector, whose output current follows the light’s intensity (the square of the field amplitude) rather than the field itself, the required nonlinearity emerges “for free.” An electronic readout layer then learns how to mix current and past detector samples, effectively sharing the memory duties between optics and electronics.
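The signal chain described here (masking, modulation, linear interferometer and ring, square-law detection) can be sketched as a discrete-time toy model. Everything specific in it is an assumption made for illustration rather than a value from the paper: the mask, the delays measured in samples, the all-pass ring configuration, the coupling and loss coefficients `r` and `a`, and the choice of phase modulation. The sketch is meant to show that the optics stays linear in the field while the detected current does not.

```python
import numpy as np

def apply_mask(u, mask):
    """Hold each input sample for len(mask) time steps and scale it by the mask."""
    return np.repeat(u, len(mask)) * np.tile(mask, len(u))

def delay(field, n):
    """Delay a complex field by n >= 1 samples, padding the start with zeros."""
    return np.concatenate([np.zeros(n, dtype=complex), field[:-n]])

def mzi(field, arm_delay, phase=0.0):
    """Unbalanced Mach-Zehnder: the field interferes with a delayed copy of itself."""
    return 0.5 * (field + np.exp(1j * phase) * delay(field, arm_delay))

def ring_all_pass(field, round_trip, r=0.8, a=0.99, phase=0.0):
    """All-pass ring (assumed geometry): r is self-coupling, a is round-trip amplitude."""
    g = a * np.exp(1j * phase)
    out = np.zeros(len(field), dtype=complex)
    for n in range(len(field)):
        past = n - round_trip
        x_old = field[past] if past >= 0 else 0.0
        y_old = out[past] if past >= 0 else 0.0
        # Difference equation of H(z) = (r - g z^-m) / (1 - r g z^-m)
        out[n] = r * field[n] - g * x_old + r * g * y_old
    return out

def linear_optics(field):
    """Cascade of interferometer and ring; linear in the optical field."""
    return ring_all_pass(mzi(field, arm_delay=3, phase=0.5), round_trip=2, phase=1.0)

def photodetect(field):
    """Square-law detection: the current follows |field|^2."""
    return np.abs(field) ** 2

rng = np.random.default_rng(0)
u = rng.uniform(0.0, 1.0, 50)                 # input samples
mask = np.array([1.0, -1.0, 0.5, -0.5])       # hypothetical 4-step mask
drive = apply_mask(u, mask)
field = np.exp(1j * np.pi * drive)            # phase modulation of a unit-amplitude laser
current = photodetect(linear_optics(field))   # samples passed on to the readout
```

Doubling the optical field doubles the output of `linear_optics` but quadruples `current`, which is the sense in which the nonlinearity sits at the detector rather than in the ring.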

Building a compact optical “short-term memory”

To show what their design can do, the researchers simulate a reservoir made of an unbalanced Mach–Zehnder interferometer cascaded with a microring resonator. By carefully choosing how long one interferometer arm is compared with the other, and how strongly the ring couples to the bus waveguide, they tune how much different “moments in time” of the input can interact. They also explore how the length of the digital mask and the number of samples used in the electronic readout affect performance. With short masks and a relatively modest electronic memory, their system accurately tackles standard prediction challenges such as the NARMA-10, Mackey–Glass, and Santa Fe time-series tasks, achieving low error while operating at effective computation speeds from about 8 to 25 gigahertz—up to an order of magnitude faster than many earlier silicon-based optical reservoirs.
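For readers unfamiliar with the NARMA-10 benchmark named above, the sketch below generates it using the definition commonly found in the reservoir-computing literature, together with the normalized mean squared error often used to score predictions. Whether the paper uses exactly these constants, this input range, or this error metric is an assumption; the function names are hypothetical.

```python
import numpy as np

def narma10(n_steps, seed=0):
    """Generate an input u and the NARMA-10 target y (commonly used definition)."""
    rng = np.random.default_rng(seed)
    u = rng.uniform(0.0, 0.5, n_steps)         # random input in [0, 0.5]
    y = np.zeros(n_steps)
    for t in range(9, n_steps - 1):
        y[t + 1] = (0.3 * y[t]
                    + 0.05 * y[t] * np.sum(y[t - 9:t + 1])   # last 10 outputs
                    + 1.5 * u[t - 9] * u[t]                  # input from 9 steps ago
                    + 0.1)
    return u, y

def nmse(target, prediction):
    """Normalized mean squared error: 0 is perfect, 1 matches predicting the mean."""
    return np.mean((target - prediction) ** 2) / np.var(target)

u, y = narma10(400)
warmup = 50                                     # discard the start-up transient
baseline = nmse(y[warmup:], np.full(len(y) - warmup, y[warmup:].mean()))
print(f"NMSE of a constant (mean) predictor: {baseline:.3f}")
```

The target depends on the input from nine steps earlier and on the previous ten outputs, which is why the task probes both the memory and the nonlinearity of a reservoir. A useful predictor has to score well below the constant-predictor baseline.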

Cleaning up real-world optical communication signals

Beyond abstract benchmarks, the team applies their reservoir to a realistic fiber-optic communication scenario: a 112-gigabaud, four-level pulse-amplitude modulation (PAM-4) link in the O-band, similar to setups being standardized for 800-gigabit Ethernet. Such links suffer from dispersion in the fiber and distortions introduced by the transmitter laser. In simulations, the new photonic reservoir substantially lowers the bit-error rate compared with a conventional digital feed-forward equalizer of the same complexity. It also tolerates more accumulated dispersion—equivalent to extending the transmission distance by roughly 15 kilometers—without crossing common error-correction thresholds, all while keeping the heavy lifting in the optical domain.
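As a point of reference for the baseline mentioned above, a feed-forward equalizer is a tapped delay line whose weights are fitted to known training symbols. The sketch below is a minimal toy version, assuming a hypothetical three-tap channel with mild noise instead of real fiber dispersion or laser distortion, an 11-tap equalizer, and a least-squares fit. None of these values come from the paper, and the sketch does not reproduce its simulation.

```python
import numpy as np

LEVELS = np.array([-3.0, -1.0, 1.0, 3.0])     # the four PAM-4 amplitude levels

def slice_pam4(x):
    """Decide on the nearest PAM-4 level for each sample."""
    return LEVELS[np.argmin(np.abs(x[:, None] - LEVELS[None, :]), axis=1)]

def tap_matrix(received, n_taps):
    """Row k holds received[k], received[k-1], ..., zero-padded at the start."""
    padded = np.concatenate([np.zeros(n_taps - 1), received])
    n = len(received)
    cols = [padded[n_taps - 1 - i : n_taps - 1 - i + n] for i in range(n_taps)]
    return np.stack(cols, axis=1)

def train_ffe(received, symbols, n_taps=11):
    """Least-squares fit of the equalizer taps to known training symbols."""
    taps, *_ = np.linalg.lstsq(tap_matrix(received, n_taps), symbols, rcond=None)
    return taps

rng = np.random.default_rng(1)
symbols = rng.choice(LEVELS, size=4000)
channel = np.array([1.0, 0.4, 0.2])           # hypothetical inter-symbol interference
received = np.convolve(symbols, channel)[:len(symbols)]
received = received + rng.normal(0.0, 0.05, len(symbols))   # mild additive noise

n_train = 2000
taps = train_ffe(received[:n_train], symbols[:n_train])
equalized = tap_matrix(received, len(taps)) @ taps

ser_raw = np.mean(slice_pam4(received[n_train:]) != symbols[n_train:])
ser_ffe = np.mean(slice_pam4(equalized[n_train:]) != symbols[n_train:])
print(f"symbol error rate without equalizer: {ser_raw:.3f}, with FFE: {ser_ffe:.3f}")
```

An equalizer of this kind is purely linear in the detected samples. The comparison in the text is against such a linear filter of the same complexity, with the photonic reservoir adding optical interference ahead of the detector.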

What this means for future ultra-fast computing

In everyday terms, this study shows how to turn simple optical building blocks into a powerful, high-speed “analog pre-processor” for data. By avoiding slow material effects and long optical delays, and by leaning on fast modulators, detectors, and smart digital post-processing, the proposed design can in principle scale to tens or even a hundred gigahertz with existing technology. That could make future data centers and communication systems faster and more energy-efficient, with compact photonic chips acting as front-end co-processors that handle complex signal dynamics before digital electronics take over.

Citation: Mataji-Kojouri, A., Kühl, S., Seifi Laleh, M. et al. A cascaded interferometer-microresonator structure for photonic reservoir computing. Sci Rep 16, 6492 (2026). https://doi.org/10.1038/s41598-026-39410-w

Keywords: photonic reservoir computing, silicon photonics, microring resonator, optical signal processing, high-speed communications