Clear Sky Science

Optimization of infectious disease intervention measures using reinforcement learning with UK COVID-19 epidemic data

Smart Tools for Tough Health Decisions

When a new disease sweeps through a country, leaders must quickly decide how hard to clamp down on daily life. Close everything and you may save lives but wreck the economy; move too slowly and hospitals overflow. This paper explores whether a form of artificial intelligence, called reinforcement learning, can help governments find smarter, more balanced responses using detailed simulations of how a virus like COVID‑19 actually spreads through real communities.

Simulating a Country in a Computer

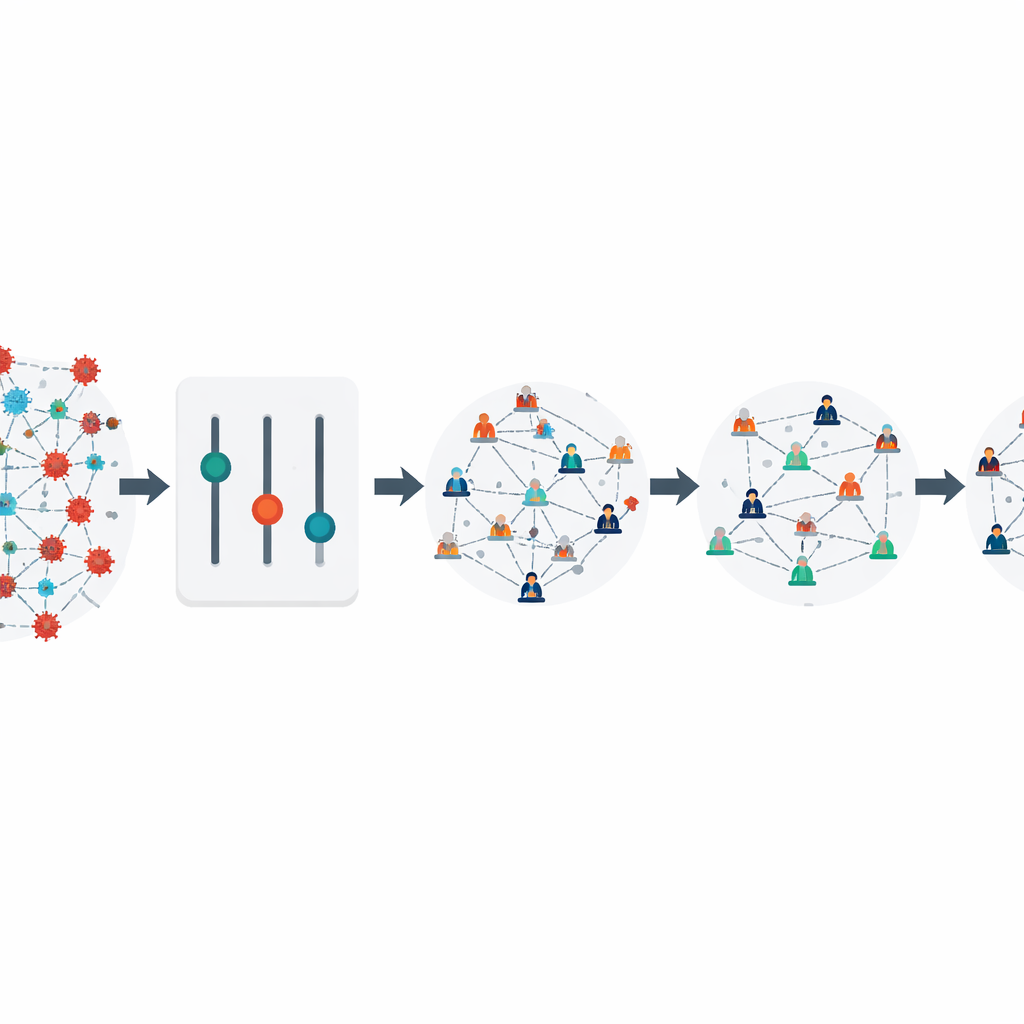

Instead of using simple equations that treat people as identical, the authors build on Covasim, a rich computer model that follows thousands of virtual individuals as they live, work, study, and interact. Each simulated person has an age, a place in family, school, and workplace networks, and a health status that can change from healthy to infected to recovered or dead. By carefully adjusting the model’s settings, the team makes this virtual United Kingdom behave like the real one did during the first wave of COVID‑19, matching official counts of cases and deaths from early 2020. This calibration step is crucial, because any strategy the computer learns must work in a world that resembles our own, not a toy universe.
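
To make the idea of following individuals through contact networks concrete, here is a minimal sketch of an agent-based outbreak in plain Python. It is not Covasim and does not use Covasim's API; the `Person` class, the `build_population` and `step` functions, the random contact network, and the probabilities are all illustrative assumptions, far simpler than the calibrated model the authors use.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Person:
    """One virtual individual (hypothetical, heavily simplified)."""
    age: int
    state: str = "susceptible"  # "susceptible", "infected", or "recovered"
    contacts: list = field(default_factory=list)  # indices of people they meet


def build_population(n, contacts_per_person, rng):
    """Create n people, each with a fixed random set of contacts."""
    people = [Person(age=rng.randint(0, 90)) for _ in range(n)]
    for i, person in enumerate(people):
        others = [j for j in range(n) if j != i]
        person.contacts = rng.sample(others, contacts_per_person)
    return people


def step(people, p_transmit, p_recover, rng):
    """Advance the outbreak by one time step."""
    newly_infected, newly_recovered = [], []
    for i, person in enumerate(people):
        if person.state != "infected":
            continue
        # Each infected person may pass the virus to susceptible contacts.
        for j in person.contacts:
            if people[j].state == "susceptible" and rng.random() < p_transmit:
                newly_infected.append(j)
        if rng.random() < p_recover:
            newly_recovered.append(i)
    # Apply changes after the loop so everyone sees the same starting state.
    for j in newly_infected:
        people[j].state = "infected"
    for i in newly_recovered:
        people[i].state = "recovered"


if __name__ == "__main__":
    rng = random.Random(42)
    people = build_population(n=500, contacts_per_person=5, rng=rng)
    people[0].state = "infected"
    for _ in range(30):
        step(people, p_transmit=0.1, p_recover=0.15, rng=rng)
    print(sum(p.state != "susceptible" for p in people), "people ever infected")
```

Calibration, in this picture, means tuning settings such as `p_transmit` until the simulated case and death curves match the official counts.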

Teaching a Digital Advisor to Act

Once the model behaves like reality, the researchers plug in reinforcement learning, a branch of AI in which a software “agent” repeatedly tries out decisions and is rewarded or penalized based on the outcomes. Here, the agent can adjust three main levers every simulated week: how strict partial lockdowns are, how many people are tested, and how aggressively contact tracing is used. The reward system is designed to capture two competing goals: keeping infections, severe illness, and deaths low, while also limiting damage to the economy caused by closing workplaces and isolating people. By running thousands of simulated epidemics, the agent discovers which combinations and timings of measures earn the highest overall score.
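
One way to picture a reward that balances health against the economy is as a weighted sum of costs with a minus sign in front. The sketch below is an assumption for illustration only: the `WeeklyOutcome` fields, the `weekly_reward` function, and every weight are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class WeeklyOutcome:
    """What one simulated week produced (hypothetical fields)."""
    new_infections: int
    new_severe: int
    new_deaths: int
    lockdown_level: float  # 0.0 = fully open, 1.0 = strictest partial lockdown
    people_isolated: int   # people kept home by testing and tracing


def weekly_reward(outcome, w_inf=1.0, w_severe=10.0, w_death=100.0,
                  w_lockdown=50_000.0, w_isolated=0.5):
    """Return a score where higher is better; all weights are made up."""
    health_cost = (w_inf * outcome.new_infections
                   + w_severe * outcome.new_severe
                   + w_death * outcome.new_deaths)
    economic_cost = (w_lockdown * outcome.lockdown_level
                     + w_isolated * outcome.people_isolated)
    return -(health_cost + economic_cost)


if __name__ == "__main__":
    open_week = WeeklyOutcome(8000, 400, 40, lockdown_level=0.0, people_isolated=0)
    strict_week = WeeklyOutcome(1000, 50, 5, lockdown_level=0.8, people_isolated=2000)
    print(weekly_reward(open_week), weekly_reward(strict_week))
```

Changing the weights changes what the agent learns to value: a larger `w_lockdown` pushes it toward lighter restrictions, while a larger `w_death` pushes it toward stricter ones.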

Finding Better Balance Than Fixed Rules

The study compares several learning methods and ways of describing the agent’s choices. One method that treats actions as smooth dial settings, rather than a small menu of fixed options, performs especially well. It learns to respond quickly when the virus starts to spread, imposing short but strong restrictions paired with intense testing and tracing. As the simulated outbreak comes under control, it relaxes lockdowns while keeping some testing and tracing in place, then tightens again briefly if infections threaten to surge. This flexible pattern keeps the total number of infections to about 300,000 in the model, far below what occurred under the real‑world policies used in the UK during the same period, and also below a simple “seven days open, seven days locked down” rule. Economic losses in the model are cut by more than two‑thirds compared with that rigid alternating lockdown strategy.
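
The difference between a small menu of fixed options and smooth dial settings can be sketched in a few lines. This is an illustrative assumption, not the authors' code: the three intensity levels, the `decode_discrete` and `decode_continuous` helpers, and the convention that a policy outputs numbers between -1 and 1 are all hypothetical.

```python
from itertools import product

# Menu approach: each lever (lockdown, testing, tracing) takes one of a few
# fixed levels, so the agent picks from 3 x 3 x 3 = 27 preset combinations.
LEVELS = (0.0, 0.5, 1.0)
DISCRETE_ACTIONS = list(product(LEVELS, repeat=3))


def decode_discrete(index):
    """Look up the (lockdown, testing, tracing) levels for a menu choice."""
    return DISCRETE_ACTIONS[index]


def decode_continuous(raw):
    """Turn three raw policy outputs into dial settings between 0 and 1.

    Values outside [-1, 1] are clipped before rescaling.
    """
    return tuple((min(1.0, max(-1.0, x)) + 1.0) / 2.0 for x in raw)


if __name__ == "__main__":
    print(decode_discrete(5))
    print(decode_continuous((0.3, -0.8, 1.4)))
```

With dials, the agent can choose, say, a lockdown at 65 percent strength rather than being forced to round to the nearest preset, which is one plausible reason such a method can find finer-grained policies.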

Timing Really Matters

The authors also examine how these different strategies affect the real‑time reproduction number, the average number of new infections each current case goes on to generate. In their simulations, the AI‑designed policy pushes this number below the critical value of one, the point at which an outbreak begins to shrink, about a month earlier than the actual UK response did. Because infections compound while the number stays above one, that seemingly small shift dramatically reduces cumulative infections, underscoring how much difference early, well‑planned action can make. They further test the learned policy in a very different setting, using data from Hong Kong’s large COVID‑19 wave in 2022, and find that the same strategy still performs well, suggesting that the learned rules capture general principles rather than overfitting to one country.
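
A toy calculation shows why bringing the reproduction number below one a month sooner matters so much. Everything here is an assumption chosen for illustration, not a figure from the study: a generation of infection is taken to last about five days, the reproduction number is 2.5 before control and 0.8 after, and the hypothetical `cumulative_infections` function simply multiplies cases by that number each generation.

```python
def cumulative_infections(control_generation, r_before=2.5, r_after=0.8,
                          seed=10.0, generations=30):
    """Total infections when control starts after `control_generation`.

    Cases are multiplied by r_before each generation until control takes
    effect, and by r_after from then on. All values are illustrative.
    """
    cases = seed
    total = seed
    for g in range(1, generations + 1):
        r = r_before if g <= control_generation else r_after
        cases *= r
        total += cases
    return total


if __name__ == "__main__":
    # With ~5-day generations, 6 generations is roughly one month.
    late = cumulative_infections(control_generation=12)
    early = cumulative_infections(control_generation=6)
    print(f"late control:  {late:,.0f} infections")
    print(f"early control: {early:,.0f} infections")
    print(f"ratio: {late / early:.0f}x")
```

In this toy model, six extra generations of unchecked growth multiply the peak by 2.5 six times over, so a one-month delay produces an outbreak hundreds of times larger. Real epidemics are messier, but the compounding logic is the same.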

What This Means for Future Outbreaks

For non‑specialists, the main message is that we do not have to choose blindly between saving lives and saving livelihoods. By combining detailed simulations of how a virus moves through real social networks with AI that learns from trial and error, policymakers could be given data‑driven playbooks that adapt as conditions change. The authors emphasize that such tools are not meant to replace human judgment, but to act as powerful decision aids, exploring countless what‑if scenarios far faster than people can. As new epidemics emerge, this approach could help leaders act earlier and more precisely, using targeted testing, tracing, and partial closures to keep disease in check while preserving as much normal life and economic activity as possible.

Citation: Zhang, B., Chen, Y., Li, H. et al. Optimization of infectious disease intervention measures using reinforcement learning with UK COVID-19 epidemic data. Sci Rep 16, 10627 (2026). https://doi.org/10.1038/s41598-026-39377-8

Keywords: COVID-19 policy, reinforcement learning, epidemic simulation, non-pharmaceutical interventions, public health strategy