Clear Sky Science · en

Patient address parsing via KG-aware contrastive learning and constrained on-prem LLM inference

Why tidy patient addresses matter

Behind every hospital visit sits a humble line of text: the patient’s home address. Far from being a clerical detail, these addresses power disease-mapping, emergency planning, and decisions about where to place clinics and ambulances. Yet in many medical record systems, addresses are stored as messy, inconsistent text full of abbreviations, typos, and missing pieces. This article introduces AddrKG‑LLM, a new method that turns such unruly address text into clean, reliable records while keeping sensitive details private.

The problem with messy home addresses

When addresses are typed in freely, people leave out districts, swap word order, or use local nicknames that official maps do not recognize. Older computer methods compare strings character by character or as simple word lists, which works only when inputs are already neat and complete. Newer deep learning systems read context more intelligently, but they can still be tripped up by unusual wording and require heavy computing power. Recently, large language models have shown an impressive knack for understanding and generating text. However, when allowed to respond freely, they also tend to “hallucinate” details that are not really in the data—an unacceptable risk in healthcare, where records must be precise and auditable.

A two-step path from chaos to order

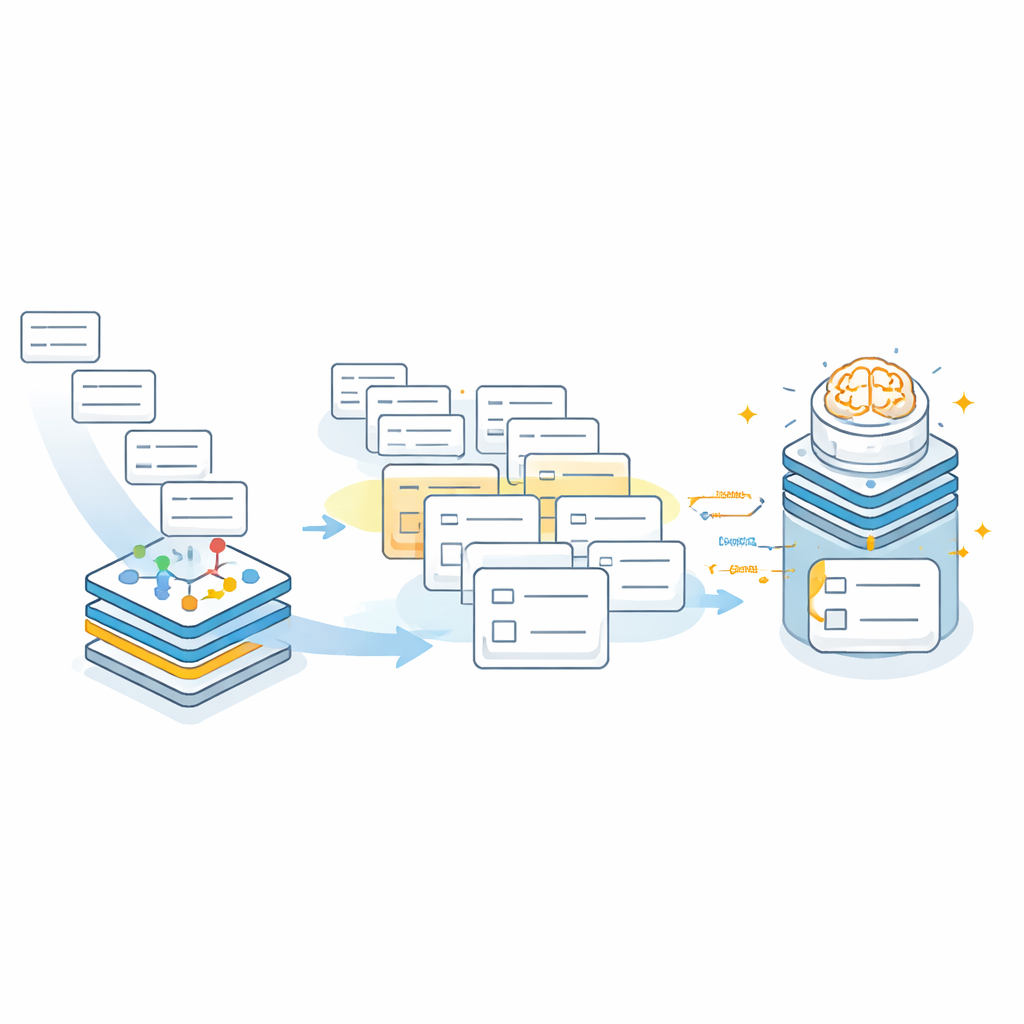

The researchers designed AddrKG‑LLM as a two-stage pipeline that adds structure and guardrails around the language model rather than letting it work alone. First, incoming patient addresses are cleaned to remove highly identifying details like building and room numbers and phone contacts, helping protect privacy. The remaining text is converted into a dense numerical representation that captures its meaning. At the same time, the team builds a knowledge graph—a map-like network that encodes the official relationships between cities, districts, streets, and residential communities. Using a technique called contrastive learning, they train the system so that addresses that refer to the same real-world community sit close together in this shared space, while unrelated places are pushed further apart. This lets the system rapidly retrieve a short list of likely address candidates for each new patient record.

Keeping the AI on a short leash

In the second stage, the large language model operates inside a carefully fenced-off search space. Instead of inventing an address from scratch, the model receives the original cleaned text plus the small set of candidate communities suggested by the knowledge graph. The prompt explicitly tells the model to pick only from these candidates and to output results in a fixed JSON structure with separate slots for city, district, street or township, and community. If none of the candidates fits—for instance, when the true community was never retrieved—the model is instructed to return empty values rather than guess. This “rejection-first” behavior sharply reduces the risk of plausible-sounding but wrong entries creeping into hospital records.

How well does it work in practice?

The team tested AddrKG‑LLM on ten thousand de‑identified real hospital addresses that reflect real-world noise: abbreviations, missing districts, spelling variants, and even completely invalid entries. They compared their system against classic string-matching tools, deep learning sequence-labeling models, general-purpose language models used in a free-form way, and a commercial address standardization service. On strict measures that require every field of an address to be correct at once, AddrKG‑LLM outperformed all of these baselines, raising overall accuracy by more than twelve percentage points over a strong BERT-based model. The gains were especially clear for abbreviated and partially missing addresses, where the knowledge graph’s built-in hierarchy helps fill in gaps. The authors also explored how performance changes with different language-model sizes and with different numbers of retrieved candidates, showing how hospitals can balance speed and accuracy for their own needs.

What this means for everyday care

For non-specialists, the key message is that AddrKG‑LLM offers a way to clean up vital but messy patient address data while keeping control firmly in human hands. By coupling a map-like knowledge graph with a constrained language model that runs entirely on hospital servers, the framework delivers more accurate, consistent addresses without sending sensitive details to external cloud services or allowing the AI to improvise. The result is a practical tool that can strengthen disease surveillance, improve resource planning, and support safer, more efficient hospital operations—simply by making sure every patient is reliably on the map.

Citation: Li, J., Pan, X. & Jia, Y. Patient address parsing via KG-aware contrastive learning and constrained on-prem LLM inference. Sci Rep 16, 8003 (2026). https://doi.org/10.1038/s41598-026-39348-z

Keywords: patient address parsing, health data quality, knowledge graph, large language model, medical informatics