Clear Sky Science

ReFaceX: donor-driven reversible face anonymisation with detached recovery

Why hiding faces still matters

Security cameras, social media, and medical datasets now capture billions of human faces. To share these images responsibly, organisations must hide who a person is without destroying what the image reveals, such as where they are looking, how they move, or what expression they show. Simple tricks such as blurring or pixelating often fail on both counts: modern face-recognition systems can sometimes still recognise people, while humans and algorithms lose important visual detail. This paper introduces ReFaceX, a new way to disguise faces that aims to protect identity, keep images useful for analysis, and still allow authorised people to restore the original when needed.

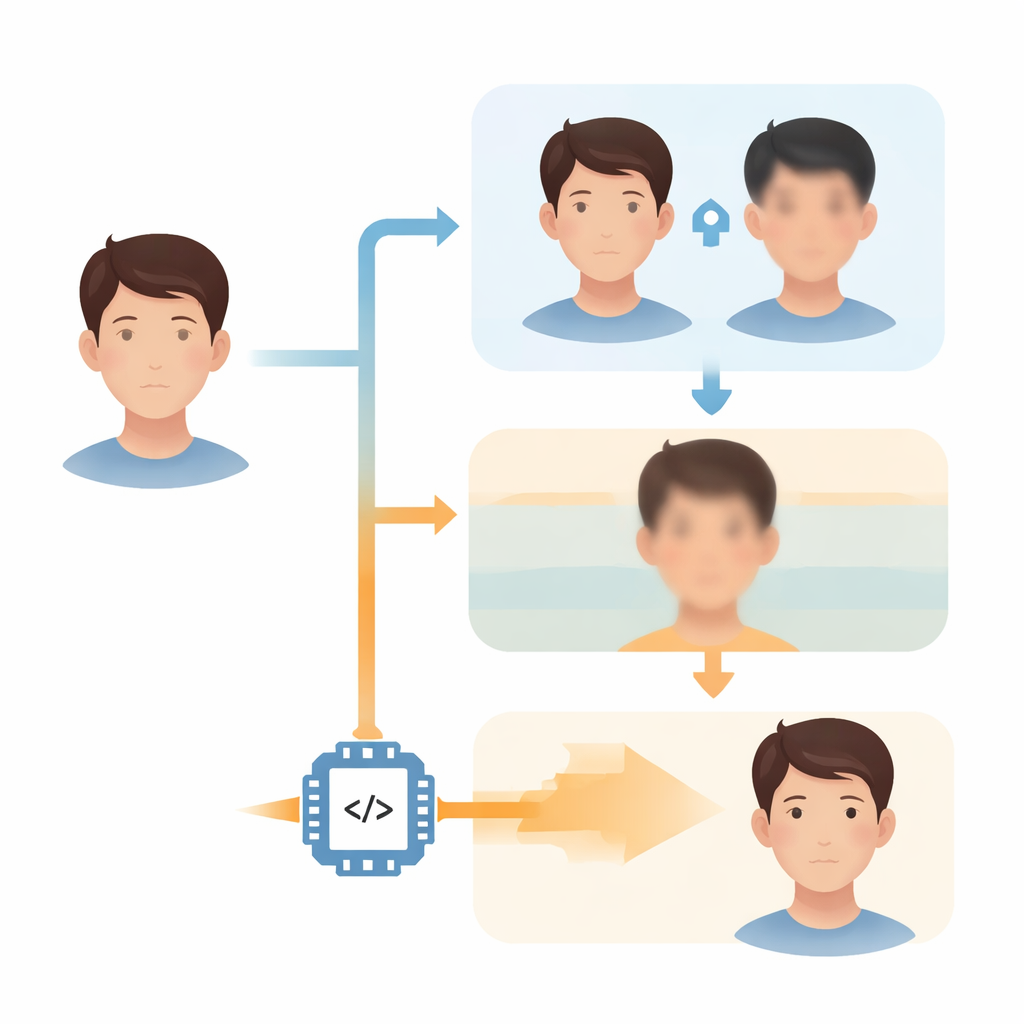

Changing who you look like, not what you are doing

ReFaceX starts from a simple idea: separate what must be hidden (who you are) from what must be preserved (what you are doing and where you are). Instead of just blurring or randomly altering a face, the system replaces the person’s identity with that of a “donor” face drawn from another image. A neural network takes features from the donor and blends them into the original face while keeping pose, background, hair shape, and expression as close to the original as possible. The result is a new face that does not look like the original person, yet still fits naturally into the scene and remains useful for tasks such as detection, tracking, or reading facial landmarks.

A hidden key that rides inside the picture

Because some uses require getting back to the original face (for example, medical follow-up or law‑enforcement review), ReFaceX is designed to be reversible in a controlled way. Instead of storing a separate file, it hides a compact “recovery code” inside the anonymised image itself using a learned form of digital watermarking. This hidden payload is not visible to the eye and is trained to survive common real‑world changes, such as JPEG recompression, mild cropping, resizing, and colour tweaks that occur when images are uploaded to online platforms. An authorised decoder can read out this code and feed it into a recovery network that reconstructs a close visual copy of the original face.

Keeping privacy and image repair from fighting each other

A major technical challenge in reversible systems is that the same network is often rewarded both for changing identity and for making it easy to reconstruct the original. This can tempt the model to quietly keep recognisable features, weakening privacy, or to over‑blur the image, destroying its usefulness. ReFaceX tackles this by separating the learning signals during training. The part of the system that hides identity is judged only on how unrecognisable the anonymised face is to strong commercial‑grade face recognisers. The part that restores the face is trained on a “detached” copy of the anonymised image, meaning the recovery error is not passed back to the anonymiser, so its success cannot push the anonymiser to cheat by preserving identity. This careful wiring lets the authors tune privacy and utility as two dials rather than opposite ends of a single, fixed trade‑off.
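The effect of detaching can be illustrated with a scalar toy model. The sketch below is not the authors' architecture: the blend weight `a`, the recovery offset `r`, both loss functions, and every function name are assumptions chosen for illustration, and gradients are estimated by finite differences rather than by a deep-learning framework. It only shows why a recovery loss that flows back into the anonymiser can cancel the privacy signal, and how treating the anonymised output as a constant removes that pull.

```python
# Toy scalar model (hypothetical): faces are single numbers.
# x = original identity, d = donor identity, a = anonymiser blend weight.

def anonymise(a, x, d):
    # Blend the original toward the donor; a = 1 means fully donor.
    return (1 - a) * x + a * d

def privacy_loss(y, d):
    # Assumed privacy objective: anonymised value should match the donor.
    return (y - d) ** 2

def recovery_loss(y, r, x):
    # Assumed recovery model: add a learned offset r to get back to x.
    return (y + r - x) ** 2

def finite_difference(f, p, eps=1e-6):
    # Central-difference estimate of df/dp.
    return (f(p + eps) - f(p - eps)) / (2 * eps)

def anonymiser_grad(a, r, x, d, detach):
    # Gradient of the total loss with respect to the anonymiser weight a.
    y_fixed = anonymise(a, x, d)  # constant copy seen by recovery when detached

    def total(a_var):
        y = anonymise(a_var, x, d)
        y_for_recovery = y_fixed if detach else y
        return privacy_loss(y, d) + recovery_loss(y_for_recovery, r, x)

    return finite_difference(total, a)

coupled = anonymiser_grad(a=0.5, r=0.0, x=0.0, d=1.0, detach=False)
detached = anonymiser_grad(a=0.5, r=0.0, x=0.0, d=1.0, detach=True)
print(coupled)   # close to 0: recovery pull cancels the privacy signal
print(detached)  # close to -1: only the privacy signal reaches the anonymiser
```

In this toy setting the coupled gradient vanishes, so the anonymiser would stop moving away from the original, while the detached gradient keeps pushing it toward the donor. In practice the same effect is usually obtained with a stop-gradient operation such as `Tensor.detach()` in PyTorch or `jax.lax.stop_gradient` in JAX; which framework the authors used is not stated here.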

Stress‑testing against real‑world attacks

To see whether ReFaceX delivers on its promises, the authors evaluate it on standard face datasets (LFW and CelebA‑HQ) and compare it to several leading anonymisation methods. They measure how similar the anonymised faces are to the originals in the internal feature (embedding) space of three powerful recognition systems and test how often a subject can be correctly matched from a large gallery. They also measure how close the restored faces are to the originals, using both pixel‑based scores and perception‑oriented metrics, and they time how fast the system runs on a single graphics card. Finally, they push the hidden recovery channel through repeated JPEG re‑encoding and other distortions, and even simulate adversarial attacks that try to pull the anonymised image back toward the original or the donor’s identity.
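The two identity checks described above can be sketched in a few lines. This is a minimal illustration, assuming cosine similarity as the comparison score and a best-match (rank-1) rule for gallery identification; the summary does not state the exact metrics used. The three-dimensional embeddings, the names in `gallery`, and the helper functions are all hypothetical. Real recognisers produce vectors with hundreds of dimensions.

```python
import math

def cosine_similarity(u, v):
    # Cosine of the angle between two embedding vectors.
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def rank1_match(probe, gallery):
    # Return the gallery identity whose embedding is most similar to the probe.
    return max(gallery, key=lambda name: cosine_similarity(probe, gallery[name]))

# Hypothetical toy embeddings, made up for this example.
gallery = {
    "alice": [1.0, 0.0, 0.0],
    "bob": [0.0, 1.0, 0.0],
    "donor": [0.0, 0.0, 1.0],
}
original_alice = [0.9, 0.1, 0.0]    # a fresh photo of alice
anonymised_alice = [0.1, 0.1, 0.9]  # the same photo after anonymisation

sim_before = cosine_similarity(original_alice, gallery["alice"])
sim_after = cosine_similarity(anonymised_alice, gallery["alice"])

print(rank1_match(original_alice, gallery))    # alice
print(rank1_match(anonymised_alice, gallery))  # donor
print(sim_after < sim_before)                  # True
```

A lower similarity to the original and a failed gallery match both indicate stronger anonymisation. Running the same check with several independent recognisers, as the authors do with three, guards against a method that fools only one model.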

What this means for shared face data

The results show that ReFaceX consistently makes anonymised faces harder to match to the originals than competing methods, as judged by multiple independent recognisers, while at the same time producing the most faithful reconstructions for authorised users. It runs fast enough for real‑time use on standard hardware and keeps its hidden payload intact under realistic image handling. In plain terms, ReFaceX offers a practical blueprint for sharing face images that remain useful for research and industry without casually exposing who people are. By building in a clear attacker model, a robust recovery channel, and a controllable balance between secrecy and usefulness, it points toward a more responsible way to handle the ever‑growing archives of human faces.

Citation: Muhammad, D., Salman, M., Shah, S.M.H. et al. ReFaceX: donor-driven reversible face anonymisation with detached recovery. Sci Rep 16, 7882 (2026). https://doi.org/10.1038/s41598-026-39337-2

Keywords: face anonymization, privacy in imaging, deep learning, image steganography, face recognition