Clear Sky Science

Privacy-aware speaker trait and multimodal features relationship analysis in job interviews

Why Your Voice in Job Interviews Raises New Questions

More and more companies are turning to automated video interviews, where algorithms listen to how you speak and infer traits like confidence, reliability, or sociability. But your voice carries far more than a first impression—it can hint at your identity, health, and background. This paper explores whether it is possible to hide who you are in a recording while still letting computers judge how you come across as a job candidate. In other words, can we keep the benefits of AI-assisted hiring without quietly sacrificing our privacy?

From First Impressions to Automated Judgments

Hiring psychologists have long known that broad personality patterns—often described as the Big Five traits of openness, conscientiousness, extraversion, agreeableness, and emotional stability—matter for job success. Recent advances in artificial intelligence allow computers to estimate these traits from the way people talk in job interviews, capturing not just what candidates say but how they say it: their pitch, loudness, rhythm, and overall style of speaking. These systems promise faster, more consistent screening of applicants. Yet they also raise a troubling question: if a company stores your voice, could that same data later be used to recognize you, profile you, or infer sensitive details you never agreed to share?

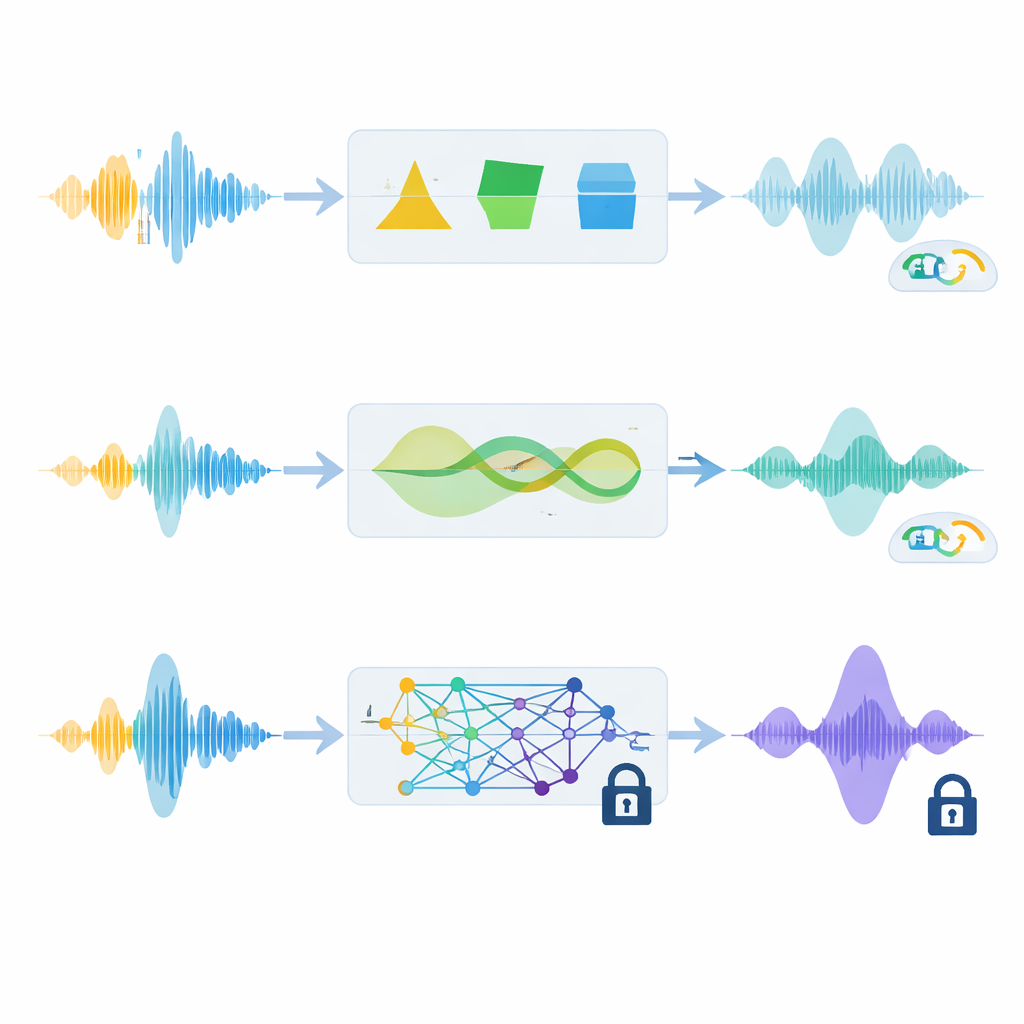

How to Hide a Voice Without Losing Its Character

To tackle this dilemma, the researchers studied techniques that alter a person’s voice so that it no longer sounds like them, while still preserving the cues needed for personality and hiring judgments. They focused on three anonymization methods. Two of them use traditional audio tricks, such as subtly reshaping sound frequencies and stretching or shifting the pitch over time. The third relies on a modern neural audio codec, which compresses the voice into a series of digital codes and then rebuilds it as a new, high-quality but different-sounding voice. Crucially, the team adjusted all methods so that a speaker’s perceived gender stayed the same and the converted voice was consistent across multiple answers in a long online interview.
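To make "reshaping sound frequencies" a little more concrete, here is a minimal Python sketch of one widely used signal-processing approach, McAdams-coefficient anonymization. It moves the voice's resonances (formants) by warping the angles of linear-prediction (LPC) filter poles. This summary does not spell out the exact algorithms the study used, so treat the sketch as an illustrative assumption and not as the authors' method. The function name `mcadams_warp_poles` and the default coefficient of 0.8 are hypothetical choices. A complete anonymizer would also need per-frame LPC analysis and resynthesis, which are omitted here.

```python
import cmath
import math


def mcadams_warp_poles(poles, alpha=0.8):
    """Shift formants by raising each LPC pole angle (in radians) to the power alpha.

    Hypothetical helper for illustration only. Pole magnitudes are kept, so a
    stable filter stays stable. The sign of each angle is kept, so conjugate
    pairs stay conjugate and the resynthesized signal remains real-valued.
    """
    if not 0 < alpha <= 1:
        # Restricting alpha keeps warped angles inside (0, pi]; alpha > 1 could
        # push high-frequency poles past the Nyquist angle.
        raise ValueError("alpha must be in (0, 1]")
    warped = []
    for p in poles:
        if p.imag == 0:
            # Real poles sit at DC or Nyquist rather than at a formant; keep them.
            warped.append(p)
            continue
        radius, angle = abs(p), cmath.phase(p)
        # Warp the magnitude of the angle, then restore its sign.
        new_angle = math.copysign(abs(angle) ** alpha, angle)
        warped.append(cmath.rect(radius, new_angle))
    return warped


# Usage: a conjugate pole pair plus one real pole.
poles = [cmath.rect(0.9, 0.5), cmath.rect(0.9, -0.5), 0.7]
print(mcadams_warp_poles(poles))
```

With a coefficient below 1, pole angles under 1 radian move up and those above 1 radian move down, so low resonances rise and high ones fall. That shift is what changes who the voice sounds like, while pitch contour and timing are left untouched.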

Putting Privacy and Usefulness to the Test

Using nearly 1,900 real online job interview videos from people across the United States, the authors asked two main questions. First, how hard would it be for an attacker to match anonymized voices back to the original speakers using an advanced voice-recognition system? Second, after anonymization, could algorithms still predict key personality ratings and hiring recommendations with similar accuracy? They measured privacy with the error rate of an automatic speaker verification system: the more often that system is wrong about who is speaking, the better the protection. They measured usefulness through speech recognition accuracy, perceived audio quality, and how well machine-learning models could infer traits and hiring decisions from acoustic and language features.
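Assuming the privacy score is the equal error rate (EER), the metric most commonly reported for speaker verification in voice-anonymization work, the sketch below shows how such a number can be computed from verification scores. Here `genuine` holds similarity scores for trials where both recordings come from the same speaker, and `impostor` holds scores for different speakers. These names and the simple threshold sweep are illustrative assumptions, not the study's evaluation code.

```python
def equal_error_rate(genuine, impostor):
    """Approximate the EER by trying every observed score as a decision threshold.

    A trial is accepted as "same speaker" when its score is >= the threshold.
    """
    if not genuine or not impostor:
        raise ValueError("need at least one genuine and one impostor score")
    best_gap, best_eer = float("inf"), None
    for t in sorted(set(genuine) | set(impostor)):
        # False rejection rate: same-speaker trials scored below the threshold.
        frr = sum(s < t for s in genuine) / len(genuine)
        # False acceptance rate: different-speaker trials scored at or above it.
        far = sum(s >= t for s in impostor) / len(impostor)
        # The EER is where the two error rates meet; keep the closest crossing.
        gap = abs(far - frr)
        if gap < best_gap:
            best_gap, best_eer = gap, (far + frr) / 2
    return best_eer


# Usage: well-separated scores (weak privacy) vs. indistinguishable ones.
print(equal_error_rate([0.9, 0.8, 0.7], [0.1, 0.2, 0.3]))
print(equal_error_rate([0.5, 0.5], [0.5, 0.5]))
```

An EER near 0 means the attacker reliably links recordings to speakers. An EER near 0.5 (50%) means the scores no longer separate same-speaker from different-speaker pairs, which is the outcome anonymization aims for.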

What the Trade-Off Really Looks Like

The results reveal a nuanced balance between safety and performance. The simplest method, which lightly reshapes voice frequencies, provided only modest privacy and could fail almost completely when the attacker’s system was tailored to the anonymized voices. A more advanced signal-based technique that alters timing and pitch did much better: it significantly reduced the chances of successful re-identification while preserving the rhythm and expressiveness of speech. As a result, hiring and personality predictions remained close to those made from the original recordings. The neural audio codec method delivered the strongest privacy, making it far harder to link anonymized voices back to real speakers, and often even cleaned up background noise. However, in the noisy, real-world interview recordings, this method also disrupted subtle prosodic cues that drive how listeners perceive traits, leading to a noticeable drop in trait-estimation performance and higher errors in automatic transcription.

What This Means for Fair and Private Hiring

The study shows that there is no one-size-fits-all solution: stronger privacy often comes at a cost to how well AI can read personality and recommend candidates. For typical hiring settings where trait estimates and fair decisions are the priority, refined signal-processing approaches, especially the phase-based method tested here that alters timing and pitch, may offer the best compromise, shielding identity while keeping the “feel” of a person’s voice intact. In situations with higher privacy demands, such as sharing speech data broadly or guarding against powerful attackers, newer neural codec methods can provide greater protection, but designers must accept some loss in how accurately personality and suitability are judged. Ultimately, the work argues that protecting candidates’ voices should be treated as an ethical requirement, not an afterthought, and that future tools must carefully target which aspects of speech to hide and which to preserve.

Citation: Mawalim, C.O., Leong, C.W. & Okada, S. Privacy-aware speaker trait and multimodal features relationship analysis in job interviews. Sci Rep 16, 8181 (2026). https://doi.org/10.1038/s41598-026-39322-9

Keywords: voice anonymization, AI hiring, speaker traits, privacy in speech data, job interviews