
Chatting with an LLM-based AI elicits affective and cognitive processes in education for sustainable development

Why Talking to a Tree-Shaped Chatbot Matters

Imagine learning about rainforest destruction not from a textbook, but by chatting with a virtual tree that tells you how it feels as nearby trees are cut down. This study explores how such human-like conversations with AI chatbots can stir our emotions, sharpen our thinking, and possibly deepen our sense of connection to nature—key ingredients for motivating real-world environmental action.

From Dry Text to Lively Conversation

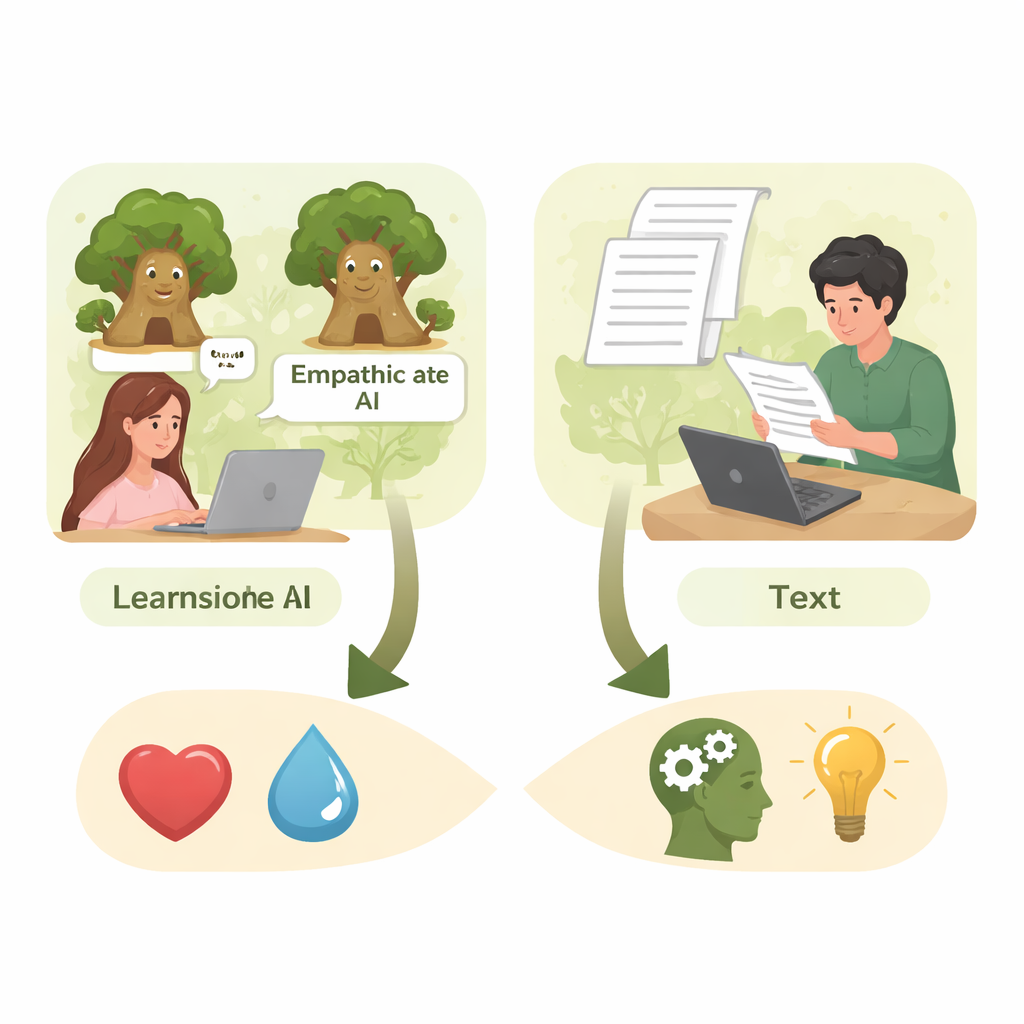

The researchers compared two ways of teaching education students about selective logging in the Amazon rainforest, a practice in which only certain trees are cut but the whole forest can still suffer: reading a text and chatting with an AI. One group read a letter "written" by a tree. Two other groups instead chatted online with an AI chatbot that pretended to be that tree. In all three groups, the information about logging was the same; what differed was the medium and, between the two chat groups, the emotional tone of the AI. This allowed the team to test whether a personalized, conversational style, and its emotional flavor, changes how people feel and think about environmental problems.

Two Very Different Tree Personalities

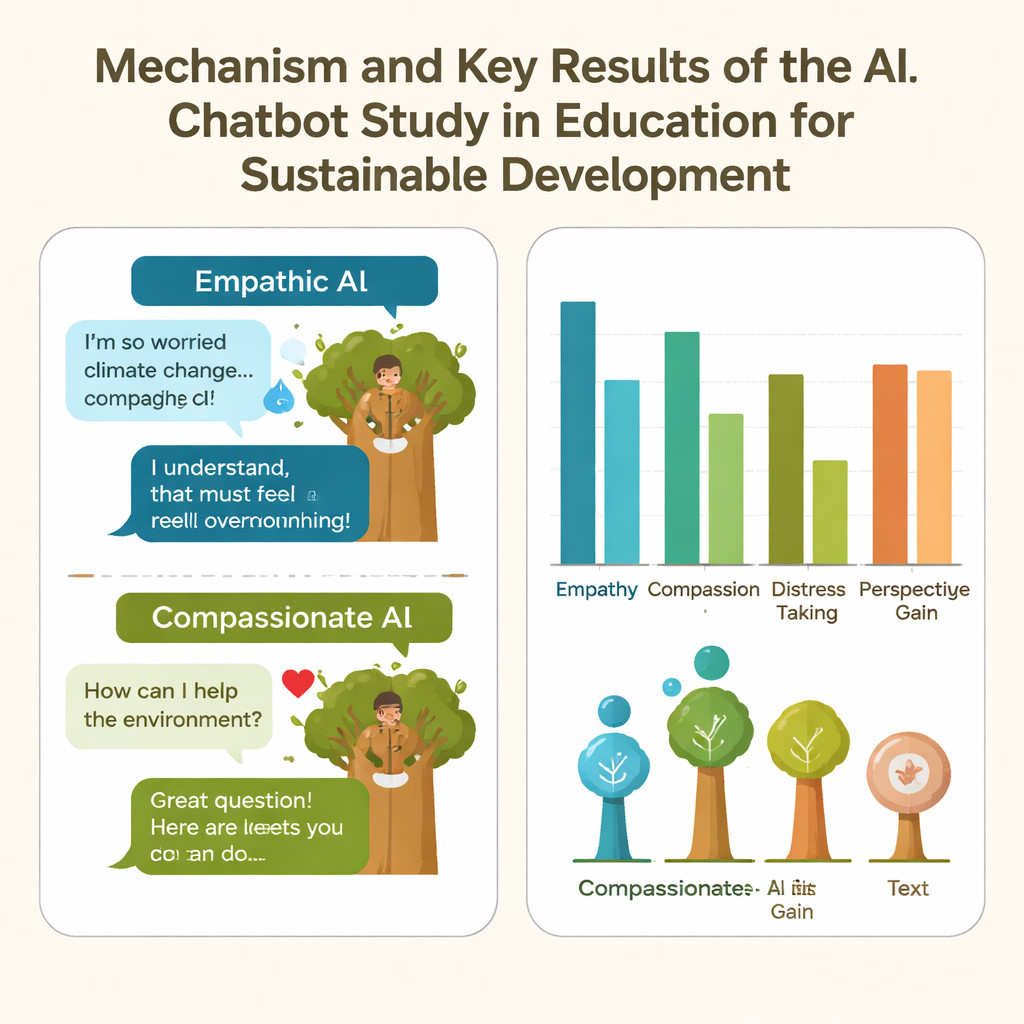

The scientists built two versions of the chatbot using a large language model. Both spoke as the Amazon tree, but each was given a distinct personality through carefully crafted instructions. The "Empathic AI" focused on its own pain and fear, using words linked to sadness and distress, as if the tree were deeply hurt and overwhelmed. The "Compassionate AI" emphasized warmth, hope, and social connection, focusing on caring and solutions rather than suffering. Students were randomly assigned to chat with one of these tree personalities for 5–10 minutes, or to simply read the tree’s letter, and then answered questions about their feelings, thoughts, and knowledge.

Emotions Sparked by an Artificial Tree

Chatting with an AI tree turned out to be more emotionally powerful than reading. Overall, students who interacted with either chatbot reported higher empathy (feeling with the tree) than those who only read the text. The Empathic AI, in particular, stirred the strongest reactions: students felt more empathy, more compassion, and also more distress than those who talked with the Compassionate AI or read the letter. Some participants felt emotionally overwhelmed, saying the tree sounded too "whiny" or "hysterical" and that its emotional outpouring got in the way of learning facts about logging. In rare cases, the AI refused the role altogether, declaring that pretending to be a traumatized tree felt wrong, an example of how hard it is to fully control such systems.

Thinking More Deeply, Learning Just as Much

Beyond emotion, the team examined several mental processes tied to sustainable behavior: perspective-taking (seeing the world through the tree’s eyes), reflection (thinking about one’s own relationship to nature), knowledge, and feeling connected to nature. Students in the chatbot groups, especially with the Empathic AI, showed higher perspective-taking than those who just read the text. Nearly a quarter of all participants reached a deeper level of reflection, relating the tree’s story to the broader human–nature relationship, and this happened more often in the chat conditions. Surprisingly, however, all three groups gained similar amounts of factual knowledge. In other words, talking to a tree-shaped AI did not teach more facts than reading—but it did change how students felt and thought about the issue. Changes in nature connectedness were modest but tended to be higher when participants felt more compassion, took the tree’s perspective, and reflected more deeply.

What This Means for Future Learning

For a lay reader, the take-home message is that AI chatbots are not just information machines; they can also act as emotional partners. With just a few minutes of conversation, a chatbot can make people feel real empathy, compassion, and even distress toward a virtual tree, and help them see environmental problems from a new angle. But these strong emotions are a double-edged sword: they can deepen engagement, yet also overwhelm learners or distract from the facts. The study suggests that carefully tuning an AI's "personality" could help educators harness this power responsibly, using compassionate, balanced conversations to support both understanding and caring in the quest for a more sustainable future.

Citation: Spangenberger, P., Reuth, G.F., Krüger, J.M. et al. Chatting with an LLM-based AI elicits affective and cognitive processes in education for sustainable development. Sci Rep 16, 7470 (2026). https://doi.org/10.1038/s41598-026-39317-6

Keywords: AI chatbots, environmental education, empathy, sustainable development, nature connectedness