Clear Sky Science

Video-dominant emotion recognition for portable EEG-based devices

Why Your Videos May Know How You Feel

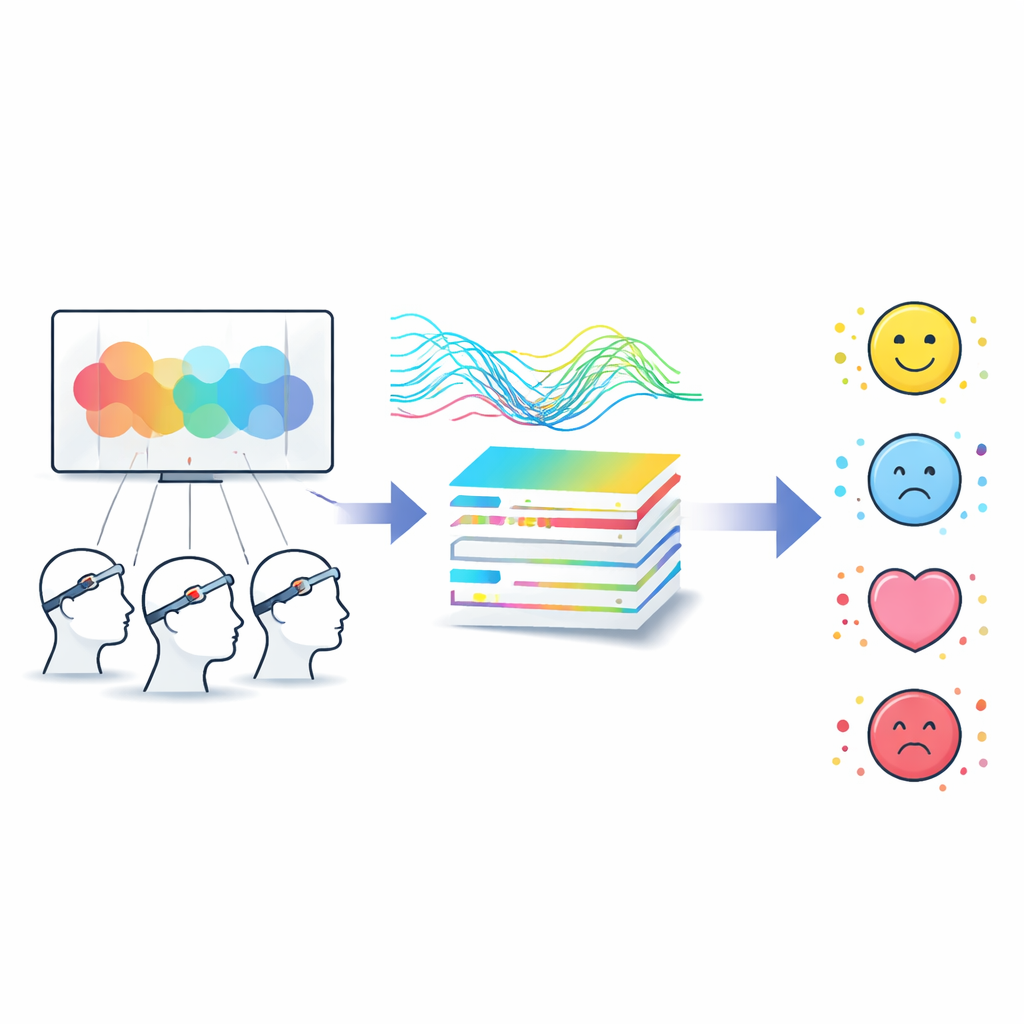

Imagine watching a movie trailer while a lightweight headband quietly listens to your brain and guesses whether you feel happy, relaxed, sad, or scared. This study explores how to make that scenario realistic using a small, portable brainwave (EEG) device instead of bulky lab equipment. The work matters for anyone interested in smarter media: from advertisers who want to understand audience reactions, to streaming platforms that could recommend shows based on how viewers actually feel, not just what they click.

Reading Feelings From Brainwaves

Our brains produce faint electrical signals that can be picked up at the scalp using electroencephalography, or EEG. These signals shift subtly when we experience different emotions. A popular research dataset called DEAP recorded people’s EEG while they watched music videos and then asked them to rate each video: how pleasant and how intense it felt, how in control they felt while watching, and how much they liked it. Most previous studies chased the highest possible accuracy with many electrodes and powerful computers, under ideal lab conditions that do not match real life. This paper instead asks a more practical question: with a low-cost, portable device and fewer electrodes, can we still capture the main emotion a video tends to evoke across many people?

Finding a Shared Emotional Story

One obstacle is that people do not describe their feelings in the same way. Two viewers might watch the same clip, one calling it “exciting” and another “just okay.” The researchers tackle this by building a step-by-step label calibration system that looks for patterns across viewers rather than trusting any single person’s rating. First, all ratings are put on a common scale and compressed into a few key dimensions. Then, unsupervised clustering groups similar emotional responses together, aiming to split the videos into four broad corners of the emotional space: happy (pleasant and intense), relaxed (pleasant and calm), fearful (unpleasant and intense), and sad (unpleasant and calm). A final refinement stage nudges uncertain cases based on extra rating information, yielding a dominant emotion label for each video that better reflects the group’s overall impression.
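To make the calibration idea concrete, here is a minimal Python sketch under stated assumptions, not the authors' code. The article does not name the normalization or clustering methods, so the sketch assumes per-viewer z-scores as the "common scale", keeps only the pleasantness (valence) and intensity (arousal) ratings instead of compressing all ratings into a few dimensions, runs k-means seeded at the four corners, and leaves out the final refinement stage. All function names are hypothetical.

```python
from statistics import mean, pstdev

# Hypothetical input: ratings[viewer][video] = (valence, arousal), e.g. on a 1-9 scale.
Ratings = dict[str, dict[str, tuple[float, float]]]

# Seed one cluster in each corner: (valence, arousal) in standardized units.
CORNER_SEEDS = [(1.0, 1.0), (1.0, -1.0), (-1.0, 1.0), (-1.0, -1.0)]


def zscore_per_viewer(ratings: Ratings) -> Ratings:
    """Put every viewer on a common scale: zero mean, unit spread per dimension."""
    out: Ratings = {}
    for viewer, per_video in ratings.items():
        vals = [v for v, _ in per_video.values()]
        aros = [a for _, a in per_video.values()]
        mv, sv = mean(vals), pstdev(vals) or 1.0  # "or 1.0" guards a constant rater
        ma, sa = mean(aros), pstdev(aros) or 1.0
        out[viewer] = {vid: ((v - mv) / sv, (a - ma) / sa)
                       for vid, (v, a) in per_video.items()}
    return out


def consensus(z: Ratings) -> dict[str, tuple[float, float]]:
    """Average each video's standardized ratings across all viewers."""
    pooled: dict[str, list[tuple[float, float]]] = {}
    for per_video in z.values():
        for vid, point in per_video.items():
            pooled.setdefault(vid, []).append(point)
    return {vid: (mean(p[0] for p in pts), mean(p[1] for p in pts))
            for vid, pts in pooled.items()}


def dist2(p, q):
    """Squared Euclidean distance between two 2-D points."""
    return (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2


def kmeans(points, centroids, iters=50):
    """Lloyd's k-means: assign points to the nearest center, then move centers."""
    centroids = list(centroids)
    for _ in range(iters):
        groups = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda j: dist2(p, centroids[j]))
            groups[nearest].append(p)
        # An empty cluster keeps its old center.
        moved = [(mean(x for x, _ in g), mean(y for _, y in g)) if g else c
                 for g, c in zip(groups, centroids)]
        if moved == centroids:
            break
        centroids = moved
    return centroids


def quadrant(valence, arousal):
    """Name a point by its corner of the valence-arousal plane."""
    if valence >= 0:
        return "happy" if arousal >= 0 else "relaxed"
    return "fearful" if arousal >= 0 else "sad"


def calibrate_labels(ratings: Ratings) -> dict[str, str]:
    """Consensus label per video: the corner its cluster's center falls in."""
    points = consensus(zscore_per_viewer(ratings))
    centers = kmeans(list(points.values()), CORNER_SEEDS)
    return {vid: quadrant(*min(centers, key=lambda c: dist2(p, c)))
            for vid, p in points.items()}
```

Standardizing each viewer first means that a generous rater who scores everything between 5 and 9 and a strict rater who uses the whole scale end up contributing comparable votes. Seeding the clusters at the corners nudges them toward the four named emotions.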

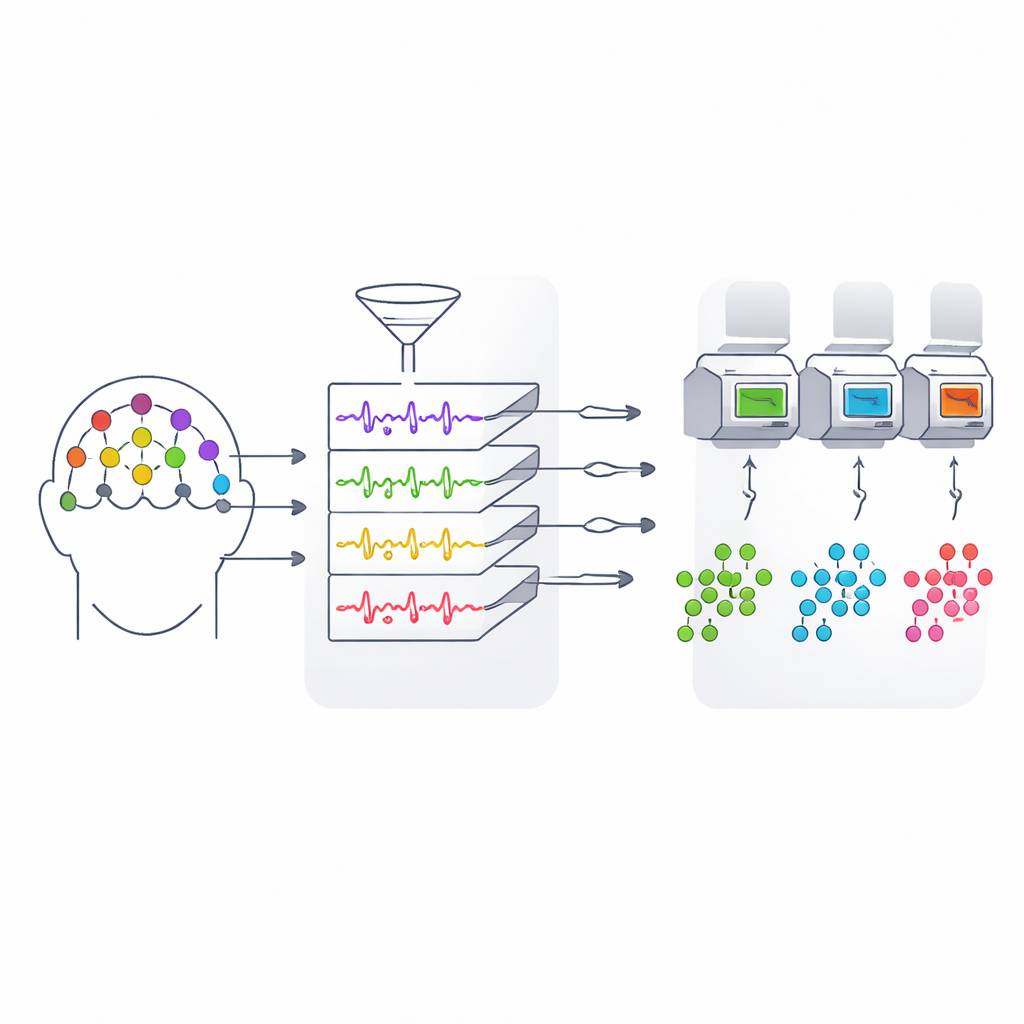

Making More From Less Hardware

Another challenge is hardware: full EEG caps with 32 or more electrodes are awkward and expensive. The team designs a way to slim this down to just 11 carefully chosen locations over the forehead, center, sides, and back of the head—regions tied to emotional control, arousal, hearing, vision, and attention. They then perform a detailed analysis of how signal energy spreads across classic brainwave bands (from slow to fast rhythms) under different emotional states. By comparing these patterns, they show that certain combinations of frequencies and scalp locations carry especially strong clues about whether a viewer is, for example, highly aroused or deeply relaxed. This multi-band energy ratio approach lets them keep the most informative signals while throwing away much of the redundancy.
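The multi-band energy ratio idea can be illustrated with a short Python sketch. It is not the authors' implementation: the band edges below are common textbook choices rather than the paper's, and the function names are hypothetical. For a single channel, it measures what share of the signal's energy falls in each band, then compares those shares between two emotional states. A naive discrete Fourier transform keeps the sketch dependency-free; a real pipeline would more likely use an FFT or Welch's method.

```python
import cmath
import math

# Conventional EEG bands in Hz; an assumption, the paper's exact edges may differ.
BANDS = {"delta": (1, 4), "theta": (4, 8), "alpha": (8, 13),
         "beta": (13, 30), "gamma": (30, 45)}


def power_spectrum(x, fs):
    """(frequency, power) pairs from a naive DFT; fine for short windows."""
    n = len(x)
    spectrum = []
    for k in range(n // 2 + 1):
        coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        spectrum.append((k * fs / n, abs(coeff) ** 2))
    return spectrum


def band_energy_ratios(x, fs):
    """Share of the in-band energy that each band carries, for one channel."""
    spectrum = power_spectrum(x, fs)
    energy = {band: sum(p for f, p in spectrum if lo <= f < hi)
              for band, (lo, hi) in BANDS.items()}
    total = sum(energy.values()) or 1.0
    return {band: e / total for band, e in energy.items()}


def band_contrast(trials_a, trials_b, fs):
    """Mean ratio in state A minus mean ratio in state B, per band.

    Large positive or negative values flag bands that separate the two states
    at this channel; repeating this per channel gives a band-by-location map.
    """
    ra = [band_energy_ratios(x, fs) for x in trials_a]
    rb = [band_energy_ratios(x, fs) for x in trials_b]
    return {band: sum(r[band] for r in ra) / len(ra) - sum(r[band] for r in rb) / len(rb)
            for band in BANDS}
```

Using ratios rather than raw energies makes the numbers less sensitive to how firmly a headband happens to sit on a particular head, since a uniformly weaker signal leaves the shares unchanged.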

Letting the Data Highlight What Matters

Even with fewer electrodes, each second of recording contains a flood of numbers. To avoid overwhelming the models, the authors combine several types of features (such as wavelet-based energy measures, how strongly different brain regions fluctuate together, and how the power in various frequencies changes over time) into a rich yet structured description of each viewing. A saliency-guided selection step then ranks these features by how well they tell emotions apart and keeps only a compact subset. Using this trimmed-down representation, the authors train three standard machine learning models to recognize which of the four dominant emotions best fits a given video. In demanding tests where the system must generalize to completely new people, the best model reaches around 45% accuracy. Random guessing would score about 25% if the four emotions were equally common, so this is a solid result given noisy brain data and only 11 channels.
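The article does not name the saliency measure, so the Python sketch below swaps in a common stand-in, the Fisher score, which rewards features whose averages differ between emotions while varying little within each emotion. It also shows a leave-one-subject-out split, one standard way to test on completely new people. This is an illustration under those assumptions, not the paper's method, and all names are hypothetical.

```python
from statistics import mean, pvariance


def fisher_scores(X, y):
    """Fisher score per feature: spread of class means over spread within classes."""
    classes = sorted(set(y))
    scores = []
    for j in range(len(X[0])):
        col = [row[j] for row in X]
        overall = mean(col)
        between = within = 0.0
        for c in classes:
            vals = [v for v, label in zip(col, y) if label == c]
            between += len(vals) * (mean(vals) - overall) ** 2
            within += len(vals) * pvariance(vals)
        if within > 0:
            scores.append(between / within)
        else:  # perfectly tight classes: maximally useful unless the means coincide
            scores.append(float("inf") if between > 0 else 0.0)
    return scores


def select_top_k(X, y, k):
    """Keep the k highest-scoring feature columns (in their original order)."""
    scores = fisher_scores(X, y)
    keep = sorted(sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)[:k])
    return keep, [[row[j] for j in keep] for row in X]


def leave_one_subject_out(subjects):
    """Yield (person, train_idx, test_idx), testing each person as a newcomer."""
    for s in sorted(set(subjects)):
        train = [i for i, p in enumerate(subjects) if p != s]
        test = [i for i, p in enumerate(subjects) if p == s]
        yield s, train, test
```

To keep the cross-person test honest, the ranking should be computed only on the training people in each split and then applied unchanged to the held-out person; ranking on everyone first would leak information about the "new" viewer into the model.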

What This Means for Everyday Technology

For non-specialists, the key message is that we can begin to gauge how groups of people feel about videos using small, portable brainwave devices rather than full laboratory rigs. By cleaning up the emotional labels, focusing on the most informative parts of the EEG signal, and selecting only a handful of well-placed sensors, the authors show that it is possible to detect a video’s dominant emotional tone—happy, relaxed, fearful, or sad—across viewers. The system is not perfect, but it points toward practical tools for audience sentiment tracking, content testing, and emotion-aware recommendations that rely on objective brain responses rather than only on surveys or clicks.

Citation: Wen, X., Xu, W., Tian, L. et al. Video-dominant emotion recognition for portable EEG-based devices. Sci Rep 16, 7899 (2026). https://doi.org/10.1038/s41598-026-39315-8

Keywords: EEG emotion recognition, brain–computer interface, video affect analysis, wearable neuroscience, affective computing