Clear Sky Science

Use large language model to enhance reasoning of another large language model through reward updated GRPO

Teaching Machines to Think Things Through

Many of today’s language models can chat, translate, and answer questions, but they still struggle with showing their work the way a good math student or careful analyst would. This paper explores how one artificial intelligence system can be used to improve the reasoning skills of another, and how to do this without hand-building huge, specialized datasets. For readers interested in how AI may become more reliable in fields like finance, medicine, or scientific research, the work offers a practical recipe for making models explain their answers more clearly and consistently.

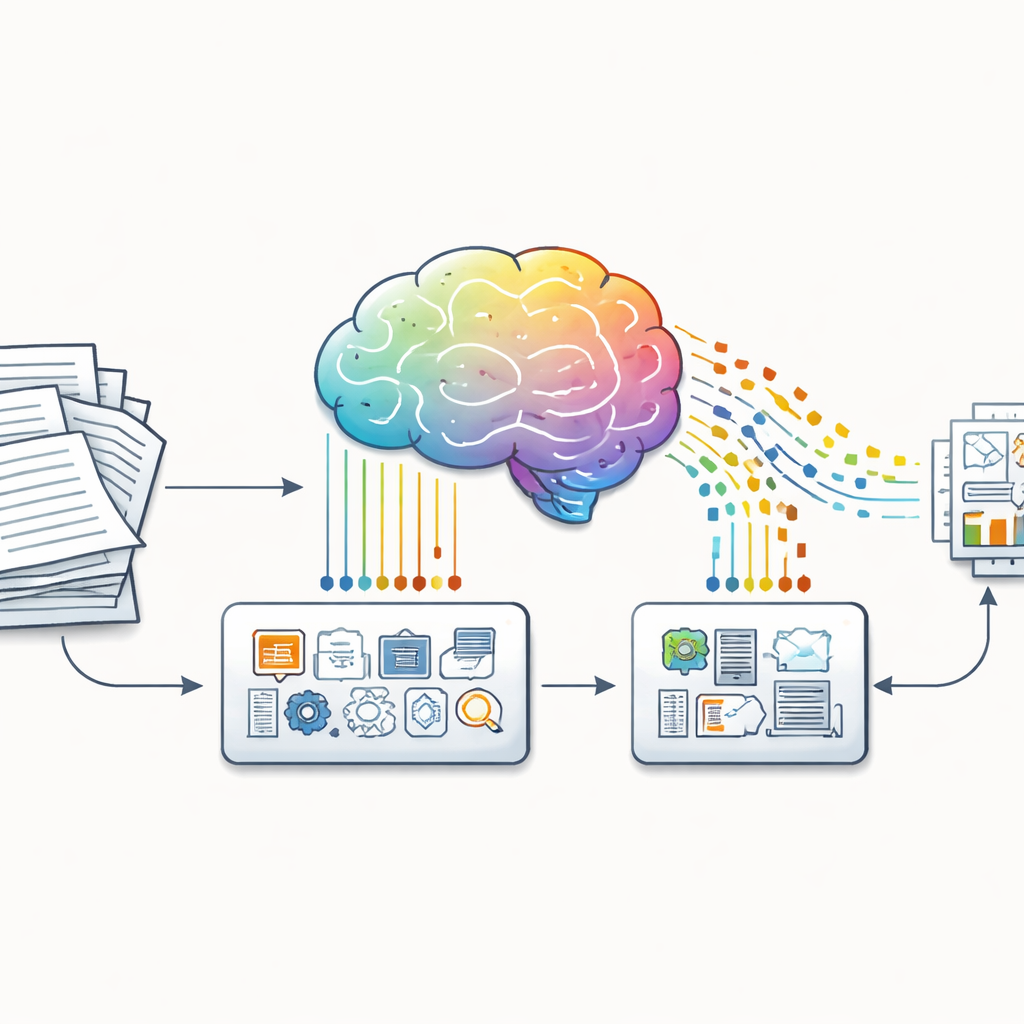

From Raw Documents to Teachable Examples

The authors start from a simple observation: most real-world information lives in messy forms such as reports, shareholder letters, or web pages, not in neat question-and-answer format. To bridge this gap, they introduce two software tools, Huggify-Data and the CoT Data Generator. These tools take unstructured text and automatically carve it into pairs of questions and answers, then ask a powerful language model to supply the missing reasoning steps in between. The result is a structured triple for each example: a question, a chain of reasoning, and an answer. Crucially, this pipeline can be pointed at almost any domain, from school mathematics to corporate finance, making it possible to build reasoning-focused training data without armies of human annotators.
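The question, reasoning, and answer triple described above can be pictured as a small record. The sketch below is illustrative only: `ReasoningTriple`, `build_triple`, and `stub_teacher` are hypothetical names, not the actual interface of Huggify-Data or the CoT Data Generator, and the teacher model is stood in for by an ordinary function.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ReasoningTriple:
    """One training example: a question, the steps in between, and an answer."""
    question: str
    reasoning: str
    answer: str


def build_triple(question: str, answer: str,
                 explain: Callable[[str, str], str]) -> ReasoningTriple:
    """Ask a teacher (any callable here) for the steps linking question to answer."""
    reasoning = explain(question, answer).strip()
    return ReasoningTriple(question=question, reasoning=reasoning, answer=answer)


def stub_teacher(question: str, answer: str) -> str:
    # Assumption: a placeholder for a real call to a powerful language model.
    return f"Work from the question to reach {answer}."


example = build_triple("What is 2 + 3?", "5", stub_teacher)
```

Keeping the teacher behind a plain callable is one way to let the same pipeline be pointed at different domains or different teacher models.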

How One Model Trains Another

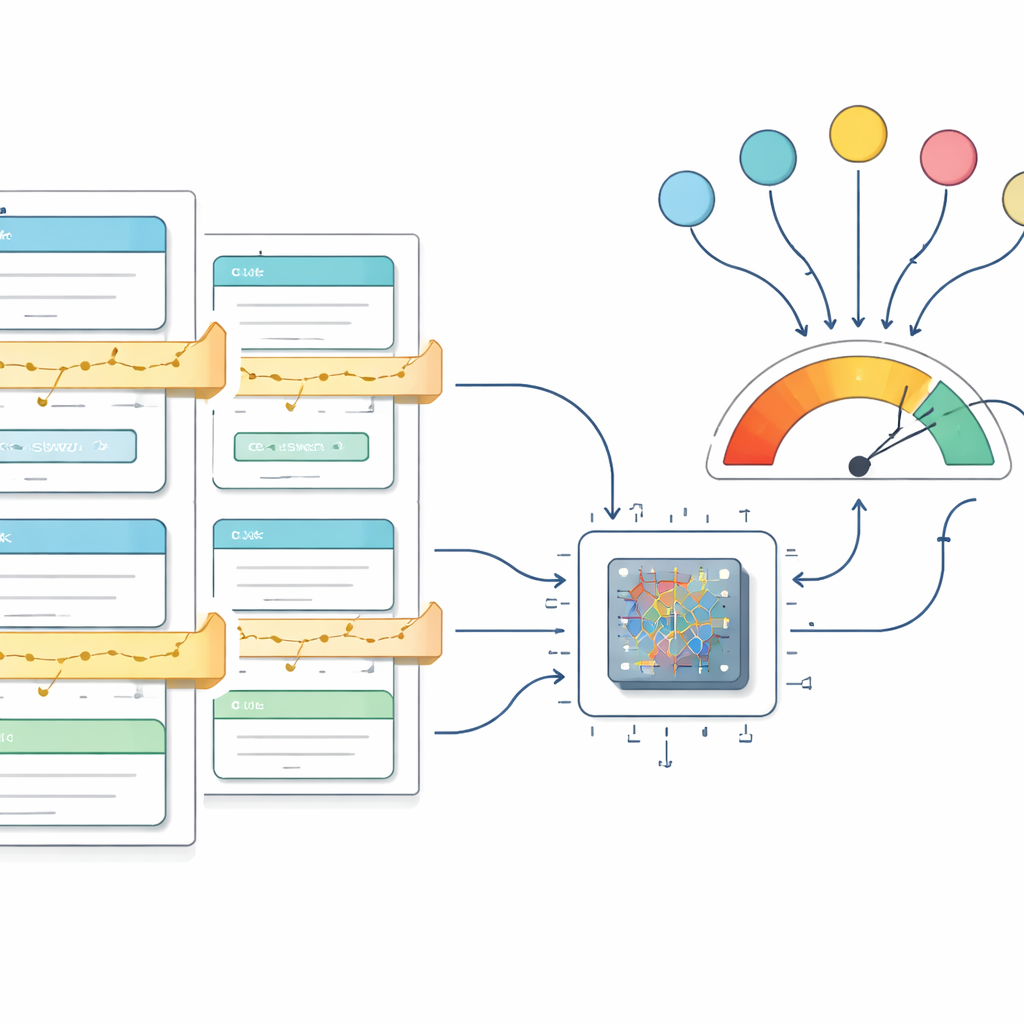

Once these question–reasoning–answer triples are created, they are used to train a smaller “student” model to think in the same structured way. The student is asked not just to give a final answer, but to produce a clearly separated explanation followed by the conclusion. Training is guided by a method called Group Relative Policy Optimization (GRPO), which samples several candidate responses to the same question, scores each one, and nudges the model toward those that score above the group average. The paper updates this method with an extra reward term that checks whether the model’s output follows the desired format, including how closely it matches a well-formed reference example. This reward penalizes scrambled or incomplete explanations, pushing the model toward tidy, interpretable answers.
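To make the two ideas in this section concrete, here is a minimal sketch of a format reward and of the group-relative scoring used in Group Relative Policy Optimization. Several parts are assumptions rather than the paper's implementation: the `<reasoning>` and `<answer>` tags, the equal weighting of structure and similarity, and the use of `difflib.SequenceMatcher` as the similarity measure. The normalization step (subtract the group mean, divide by the group standard deviation) is the standard way the method compares candidates within a group.

```python
import re
import statistics
from difflib import SequenceMatcher

# Assumed layout: an explanation block followed by a conclusion block.
FORMAT_PATTERN = re.compile(
    r"^<reasoning>.+?</reasoning>\s*<answer>.+?</answer>$", re.DOTALL
)


def format_reward(response: str, reference: str) -> float:
    """Score in [0, 1]: half for the tag layout, half for similarity to a reference."""
    structure = 1.0 if FORMAT_PATTERN.match(response.strip()) else 0.0
    similarity = SequenceMatcher(None, response, reference).ratio()
    return 0.5 * structure + 0.5 * similarity


def group_relative_advantages(rewards: list[float]) -> list[float]:
    """Normalize each reward against the other candidates for the same question."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    if std == 0:
        # All candidates scored the same, so none is preferred over another.
        return [0.0] * len(rewards)
    return [(r - mean) / std for r in rewards]


reference = "<reasoning>3 + 4 = 7</reasoning>\n<answer>7</answer>"
candidates = [
    "<reasoning>3 + 4 = 7</reasoning>\n<answer>7</answer>",  # well formed
    "7",                                                       # no explanation
]
rewards = [format_reward(c, reference) for c in candidates]
advantages = group_relative_advantages(rewards)
```

Candidates with a positive advantage are the ones the update would favor; a full reward would also include a term for whether the answer is correct.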

Putting the Approach to the Test

To see whether the framework works in practice, the authors apply it to two very different datasets. The first, GSM8K, consists of grade-school word problems that require multi-step arithmetic reasoning. The second is built from Warren Buffett’s annual letters to shareholders, where the goal is to capture long-form reasoning about investing and corporate decisions. In both cases, the pipeline turns raw text into structured training data and fine-tunes a compact model from the Qwen 2.5 family. During training, a simple scoring rule rewards correct, well-formatted responses; as learning progresses, the average reward steadily climbs and stabilizes at its theoretical maximum, showing that the model has largely mastered the target behavior on the training data.

How Well the Improved Model Performs

Performance is measured using “mean token accuracy,” which asks, roughly, what fraction of the tokens (the small text fragments a model reads and writes) in the model’s outputs match the expected ones. This differs from the usual all-or-nothing scoring of test answers, so it does not by itself show how often the final answer is right, but it is well suited to judging whether explanations and answers are produced in the right structure. On GSM8K, the best model reaches 98.2 percent token accuracy, and on the Buffett letters it reaches 98.5 percent. These scores are higher than those reported for widely known systems such as GPT‑4 and Claude 3.5 Sonnet under the same metric, all while using only a 3‑billion-parameter model that can be trained in under two days on rented hardware. The authors also share details about computing costs and hardware setups, and release all code, models, and datasets for others to inspect and build upon.
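The difference between token-level and all-or-nothing scoring is easy to see in a few lines. This is a simplified sketch, not the paper's evaluation code: it assumes the predicted and expected outputs are already aligned lists of token ids, and it borrows the common convention of marking positions to skip with `-100`.

```python
def mean_token_accuracy(predicted: list[int], expected: list[int],
                        ignore_id: int = -100) -> float:
    """Fraction of scored positions where the predicted token equals the expected one."""
    if len(predicted) != len(expected):
        raise ValueError("predicted and expected must have the same length")
    pairs = [(p, e) for p, e in zip(predicted, expected) if e != ignore_id]
    if not pairs:
        return 0.0
    return sum(p == e for p, e in pairs) / len(pairs)


def exact_match(predicted: list[int], expected: list[int],
                ignore_id: int = -100) -> bool:
    """All-or-nothing scoring: every scored position must match."""
    return mean_token_accuracy(predicted, expected, ignore_id) == 1.0


# One wrong token out of three scored positions; the last position is skipped.
predicted = [11, 12, 13, 14]
expected = [11, 12, 99, -100]
accuracy = mean_token_accuracy(predicted, expected)
strict = exact_match(predicted, expected)
```

Here the token accuracy is two out of three while the strict score is a failure, which is why a high token accuracy mainly signals that outputs follow the expected structure.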

What This Means for Everyday AI Use

For non-specialists, the main takeaway is that AI systems can be taught not just to answer, but to answer in a disciplined, easy-to-follow way, using data automatically extracted from ordinary documents. By combining a reasoning-rich teacher model, a flexible data pipeline, and a reward scheme that values both correctness and clarity, the authors show how to sculpt smaller models into more reliable problem-solvers. Although they note limitations, such as the need for stronger tests of true understanding and safety, the framework points toward a future where organizations can turn their own text archives into tailored, transparent AI assistants for education, finance, and beyond.

Citation: Yin, Y. Use large language model to enhance reasoning of another large language model through reward updated GRPO. Sci Rep 16, 8360 (2026). https://doi.org/10.1038/s41598-026-39296-8

Keywords: large language models, chain-of-thought reasoning, reward optimization, data curation, domain-specific AI