Clear Sky Science

A comparative analysis of embedded chatbot models and ChatGPT-4 for answering orthodontic treatment queries

Why smarter chatbots matter for braces

Anyone who has worn braces knows that questions don’t wait for clinic hours: Will this pain stop? Can I eat this? Do I need to worry about my jaw? This study explores whether a purpose-built orthodontic chatbot—designed specifically to answer these everyday questions—can give clearer, more reliable answers than a general artificial intelligence system, ChatGPT‑4. The work offers a glimpse of how carefully tailored AI tools might support both patients and clinicians in modern dental care.

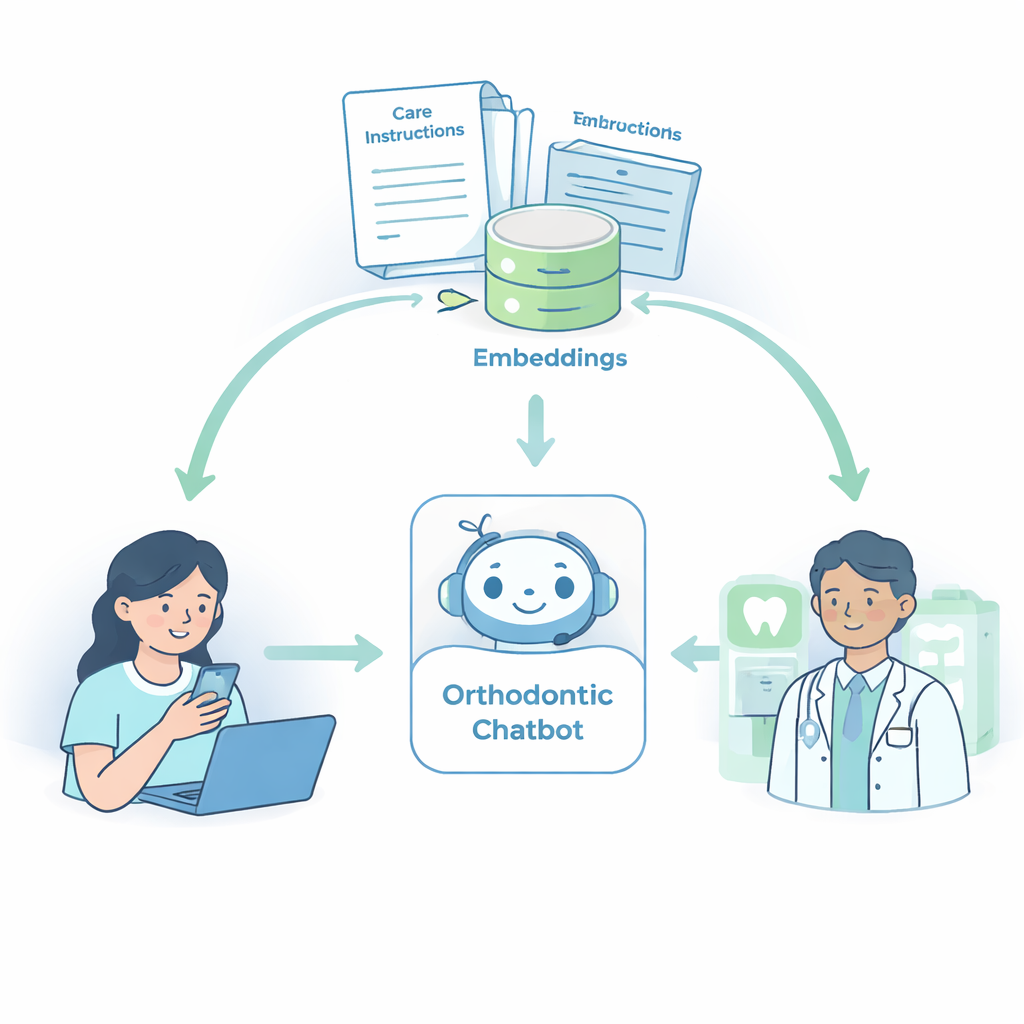

A chatbot built just for braces questions

The researchers created an embedded chatbot focused solely on orthodontic treatment. Instead of training a new AI from scratch, they connected an advanced language model to a curated library of patient materials and key textbook excerpts. This library included leaflets from the British Orthodontic Society on topics such as oral hygiene, diet, appliance care, elastics, and retainers, along with short explanations from standard orthodontic textbooks. Using a technique called retrieval‑augmented generation, the system searched this library for relevant passages whenever a question was asked and used them to shape its answer, aiming to mirror what a patient would hear during a typical chairside conversation.
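The retrieval step described above can be sketched in a few lines. This is only an illustration under stated assumptions, not the study's system: the passages in `LIBRARY` are hypothetical placeholders rather than text from the actual leaflets, the word-count cosine similarity stands in for whatever search method the researchers used, and the names `retrieve` and `build_prompt` are invented for this example.

```python
import math
import re
from collections import Counter

# Hypothetical placeholder passages; NOT quotations from the real leaflets or textbooks.
LIBRARY = {
    "diet": "Avoid hard and sticky foods while wearing braces because they can break brackets.",
    "pain": "Teeth often feel tender for a few days after braces are fitted or adjusted.",
    "retainers": "Wear retainers as instructed to keep teeth in their new position.",
}


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, library, k=1):
    """Return the names of the k passages most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(
        library.items(),
        key=lambda item: cosine(q, Counter(tokenize(item[1]))),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]


def build_prompt(question, library, k=1):
    """Place the retrieved passages in front of the question for a language model."""
    context = "\n".join(library[name] for name in retrieve(question, library, k))
    return (
        "Answer using only this reference material:\n"
        f"{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    print(build_prompt("Will my teeth feel tender after braces are fitted?", LIBRARY))
```

In a real system the resulting prompt would be sent to a language model; that call is left out here because the article does not describe it.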

How the study tested the two systems

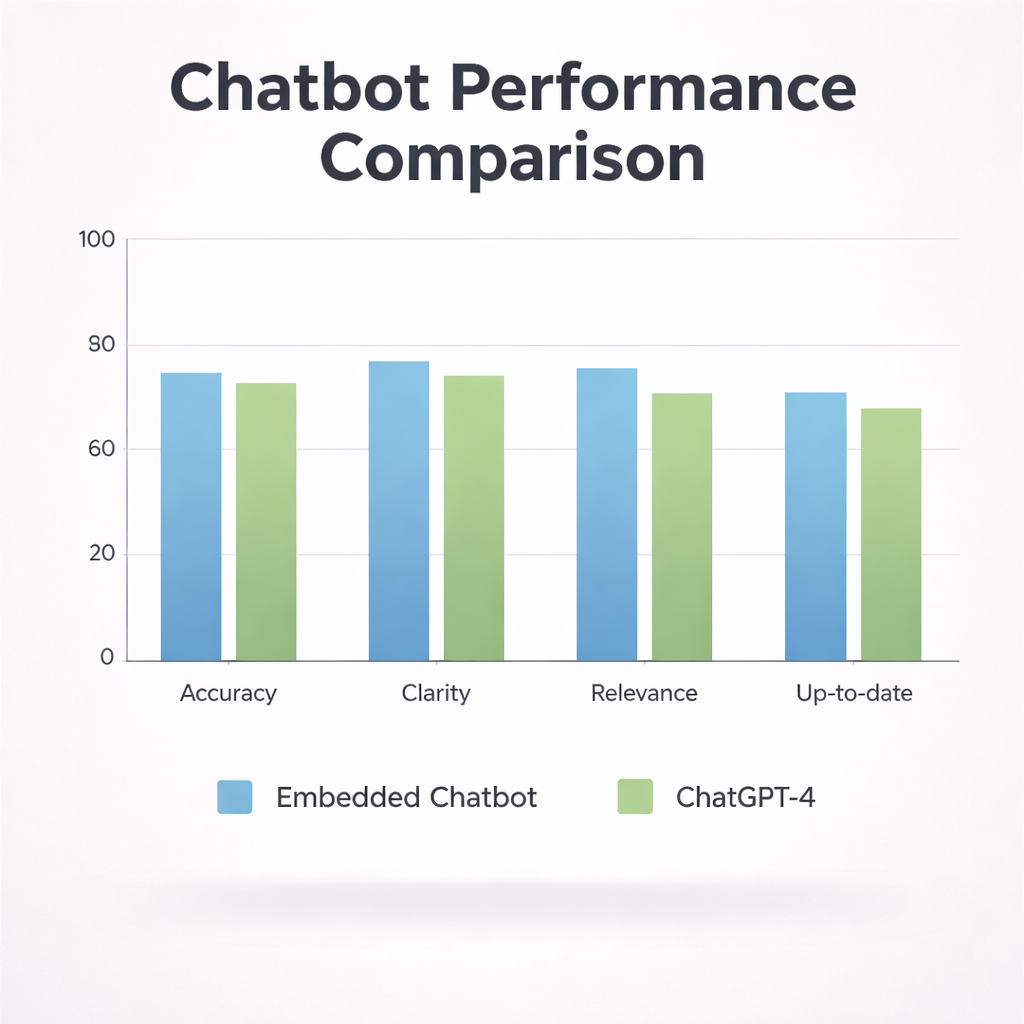

To see how well this specialized chatbot performed, the team compared it with ChatGPT‑4, used in a standard way via the ChatGPT Plus interface. They assembled 30 real‑world questions that patients commonly ask before, during, and after braces treatment—for example, whether braces cause pain, how they affect speaking or singing, how often checkups are needed, and whether braces can help with jaw joint problems. Both systems were given the same prompts, instructing them to respond as an orthodontic expert using clear, patient‑friendly language. Six experienced orthodontic consultants then rated each anonymized answer on four aspects: accuracy, clarity, relevance to the question, and how up‑to‑date the information seemed, using a five‑point scale.

Measuring quality, not just opinions

Rather than relying on general impressions, the researchers used a structured scoring method called the Content Validity Index. For each question and each quality aspect, they counted how many experts rated an answer as “agree” or “strongly agree” and converted this into a score between zero and one. High scores meant that most experts felt the answer was accurate, clear, relevant, or current. They also calculated averages across all questions to see how each system performed overall. Statistical tests were applied to check whether any differences between the two chatbots were large enough to be considered meaningful rather than due to chance alone.
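As a rough sketch of the scoring described above, the proportion of experts who agree can be computed as follows. The numeric coding is an assumption (4 for "agree" and 5 for "strongly agree" on the five-point scale), the ratings are made-up illustrative values rather than study data, and the function names are hypothetical.

```python
from statistics import mean

# Assumed coding of the five-point scale: 4 = "agree", 5 = "strongly agree".
AGREE_THRESHOLD = 4


def item_cvi(ratings):
    """Share of experts who rated one answer 'agree' or 'strongly agree' (0 to 1)."""
    if not ratings:
        raise ValueError("at least one expert rating is required")
    return sum(r >= AGREE_THRESHOLD for r in ratings) / len(ratings)


def average_cvi(ratings_per_question):
    """Mean of the per-question scores across all questions."""
    return mean(item_cvi(r) for r in ratings_per_question)


if __name__ == "__main__":
    # Illustrative ratings from six experts for two questions (not study data).
    example = [
        [5, 4, 4, 3, 5, 4],  # five of six experts agree
        [2, 4, 3, 5, 4, 3],  # three of six experts agree
    ]
    for ratings in example:
        print(round(item_cvi(ratings), 2))
    print(round(average_cvi(example), 2))
```

The same calculation would be repeated separately for each quality aspect (accuracy, clarity, relevance, and how up-to-date the answer seemed) and for each chatbot.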

What the orthodontists thought of the answers

The embedded chatbot generally came out ahead in the raw scores. About three‑quarters of its answers reached an acceptable quality threshold, compared with just over half of the answers from ChatGPT‑4. On average, the specialized chatbot scored better in accuracy, clarity, and relevance, and it also appeared slightly more in line with current guidance. For example, when explaining pain during braces treatment or whether braces affect speech, its responses were straightforward, concrete, and closely matched standard patient advice. By contrast, ChatGPT‑4’s answers, while often reasonable, tended to be more general and sometimes more technical, which may have reduced their clarity in the eyes of the experts. However, when the researchers ran formal statistical tests, the differences between the two systems were not large enough to count as statistically significant, so the apparent advantage could still be due to chance.

Limits and lessons for future AI in the clinic

The study also revealed that even experts do not always agree on what counts as the “best” answer. Overall agreement among the orthodontists was weaker than expected, especially for subjective aspects like clarity and relevance. The researchers noted several other limitations: they studied only two AI setups, did not involve patients directly, and based their specialized chatbot on a specific set of written materials. Still, their work adds to growing evidence that AI systems can answer many common dental questions reasonably well, and that adding focused, up‑to‑date reference material may nudge performance higher.

What this means for people with braces

For patients, the takeaway is encouraging but calls for caution. A well‑designed, orthodontics‑focused chatbot can provide clear, trustworthy answers to many everyday questions and may reduce anxiety between appointments. At the same time, this study shows that such a tool does not yet replace the need for professional judgment or in‑person advice. The real promise lies in combining these tailored AI helpers with expert care, so that people with braces can get timely, easy‑to‑understand information while still relying on their orthodontist for final decisions.

Citation: Khalil, R., Amin, L., Sukhia, R.H. et al. A comparative analysis of embedded chatbot models and ChatGPT-4 for answering orthodontic treatment queries. Sci Rep 16, 7776 (2026). https://doi.org/10.1038/s41598-026-39263-3

Keywords: orthodontic chatbot, dental AI, braces questions, patient education, ChatGPT comparison