Clear Sky Science · en

Swamp-AI: a deep learning model for monitoring wetlands change across the globe

Why watching Earth’s soggy edges matters

Wetlands – marshes, swamps, deltas and floodplains – quietly protect our coasts, store carbon, filter water and shelter wildlife. Yet they are shrinking worldwide, often out of sight in remote or hard-to-reach places. This study introduces “Swamp-AI,” a computer vision system that scans satellite images to find wetlands and track how their footprints change over time, offering a faster, cheaper way to keep tabs on these threatened landscapes.

Seeing hidden waters from space

Traditional wetland surveys rely on experts visiting sites to measure plants, soils and water levels. That kind of fieldwork is slow and expensive, and many wetlands sit in roadless tundra, tropical floodplains or politically unstable regions. Satellites, by contrast, pass over the same locations every few days, capturing repeat images of Earth’s surface. The challenge is turning those raw images into reliable wetland maps without a small army of human interpreters. Earlier mapping methods required specialists to carefully tune thresholds or hand-draw boundaries, and the resulting models often worked only in one country or for one type of wetland. Swamp-AI aims to break that bottleneck by learning general “visual signatures” of wetlands that hold up from Louisiana to the Mekong Delta.

Building a global training atlas

To teach an algorithm what a wetland looks like, the team first had to assemble a training atlas of labeled satellite scenes. They created the Global Swamp Annotated Database (GSADB) using 2019 imagery from Europe’s Sentinel-2 satellite, which provides medium-resolution color and infrared views of Earth every five days. From 34 locations worldwide, spanning 21 inland and 13 coastal regions, they drew 102 detailed masks marking where wetlands were present. Instead of visiting every site, they combined several global data products: an existing 30-meter wetland map, a digital elevation model that hints at low-lying, flood-prone terrain, and a vegetation index that highlights green, growing plants. Four annotators cross-checked each other’s work, discarding scenes when they could not agree, and defined a single broad class of “wetland” to keep the labels consistent from Arctic marshes to tropical swamps.
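For readers curious how a vegetation index works, here is a minimal sketch. It assumes the index is the widely used Normalized Difference Vegetation Index (NDVI), which this summary does not confirm, and the reflectance values are invented for illustration. NDVI compares near-infrared light, which healthy leaves reflect strongly, with red light, which they absorb.

```python
def ndvi(red, nir):
    """Return NDVI = (NIR - red) / (NIR + red) for paired reflectance values.

    Assumption: the article's "vegetation index" is NDVI. On Sentinel-2 the
    red and near-infrared inputs would typically be bands B4 and B8.
    """
    values = []
    for r, n in zip(red, nir):
        total = n + r
        # Guard against division by zero for pixels with no signal.
        values.append((n - r) / total if total != 0 else 0.0)
    return values


# Made-up reflectances for three pixels: dense vegetation, sparse cover, open water.
red = [0.05, 0.20, 0.04]
nir = [0.45, 0.25, 0.02]
ndvi_values = ndvi(red, nir)
```

Values close to 1 suggest dense green vegetation, values near 0 suggest bare or sparsely covered ground, and negative values are typical of open water.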

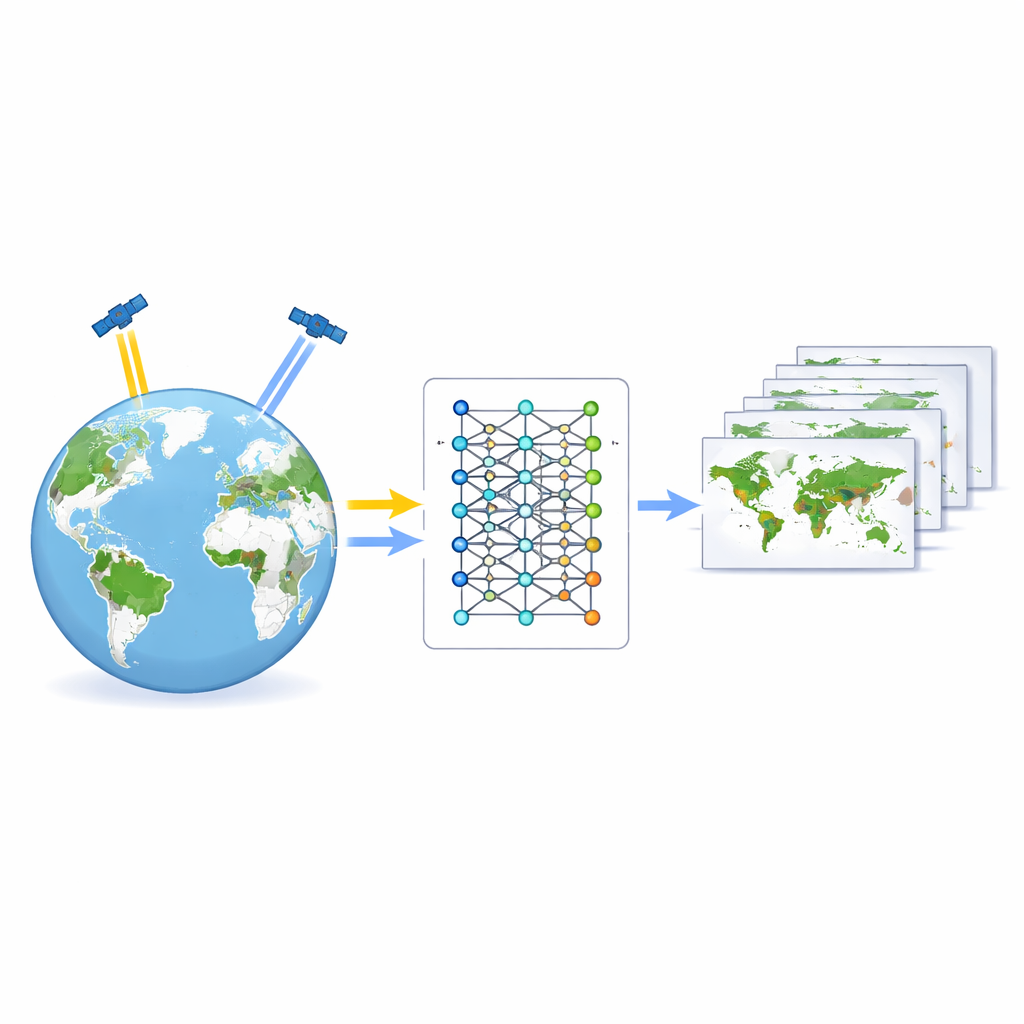

Teaching the machine to recognize soggy ground

Armed with this atlas, the researchers trained 15 different deep learning models that perform “semantic segmentation” – assigning every pixel in an image to either wetland or non-wetland. They tested three popular network designs that have worked well on medical scans and other environmental images, and paired each with five ways of measuring training errors, known as loss functions. Because wetlands were usually the minority in each scene, they also experimented with loss functions tailored to unbalanced data. The training images were split by geography, not at random, so that the models were always tested on places that were not near any location they had seen in training, reducing the risk of overfitting to local quirks.
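To make the idea of a loss function tailored to unbalanced data more concrete, the sketch below combines a focal term with a Dice term for a binary wetland mask. It is an illustration only: the equal weighting, the `gamma` value and the smoothing constant are assumptions, and the paper's exact formulation may differ.

```python
import math


def focal_loss(probs, labels, gamma=2.0, eps=1e-7):
    """Mean binary focal loss: down-weights pixels the model already gets right."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)      # avoid log(0)
        p_t = p if y == 1 else 1.0 - p       # probability given to the true class
        total += -((1.0 - p_t) ** gamma) * math.log(p_t)
    return total / len(probs)


def dice_loss(probs, labels, smooth=1.0):
    """Soft Dice loss: one minus the overlap between prediction and truth."""
    intersection = sum(p * y for p, y in zip(probs, labels))
    denominator = sum(probs) + sum(labels)
    return 1.0 - (2.0 * intersection + smooth) / (denominator + smooth)


def focal_dice_loss(probs, labels, weight=0.5):
    """Hypothetical hybrid: a weighted sum of the two terms (weight is assumed)."""
    return weight * focal_loss(probs, labels) + (1.0 - weight) * dice_loss(probs, labels)


# Four pixels, only one of which is wetland (label 1).
labels = [1, 0, 0, 0]
good_guess = [0.9, 0.1, 0.1, 0.1]
bad_guess = [0.1, 0.9, 0.9, 0.9]
loss_good = focal_dice_loss(good_guess, labels)
loss_bad = focal_dice_loss(bad_guess, labels)
```

The Dice term rewards overlap with the rare wetland class directly, while the focal term concentrates the penalty on pixels the model finds hard, so a confident correct guess scores a much lower loss than a confident wrong one.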

Picking a winner and checking it in the real world

After training, the best-performing models were put through tougher exams. The team created an independent test set using sharper, three-meter imagery of three wildlife preserves in the United States, then resampled the hand-drawn wetland outlines to match Sentinel-2’s coarser resolution. The champion turned out to be a network called ResUNet34 combined with a hybrid “focal-dice” loss. This version of Swamp-AI correctly labeled about 94% of pixels overall and achieved an intersection-over-union score – a strict measure of how well predicted and true wetland areas overlap – of about 75%. Visual checks showed that it continued to find marshes and swamps even outside the regions used for testing. The authors then applied Swamp-AI to famous wetlands worldwide, and found that, with slight tuning of its internal confidence threshold, it maintained high accuracy from cold northern bogs to tropical floodplains.
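The gap between the two scores is easier to see with a toy example. The sketch below turns per-pixel confidences into a wetland mask with a threshold, then computes overall pixel accuracy and intersection-over-union. The ten pixels, their confidences and the 0.5 cutoff are invented for illustration and are not taken from the study.

```python
def apply_threshold(probs, cutoff=0.5):
    """Label a pixel as wetland (1) when its confidence reaches the cutoff."""
    return [1 if p >= cutoff else 0 for p in probs]


def pixel_accuracy(pred, truth):
    """Share of all pixels, wetland or not, that were labeled correctly."""
    correct = sum(1 for p, t in zip(pred, truth) if p == t)
    return correct / len(truth)


def intersection_over_union(pred, truth):
    """Overlap of predicted and true wetland pixels divided by their union."""
    inter = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 1)
    union = sum(1 for p, t in zip(pred, truth) if p == 1 or t == 1)
    return inter / union if union else 1.0


# Ten made-up pixels; only the first three are truly wetland.
truth = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
probs = [0.9, 0.8, 0.4, 0.6, 0.1, 0.2, 0.1, 0.3, 0.2, 0.1]

pred = apply_threshold(probs)
accuracy = pixel_accuracy(pred, truth)       # 8 of 10 pixels correct
iou = intersection_over_union(pred, truth)   # 2 shared pixels out of 4 in the union
```

Because most pixels are non-wetland and easy to get right, accuracy comes out at 0.8 while intersection-over-union is only 0.5. The same effect helps explain why a model can label 94% of pixels correctly yet score about 75% on the stricter overlap measure. Raising or lowering `cutoff` trades missed wetland against false alarms, which is the kind of adjustment the confidence threshold allows.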

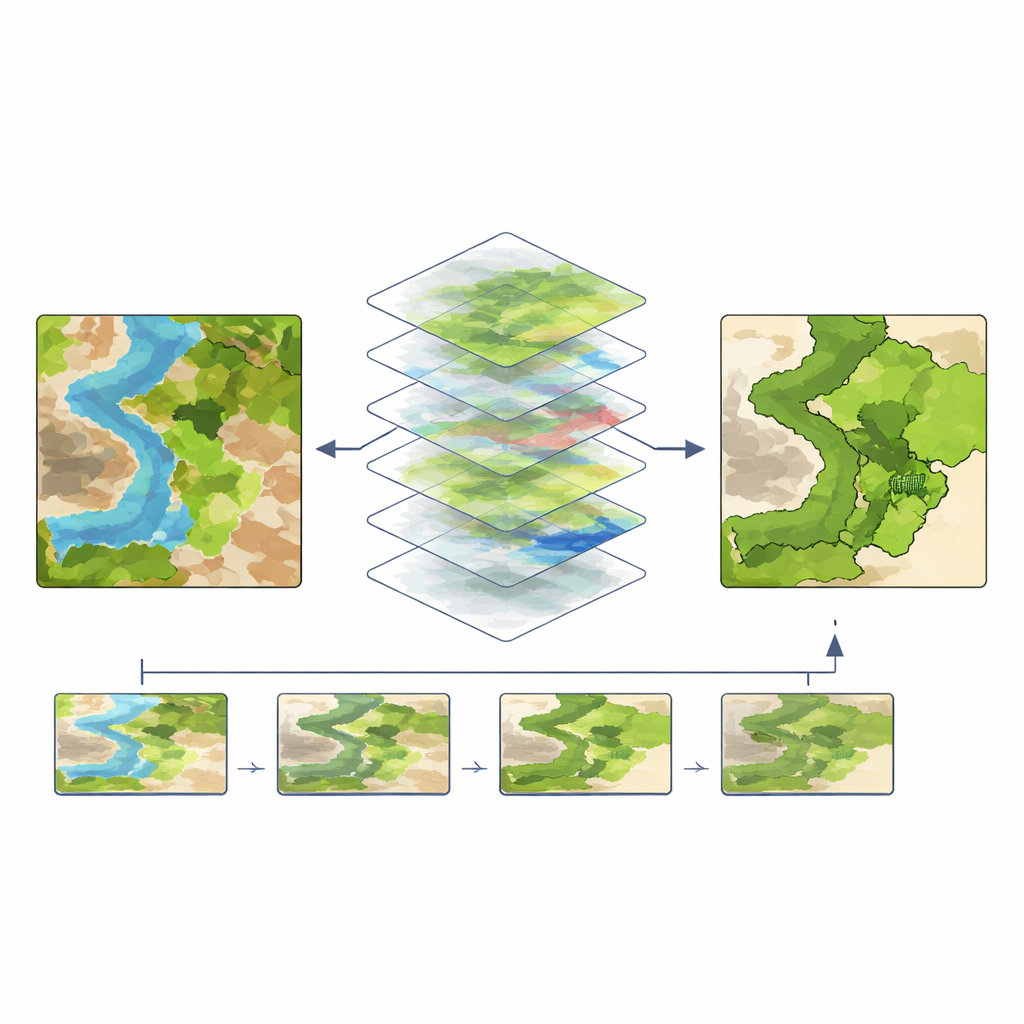

Following a shrinking shoreline in New York

To illustrate how Swamp-AI might be used in practice, the team tracked salt marsh islands in Jamaica Bay, New York, from 2019 to 2024. By running the model on yearly image composites, they estimated that the bay’s islands collectively lost about 18 hectares of wetland per year, with some islands remaining relatively stable while others showed strong signs of retreat. Comparing images taken at high and low tide in 2024 revealed another nuance: when water levels were low and marsh surfaces were exposed, Swamp-AI found almost 30% more wetland area than in the high-tide view, underscoring how sensitive satellite-based mapping can be to timing and water level.
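A yearly loss rate of this kind can be estimated by converting each year's wetland pixel count into hectares and fitting a straight line through the results. The sketch below assumes 10-meter pixels, as in Sentinel-2's visible and near-infrared bands, and uses placeholder pixel counts that are not data from the study. The authors' actual procedure may differ.

```python
PIXEL_SIZE_M = 10                                    # assumed pixel edge length in meters
PIXEL_AREA_HA = PIXEL_SIZE_M * PIXEL_SIZE_M / 10_000  # one hectare is 10,000 square meters


def area_ha(pixel_count):
    """Convert a count of wetland pixels into hectares."""
    return pixel_count * PIXEL_AREA_HA


def linear_trend(years, areas):
    """Ordinary least-squares slope: change in area per year."""
    n = len(years)
    mean_year = sum(years) / n
    mean_area = sum(areas) / n
    numerator = sum((x - mean_year) * (y - mean_area) for x, y in zip(years, areas))
    denominator = sum((x - mean_year) ** 2 for x in years)
    return numerator / denominator


# Placeholder pixel counts for three yearly composites (illustrative only).
years = [2019, 2020, 2021]
wetland_pixels = [50_000, 49_000, 48_000]

areas = [area_ha(count) for count in wetland_pixels]
trend_ha_per_year = linear_trend(years, areas)       # negative slope means wetland loss
```

With these placeholder numbers the fitted slope is a loss of 10 hectares per year. The same area conversion applied to a high-tide and a low-tide scene would show how much the mapped extent depends on water level.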

A new early-warning system for wetland loss

For non-specialists, the key message is that Swamp-AI acts like an automated wetland inspector, scanning global satellite feeds and flagging where vegetated, waterlogged areas are holding steady or disappearing. It cannot yet distinguish fine details such as plant species or wetland subtypes, and it inherits some limitations from the reference maps used to train it. Still, by delivering fast, globally consistent maps with accuracy comparable to many local studies, Swamp-AI offers conservationists and planners an early-warning tool. It can help direct costly field surveys to the most at-risk sites and support smarter decisions about restoration, coastal defense and climate resilience.

Citation: Andros, C.S., Conery, I.W., Alvarado, T.R. et al. Swamp-AI: a deep learning model for monitoring wetlands change across the globe. Sci Rep 16, 8830 (2026). https://doi.org/10.1038/s41598-026-39257-1

Keywords: wetlands, remote sensing, deep learning, environmental monitoring, satellite imagery