Clear Sky Science

Evaluating AI-powered learning assistants in engineering higher education with implications for student engagement, ethics, and policy

Why this matters for today’s college students

College students are turning to chatbots and other artificial-intelligence tools for help with homework, coding, and confusing class concepts. But can these tools really support learning, and what happens to issues like cheating, fairness, and trust when software becomes a study partner? This paper follows a real-world experiment in civil and environmental engineering courses that tested an AI-powered “Educational AI Hub” and asked students what worked, what worried them, and how they think AI should fit into the future of higher education.

One digital study buddy, many built‑in tools

The Educational AI Hub is a course-specific learning assistant that lives inside the university’s online learning platform. Instead of sending questions to a general chatbot trained on the whole internet, students interact with an assistant that is tightly linked to their own lectures, assignments, and syllabus. Behind the scenes, the system reads instructor-approved course PDFs, turns them into searchable chunks, and feeds only those into an advanced language model to answer student questions. The Hub offers six main tools: short AI-generated notes, a question-and-answer chatbot, flashcards, auto-graded quizzes, a coding help “sandbox,” and quick answers to syllabus logistics. It is designed to act like a friendly, always-available teaching assistant rather than a shortcut engine.
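The "read, chunk, and feed only those chunks to the model" flow described above can be sketched in a few lines. This is a minimal illustration, not the Hub's actual implementation: the function names (`chunk_text`, `retrieve`, `build_prompt`), the 40-word chunk size, and the simple word-overlap scoring are all assumptions made for this example. A production system would more likely extract text from PDFs and rank chunks with embeddings, and the call to the language model itself is left out.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk_text(text, max_words=40):
    """Split course text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def score(question, chunk):
    """Count question tokens (with multiplicity) that also occur in the chunk."""
    q = Counter(tokenize(question))
    c = Counter(tokenize(chunk))
    return sum(min(q[t], c[t]) for t in q)


def retrieve(question, chunks, k=2):
    """Return up to k chunks with the highest nonzero overlap score."""
    ranked = sorted(chunks, key=lambda ch: score(question, ch), reverse=True)
    return [ch for ch in ranked[:k] if score(question, ch) > 0]


def build_prompt(question, chunks, k=2):
    """Build a prompt restricted to retrieved course excerpts.

    Returns None when no chunk matches, so the caller can decline to answer
    instead of letting the model improvise beyond the course materials.
    """
    context = retrieve(question, chunks, k)
    if not context:
        return None
    joined = "\n---\n".join(context)
    return (
        "Answer using only the course excerpts below.\n"
        f"{joined}\n\nQuestion: {question}"
    )


# Hypothetical course snippets standing in for instructor-approved PDFs.
course_chunks = [
    "Manning's equation relates channel flow velocity to roughness and slope.",
    "Office hours are held on Tuesdays in room 101.",
]
prompt = build_prompt("When are office hours?", course_chunks, k=1)
```

Returning `None` when nothing matches reflects the design goal stated above: the assistant answers from course materials only, rather than falling back on general knowledge.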

How the study was carried out

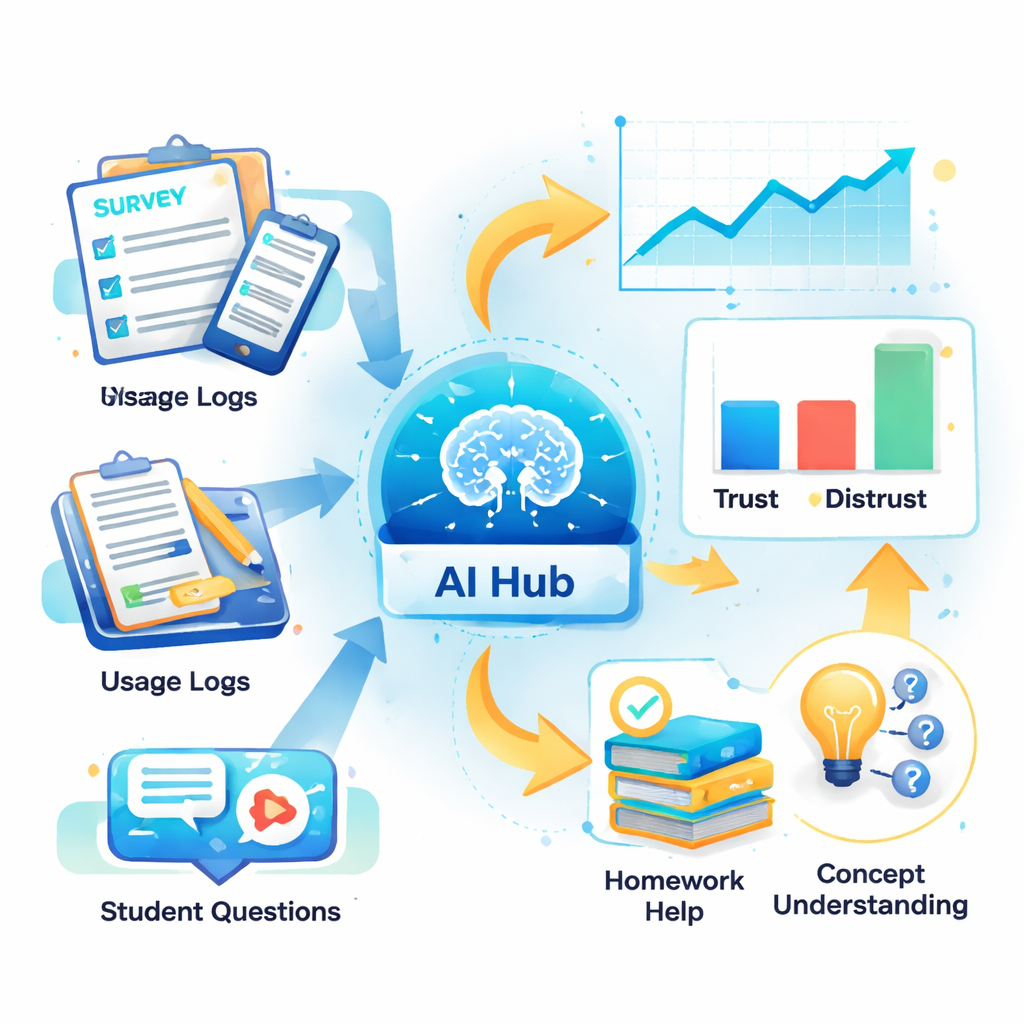

To see how students actually used this assistant, the researchers embedded it in two undergraduate engineering courses at a large public research university. Sixty-five of the 77 enrolled students volunteered to participate. They completed surveys at the start and end of the semester about their experience with AI, their trust in such tools, and their concerns about ethics and academic integrity. At the same time, the system quietly logged every interaction: which features students used, how often they came back, and what kinds of questions they asked. The team also coded hundreds of student queries into themes such as “software help,” “theory questions,” and “assignment clarification” to understand what kind of help students were really seeking.
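To make the logging step concrete, here is a small sketch of how per-feature usage and return visits could be tallied from an interaction log. The record layout (`student`, `feature`, `date`), the function names, and the sample entries are all hypothetical and invented for illustration; they are not data or code from the study.

```python
from collections import Counter, defaultdict


def feature_shares(log):
    """Return each feature's share of all logged interactions, as a fraction."""
    counts = Counter(entry["feature"] for entry in log)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {feature: n / total for feature, n in counts.items()}


def active_days(log):
    """Return the number of distinct days on which each student used the tool."""
    days = defaultdict(set)
    for entry in log:
        days[entry["student"]].add(entry["date"])
    return {student: len(dates) for student, dates in days.items()}


# Hypothetical log entries, made up for this example only.
sample_log = [
    {"student": "s1", "feature": "chatbot", "date": "2025-02-03"},
    {"student": "s1", "feature": "chatbot", "date": "2025-02-04"},
    {"student": "s2", "feature": "quiz", "date": "2025-02-03"},
    {"student": "s2", "feature": "chatbot", "date": "2025-02-03"},
]
shares = feature_shares(sample_log)
days_used = active_days(sample_log)
```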

What students liked—and what they didn’t fully trust

The most popular feature by far was the chatbot, which accounted for more than 90 percent of all interactions. Students used it heavily to get unstuck on technical software tasks in tools like ArcGIS and MATLAB, and to clarify core engineering concepts and formulas. Many reported that the assistant made it easier to understand course material and to finish homework, and some said they were more comfortable asking questions of the AI than of professors or teaching assistants. However, most students still saw human help as equal or better in quality. Concerns about incorrect or misleading answers from AI—sometimes called “hallucinations”—meant that many users treated the assistant as a convenient first pass rather than a final authority.

Ethical gray areas and policy confusion

Even when students appreciated the tool, they were uneasy about the rules around using it. Many were unsure exactly what counted as academic misconduct when working with AI and worried about being falsely accused of cheating. Almost half believed that AI did not automatically undermine academic integrity, but a similar share felt apprehensive about using it in graded work. Importantly, students did not want AI to be banned; instead, they called for “somewhat restrictive” course policies with clear guidance. Most agreed that using AI as an academic aid can be ethical and said they would like similar tools in other courses, as long as expectations are spelled out in plain language and tied to responsible habits like checking sources and citing assistance.

From data to better teaching and learning

Usage logs and survey responses told a consistent story: students used the AI Hub most when they trusted it, felt comfortable with it, and saw it as genuinely helpful for course tasks such as concept review and homework. Lower trust in AI went hand in hand with lower usage, while comfort and perceived usefulness were linked to more frequent engagement. Yet students did not credit the tool alone for boosting their grades, and many still turned to general-purpose chatbots for coding or math that went beyond the course materials. These patterns suggest that AI assistants work best as a targeted support layer inside a course, not as a replacement for instructors and not as a one-size-fits-all solution.

What this means for the classroom of the future

For a layperson, the takeaway is that AI study helpers can make technical subjects more approachable and give students a low-pressure way to ask questions at any hour, but they do not magically fix learning on their own. In this study, engineering students treated the Educational AI Hub as a handy companion that could unblock problems and explain tough ideas, while still relying on teachers and traditional study habits. Their biggest worries centered not on the technology itself but on unclear rules and fears about cheating accusations. The authors argue that if colleges want AI to genuinely improve education, they must pair smart, course-aware tools with clear, transparent policies and active guidance from faculty, helping students learn not just from AI, but also how to use it responsibly.

Citation: Sajja, R., Sermet, Y., Fodale, B. et al. Evaluating AI-powered learning assistants in engineering higher education with implications for student engagement, ethics, and policy. Sci Rep 16, 7565 (2026). https://doi.org/10.1038/s41598-026-39237-5

Keywords: AI in education, engineering students, learning assistant, academic integrity, student engagement