Clear Sky Science

Multimodal large language models challenge NEJM image challenge

Why this matters for patients and doctors

Getting the right diagnosis at the right time can be the difference between quick treatment and years of suffering. Yet doctors, even highly trained ones, still miss or delay diagnoses, especially for rare or unusual diseases. This study asks a striking question: when medical images and clinical details are fed into today’s most advanced artificial intelligence systems, can they actually diagnose complex cases better than large numbers of real physicians—and if so, what does that mean for future medical care?

A huge puzzle built from real-world cases

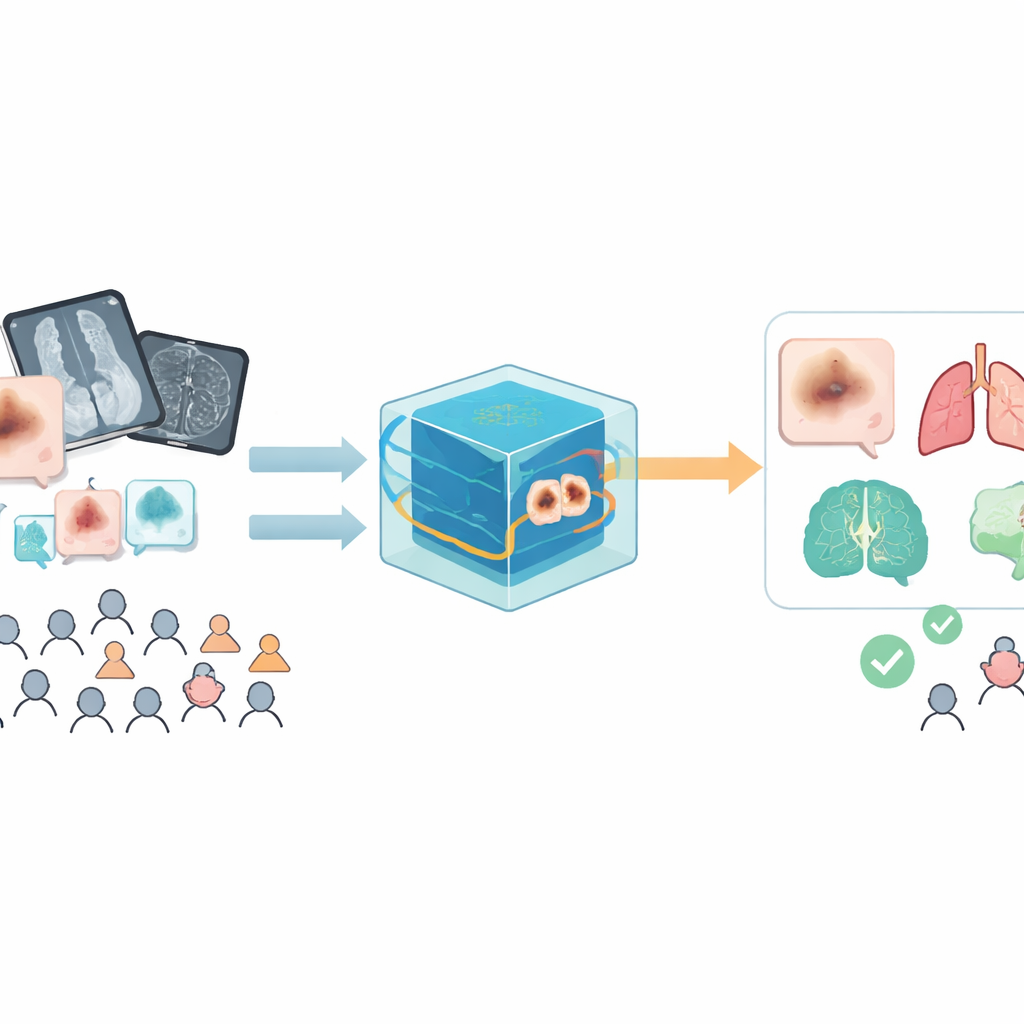

The researchers turned to a long-running feature from the New England Journal of Medicine called the "Image Challenge." Each challenge presents a real patient’s medical image (such as a skin photo, X-ray, MRI, or microscope slide) along with a short clinical story and five possible diagnoses. Since 2009, the challenges have accumulated over 16 million responses, with more than 60,000 physicians answering each case, creating a unique global record of how doctors perform on the same tough questions. From this archive, the team selected 272 cases covering all ages, both sexes, and a wide range of conditions, from infections and immune disorders to cancers, genetic diseases, and injuries.

Putting AI and doctors on the same playing field

The study tested three leading multimodal large language models—systems that can look at images and read text together: GPT‑4o, Claude 3.7, and Doubao. For each case, the models first saw only the image and had to choose one of the five options with an explanation. Then they saw the image plus the clinical description and answered again. To keep the test fair, the models were used in standard settings, with web search and extra reasoning features turned off, and every case was run in a fresh session to avoid contamination from earlier answers. Two physicians graded the AI responses against the official New England Journal solutions, focusing on whether the final choice matched the true diagnosis, just as the human benchmark does.

Superhuman performance across diseases and images

When given both images and text, all three AI systems clearly beat the global pool of physicians. Claude 3.7 and GPT‑4o each reached about 89–90% accuracy, compared with 46.7% for the majority vote of human respondents—a gap of more than 40 percentage points. Even in the hardest cases, where fewer than 40% of doctors were correct, Claude 3.7 still got 86.5% of diagnoses right. The advantage held across most disease types and image formats: the models were especially strong in drug‑related and genetic conditions, and they handled not only photos and X‑rays but also endoscopic, pathological, and mixed image sets. Performance was equally strong for men and women, and in some of the most vulnerable groups, such as infants under one year of age, the models were dramatically more accurate than physicians.
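For readers who want to see how such a comparison can be scored, here is a minimal sketch in Python. It assumes that the human benchmark is the option picked by the largest share of respondents in each case, and that accuracy is simply the fraction of cases where a choice matches the official answer. The four cases, the vote shares, and the names `crowd_choice` and `accuracy` are invented for illustration and are not taken from the study.

```python
from typing import Dict, List


def crowd_choice(vote_shares: Dict[str, float]) -> str:
    """Return the option chosen by the largest share of respondents."""
    return max(vote_shares, key=vote_shares.get)


def accuracy(choices: List[str], answers: List[str]) -> float:
    """Fraction of cases where the choice equals the official answer."""
    correct = sum(c == a for c, a in zip(choices, answers))
    return correct / len(answers)


# Toy data, invented for illustration only (NOT from the study).
cases = [
    {"answer": "B", "model": "B",
     "votes": {"A": 0.10, "B": 0.55, "C": 0.15, "D": 0.12, "E": 0.08}},
    {"answer": "D", "model": "D",
     "votes": {"A": 0.40, "B": 0.05, "C": 0.20, "D": 0.30, "E": 0.05}},
    {"answer": "A", "model": "A",
     "votes": {"A": 0.25, "B": 0.35, "C": 0.20, "D": 0.10, "E": 0.10}},
    {"answer": "C", "model": "E",
     "votes": {"A": 0.05, "B": 0.10, "C": 0.70, "D": 0.10, "E": 0.05}},
]

answers = [c["answer"] for c in cases]
crowd_acc = accuracy([crowd_choice(c["votes"]) for c in cases], answers)
model_acc = accuracy([c["model"] for c in cases], answers)

# Gap expressed in percentage points, as in the article.
gap_points = (model_acc - crowd_acc) * 100
print(f"crowd {crowd_acc:.1%}, model {model_acc:.1%}, gap {gap_points:.1f} points")
```

In this toy set the crowd's top pick is right in two of four cases and the model in three of four, so the gap is 25 percentage points. The study's reported figures come from 272 real cases, not from this example.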

Different minds, not just faster ones

Perhaps the most surprising finding was how often the models succeeded when physicians did not. In nearly half the cases, Claude 3.7 was correct while the majority of doctors were wrong, and the reverse (doctors correct, model wrong) was rare. Overall, for Claude 3.7 there were about fifteen "model-advantage" cases for every one "physician-advantage" case. Yet agreement between humans and AI on which answer to pick was low, a sign that the systems are not simply echoing human patterns but are arriving at correct diagnoses through different routes. Adding the clinical text generally improved performance substantially, boosting AI accuracy by 28–42 percentage points over images alone. Still, in a tiny fraction of cases, extra details pushed models from a correct image-based answer to a wrong one, hinting at new kinds of biases and failure modes that will need careful study.
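The "model-advantage" versus "physician-advantage" comparison can be pictured as a simple tally of the cases where the two sides disagree on correctness. The sketch below is one plausible way to count them, assuming each case has been marked as correct or incorrect for both the model and the physician majority. The function name `tally_cases` and the eight toy cases are hypothetical and are not the study's data or its actual analysis code.

```python
from collections import Counter
from typing import List


def tally_cases(model_correct: List[bool], crowd_correct: List[bool]) -> Counter:
    """Sort each case into one of four outcome buckets."""
    counts: Counter = Counter()
    for m, c in zip(model_correct, crowd_correct):
        if m and not c:
            counts["model_advantage"] += 1      # model right, doctors wrong
        elif c and not m:
            counts["physician_advantage"] += 1  # doctors right, model wrong
        elif m and c:
            counts["both_correct"] += 1
        else:
            counts["both_wrong"] += 1
    return counts


# Toy outcomes, invented for illustration only (NOT from the study).
model_correct = [True, True, True, True, False, True, False, True]
crowd_correct = [True, False, False, True, True, False, False, False]

counts = tally_cases(model_correct, crowd_correct)

# Ratio of model-advantage to physician-advantage cases.
ratio = counts["model_advantage"] / counts["physician_advantage"]
print(dict(counts), f"ratio = {ratio:.1f} to 1")
```

Here the toy ratio is 4 to 1. The article's figure of about fifteen to one for Claude 3.7 is the same kind of ratio computed over the real cases. Note that the ratio is undefined when there are no physician-advantage cases, so a real analysis would need to handle that situation.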

What this could mean for future care

The authors conclude that multimodal large language models have reached a "superhuman" level on this demanding diagnostic quiz: they are more accurate than the average physician crowd and keep their edge even when cases stump most doctors. At the same time, their low overlap with human choices suggests they think in complementary ways rather than acting as digital copies of clinicians. Used wisely, these systems could serve as powerful second readers, offering independent opinions on difficult or rare cases and helping to catch problems that human doctors might miss. They are not ready to replace clinical judgment, but they may soon become valuable partners at the bedside and in the reading room, quietly checking our work and broadening the safety net for patients.

Citation: Sheng, C., Shen, S., Wang, L. et al. Multimodal large language models challenge NEJM image challenge. Sci Rep 16, 8132 (2026). https://doi.org/10.1038/s41598-026-39201-3

Keywords: medical diagnosis, artificial intelligence, medical imaging, rare diseases, clinical decision support