Clear Sky Science · en

A lightweight hybrid CNN and transformer model for medicinal leaf disease classification with explainable AI

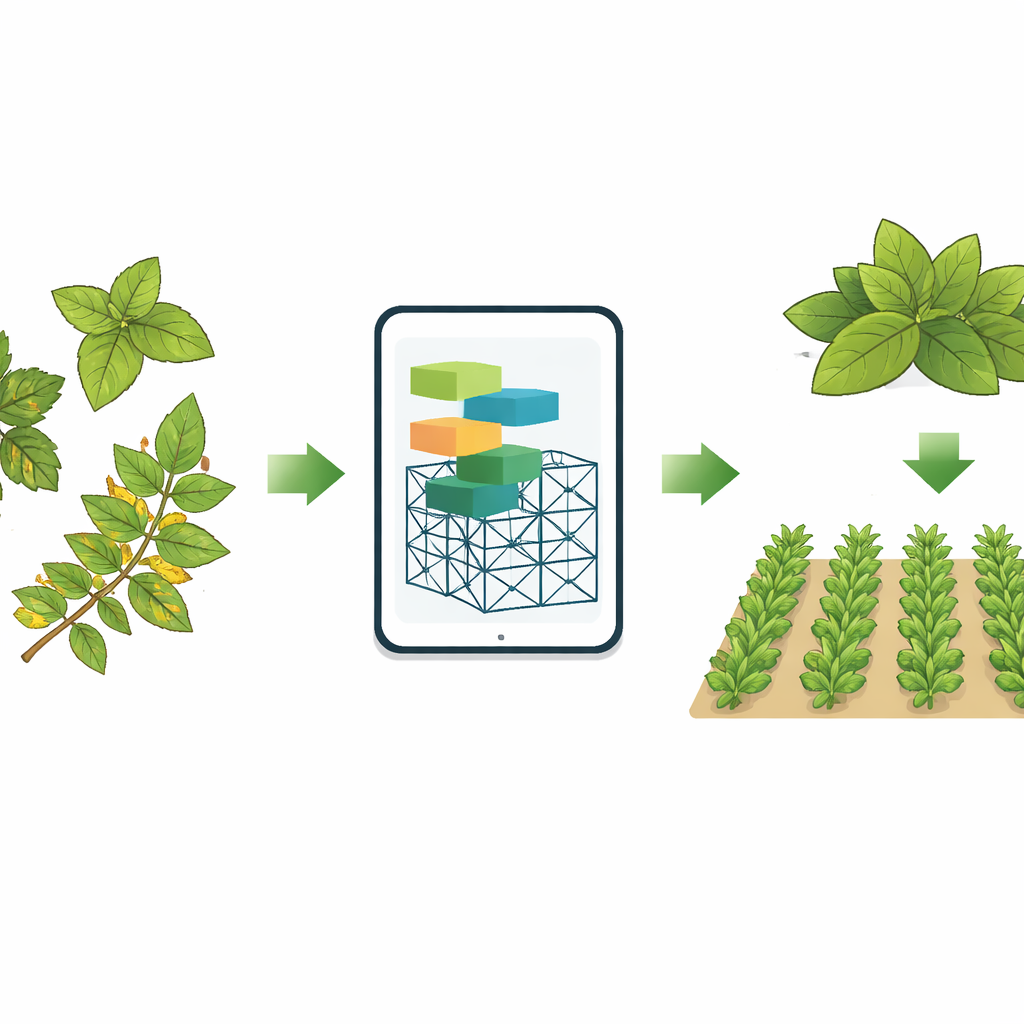

Why smarter plant care matters

Many of the herbs used in home remedies and modern drugs, such as tulsi (holy basil), neem, and patharkuchi, depend on healthy leaves to produce their healing compounds. When diseases attack these leaves, the plants lose both yield and medicinal strength. The paper introduces a compact artificial intelligence (AI) system that identifies different leaf diseases from photos with about 99.7% accuracy on the study's test images. Designed to run on low-cost devices and to show clearly what it “looks at,” this approach could help farmers and gardeners protect valuable medicinal plants in real time.

Hidden threats on familiar leaves

The study focuses on three widely used medicinal plants: Kalanchoe pinnata (patharkuchi), Azadirachta indica (neem), and Ocimum tenuiflorum (tulsi). These plants offer antibacterial, anti-inflammatory, and even anti‑cancer benefits, yet their leaves are vulnerable to fungal webs, yellowing from stress, and various spotting diseases. Traditional diagnosis relies on expert eyes in the field or slow, equipment‑heavy lab tests, both of which make it hard to catch problems early or across large areas. With plant health tied to both public health and local economies, there is a strong need for automatic, accurate, and understandable tools that can flag disease quickly using nothing more than images.

Building a smart eye for sick leaves

To tackle this challenge, the authors created a new model called LSeTNet, a lightweight hybrid of two popular AI ideas for images: convolutional networks, which are good at spotting fine textures and edges, and transformer layers, which excel at seeing long‑range patterns across an image. The model was trained on a carefully collected image set called MedicinalLeaf‑12, containing 12 classes that cover healthy and diseased versions of the three plants. Photos were taken under real‑world field conditions with varied lighting, angles, and backgrounds, then cleaned and enhanced so that disease spots and leaf veins stood out more clearly. The team also used extensive image augmentation (rotating, zooming, changing brightness, and more) to mimic the messy variety seen on real farms while keeping the dataset balanced.
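To make the augmentation idea concrete, here is a minimal sketch in plain Python that rotates, brightens or darkens, and zooms a tiny grayscale grid. It is an illustration only, not the authors' pipeline: the function names, the 0.7 to 1.3 brightness range, and the 50% zoom probability are all assumptions chosen for the example.

```python
import random

def rotate90(img):
    """Rotate a 2D grid of pixels 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]

def adjust_brightness(img, factor):
    """Scale every pixel by `factor`, clamping to the 0-255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in img]

def center_zoom(img, crop):
    """Crop the central crop x crop window and stretch it back to the
    original size with nearest-neighbour sampling."""
    h, w = len(img), len(img[0])
    top, left = (h - crop) // 2, (w - crop) // 2
    return [[img[top + (y * crop) // h][left + (x * crop) // w]
             for x in range(w)] for y in range(h)]

def augment(img, rng):
    """Apply a random mix of the three transforms (hypothetical settings)."""
    out = img
    for _ in range(rng.randrange(4)):          # 0 to 3 quarter turns
        out = rotate90(out)
    out = adjust_brightness(out, rng.uniform(0.7, 1.3))
    if rng.random() < 0.5:                     # zoom in about half the time
        crop = max(1, min(len(out), len(out[0])) // 2)
        out = center_zoom(out, crop)
    return out

# Toy 4x4 "photo" with pixel values 0..15 standing in for a leaf image.
toy = [[r * 4 + c for c in range(4)] for r in range(4)]
rng = random.Random(0)
variants = [augment(toy, rng) for _ in range(3)]
```

Each call to `augment` yields a slightly different version of the same leaf, which is how a modest photo collection can be stretched to cover more of the variation found in the field.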

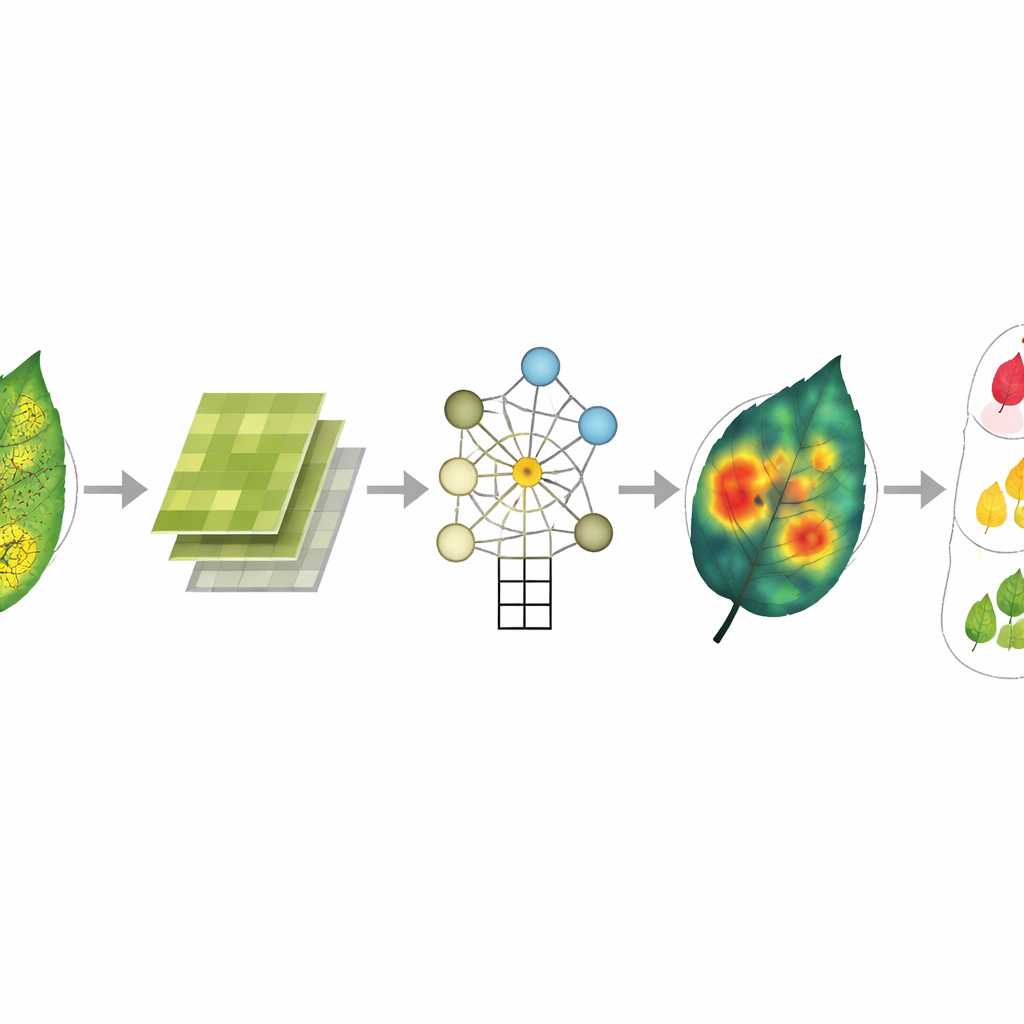

How the model thinks about leaves

LSeTNet processes each leaf picture in stages. Lightweight convolutional layers pick up local clues such as tiny spots, webbing, and the sharpness of leaf edges. “Squeeze‑and‑excitation” modules then reweight these clues, turning up channels that carry disease‑related signals and turning down those dominated by background. A transformer block follows, linking distant regions of the leaf so the model can, for example, relate scattered yellow patches or patterns that run along veins. Finally, a compact classifier decides which of the 12 conditions best matches each image. With only about 9.4 million parameters and modest computing demands, the model maintains high speed and low memory use, making it suitable for phones, tablets, or small single‑board computers.
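The squeeze‑and‑excitation step can be sketched in a few lines of plain Python. The version below follows the general recipe (average each channel, pass the averages through a small two-layer network, and scale each channel by the resulting gate); it is not LSeTNet's actual code. The weights `W1`, `B1`, `W2`, `B2` are hand-picked for illustration, whereas a real network learns them during training.

```python
import math

def squeeze(feature_maps):
    """Global average pooling: one summary number per channel."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0]))
            for ch in feature_maps]

def dense(vec, weights, bias):
    """Fully connected layer; `weights` holds one row per output unit."""
    return [sum(w * v for w, v in zip(row, vec)) + b
            for row, b in zip(weights, bias)]

def excite(summary, w1, b1, w2, b2):
    """Bottleneck network: ReLU, then sigmoid, giving a gate in (0, 1)
    for every channel."""
    hidden = [max(0.0, h) for h in dense(summary, w1, b1)]
    return [1.0 / (1.0 + math.exp(-z)) for z in dense(hidden, w2, b2)]

def se_block(feature_maps, w1, b1, w2, b2):
    """Reweight each channel by its gate; returns (scaled maps, gates)."""
    gates = excite(squeeze(feature_maps), w1, b1, w2, b2)
    scaled = [[[g * p for p in row] for row in ch]
              for ch, g in zip(feature_maps, gates)]
    return scaled, gates

# Two toy 2x2 channels: a strongly active one and a weakly active one.
toy_maps = [[[4.0, 4.0], [4.0, 4.0]],
            [[1.0, 1.0], [1.0, 1.0]]]
# Hypothetical weights: 2 channels -> 1 hidden unit -> 2 gates.
W1, B1 = [[1.0, -1.0]], [0.0]
W2, B2 = [[1.0], [-1.0]], [0.0, 0.0]

scaled, gates = se_block(toy_maps, W1, B1, W2, B2)
```

With these toy weights the first channel receives a gate close to 1 and the second a gate close to 0, which is the "turn up, turn down" behavior described above in miniature.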

Seeing inside the black box

Because farmers and agronomists must trust any automated diagnosis, the authors built explainability into their system. They used tools such as Grad‑CAM and LIME to create heatmaps that show where the model is “paying attention” on each leaf, and t‑SNE plots to visualize how different diseases cluster in the model’s internal feature space. These explanations reveal that the AI consistently focuses on lesions, discolored tissue, and fungal webs rather than on plain background or stems. Even in the rare misclassifications—only five errors out of 1,800 test images—the highlighted regions stay on biologically meaningful areas; the confusion arises mainly when two diseases look very similar to the human eye as well.
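For readers curious how a Grad‑CAM heatmap is assembled, the sketch below shows the core combination step in plain Python: each feature map is weighted by the average gradient of the class score with respect to that map, the weighted maps are summed, and negative values are clipped. The activations and gradients here are invented toy numbers; in practice they come from a forward and backward pass through the trained network, which this example does not perform.

```python
def grad_cam(activations, gradients):
    """Combine K feature maps into one heatmap.

    activations, gradients: lists of K channels, each an H x W grid.
    Returns an H x W grid scaled so its largest value is 1 (or all zeros).
    """
    # Channel importance = spatial mean of that channel's gradient.
    weights = [sum(sum(row) for row in g) / (len(g) * len(g[0]))
               for g in gradients]
    h, w = len(activations[0]), len(activations[0][0])
    # Weighted sum over channels, then ReLU to keep positive evidence only.
    cam = [[max(0.0, sum(wk * a[y][x] for wk, a in zip(weights, activations)))
            for x in range(w)] for y in range(h)]
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]
    return cam

# Toy example: channel 0 fires on a "lesion" in the top-left corner,
# channel 1 fires on "background" in the bottom-right corner.
toy_activations = [[[1.0, 0.0], [0.0, 0.0]],
                   [[0.0, 0.0], [0.0, 1.0]]]
# Made-up gradients: channel 0 supports the predicted class, channel 1 opposes it.
toy_gradients = [[[0.5, 0.5], [0.5, 0.5]],
                 [[-0.5, -0.5], [-0.5, -0.5]]]

heatmap = grad_cam(toy_activations, toy_gradients)
```

In this toy case the heatmap lights up only the top-left cell, the region whose feature map pushed the prediction toward the chosen class.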

What the results mean for growers

Across the main dataset, LSeTNet correctly classified leaf images with an accuracy of about 99.7%, and it reached similarly high performance when tested on a separate external dataset of Bangladeshi medicinal plants it had not seen before. At the same time, it runs quickly (about 7 milliseconds per image on a GPU) and has a small memory footprint, opening the door to low‑cost, field‑ready apps. In practical terms, this work shows that compact, transparent AI can reliably detect early disease signs in important medicinal plants and clearly show users why it reached a given decision. With further testing on more species and tougher field conditions, similar systems could help safeguard herbal medicine supply chains, support precision agriculture, and give smallholder farmers an accessible “second opinion” in their pockets.
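The headline numbers can be checked with simple arithmetic. The short calculation below assumes the reported accuracy refers to the same 1,800 test images on which five errors were counted, and treats the approximate per-image time as exactly 7 milliseconds; the variable names are illustrative.

```python
test_images = 1800      # test-set size reported in the article
errors = 5              # misclassified images reported in the article
latency_s = 0.007       # roughly seven thousandths of a second per image (GPU)

accuracy = (test_images - errors) / test_images
throughput = 1 / latency_s   # images processed per second, one at a time

print(f"accuracy: {accuracy:.2%}")                 # accuracy: 99.72%
print(f"throughput: {throughput:.0f} images/s")    # throughput: 143 images/s
```

So five mistakes in 1,800 images corresponds to the quoted figure of about 99.7%, and the stated speed works out to roughly 140 images per second on a GPU.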

Citation: Ahmmed, J., Kabir, M.A., Rehman, A.u. et al. A lightweight hybrid CNN and transformer model for medicinal leaf disease classification with explainable AI. Sci Rep 16, 8243 (2026). https://doi.org/10.1038/s41598-026-39182-3

Keywords: medicinal plants, leaf disease detection, deep learning, explainable AI, precision agriculture